Kafka核心参数(带完善)

发布时间:2023年12月20日

客户端

api

Kafka提供了以下两套客户端API

- HighLevel(重点)

- LowLevel?

HighLevel API封装了kafka的运行细节,使用起来比较简单,是企业开发过程中最常用的客户端API。 而LowLevel API则需要客户端自己管理Kafka的运行细节,Partition,Offset这些数据都由客户端自行管理。这层API功能更灵活,但是使用起来非常复杂,也更容易出错。只在极少数对性能要求非常极致的场景才会偶尔使用

生产者发送消息

发送流程:

- 组装生产者核心配置参数

- 初始化生产者

- 组装消息

- 发送消息, 三种模式

- 单向发送, 不等待broker返回结果

- 同步发送

- 异步发送

- 关闭生产者

代码:

package com.kk.kafka.demo;

import org.apache.kafka.clients.producer.*;

import java.util.Properties;

import java.util.concurrent.ExecutionException;

public class ProducerTest {

public static final String KAFKA_URL = "192.168.6.128:9092";

public static final String TOPIC = "oneTopic";

public static void main(String[] args) throws ExecutionException, InterruptedException {

// 组装生产者配置

Properties ps = new Properties();

ps.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, KAFKA_URL);

ps.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringSerializer");

ps.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringSerializer");

// 初始化生产者

Producer<String, String> producer = new KafkaProducer<>(ps);

for (int i = 0; i < 5; i++) {

ProducerRecord<String, String> producerRecord = new ProducerRecord<>(TOPIC, "key" + i, "message" + i);

// 同步发送

producer.send(producerRecord);

// 同步发送

RecordMetadata metadata = producer.send(producerRecord).get();

//异步发送

producer.send(producerRecord, new Callback() {

@Override

public void onCompletion(RecordMetadata metadata, Exception e) {

if (metadata != null) {

System.out.println("Message sent successfully! Topic: " + metadata.topic() +

", Partition: " + metadata.partition() +

", Offset: " + metadata.offset() +

", message: " + producerRecord.value());

} else {

System.err.println("Error sending message: " + e.getMessage());

}

}

});

}

producer.close();

}

}消费者消费消息

消费流程:

- 组装消费者核心配置参数

- 初始化消费者

- 订阅topic, 可订阅多个

- 拉取消息, 可配置超时时间

- 提交offset, 分为同步和异步两种方式, 服务端维护offset消费进度

代码:

package com.kk.kafka.demo;

import org.apache.kafka.clients.consumer.*;

import java.time.Duration;

import java.util.Arrays;

import java.util.Properties;

public class ConsumerTest {

public static final String KAFKA_URL = "192.168.6.128:9092";

public static final String TOPIC = "oneTopic";

public static void main(String[] args) {

// 组装消费者配置参数

Properties props = new Properties();

props.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, KAFKA_URL);

props.put(ConsumerConfig.GROUP_ID_CONFIG, "your-consumer-group");

props.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringDeserializer");

props.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, "org.apache.kafka.common.serialization.StringDeserializer");

// 初始化消费者

Consumer<String, String> consumer = new KafkaConsumer<>(props);

// 订阅topic

consumer.subscribe(Arrays.asList(TOPIC));

while (true) {

// 拉取消息, 100毫秒超时时间

ConsumerRecords<String, String> records = consumer.poll(Duration.ofNanos(100));

//处理消息

for (ConsumerRecord<String, String> record : records) {

System.out.println("start Consumer offset = " + record.offset() + ";key = " + record.key() + "; value= " + record.value());

}

//提交offset,消息就不会重复推送。

//同步提交,表示必须等到offset提交完毕,再去消费下一批数据。

consumer.commitSync();

//异步提交,表示发送完提交offset请求后,就开始消费下一批数据了。不用等到Broker的确认。

// consumer.commitAsync();

}

}

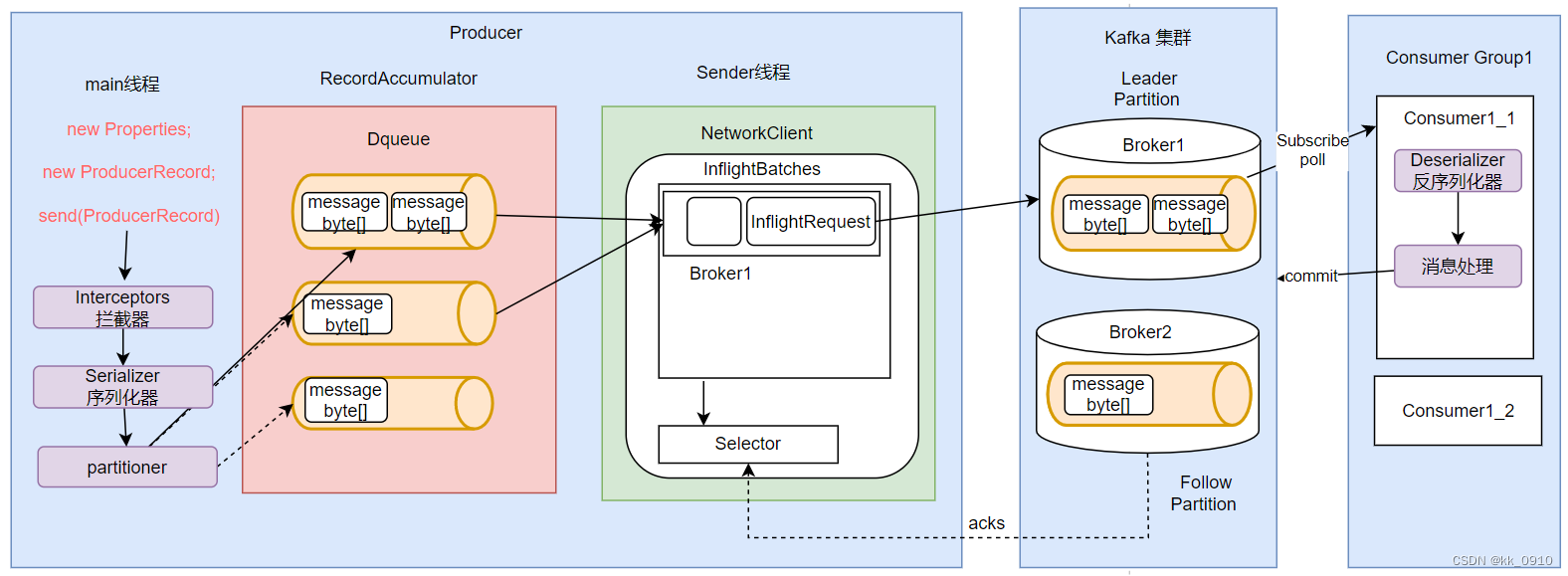

}客户端整体流程

拦截器

序列化器

发送到Dequeue

Dequeue满了或者批次满了或者阈值时间 推到InflightRequest

send线程将InflightRequest推到服务端Partition, 满足一定阈值

缓存机制

broker给生产者ack

其他重要核心参数?

客户端管理offset

幂等性原理

事务消息

文章来源:https://blog.csdn.net/weixin_64027360/article/details/135097247

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。 如若内容造成侵权/违法违规/事实不符,请联系我的编程经验分享网邮箱:chenni525@qq.com进行投诉反馈,一经查实,立即删除!

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。 如若内容造成侵权/违法违规/事实不符,请联系我的编程经验分享网邮箱:chenni525@qq.com进行投诉反馈,一经查实,立即删除!

最新文章

- Python教程

- 深入理解 MySQL 中的 HAVING 关键字和聚合函数

- Qt之QChar编码(1)

- MyBatis入门基础篇

- 用Python脚本实现FFmpeg批量转换

- js unshift方法的使用

- 机器视觉测量项目书

- 算法训练day32|贪心算法part02

- 知识蒸馏理论及实操

- JavaScript基础知识解析

- Docker部署Traefik结合内网穿透远程访问Dashboard界面

- 美易平台:安踏体育计划购买Amer Sports普通股,进一步扩大市场份额

- STM32用ST-LINK V2-1烧录后,不会自动重启执行--Keil设置

- React16源码: React中创建更新的方式及ReactDOM.render的源码实现

- Spring Boot 3 集成 Thymeleaf