Building a 3-node k8s cluster on Hyper-V with Ubuntu

Prelude

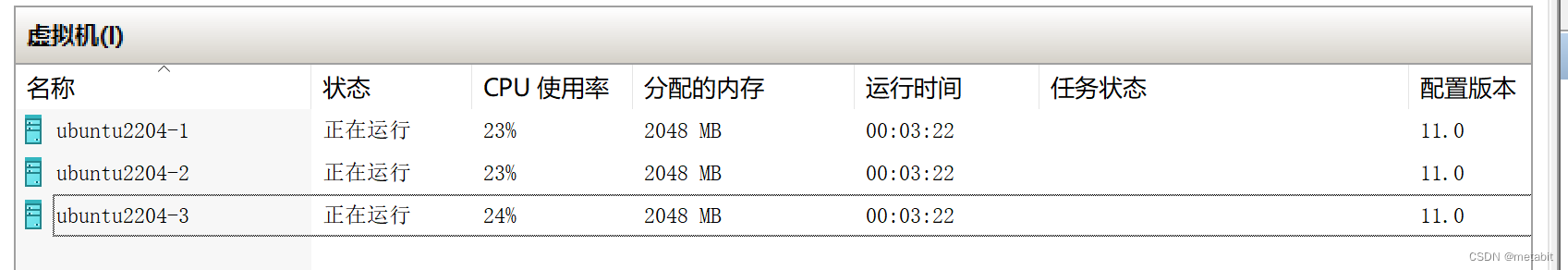

We will build a k8s cluster with one master node and two worker nodes. As shown in the figure, prepare three virtual machines.

If you don't know how to create them, see my post from two posts ago: https://blog.csdn.net/dawnto/article/details/135086252

and my previous post: https://blog.csdn.net/dawnto/article/details/135096182

Because the test machine has limited resources, each of the three virtual machines is given 2048 MB of memory and 2 CPU cores.

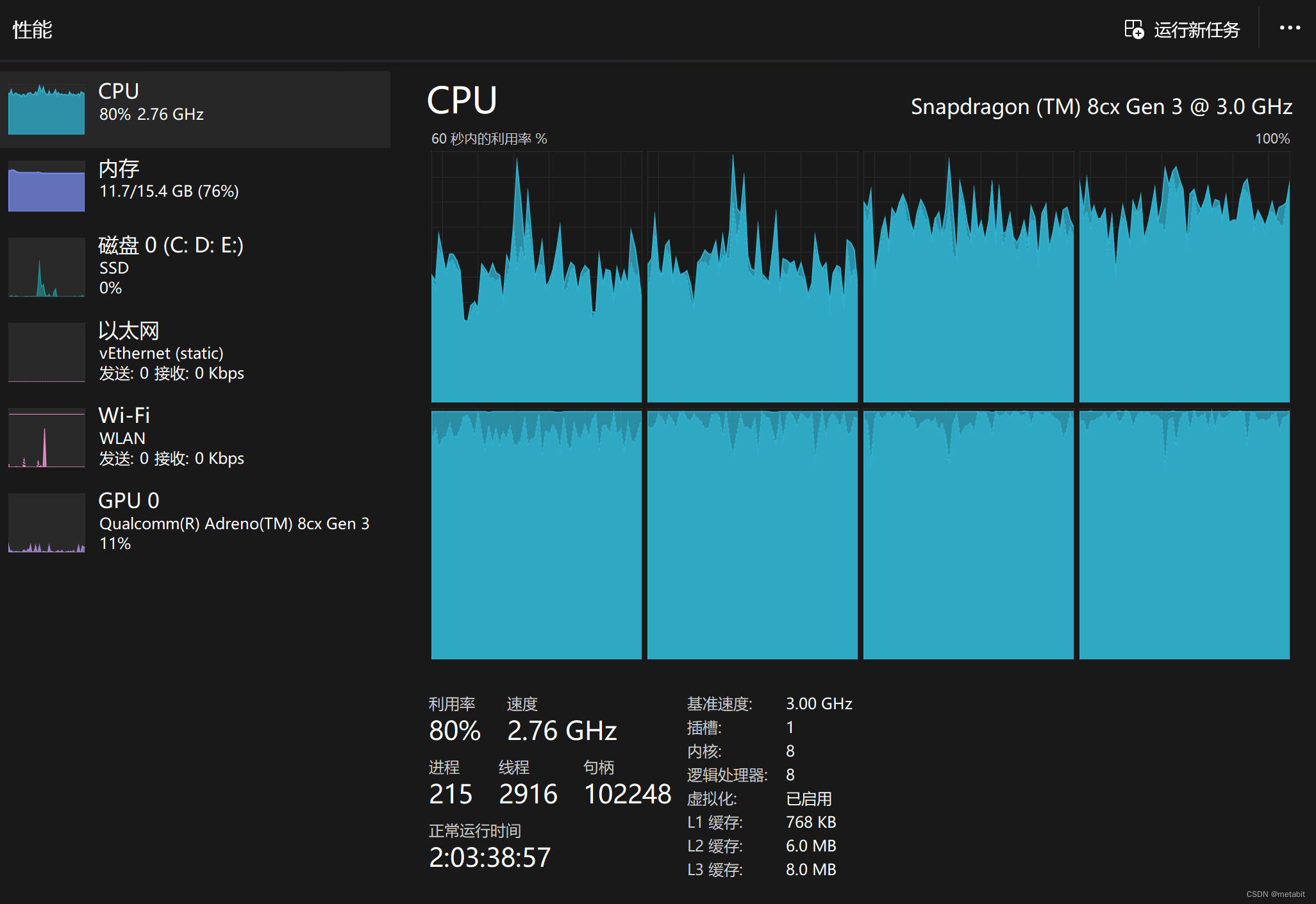

CPU and memory usage on the host stay within a manageable range, so other programs are not affected.

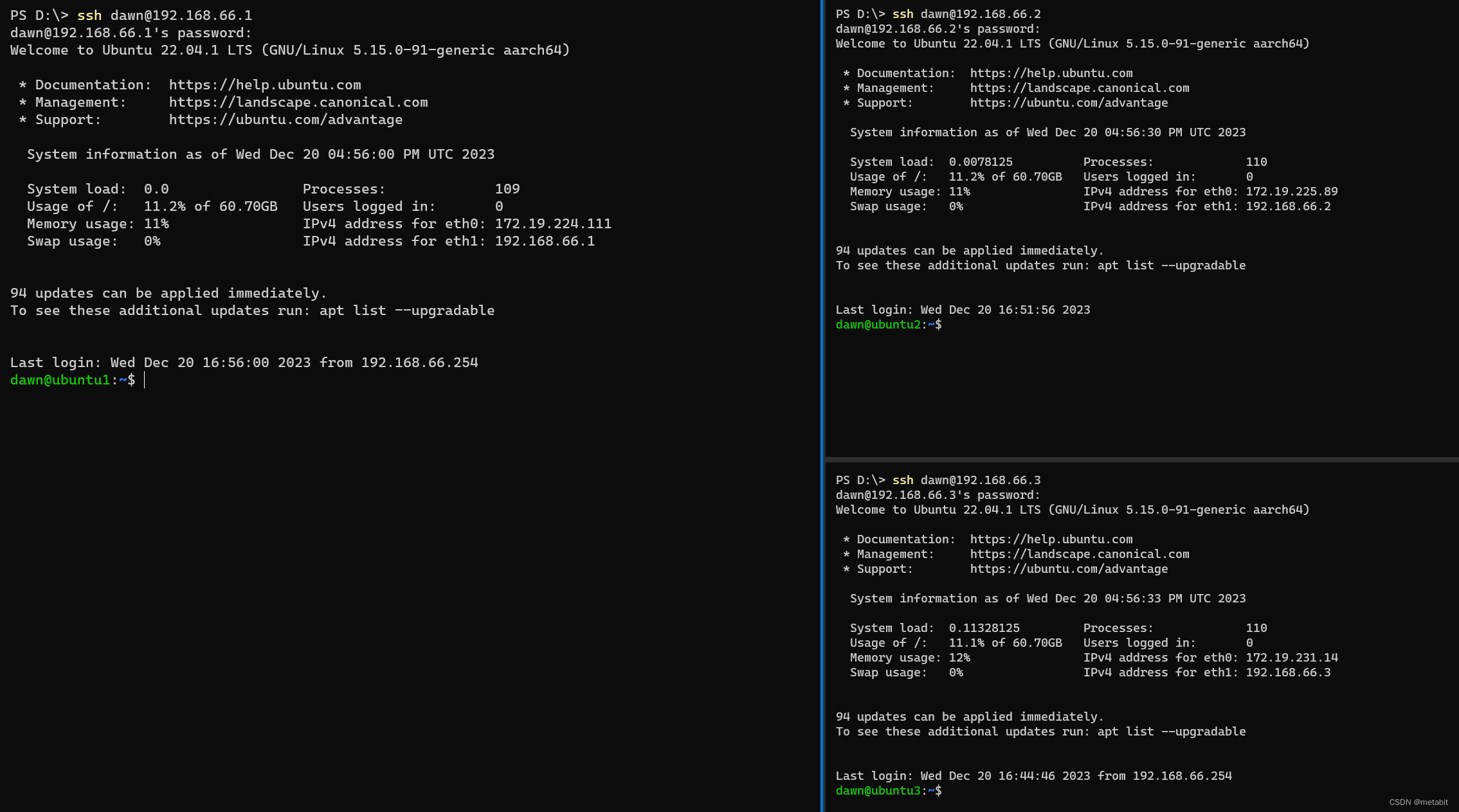

All three virtual machines can reach the internet and have static IPs configured:

192.168.66.1

192.168.66.2

192.168.66.3

The gateway is 192.168.66.254.

Now change the hostname on each of the three virtual machines:

sudo vim /etc/hostname

Set them to, respectively:

ubuntu1

ubuntu2

ubuntu3

Then reboot each machine.

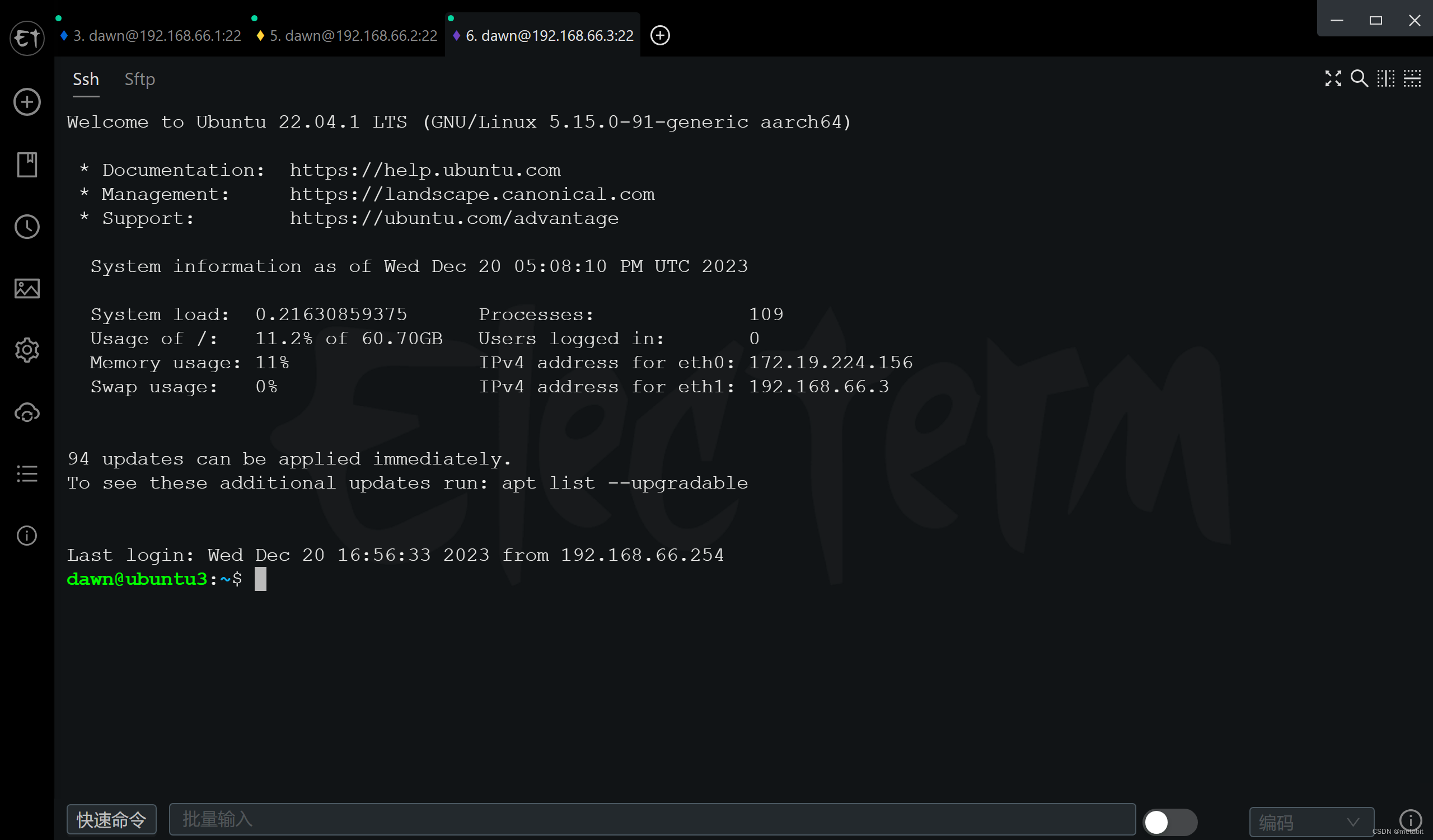

Take a look: everything lines up nicely.

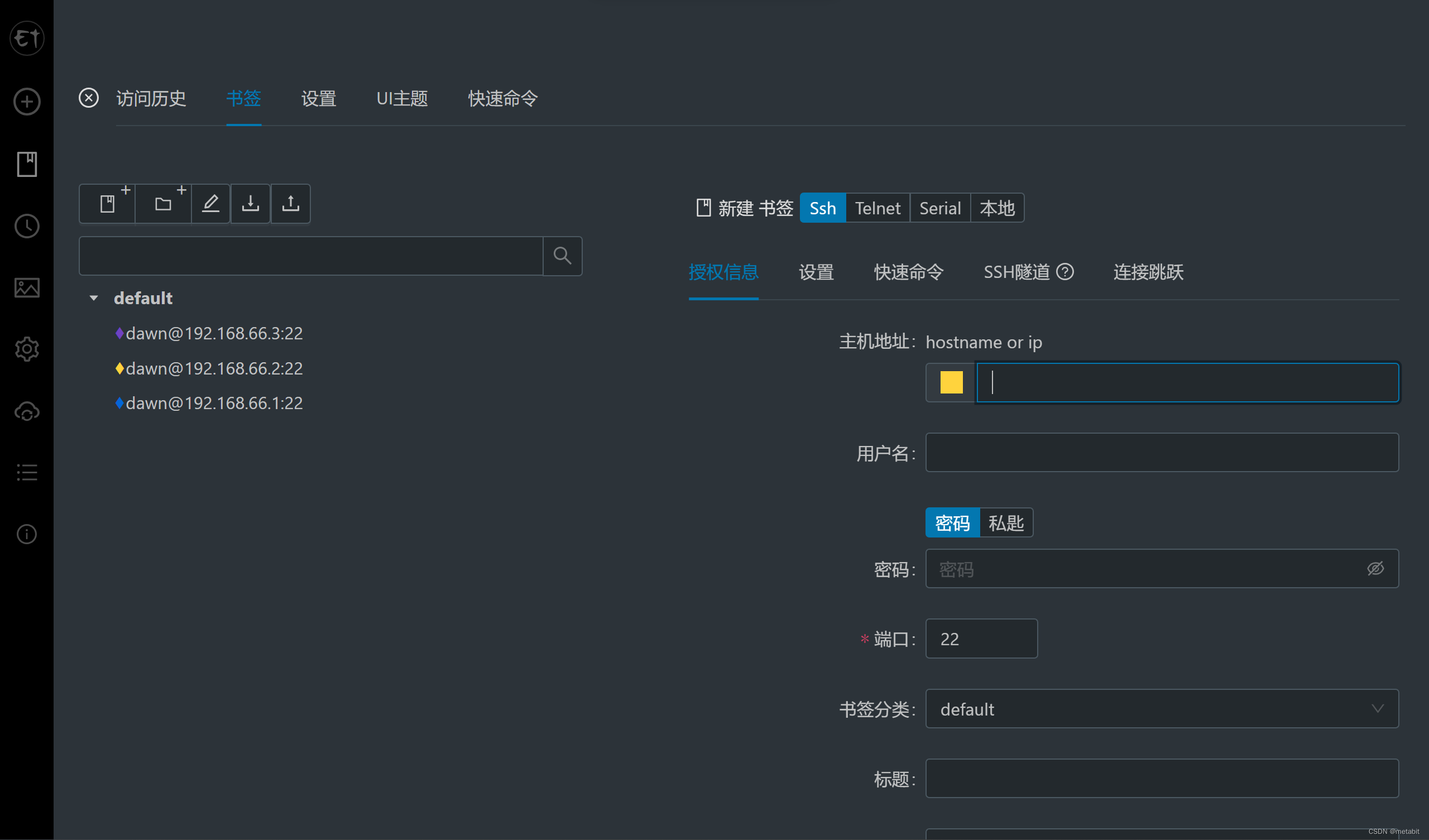

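To speed up controlling the three machines:

Pick a handy terminal tool that is free, open source, and maintained by a Chinese developer: https://github.com/electerm/electerm/releases

Click the bookmark button to create a connection.

Create three connections here.

Turn on the switch at the bottom so that each command runs in all of the terminals at the same time.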

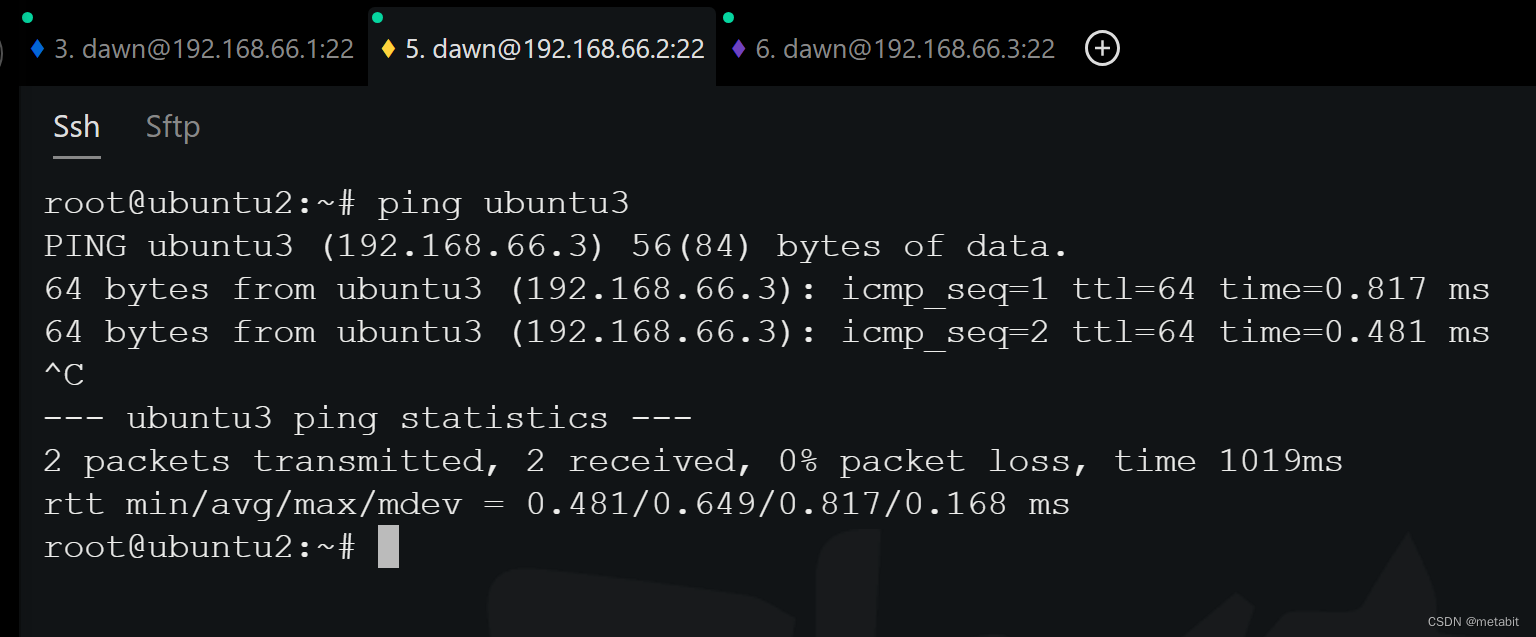

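Sync the hosts file so that each virtual machine can reach the others by hostname (not needed if your DNS already resolves the hostnames). Run this as root:

cat >> /etc/hosts <<EOF
192.168.66.1 ubuntu1
192.168.66.2 ubuntu2
192.168.66.3 ubuntu3
EOF

To verify, ping ubuntu3 from ubuntu2; it works without problems.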
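One thing to watch with the `cat >> /etc/hosts` approach: running it a second time appends the same three lines again. Below is a minimal sketch of an idempotent variant. The helper name `add_host_entry` and the `HOSTS_FILE` variable are made up for this illustration, and the demo writes to a scratch file rather than the real /etc/hosts.

```shell
#!/usr/bin/env bash
# add_host_entry FILE IP NAME  (hypothetical helper, not from the original post)
# Appends "IP NAME" to FILE unless NAME already appears as a hostname
# on a non-comment line.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  if awk -v n="$name" '
       !/^[ \t]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
       END { exit !found }' "$file" 2>/dev/null; then
    return 0  # already present, nothing to do
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Demo against a scratch file so the sketch is safe to try anywhere.
HOSTS_FILE="$(mktemp)"
for round in 1 2; do  # run twice to show that the second pass adds nothing
  add_host_entry "$HOSTS_FILE" 192.168.66.1 ubuntu1
  add_host_entry "$HOSTS_FILE" 192.168.66.2 ubuntu2
  add_host_entry "$HOSTS_FILE" 192.168.66.3 ubuntu3
done
cat "$HOSTS_FILE"
```

On a real node you would call the function as root with /etc/hosts as the first argument.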

Turn off the firewall, in two steps:

Stop it: service ufw stop

Disable it permanently: systemctl disable ufw

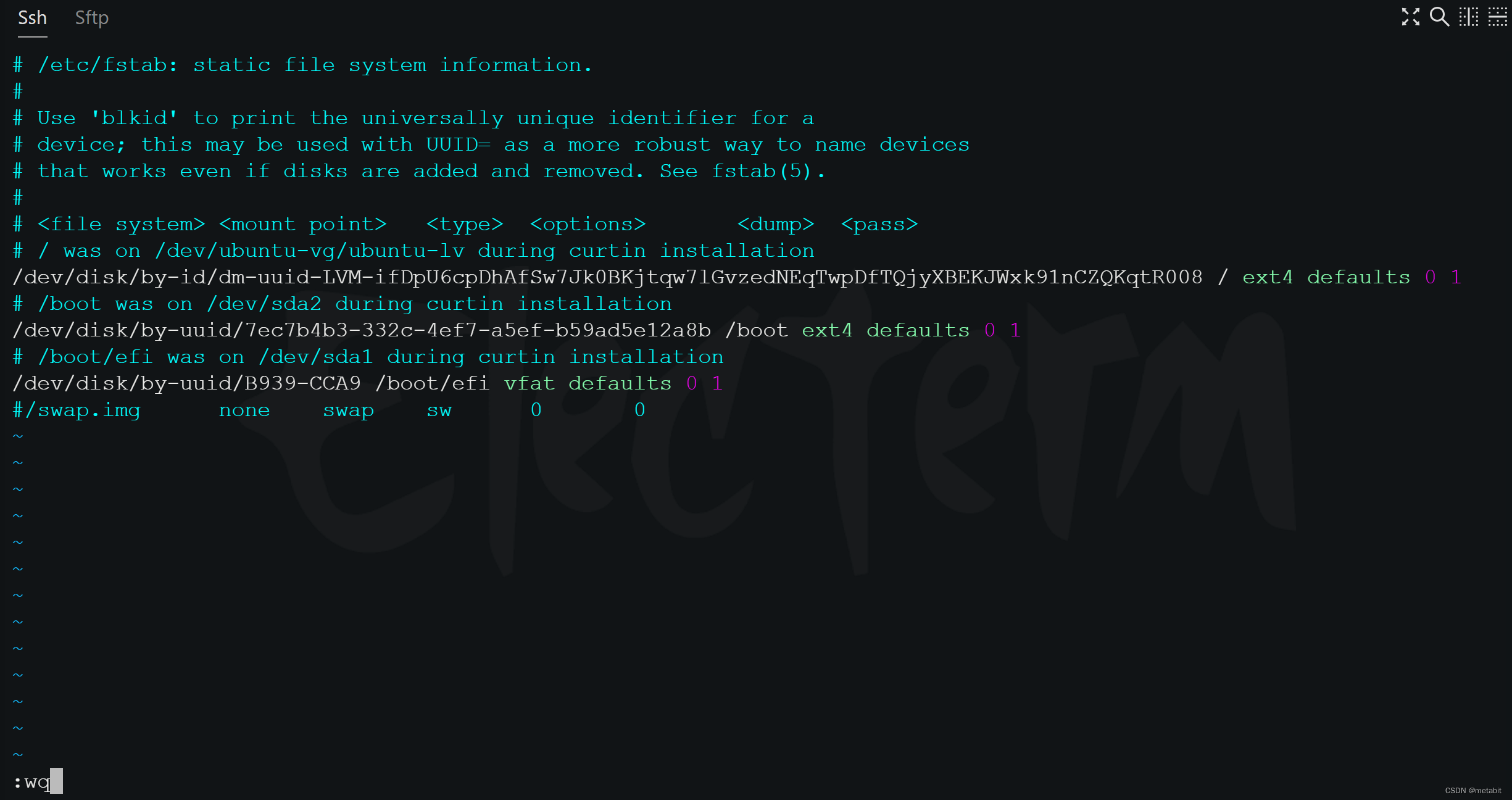

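Turn off swap, in two steps:

swapoff -a disables swap for the current boot.

vim /etc/fstab and comment out the last line shown in the figure below (/swap …) to disable swap permanently.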

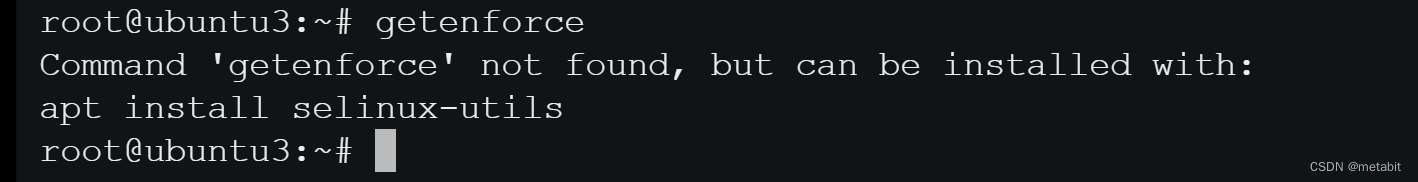
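If you would rather not edit /etc/fstab by hand on three machines, the same change can be scripted. The following is only a sketch: `disable_swap_entries` and `FSTAB` are names invented here, the sample fstab lines are made up, and it assumes GNU sed as shipped with Ubuntu. The demo runs on a scratch file.

```shell
#!/usr/bin/env bash
# disable_swap_entries FILE  (hypothetical helper)
# Comments out every active line in FILE that has "swap" as a
# whitespace-delimited field. Lines that are already comments are skipped,
# so running it twice does not add a second "#". A FILE.bak backup is kept.
disable_swap_entries() {
  local file="$1"
  sed -i.bak -E '/^[[:space:]]*#/! s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$file"
}

# Demo on a scratch file with made-up fstab contents.
FSTAB="$(mktemp)"
printf '%s\n' \
  '/dev/sda2 / ext4 defaults 0 1' \
  '/swap.img none swap sw 0 0' > "$FSTAB"
disable_swap_entries "$FSTAB"
cat "$FSTAB"
```

For real use, run it as root against /etc/fstab, together with swapoff -a.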

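Disable SELinux (optional)

Ubuntu does not install SELinux by default.

Run getenforce to check whether SELinux is installed. If the command exists, SELinux is installed, and you should do the following two steps:

setenforce 0 turns it off temporarily.

vim /etc/selinux/config turns it off permanently: set SELINUX to disabled.

If it is not installed, there is nothing to do.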

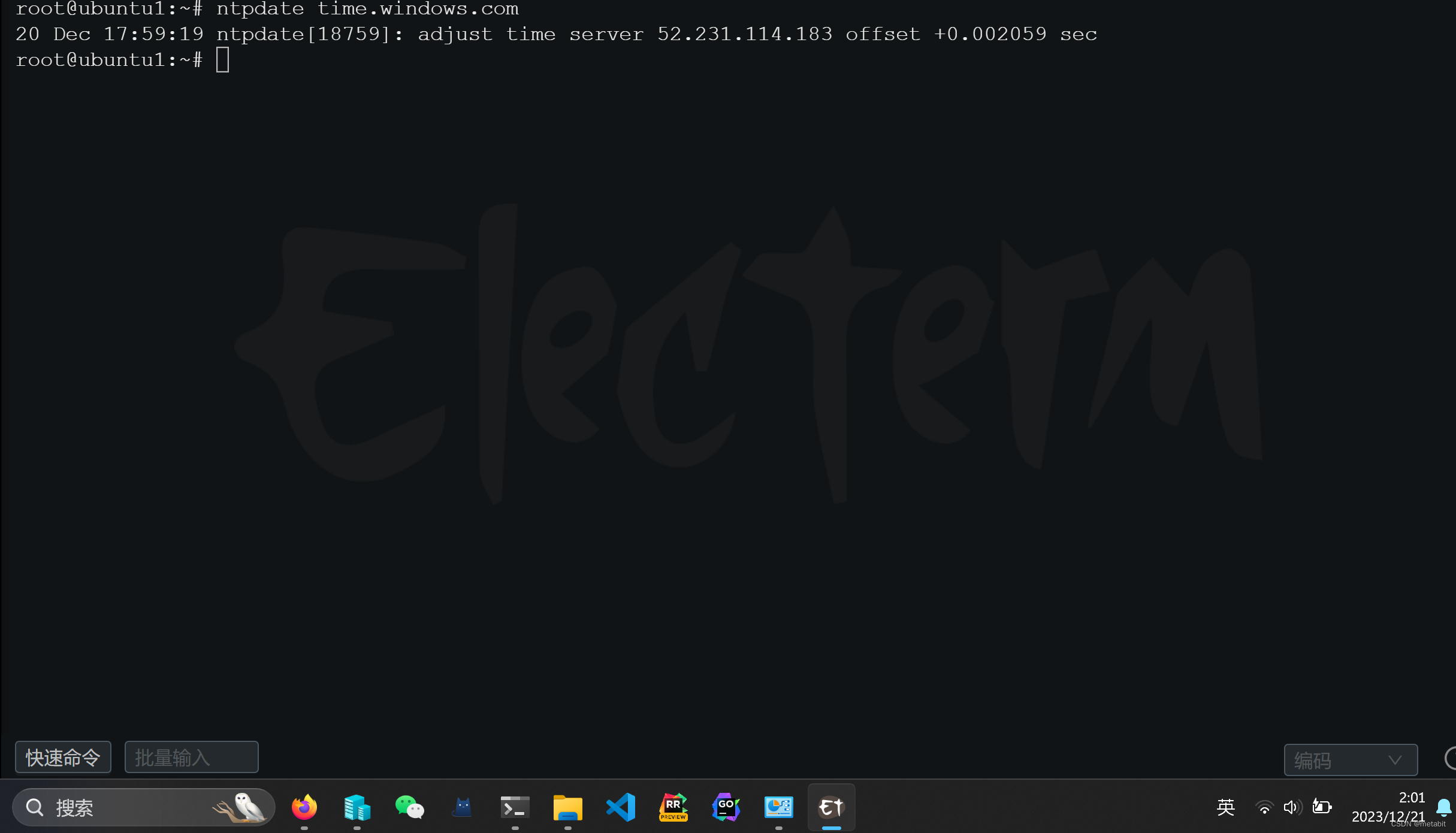

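Sync the time with the ntpdate tool:

sudo apt-get install ntpdate

If the package cannot be found, update the package index and upgrade first:

sudo apt-get update
sudo apt-get upgrade

Then install again.

Once it is installed, run ntpdate time.windows.com to sync the time.

It turns out the time zone has not been set yet; the time zone in the screenshot is clearly wrong.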

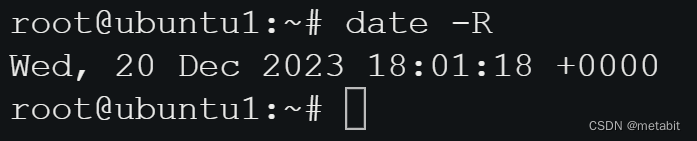

Check again with date -R: yep, it really is wrong.

So set it to UTC+8.

Use timedatectl set-timezone Asia/Shanghai to set the time zone to Asia/Shanghai.

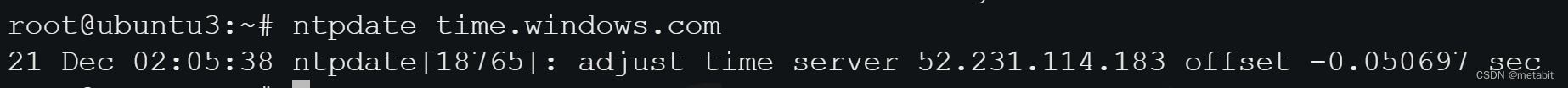

Sync again with ntpdate time.windows.com, and it's OK now~

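Allow iptables to see bridged traffic

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
br_netfilter
EOF

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

sudo sysctl --system

These settings may not take effect right away (the br_netfilter module listed above is only loaded at boot), so reboot and then switch back to the root user.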

Install containerd as the container runtime (instead of using Docker directly)

# Install the latest containerd / docker from the Docker apt repository
sudo apt install \
    ca-certificates \
    curl \
    gnupg \
    lsb-release

sudo mkdir -p /etc/apt/keyrings
curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo gpg --dearmor -o /etc/apt/keyrings/docker.gpg

echo \
  "deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.gpg] https://download.docker.com/linux/ubuntu \
  $(lsb_release -cs) stable" | sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

sudo apt update
sudo apt install containerd.io
# sudo apt install docker-ce docker-ce-cli containerd.io docker-compose-plugin

Configure containerd

sudo mkdir -p /etc/containerd
containerd config default | sudo tee /etc/containerd/config.toml
sudo vim /etc/containerd/config.toml

Under [plugins."io.containerd.grpc.v1.cri"], set sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.6" (the part highlighted in red).

Under [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options], change SystemdCgroup from false to true.
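The two config.toml edits can also be done with sed instead of vim. This is a rough sketch under a few assumptions: the file has the layout produced by `containerd config default` on containerd 1.x (a `sandbox_image = ...` line and a `SystemdCgroup = false` line), GNU sed is available, and `patch_containerd_config` and `CFG` are names invented for this example. The demo patches a small scratch file, not the real config.

```shell
#!/usr/bin/env bash
# patch_containerd_config FILE PAUSE_IMAGE  (hypothetical helper)
# Sets SystemdCgroup = true and points sandbox_image at PAUSE_IMAGE,
# preserving the original indentation. A FILE.bak backup is kept.
patch_containerd_config() {
  local file="$1" pause_image="$2"
  sed -i.bak -E \
    -e 's|^([[:space:]]*)SystemdCgroup = false|\1SystemdCgroup = true|' \
    -e "s|^([[:space:]]*)sandbox_image = .*|\\1sandbox_image = \"${pause_image}\"|" \
    "$file"
}

# Demo on a scratch file holding only the two relevant sections.
CFG="$(mktemp)"
cat > "$CFG" <<'EOF'
[plugins."io.containerd.grpc.v1.cri"]
  sandbox_image = "registry.k8s.io/pause:3.6"
  [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]
    SystemdCgroup = false
EOF
patch_containerd_config "$CFG" "registry.aliyuncs.com/google_containers/pause:3.6"
cat "$CFG"
```

On a real node, run the function as root against /etc/containerd/config.toml. Whichever way you edit the file, containerd has to be restarted afterwards (sudo systemctl restart containerd) for the change to apply.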

Install Kubernetes

sudo apt-get update
# apt-transport-https may be a dummy package; if so, you can skip installing it
sudo apt-get install -y apt-transport-https ca-certificates curl gpg

curl -fsSL https://pkgs.k8s.io/core:/stable:/v1.29/deb/Release.key | sudo gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg

# This overwrites any existing configuration in /etc/apt/sources.list.d/kubernetes.list.
echo 'deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://pkgs.k8s.io/core:/stable:/v1.29/deb/ /' | sudo tee /etc/apt/sources.list.d/kubernetes.list

sudo apt-get update
sudo apt-get install -y kubelet kubeadm kubectl
sudo apt-mark hold kubelet kubeadm kubectl

Create the one-master, two-worker cluster with kubeadm

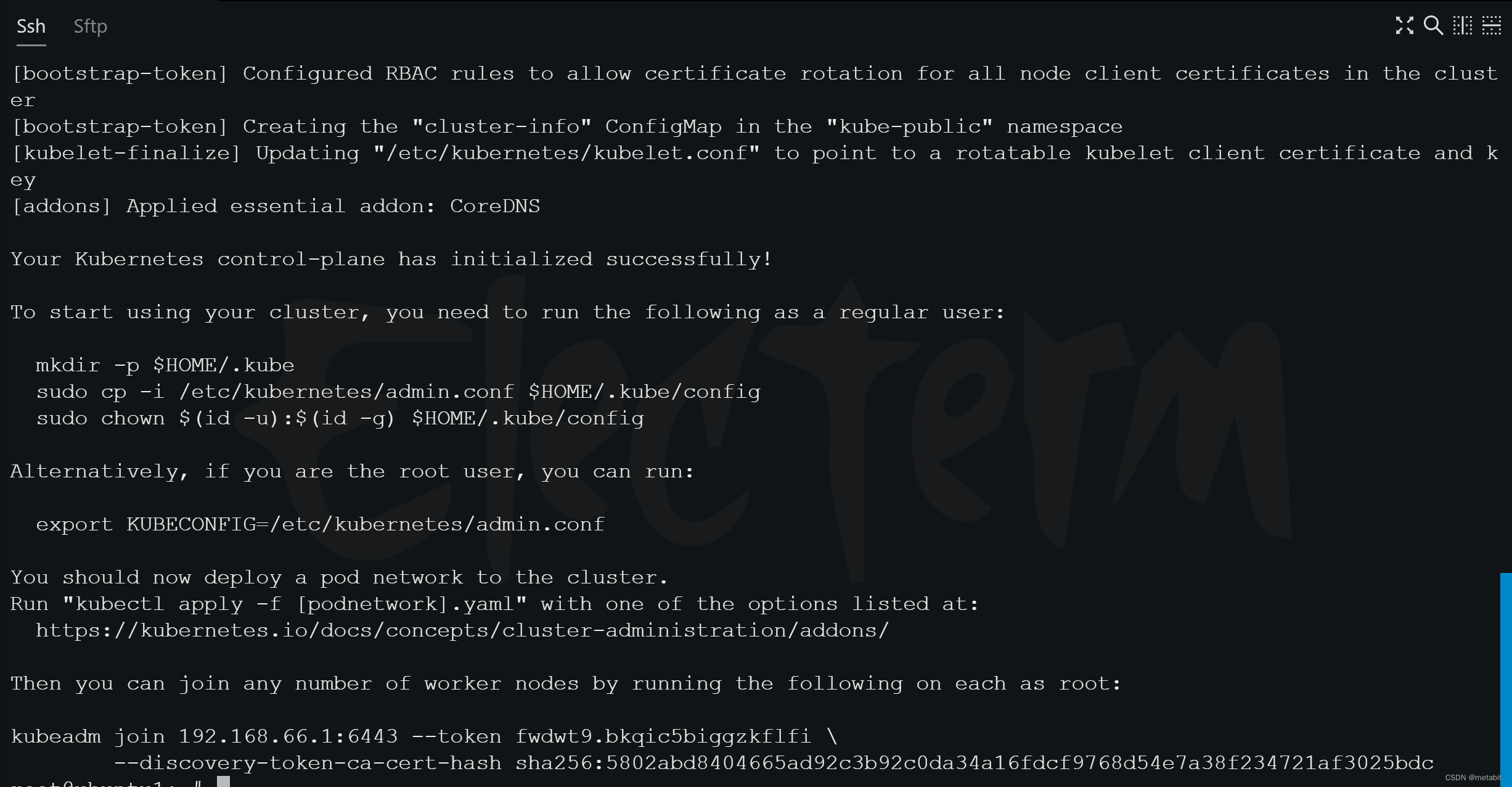

Run this command on the master node:

kubeadm init \
    --image-repository registry.aliyuncs.com/google_containers \
    --pod-network-cidr=10.244.0.0/16 \
    --cri-socket unix:///run/containerd/containerd.sock \
    --skip-phases=addon/kube-proxy \
    --apiserver-advertise-address=192.168.66.1

--apiserver-advertise-address=192.168.66.1 is the IP of the master node.

--pod-network-cidr=10.244.0.0/16 is the Pod network range. Any valid CIDR works, as long as it matches the Network value in the kube-flannel.yml used later.

And then... it failed with an error:

root@ubuntu1:~# kubeadm init \
--image-repository registry.aliyuncs.com/google_containers \
--pod-network-cidr=10.244.0.0/16 \
--cri-socket unix:///run/containerd/containerd.sock \
--skip-phases=addon/kube-proxy \
--apiserver-advertise-address=192.168.66.1
[init] Using Kubernetes version: v1.29.0
[preflight] Running pre-flight checks
error execution phase preflight: [preflight] Some fatal errors occurred:
[ERROR FileContent--proc-sys-net-ipv4-ip_forward]: /proc/sys/net/ipv4/ip_forward contents are not set to 1
[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`
To see the stack trace of this error execute with --v=5 or higher
root@ubuntu1:~#

Set the value to 1 with echo "1" > /proc/sys/net/ipv4/ip_forward.
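Note that writing to /proc/sys/net/ipv4/ip_forward only lasts until the next reboot. One way to make it stick is to put net.ipv4.ip_forward = 1 into the same sysctl.d file as the bridge settings from earlier. Here is a sketch of that; `write_k8s_sysctl` and `SCRATCH_DIR` are invented names, and the demo writes into a scratch directory instead of /etc/sysctl.d.

```shell
#!/usr/bin/env bash
# write_k8s_sysctl DIR  (hypothetical helper)
# Writes DIR/k8s.conf containing the two bridge settings used earlier in
# this post plus net.ipv4.ip_forward, which the kubeadm preflight check wants.
write_k8s_sysctl() {
  local dir="$1"
  cat > "$dir/k8s.conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
}

# Demo: write into a scratch directory and show the result.
SCRATCH_DIR="$(mktemp -d)"
write_k8s_sysctl "$SCRATCH_DIR"
cat "$SCRATCH_DIR/k8s.conf"
```

On a real node, as root, that would be write_k8s_sysctl /etc/sysctl.d followed by sysctl --system.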

Then run the cluster init command again, and this time it succeeds.

Copy the last line of the output, kubeadm join …

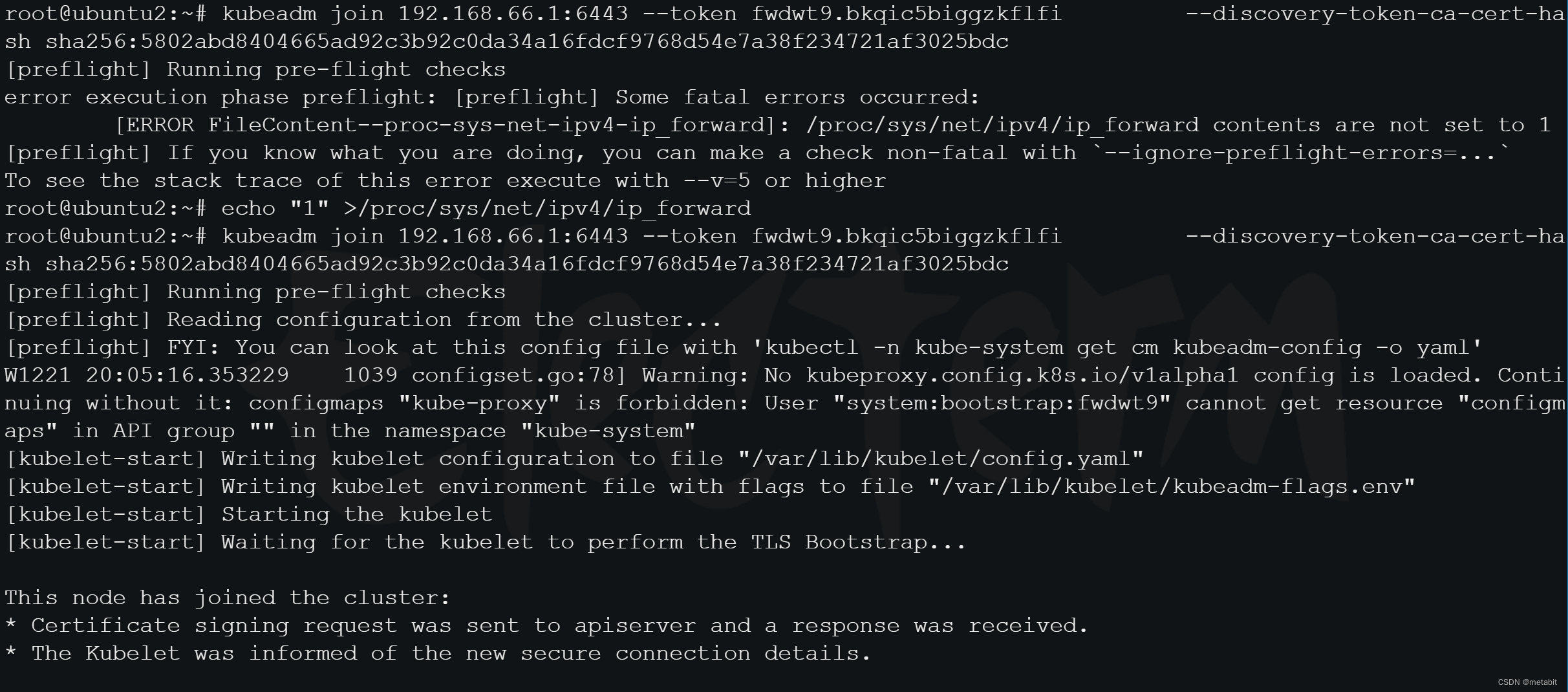

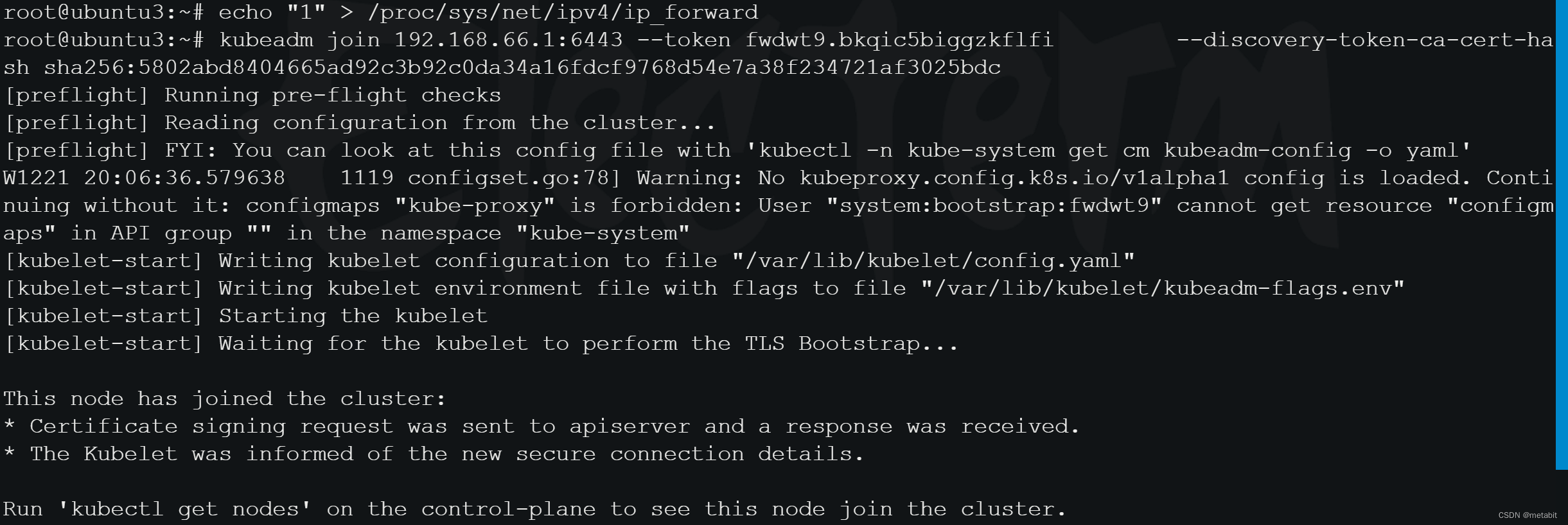

kubeadm join 192.168.66.1:6443 --token fwdwt9.bkqic5biggzkflfi --discovery-token-ca-cert-hash sha256:5802abd8404665ad92c3b92c0da34a16fdcf9768d54e7a38f234721af3025bdc

Run it on the other two machines so that the worker nodes join the cluster.
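The join token expires (after 24 hours by default), so if you add a node later, the simplest route is to run kubeadm token create --print-join-command on the master node to get a fresh join line. The --discovery-token-ca-cert-hash part does not change; it is the SHA-256 of the cluster CA's public key and can be recomputed with openssl. The sketch below follows the pipeline from the kubeadm documentation and assumes an RSA CA key and an installed openssl. The function name `ca_cert_hash` and the `WORK` directory are invented here, and the demo uses a throwaway self-signed certificate instead of /etc/kubernetes/pki/ca.crt.

```shell
#!/usr/bin/env bash
# ca_cert_hash CERT  (hypothetical helper)
# Prints "sha256:<hex>" where <hex> is the SHA-256 digest of the
# certificate's DER-encoded public key.
ca_cert_hash() {
  local cert="$1"
  printf 'sha256:%s\n' "$(
    openssl x509 -pubkey -noout -in "$cert" \
      | openssl rsa -pubin -outform der 2>/dev/null \
      | openssl dgst -sha256 -hex \
      | sed 's/^.* //'
  )"
}

# Demo: generate a throwaway self-signed certificate and hash it.
WORK="$(mktemp -d)"
openssl req -x509 -newkey rsa:2048 -nodes -days 1 -subj "/CN=demo-ca" \
  -keyout "$WORK/ca.key" -out "$WORK/ca.crt" 2>/dev/null
ca_cert_hash "$WORK/ca.crt"
```

On the master node you would call ca_cert_hash /etc/kubernetes/pki/ca.crt and compare the result with the hash in your join command.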

Copy the config file (on the master node):

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

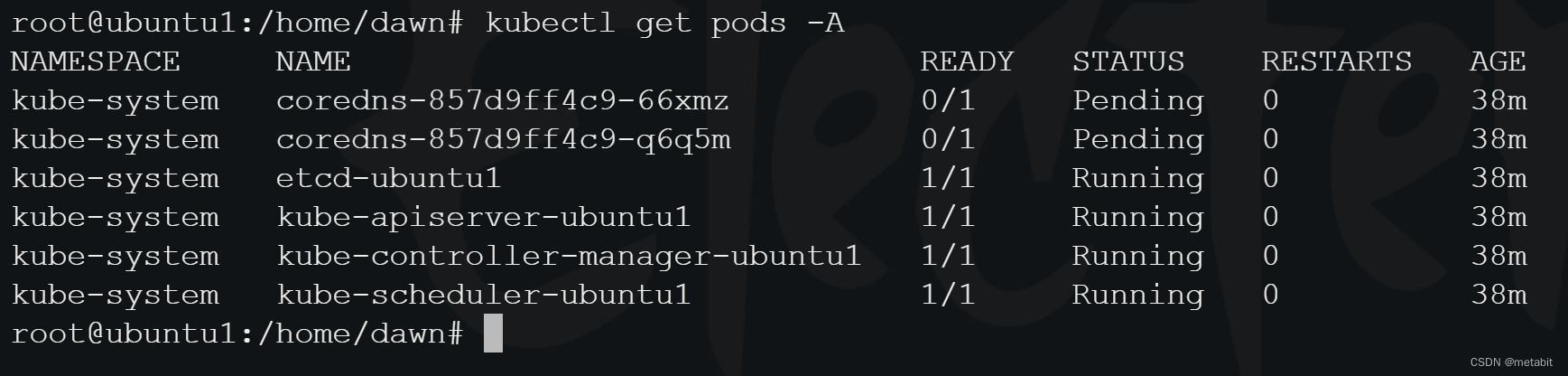

在主节点执行kubectl get pods -A,发现有pod正处于Pending状态

执行

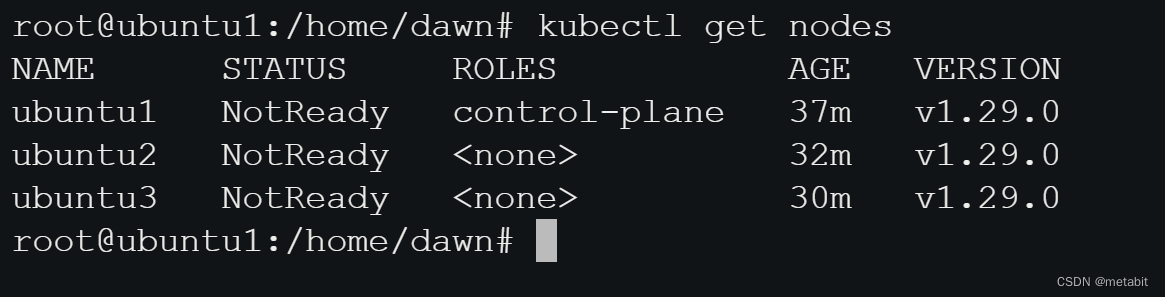

执行kubectl get nodes,发现节点都没有准备好

NotReady的主要原因是集群缺少DNS插件

NotReady的主要原因是集群缺少DNS插件

在主节点上创建一个文件:kube-flannel.yml,内容如下

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    k8s-app: flannel
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
- apiGroups:
  - networking.k8s.io
  resources:
  - clustercidrs
  verbs:
  - list
  - watch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: flannel
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    k8s-app: flannel
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
    k8s-app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: docker.io/flannel/flannel-cni-plugin:v1.2.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: docker.io/flannel/flannel:v0.24.0
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: docker.io/flannel/flannel:v0.24.0
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

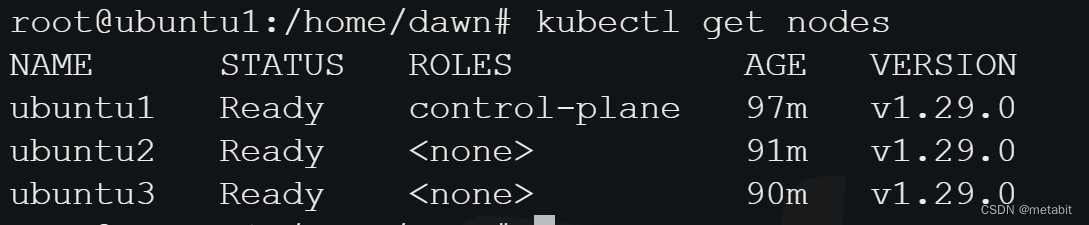

Run kubectl apply -f kube-flannel.yml to create the kube-flannel pods.

Run kubectl get nodes again; the nodes are now Ready. Hooray~
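The nodes usually take a little while to flip to Ready after the apply, so you may end up re-running kubectl get nodes a few times. A small helper can count how many nodes are still not ready from that output. This is just a sketch: `count_not_ready` is a made-up name, and the sample text is hypothetical output typed in by hand, not captured from the cluster in this post.

```shell
#!/usr/bin/env bash
# count_not_ready  (hypothetical helper)
# Reads `kubectl get nodes --no-headers` output on stdin and prints how many
# nodes have a STATUS column (field 2) that does not start with "Ready".
count_not_ready() {
  awk '$2 !~ /^Ready/ { n++ } END { print n + 0 }'
}

# Hypothetical sample in the shape of `kubectl get nodes --no-headers`.
sample='ubuntu1 Ready control-plane 10m v1.29.0
ubuntu2 NotReady <none> 9m v1.29.0
ubuntu3 NotReady <none> 9m v1.29.0'
printf '%s\n' "$sample" | count_not_ready
```

Against a live cluster it would be used as kubectl get nodes --no-headers | count_not_ready. If all you want is to block until everything is up, kubectl wait --for=condition=Ready nodes --all --timeout=300s does that without any parsing.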

If you see:

plugin type="flannel" failed (add): loadFlannelSubnetEnv failed: open /run/flannel/subnet.env: no such file or directory

see reference 3: on every node, create the file /run/flannel/subnet.env with the following content:

FLANNEL_NETWORK=10.244.0.0/16
FLANNEL_SUBNET=10.244.0.1/24
FLANNEL_MTU=1450
FLANNEL_IPMASQ=true
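A caveat on this workaround: when flannel generates subnet.env itself, FLANNEL_SUBNET is different on each node, because it is derived from that node's own Pod CIDR (which you can read with kubectl get node ubuntu2 -o jsonpath='{.spec.podCIDR}'). If you do write the file by hand, it is probably safer to follow the same pattern. The sketch below assumes each node has a /24 Pod CIDR carved out of 10.244.0.0/16, as in this setup; `render_subnet_env` is a name made up for this example, and it only prints the content rather than writing the file.

```shell
#!/usr/bin/env bash
# render_subnet_env POD_CIDR  (hypothetical helper)
# Prints subnet.env content for a node whose Pod CIDR is POD_CIDR,
# e.g. 10.244.1.0/24 gives FLANNEL_SUBNET=10.244.1.1/24.
# Assumes a /24 per node inside the 10.244.0.0/16 network used in this post.
render_subnet_env() {
  local pod_cidr="$1"
  local prefix="${pod_cidr%.*}"  # first three octets, e.g. 10.244.1
  local mask="${pod_cidr#*/}"    # prefix length, e.g. 24
  printf 'FLANNEL_NETWORK=10.244.0.0/16\n'
  printf 'FLANNEL_SUBNET=%s.1/%s\n' "$prefix" "$mask"
  printf 'FLANNEL_MTU=1450\n'
  printf 'FLANNEL_IPMASQ=true\n'
}

# Demo for a node whose Pod CIDR is 10.244.1.0/24.
render_subnet_env 10.244.1.0/24
```

The missing file usually means the flannel pod on that node never started properly, so it is also worth checking the pods in the kube-flannel namespace rather than relying on a hand-written file alone.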

References

1. https://zhuanlan.zhihu.com/p/627310856?utm_id=0

2. https://juejin.cn/post/7208088676853252156

3. https://blog.csdn.net/YangCheney/article/details/127046204
