Transformer代码学习

发布时间:2023年12月21日

Transformer

三类应用:

- 机器翻译类应用 - Encoder和Decoder共同使用

- 只使用Encoder端-文本分类BERT和图片分类VIT

- 只使用Decoder端-生成类模型

源码总览

# code by Tae Hwan Jung(Jeff Jung) @graykode, Derek Miller @dmmiller612

# Reference : https://github.com/jadore801120/attention-is-all-you-need-pytorch

# https://github.com/JayParks/transformer

import numpy as np

import torch

import torch.nn as nn

import torch.optim as optim

import matplotlib.pyplot as plt

# S: Symbol that shows starting of decoding input

# E: Symbol that shows starting of decoding output

# P: Symbol that will fill in blank sequence if current batch data size is short than time steps

def make_batch(sentences):

input_batch = [[src_vocab[n] for n in sentences[0].split()]]

output_batch = [[tgt_vocab[n] for n in sentences[1].split()]]

target_batch = [[tgt_vocab[n] for n in sentences[2].split()]]

return torch.LongTensor(input_batch), torch.LongTensor(output_batch), torch.LongTensor(target_batch)

def get_sinusoid_encoding_table(n_position, d_model):

def cal_angle(position, hid_idx):

return position / np.power(10000, 2 * (hid_idx // 2) / d_model)

def get_posi_angle_vec(position):

return [cal_angle(position, hid_j) for hid_j in range(d_model)]

sinusoid_table = np.array([get_posi_angle_vec(pos_i) for pos_i in range(n_position)])

sinusoid_table[:, 0::2] = np.sin(sinusoid_table[:, 0::2]) # dim 2i

sinusoid_table[:, 1::2] = np.cos(sinusoid_table[:, 1::2]) # dim 2i+1

return torch.FloatTensor(sinusoid_table)

def get_attn_pad_mask(seq_q, seq_k):

batch_size, len_q = seq_q.size()

batch_size, len_k = seq_k.size()

# eq(zero) is PAD token

pad_attn_mask = seq_k.data.eq(0).unsqueeze(1) # batch_size x 1 x len_k(=len_q), one is masking

return pad_attn_mask.expand(batch_size, len_q, len_k) # batch_size x len_q x len_k

def get_attn_subsequent_mask(seq):

attn_shape = [seq.size(0), seq.size(1), seq.size(1)]

subsequent_mask = np.triu(np.ones(attn_shape), k=1)

subsequent_mask = torch.from_numpy(subsequent_mask).byte()

return subsequent_mask

class ScaledDotProductAttention(nn.Module):

def __init__(self):

super(ScaledDotProductAttention, self).__init__()

def forward(self, Q, K, V, attn_mask):

scores = torch.matmul(Q, K.transpose(-1, -2)) / np.sqrt(d_k) # scores : [batch_size x n_heads x len_q(=len_k) x len_k(=len_q)]

scores.masked_fill_(attn_mask, -1e9) # Fills elements of self tensor with value where mask is one.

attn = nn.Softmax(dim=-1)(scores)

context = torch.matmul(attn, V)

return context, attn

class MultiHeadAttention(nn.Module):

def __init__(self):

super(MultiHeadAttention, self).__init__()

self.W_Q = nn.Linear(d_model, d_k * n_heads)

self.W_K = nn.Linear(d_model, d_k * n_heads)

self.W_V = nn.Linear(d_model, d_v * n_heads)

self.linear = nn.Linear(n_heads * d_v, d_model)

self.layer_norm = nn.LayerNorm(d_model)

def forward(self, Q, K, V, attn_mask):

# q: [batch_size x len_q x d_model], k: [batch_size x len_k x d_model], v: [batch_size x len_k x d_model]

residual, batch_size = Q, Q.size(0)

# (B, S, D) -proj-> (B, S, D) -split-> (B, S, H, W) -trans-> (B, H, S, W)

q_s = self.W_Q(Q).view(batch_size, -1, n_heads, d_k).transpose(1,2) # q_s: [batch_size x n_heads x len_q x d_k]

k_s = self.W_K(K).view(batch_size, -1, n_heads, d_k).transpose(1,2) # k_s: [batch_size x n_heads x len_k x d_k]

v_s = self.W_V(V).view(batch_size, -1, n_heads, d_v).transpose(1,2) # v_s: [batch_size x n_heads x len_k x d_v]

attn_mask = attn_mask.unsqueeze(1).repeat(1, n_heads, 1, 1) # attn_mask : [batch_size x n_heads x len_q x len_k]

# context: [batch_size x n_heads x len_q x d_v], attn: [batch_size x n_heads x len_q(=len_k) x len_k(=len_q)]

context, attn = ScaledDotProductAttention()(q_s, k_s, v_s, attn_mask)

context = context.transpose(1, 2).contiguous().view(batch_size, -1, n_heads * d_v) # context: [batch_size x len_q x n_heads * d_v]

output = self.linear(context)

return self.layer_norm(output + residual), attn # output: [batch_size x len_q x d_model]

class PoswiseFeedForwardNet(nn.Module):

def __init__(self):

super(PoswiseFeedForwardNet, self).__init__()

self.conv1 = nn.Conv1d(in_channels=d_model, out_channels=d_ff, kernel_size=1)

self.conv2 = nn.Conv1d(in_channels=d_ff, out_channels=d_model, kernel_size=1)

self.layer_norm = nn.LayerNorm(d_model)

def forward(self, inputs):

residual = inputs # inputs : [batch_size, len_q, d_model]

output = nn.ReLU()(self.conv1(inputs.transpose(1, 2)))

output = self.conv2(output).transpose(1, 2)

return self.layer_norm(output + residual)

class EncoderLayer(nn.Module):

def __init__(self):

super(EncoderLayer, self).__init__()

self.enc_self_attn = MultiHeadAttention()

self.pos_ffn = PoswiseFeedForwardNet()

def forward(self, enc_inputs, enc_self_attn_mask):

enc_outputs, attn = self.enc_self_attn(enc_inputs, enc_inputs, enc_inputs, enc_self_attn_mask) # enc_inputs to same Q,K,V

enc_outputs = self.pos_ffn(enc_outputs) # enc_outputs: [batch_size x len_q x d_model]

return enc_outputs, attn

class DecoderLayer(nn.Module):

def __init__(self):

super(DecoderLayer, self).__init__()

self.dec_self_attn = MultiHeadAttention()

self.dec_enc_attn = MultiHeadAttention()

self.pos_ffn = PoswiseFeedForwardNet()

def forward(self, dec_inputs, enc_outputs, dec_self_attn_mask, dec_enc_attn_mask):

dec_outputs, dec_self_attn = self.dec_self_attn(dec_inputs, dec_inputs, dec_inputs, dec_self_attn_mask)

dec_outputs, dec_enc_attn = self.dec_enc_attn(dec_outputs, enc_outputs, enc_outputs, dec_enc_attn_mask)

dec_outputs = self.pos_ffn(dec_outputs)

return dec_outputs, dec_self_attn, dec_enc_attn

class Encoder(nn.Module):

def __init__(self):

super(Encoder, self).__init__()

self.src_emb = nn.Embedding(src_vocab_size, d_model)

self.pos_emb = nn.Embedding.from_pretrained(get_sinusoid_encoding_table(src_len+1, d_model),freeze=True)

self.layers = nn.ModuleList([EncoderLayer() for _ in range(n_layers)])

def forward(self, enc_inputs): # enc_inputs : [batch_size x source_len]

enc_outputs = self.src_emb(enc_inputs) + self.pos_emb(torch.LongTensor([[1,2,3,4,0]]))

enc_self_attn_mask = get_attn_pad_mask(enc_inputs, enc_inputs)

enc_self_attns = []

for layer in self.layers:

enc_outputs, enc_self_attn = layer(enc_outputs, enc_self_attn_mask)

enc_self_attns.append(enc_self_attn)

return enc_outputs, enc_self_attns

class Decoder(nn.Module):

def __init__(self):

super(Decoder, self).__init__()

self.tgt_emb = nn.Embedding(tgt_vocab_size, d_model)

self.pos_emb = nn.Embedding.from_pretrained(get_sinusoid_encoding_table(tgt_len+1, d_model),freeze=True)

self.layers = nn.ModuleList([DecoderLayer() for _ in range(n_layers)])

def forward(self, dec_inputs, enc_inputs, enc_outputs): # dec_inputs : [batch_size x target_len]

dec_outputs = self.tgt_emb(dec_inputs) + self.pos_emb(torch.LongTensor([[5,1,2,3,4]]))

dec_self_attn_pad_mask = get_attn_pad_mask(dec_inputs, dec_inputs)

dec_self_attn_subsequent_mask = get_attn_subsequent_mask(dec_inputs)

dec_self_attn_mask = torch.gt((dec_self_attn_pad_mask + dec_self_attn_subsequent_mask), 0)

dec_enc_attn_mask = get_attn_pad_mask(dec_inputs, enc_inputs)

dec_self_attns, dec_enc_attns = [], []

for layer in self.layers:

dec_outputs, dec_self_attn, dec_enc_attn = layer(dec_outputs, enc_outputs, dec_self_attn_mask, dec_enc_attn_mask)

dec_self_attns.append(dec_self_attn)

dec_enc_attns.append(dec_enc_attn)

return dec_outputs, dec_self_attns, dec_enc_attns

class Transformer(nn.Module):

def __init__(self):

super(Transformer, self).__init__()

self.encoder = Encoder()

self.decoder = Decoder()

self.projection = nn.Linear(d_model, tgt_vocab_size, bias=False)

def forward(self, enc_inputs, dec_inputs):

enc_outputs, enc_self_attns = self.encoder(enc_inputs)

dec_outputs, dec_self_attns, dec_enc_attns = self.decoder(dec_inputs, enc_inputs, enc_outputs)

dec_logits = self.projection(dec_outputs) # dec_logits : [batch_size x src_vocab_size x tgt_vocab_size]

return dec_logits.view(-1, dec_logits.size(-1)), enc_self_attns, dec_self_attns, dec_enc_attns

def showgraph(attn):

attn = attn[-1].squeeze(0)[0]

attn = attn.squeeze(0).data.numpy()

fig = plt.figure(figsize=(n_heads, n_heads)) # [n_heads, n_heads]

ax = fig.add_subplot(1, 1, 1)

ax.matshow(attn, cmap='viridis')

ax.set_xticklabels(['']+sentences[0].split(), fontdict={'fontsize': 14}, rotation=90)

ax.set_yticklabels(['']+sentences[2].split(), fontdict={'fontsize': 14})

plt.show()

if __name__ == '__main__':

sentences = ['ich mochte ein bier P', 'S i want a beer', 'i want a beer E']

# Transformer Parameters

# Padding Should be Zero

src_vocab = {'P': 0, 'ich': 1, 'mochte': 2, 'ein': 3, 'bier': 4}

src_vocab_size = len(src_vocab)

tgt_vocab = {'P': 0, 'i': 1, 'want': 2, 'a': 3, 'beer': 4, 'S': 5, 'E': 6}

number_dict = {i: w for i, w in enumerate(tgt_vocab)}

tgt_vocab_size = len(tgt_vocab)

src_len = 5 # length of source

tgt_len = 5 # length of target

d_model = 512 # Embedding Size

d_ff = 2048 # FeedForward dimension

d_k = d_v = 64 # dimension of K(=Q), V

n_layers = 6 # number of Encoder of Decoder Layer

n_heads = 8 # number of heads in Multi-Head Attention

model = Transformer()

criterion = nn.CrossEntropyLoss()

optimizer = optim.Adam(model.parameters(), lr=0.001)

enc_inputs, dec_inputs, target_batch = make_batch(sentences)

for epoch in range(20):

optimizer.zero_grad()

outputs, enc_self_attns, dec_self_attns, dec_enc_attns = model(enc_inputs, dec_inputs)

loss = criterion(outputs, target_batch.contiguous().view(-1))

print('Epoch:', '%04d' % (epoch + 1), 'cost =', '{:.6f}'.format(loss))

loss.backward()

optimizer.step()

# Test

predict, _, _, _ = model(enc_inputs, dec_inputs)

predict = predict.data.max(1, keepdim=True)[1]

print(sentences[0], '->', [number_dict[n.item()] for n in predict.squeeze()])

print('first head of last state enc_self_attns')

showgraph(enc_self_attns)

print('first head of last state dec_self_attns')

showgraph(dec_self_attns)

print('first head of last state dec_enc_attns')

showgraph(dec_enc_attns)

main部分

if __name__ == '__main__':

## 句子输入部分,这三个句子是一组句子,属于一个样本(机器翻译例子),batch_size = 1

sentences = ['ich mochte ein bier P', 'S i want a beer', 'i want a beer E']

编码端输入:ich mochte ein bier P

解码端输入:S i want a beer

与解码段输出对比计算损失的真实标签(分类问题计算损失):i want a beer E

三个特殊字符:S——start,E——end,P——pad字符填充字符

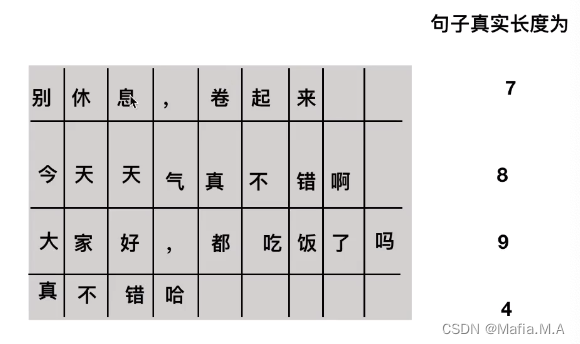

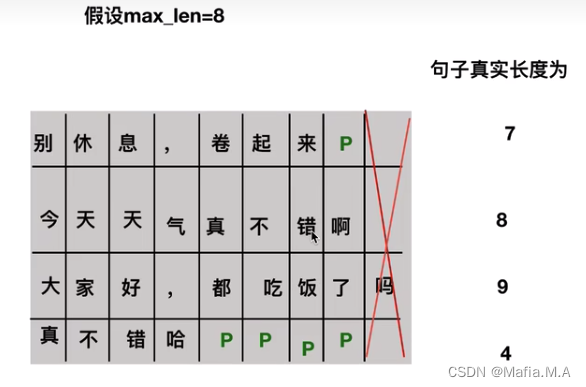

在训练中batch size往往不是1(不止一个样本)。 eg. batch

size设置为4,有四个样本(4组句子),每组句子包含如上3个句子。 下面的图片代表每组句子中的第一个

一个batch在被模型处理时,采用矩阵化运算,若一个batch中句子长度不一致就无法组成有效矩阵

解决方法:设置一个最大长度max_len,大于maxLenth的部分截掉,小于maxLenth的部分用pad字符补齐

encoder解码,从解码端的输入到输出,再把输出拿到解码端作为下一册的输入,该过程无法并行(下一时刻的输入取决于上一时刻的输出);

为加快收敛和训练速度,采用Teacher forcing方法:直接将真实标签作为一种输入,使用mask把后面的单词全部mask住(不让看到当前时刻后面的单词)

# Transformer Parameters

# Padding Should be Zero

## 构建词表

## 编码端词表

src_vocab = {'P': 0, 'ich': 1, 'mochte': 2, 'ein': 3, 'bier': 4}

src_vocab_size = len(src_vocab)

## 解码端词表

tgt_vocab = {'P': 0, 'i': 1, 'want': 2, 'a': 3, 'beer': 4, 'S': 5, 'E': 6}

number_dict = {i: w for i, w in enumerate(tgt_vocab)}

tgt_vocab_size = len(tgt_vocab)

src_len = 5 # length of source 编码端输入长度

tgt_len = 5 # length of target 解码端输入长度

## 模型参数

d_model = 512 # Embedding Size 每个字符转换为embedding时的大小

d_ff = 2048 # FeedForward dimension 前馈神经网络中linear层 映射的维度

d_k = d_v = 64 # dimension of K(=Q), V

n_layers = 6 # number of Encoder of Decoder Layer encoder和decoder堆叠的数量

n_heads = 8 # number of heads in Multi-Head Attention 多头注意力机制有几个头

## 模型部分 最关键部分

model = Transformer()

criterion = nn.CrossEntropyLoss()

optimizer = optim.Adam(model.parameters(), lr=0.001)

enc_inputs, dec_inputs, target_batch = make_batch(sentences)

for epoch in range(20):

optimizer.zero_grad()

outputs, enc_self_attns, dec_self_attns, dec_enc_attns = model(enc_inputs, dec_inputs)

loss = criterion(outputs, target_batch.contiguous().view(-1))

print('Epoch:', '%04d' % (epoch + 1), 'cost =', '{:.6f}'.format(loss))

loss.backward()

optimizer.step()

# Test

predict, _, _, _ = model(enc_inputs, dec_inputs)

predict = predict.data.max(1, keepdim=True)[1]

print(sentences[0], '->', [number_dict[n.item()] for n in predict.squeeze()])

print('first head of last state enc_self_attns')

showgraph(enc_self_attns)

print('first head of last state dec_self_attns')

showgraph(dec_self_attns)

print('first head of last state dec_enc_attns')

showgraph(dec_enc_attns)

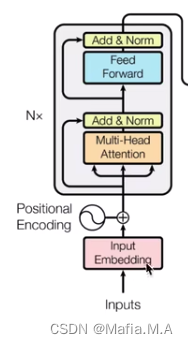

transformer整体结构

从整体网络结构来看,分为三个部分:编码层,解码层,输出层

class Transformer(nn.Module):

#初始化函数:将这三部分列出来

def __init__(self):

super(Transformer, self).__init__()

self.encoder = Encoder() ## 编码层

self.decoder = Decoder() ## 解码层

self.projection = nn.Linear(d_model, tgt_vocab_size, bias=False) ## 输出层 d_model 是解码层每个token输出的维度大小,之后会做一个tat_vocab_size大小的softmax

def forward(self, enc_inputs, dec_inputs):

## 两个数据输入, enc_inputs形状为[batch_size, src_len],作为编码端输入,一个dec_input,形状为[batch_size, tgt_len],作为解码端输入

##enc_inputs作为输入形状为[batch_size,src_len],输出由自己的函数内部指定,想要什么指定输出什么,可以是全部tokens的输出,可以是特定每一层的输出,也可以是中间某些参数的输出;

##enc_outputs就是主要的输出,enc_self_attns这里没记错的是QK转置相乘之后softmax之后的矩阵值,代表的是每个单词和其他单词相关性;

enc_outputs, enc_self_attns = self.encoder(enc_inputs)

## dec_outputs 是decoder主要输出,用于后续的linear映射;dec_self_attns类比于enc_self_attns是查看每个单词对decoder中输入的其余单词的相关性;dec_enc_attns是decoder中每个单词对encoder中每个单词的相关性

dec_outputs, dec_self_attns, dec_enc_attns = self.decoder(dec_inputs, enc_inputs, enc_outputs)

## dec_output做映射到词表大小

dec_logits = self.projection(dec_outputs) # dec_logits : [batch_size x src_vocab_size x tgt_vocab_size]

return dec_logits.view(-1, dec_logits.size(-1)), enc_self_attns, dec_self_attns, dec_enc_attns

Encoder结构

分为三个部分:词向量embedding,位置编码部分,注意力层及后续前馈神经网络

class Encoder(nn.Module):

def __init__(self):

super(Encoder, self).__init__()

self.src_emb = nn.Embedding(src_vocab_size, d_model) ##词向量层 生成一个矩阵 src_vocab_size * d_model

self.pos_emb = nn.Embedding.from_pretrained(get_sinusoid_encoding_table(src_len+1, d_model),freeze=True) ##位置编码情况,这里是固定的正余弦函数,也可以使用类似词向量的nn.Embedding获得一个可以更新学习的位置编码

self.layers = nn.ModuleList([EncoderLayer() for _ in range(n_layers)]) ## 使用ModuleList对多个encoder进行堆叠,因为后续的encoder并没有使用词向量和位置编码,所以抽离出来;

def forward(self, enc_inputs): # enc_inputs : [batch_size x source_len]

## 对于encoder接受一个输入,即enc_input 形状是

## 下面这个代码通过src_emb,进行索引定位,enc_outputs输出形状是[batch_size, src_len, d_model]

## 位置编码,把两者相加放入到了这个函数里面

enc_outputs = self.src_emb(enc_inputs) + self.pos_emb(torch.LongTensor([[1,2,3,4,0]]))

##get_attn_pad_mask是为了得到句子中pad的位置信息,给到模型后面,在计算自注意力和交互注意力的时候去掉pad符号的影响

enc_self_attn_mask = get_attn_pad_mask(enc_inputs, enc_inputs)

enc_self_attns = []

for layer in self.layers:

## 去看EncoderLayer层函数

enc_outputs, enc_self_attn = layer(enc_outputs, enc_self_attn_mask)

enc_self_attns.append(enc_self_attn)

return enc_outputs, enc_self_attns

文章来源:https://blog.csdn.net/weixin_45427596/article/details/135123985

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。 如若内容造成侵权/违法违规/事实不符,请联系我的编程经验分享网邮箱:chenni525@qq.com进行投诉反馈,一经查实,立即删除!

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。 如若内容造成侵权/违法违规/事实不符,请联系我的编程经验分享网邮箱:chenni525@qq.com进行投诉反馈,一经查实,立即删除!

最新文章

- Python教程

- 深入理解 MySQL 中的 HAVING 关键字和聚合函数

- Qt之QChar编码(1)

- MyBatis入门基础篇

- 用Python脚本实现FFmpeg批量转换

- Just Laws -- 中华人民共和国法律文库,简单便捷的打开方式

- 无锡市某厂区工人上岗未穿工作服,殒命车间 富维AI守护每位工友

- Spring Boot统一功能处理(Spring拦截器)

- 如何在openSUSE上进行远程登录和文件传输, ssh服务开启秘钥和密码认证

- 【C++】类和对象

- Redis主从复制详解

- ArkTS API10对语法规则提升了要求

- 响应头设置,预防HTTP响应头缺失漏洞

- c语言:输出一个正方形|练习题

- 淘宝新npm镜像