How to Add Dynamic Watermarks to an RTSP Stream on Android and Relay It to RTMP or a Lightweight RTSP Service

Technical Background

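While working with external developers, we received the following requirement. The customer does underground pipeline inspection. They need to:

- pull the camera's stream;
- overlay real-time position, construction company, construction personnel and similar information;
- output a new RTSP stream;
- record a local copy of the watermarked video.

The camera position changes constantly, so the whole pipeline must keep latency as low as possible. Only then can the operator control the camera.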

Technical Implementation

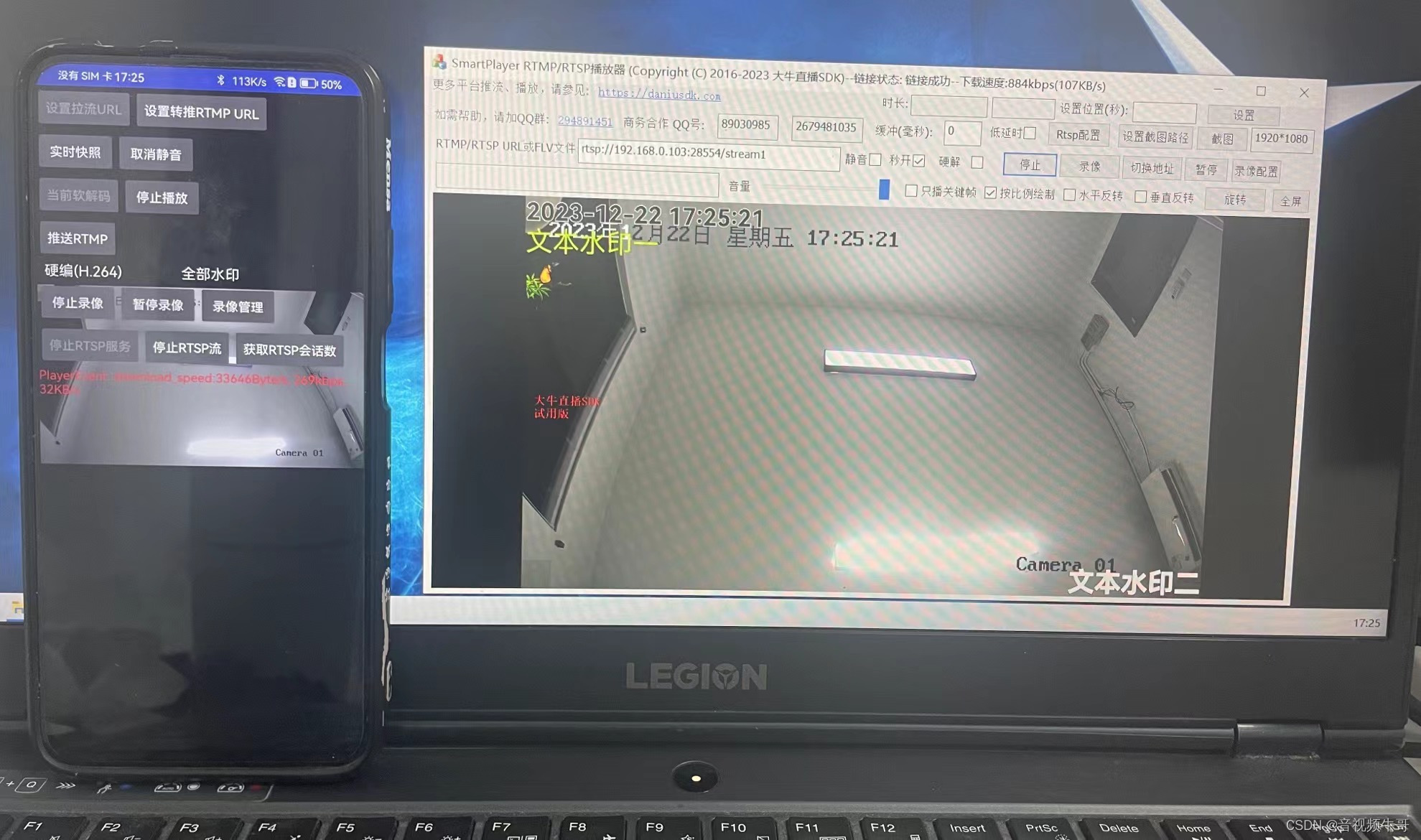

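As shown in the figure above, the Android device first pulls the RTSP stream. The decoded YUV or RGB data is called back and delivered to the publisher as a layer.

To add watermarks, add text or image watermark layers (dynamic watermarks). The SDK overlays them dynamically, then re-encodes and packages the result.

Starting the lightweight RTSP service publishes the processed video as an RTSP stream with a new pull URL. To push to RTMP, simply call the RTMP push interface. For local recording, set the recording directory and related options, and the re-encoded MP4 files are saved locally.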
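A dynamic watermark only helps if its text changes as the camera moves. The article does not show how that text is produced or handed to the watermark layer. As a minimal sketch, assume the fields to show are the time, a location string, the construction unit and the operator. The text could then be assembled like this (`WatermarkText` and its fields are hypothetical, not part of the SDK):

```java
import java.time.Instant;
import java.time.ZoneId;
import java.time.format.DateTimeFormatter;

// Hypothetical helper (not part of the SDK): builds the text drawn by the
// dynamic text-watermark layer. Field names and layout are illustrative.
final class WatermarkText {
    // 24-hour clock; the exact pattern is an arbitrary choice
    private static final DateTimeFormatter TIME_FORMAT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    private WatermarkText() {}

    /** Returns one line per field, joined with '\n'. */
    static String build(Instant now, ZoneId zone, String location,
                        String unit, String operator) {
        String time = TIME_FORMAT.format(now.atZone(zone));
        return String.join("\n",
                "Time: " + time,
                "Location: " + location,
                "Unit: " + unit,
                "Operator: " + operator);
    }
}
```

Rebuild the string once per second, or whenever a new position arrives, then refresh the text layer. Passing the `Instant` and `ZoneId` in, rather than reading the clock inside, keeps the helper deterministic and easy to test.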

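Depending on device performance, you can use software decoding with hardware encoding, or software for both decoding and encoding.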
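In `initialize_publisher()`, shown later, a hardware encoder is configured only when the SDK's support probe returns 0. The software-encoder settings are always applied, presumably so software encoding remains available otherwise. The decision can be sketched in isolation as below. `EncodePath` and `EncoderChooser` are hypothetical names, and the probe stands in for a call such as `SetSmartPublisherVideoHWEncoder()`:

```java
import java.util.function.IntSupplier;

// Encoding paths. H264_HARDWARE / HEVC_HARDWARE correspond to the article's
// videoEncodeType 1 / 2; SOFTWARE is an assumed name for the software path.
enum EncodePath { SOFTWARE, H264_HARDWARE, HEVC_HARDWARE }

final class EncoderChooser {
    private EncoderChooser() {}

    /**
     * Keeps the requested hardware path only if the probe returns 0
     * (initialize_publisher() in the article treats 0 as "supported");
     * any other result falls back to software encoding.
     */
    static EncodePath choose(EncodePath requested, IntSupplier hardwareProbe) {
        if (requested == EncodePath.SOFTWARE) {
            return EncodePath.SOFTWARE; // never probe when software was requested
        }
        return hardwareProbe.getAsInt() == 0 ? requested : EncodePath.SOFTWARE;
    }
}
```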

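First, pulling the camera stream. If local preview is needed, pass the SurfaceView when calling SmartPlayerSetSurface(). If no preview is needed and the data is only processed, simply pass null.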

```java
private boolean StartPlay() {
    if (isPlaying)
        return false;

    if (!isPulling) {
        if (!OpenPullHandle())
            return false;
    }

    // If the second argument is null, nothing is rendered on screen (audio-only playback)
    libPlayer.SmartPlayerSetSurface(player_handle_, sSurfaceView);
    libPlayer.SmartPlayerSetRenderScaleMode(player_handle_, 1);

    // External render: decoded frames are called back as I420 and forwarded to the publisher
    libPlayer.SmartPlayerSetExternalRender(player_handle_, new I420ExternalRender(stream_publisher_));
    //libPlayer.SmartPlayerSetExternalAudioOutput(player_handle_, new PlayerExternalPCMOutput(stream_publisher_));

    libPlayer.SmartPlayerSetFastStartup(player_handle_, isFastStartup ? 1 : 0);
    libPlayer.SmartPlayerSetAudioOutputType(player_handle_, 1);

    if (isMute) {
        libPlayer.SmartPlayerSetMute(player_handle_, 1);
    }

    if (isHardwareDecoder) {
        int isSupportH264HwDecoder = libPlayer.SetSmartPlayerVideoHWDecoder(player_handle_, 1);
        int isSupportHevcHwDecoder = libPlayer.SetSmartPlayerVideoHevcHWDecoder(player_handle_, 1);
        Log.i(TAG, "isSupportH264HwDecoder: " + isSupportH264HwDecoder + ", isSupportHevcHwDecoder: " + isSupportHevcHwDecoder);
    }

    libPlayer.SmartPlayerSetLowLatencyMode(player_handle_, isLowLatency ? 1 : 0);
    libPlayer.SmartPlayerSetRotation(player_handle_, rotate_degrees);

    int iPlaybackRet = libPlayer.SmartPlayerStartPlay(player_handle_);
    if (iPlaybackRet != 0) {
        Log.e(TAG, "StartPlay failed!");
        // Release the handle only if no pull session is still using it;
        // either way, playback did not start, so report failure
        if (!isPulling)
            releasePlayerHandle();
        return false;
    }

    isPlaying = true;

    if (audio_opt_ == 2 || video_opt_ == 2) {
        StartPull();
    }

    return true;
}
```

When playback of the original RTSP camera stream starts, we register an I420 data callback so that the data to be processed is returned to the application:

```java
private static class I420ExternalRender implements NTExternalRender {
    // public static final int NT_FRAME_FORMAT_RGBA = 1;
    // public static final int NT_FRAME_FORMAT_ABGR = 2;
    // public static final int NT_FRAME_FORMAT_I420 = 3;

    private WeakReference<LibPublisherWrapper> publisher_;

    private int width_;
    private int height_;

    private int y_row_bytes_;
    private int u_row_bytes_;
    private int v_row_bytes_;

    private ByteBuffer y_buffer_;
    private ByteBuffer u_buffer_;
    private ByteBuffer v_buffer_;

    public I420ExternalRender(LibPublisherWrapper publisher) {
        if (publisher != null)
            publisher_ = new WeakReference<>(publisher);
    }

    @Override
    public int getNTFrameFormat() {
        Log.i(TAG, "I420ExternalRender::getNTFrameFormat return " + NT_FRAME_FORMAT_I420);
        return NT_FRAME_FORMAT_I420;
    }

    // Round d up to a multiple of a (a must be a power of two)
    private static int align(int d, int a) { return (d + (a - 1)) & ~(a - 1); }

    @Override
    public void onNTFrameSizeChanged(int width, int height) {
        width_ = width;
        height_ = height;

        // I420 chroma planes are half width and half height, rounded up for odd sizes
        int half_w = (width_ + 1) / 2;
        int half_h = (height_ + 1) / 2;

        y_row_bytes_ = align(width_, 2);
        u_row_bytes_ = align(half_w, 2);
        v_row_bytes_ = u_row_bytes_;

        y_buffer_ = ByteBuffer.allocateDirect(y_row_bytes_ * height_);
        u_buffer_ = ByteBuffer.allocateDirect(u_row_bytes_ * half_h);
        v_buffer_ = ByteBuffer.allocateDirect(v_row_bytes_ * half_h);

        Log.i(TAG, "I420ExternalRender::onNTFrameSizeChanged width_=" + width_ + " height_=" + height_
                + " y_row_bytes_=" + y_row_bytes_ + " u_row_bytes_=" + u_row_bytes_
                + " v_row_bytes_=" + v_row_bytes_);
    }

    // Plane buffers the player fills with decoded data: 0 = Y, 1 = U, 2 = V
    @Override
    public ByteBuffer getNTPlaneByteBuffer(int index) {
        if (index == 0) {
            return y_buffer_;
        } else if (index == 1) {
            return u_buffer_;
        } else if (index == 2) {
            return v_buffer_;
        } else {
            Log.e(TAG, "I420ExternalRender::getNTPlaneByteBuffer index error:" + index);
            return null;
        }
    }

    @Override
    public int getNTPlanePerRowBytes(int index) {
        if (index == 0) {
            return y_row_bytes_;
        } else if (index == 1) {
            return u_row_bytes_;
        } else if (index == 2) {
            return v_row_bytes_;
        } else {
            Log.e(TAG, "I420ExternalRender::getNTPlanePerRowBytes index error:" + index);
            return 0;
        }
    }

    public void onNTRenderFrame(int width, int height, long timestamp) {
        if (null == y_buffer_ || null == u_buffer_ || null == v_buffer_)
            return;

        //Log.i(TAG, "I420ExternalRender::onNTRenderFrame w=" + width + " h=" + height + " timestamp=" + timestamp);

        // publisher_ stays null if the constructor received a null publisher
        LibPublisherWrapper publisher = (publisher_ != null) ? publisher_.get() : null;
        if (null == publisher)
            return;

        y_buffer_.rewind();
        u_buffer_.rewind();
        v_buffer_.rewind();

        // Forward the decoded frame to the publisher as an image layer
        publisher.PostLayerImageI420ByteBuffer(0, 0, 0,
                y_buffer_, 0, y_row_bytes_,
                u_buffer_, 0, u_row_bytes_,
                v_buffer_, 0, v_row_bytes_,
                width_, height_, 0, 0,
                0, 0, 0, 0);
    }
}
```

The returned frames are then delivered to the publisher through the PostLayerImageI420ByteBuffer() interface.
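The buffer sizes in onNTFrameSizeChanged() follow from the I420 layout. A full-resolution Y plane is followed by U and V planes at half width and half height, with odd dimensions rounded up. Here is the same arithmetic as a standalone, testable class (the name `I420Layout` is illustrative):

```java
// Plane geometry of an I420 frame, computed the same way as
// onNTFrameSizeChanged() in the render callback above.
final class I420Layout {
    final int yStride;  // bytes per Y row
    final int uvStride; // bytes per U (and V) row
    final int ySize;    // bytes in the Y plane
    final int uvSize;   // bytes in each of the U and V planes

    I420Layout(int width, int height) {
        int halfW = (width + 1) / 2;  // chroma subsampled 2x horizontally, rounded up
        int halfH = (height + 1) / 2; // chroma subsampled 2x vertically, rounded up
        yStride = align(width, 2);
        uvStride = align(halfW, 2);
        ySize = yStride * height;
        uvSize = uvStride * halfH;
    }

    // Round d up to a multiple of a (a must be a power of two)
    static int align(int d, int a) {
        return (d + (a - 1)) & ~(a - 1);
    }

    int totalSize() {
        return ySize + 2 * uvSize;
    }
}
```

For 1920x1080 this gives a 1920-byte Y stride, a 960-byte chroma stride and 3,110,400 bytes in total, or 1.5 bytes per pixel. For odd sizes such as 1279x719, the Y stride is padded to 1280 so every row has an even length.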

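If the audio does not need to be modified, it does not have to be decoded. The player only calls back the audio data before decoding, and that encoded data is posted directly to the publisher.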
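The audio callback below does not allocate a new direct buffer for every packet. It keeps the current buffer while it is large enough. Otherwise it grows the buffer to the requested size plus 512 bytes, rounded up to a multiple of 16.

Here is a standalone sketch of that policy. The class name `ReusableDirectBuffer` is hypothetical. The headroom is a constructor parameter so the same class also covers the 32-byte rule used for the parameter info:

```java
import java.nio.ByteBuffer;

// Grow-only direct buffer, mirroring getAudioByteBuffer(): reuse the current
// buffer when it is big enough, otherwise allocate size + headroom rounded
// up to a multiple of 16 bytes.
final class ReusableDirectBuffer {
    private final int headroom;
    private ByteBuffer buffer;
    private int allocations;

    ReusableDirectBuffer(int headroom) {
        this.headroom = headroom;
    }

    /** Returns a buffer with capacity >= size, or null for size < 1. */
    ByteBuffer obtain(int size) {
        if (size < 1) {
            return null;
        }
        if (buffer != null && size <= buffer.capacity()) {
            return buffer; // large enough: reuse, no allocation
        }
        int capacity = (size + headroom + 0xf) & ~0xf; // round up to 16 bytes
        buffer = ByteBuffer.allocateDirect(capacity);
        allocations++;
        return buffer;
    }

    int allocations() {
        return allocations;
    }
}
```

With a 512-byte headroom, a 100-byte request allocates 624 bytes. Later requests up to 624 bytes reuse the same buffer, so allocation happens only when a packet is larger than anything seen so far.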

```java
class PlayerAudioDataCallback implements NTAudioDataCallback {
    private WeakReference<LibPublisherWrapper> publisher_;
    private int audio_buffer_size = 0;
    private int param_info_size = 0;
    private ByteBuffer audio_buffer_ = null;
    private ByteBuffer parameter_info_ = null;

    public PlayerAudioDataCallback(LibPublisherWrapper publisher) {
        if (publisher != null)
            publisher_ = new WeakReference<>(publisher);
    }

    @Override
    public ByteBuffer getAudioByteBuffer(int size) {
        //Log.i("getAudioByteBuffer", "size: " + size);
        if (size < 1) {
            return null;
        }

        // Reuse the existing buffer if it is large enough
        if (size <= audio_buffer_size && audio_buffer_ != null) {
            return audio_buffer_;
        }

        // Grow with 512 bytes of headroom, rounded up to a multiple of 16
        audio_buffer_size = size + 512;
        audio_buffer_size = (audio_buffer_size + 0xf) & (~0xf);
        audio_buffer_ = ByteBuffer.allocateDirect(audio_buffer_size);
        // Log.i("getAudioByteBuffer", "size: " + size + " buffer_size:" + audio_buffer_size);
        return audio_buffer_;
    }

    @Override
    public ByteBuffer getAudioParameterInfo(int size) {
        //Log.i("getAudioParameterInfo", "size: " + size);
        if (size < 1) {
            return null;
        }

        if (size <= param_info_size && parameter_info_ != null) {
            return parameter_info_;
        }

        // Grow with 32 bytes of headroom, rounded up to a multiple of 16
        param_info_size = size + 32;
        param_info_size = (param_info_size + 0xf) & (~0xf);
        parameter_info_ = ByteBuffer.allocateDirect(param_info_size);
        //Log.i("getAudioParameterInfo", "size: " + size + " buffer_size:" + param_info_size);
        return parameter_info_;
    }

    public void onAudioDataCallback(int ret, int audio_codec_id, int sample_size, int is_key_frame, long timestamp,
                                    int sample_rate, int channel, int parameter_info_size, long reserve) {
        //Log.i("onAudioDataCallback", "ret: " + ret + ", audio_codec_id: " + audio_codec_id + ", sample_size: " + sample_size + ", timestamp: " + timestamp +
        //        ",sample_rate:" + sample_rate);
        if (audio_buffer_ == null)
            return;

        // publisher_ stays null if the constructor received a null publisher
        LibPublisherWrapper publisher = (publisher_ != null) ? publisher_.get() : null;
        if (null == publisher)
            return;

        if (!publisher.is_publishing())
            return;

        audio_buffer_.rewind();

        // Forward the still-encoded audio to the publisher without decoding
        publisher.PostAudioEncodedData(audio_codec_id, audio_buffer_, sample_size, is_key_frame, timestamp,
                parameter_info_, parameter_info_size);
    }
}
```

Note that the resolution of the pulled RTSP stream is reported first, through the player event callback. The encoding bitrate can then be computed from that resolution.
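The article relies on SDK helpers such as `estimate_video_hardware_kbps()` for this step and does not show their formula. Purely to illustrate scaling bitrate with resolution, here is a common bits-per-pixel heuristic. The 0.1 bits-per-pixel factor and the clamp limits in the tests are assumptions, not SDK values. `BitrateEstimator` is hypothetical, and its GOP helper mirrors `fps * 3` from `InitAndSetConfig()`:

```java
// Hypothetical bits-per-pixel heuristic. This is NOT the SDK's
// estimate_video_hardware_kbps(); it only shows how a bitrate can be
// derived from the resolution reported by the player.
final class BitrateEstimator {
    private BitrateEstimator() {}

    /** kbps = width * height * fps * bitsPerPixel / 1000, clamped to [minKbps, maxKbps]. */
    static int estimateKbps(int width, int height, int fps, double bitsPerPixel,
                            int minKbps, int maxKbps) {
        long pixelsPerSecond = (long) width * height * fps; // long avoids int overflow
        long kbps = Math.round(pixelsPerSecond * bitsPerPixel / 1000.0);
        return (int) Math.max(minKbps, Math.min(maxKbps, kbps));
    }

    /** GOP length in frames for a key-frame interval in seconds. */
    static int gopFrames(int fps, int keyFrameIntervalSeconds) {
        return fps * keyFrameIntervalSeconds;
    }
}
```

At 1280x720 and 25 fps, the heuristic gives about 2304 kbps. A 25 fps stream with a 3-second key-frame interval has a 75-frame GOP, which matches the values `InitAndSetConfig()` uses.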

```java
class EventHandlePlayerV2 implements NTSmartEventCallbackV2 {
    @Override
    public void onNTSmartEventCallbackV2(long handle, int id, long param1,
                                         long param2, String param3, String param4, Object param5) {
        //Log.i(TAG, "EventHandleV2: handle=" + handle + " id:" + id);
        String player_event = "";

        switch (id) {
            // ...
            case NTSmartEventID.EVENT_DANIULIVE_ERC_PLAYER_RESOLUTION_INFO:
                player_event = "Resolution info: width: " + param1 + ", height: " + param2;

                // Forward the resolution (width, height) to handler_
                Message message = Message.obtain();
                message.what = PLAYER_EVENT_MSG_RESOLUTION;
                message.arg1 = (int) param1;
                message.arg2 = (int) param2;
                handler_.sendMessage(message);
                break;
            // ......
        }
    }
}
```

Initializing the publisher:

```java
private void InitAndSetConfig() {
    if (null == libPublisher)
        return;

    // Return if the publisher handle has already been created
    if (!stream_publisher_.empty())
        return;

    Log.i(TAG, "InitAndSetConfig video width: " + video_width_ + ", height: " + video_height_);

    long handle = libPublisher.SmartPublisherOpen(context_, audio_opt_, video_opt_, video_width_, video_height_);
    if (0 == handle) {
        Log.e(TAG, "sdk open failed!");
        return;
    }

    Log.i(TAG, "publisherHandle=" + handle);

    int fps = 25;
    int gop = fps * 3; // one key frame every 3 seconds

    initialize_publisher(libPublisher, handle, video_width_, video_height_, fps, gop);
    stream_publisher_.set(libPublisher, handle);
}
```

The corresponding initialize_publisher() implementation:

```java
private boolean initialize_publisher(SmartPublisherJniV2 lib_publisher, long handle, int width, int height, int fps, int gop) {
    if (null == lib_publisher) {
        Log.e(TAG, "initialize_publisher lib_publisher is null");
        return false;
    }

    if (0 == handle) {
        Log.e(TAG, "initialize_publisher handle is 0");
        return false;
    }

    if (videoEncodeType == 1) {
        // H.264 hardware encoder
        int kbps = LibPublisherWrapper.estimate_video_hardware_kbps(width, height, fps, true);
        Log.i(TAG, "h264HWKbps: " + kbps);

        int isSupportH264HWEncoder = lib_publisher.SetSmartPublisherVideoHWEncoder(handle, kbps);
        if (isSupportH264HWEncoder == 0) {
            lib_publisher.SetNativeMediaNDK(handle, 0);
            lib_publisher.SetVideoHWEncoderBitrateMode(handle, 1); // 0:CQ, 1:VBR, 2:CBR
            lib_publisher.SetVideoHWEncoderQuality(handle, 39);
            lib_publisher.SetAVCHWEncoderProfile(handle, 0x08); // 0x01: Baseline, 0x02: Main, 0x08: High

            // lib_publisher.SetAVCHWEncoderLevel(handle, 0x200); // Level 3.1
            // lib_publisher.SetAVCHWEncoderLevel(handle, 0x400); // Level 3.2
            // lib_publisher.SetAVCHWEncoderLevel(handle, 0x800); // Level 4
            lib_publisher.SetAVCHWEncoderLevel(handle, 0x1000); // Level 4.1, sufficient in most cases
            // lib_publisher.SetAVCHWEncoderLevel(handle, 0x2000); // Level 4.2

            // lib_publisher.SetVideoHWEncoderMaxBitrate(handle, ((long) kbps) * 1300);

            Log.i(TAG, "Great, it supports h.264 hardware encoder!");
        }
    } else if (videoEncodeType == 2) {
        // HEVC hardware encoder
        int kbps = LibPublisherWrapper.estimate_video_hardware_kbps(width, height, fps, false);
        Log.i(TAG, "hevcHWKbps: " + kbps);

        int isSupportHevcHWEncoder = lib_publisher.SetSmartPublisherVideoHevcHWEncoder(handle, kbps);
        if (isSupportHevcHWEncoder == 0) {
            lib_publisher.SetNativeMediaNDK(handle, 0);
            lib_publisher.SetVideoHWEncoderBitrateMode(handle, 1); // 0:CQ, 1:VBR, 2:CBR
            lib_publisher.SetVideoHWEncoderQuality(handle, 39);

            // lib_publisher.SetVideoHWEncoderMaxBitrate(handle, ((long) kbps) * 1200);

            Log.i(TAG, "Great, it supports hevc hardware encoder!");
        }
    }

    // H.264 software encoder: VBR mode
    boolean is_sw_vbr_mode = true;
    if (is_sw_vbr_mode) {
        int is_enable_vbr = 1;
        int video_quality = LibPublisherWrapper.estimate_video_software_quality(width, height, true);
        int vbr_max_kbps = LibPublisherWrapper.estimate_video_vbr_max_kbps(width, height, fps);
        lib_publisher.SmartPublisherSetSwVBRMode(handle, is_enable_vbr, video_quality, vbr_max_kbps);
    }

    lib_publisher.SmartPublisherSetAudioCodecType(handle, 1);

    // Use the lib_publisher parameter consistently (not the libPublisher field)
    lib_publisher.SetSmartPublisherEventCallbackV2(handle, new EventHandlePublisherV2());

    lib_publisher.SmartPublisherSetSWVideoEncoderProfile(handle, 3);
    lib_publisher.SmartPublisherSetSWVideoEncoderSpeed(handle, 2);
    lib_publisher.SmartPublisherSetGopInterval(handle, gop);
    lib_publisher.SmartPublisherSetFPS(handle, fps);
    // lib_publisher.SmartPublisherSetSWVideoBitRate(handle, 600, 1200);

    boolean is_noise_suppression = true;
    lib_publisher.SmartPublisherSetNoiseSuppression(handle, is_noise_suppression ? 1 : 0);

    //boolean is_agc = false;
    //lib_publisher.SmartPublisherSetAGC(handle, is_agc ? 1 : 0);

    //int echo_cancel_delay = 0;
    //lib_publisher.SmartPublisherSetEchoCancellation(handle, 1, echo_cancel_delay);

    return true;
}
```

Starting and stopping the RTSP service:

```java
// Start/stop the RTSP service
class ButtonRtspServiceListener implements View.OnClickListener {
    public void onClick(View v) {
        if (isRTSPServiceRunning) {
            stopRtspService();
            btnRtspService.setText("Start RTSP service");
            btnRtspPublisher.setEnabled(false);
            isRTSPServiceRunning = false;
            return;
        }

        Log.i(TAG, "onClick start rtsp service..");

        rtsp_handle_ = libPublisher.OpenRtspServer(0);
        if (rtsp_handle_ == 0) {
            Log.e(TAG, "Failed to create the RTSP server instance! Please check that the SDK is valid.");
            return;
        }

        int port = 28554;
        if (libPublisher.SetRtspServerPort(rtsp_handle_, port) != 0) {
            libPublisher.CloseRtspServer(rtsp_handle_);
            rtsp_handle_ = 0;
            Log.e(TAG, "Failed to set the RTSP server port! Check whether the port is already used or out of range.");
            return; // do not try to start a closed server
        }

        if (libPublisher.StartRtspServer(rtsp_handle_, 0) != 0) {
            libPublisher.CloseRtspServer(rtsp_handle_);
            rtsp_handle_ = 0;
            Log.e(TAG, "Failed to start the RTSP server! Check whether the port is occupied.");
            return; // leave the UI in the "stopped" state
        }

        Log.i(TAG, "RTSP server started successfully!");
        btnRtspService.setText("Stop RTSP service");
        btnRtspPublisher.setEnabled(true);
        isRTSPServiceRunning = true;
    }
}
```

Once the service is running, the watermarked stream can be published to it.
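`SetRtspServerPort()` and `StartRtspServer()` above fail when the port is out of range or already taken. For a clearer error before calling the SDK, a plain-Java pre-check could look like this. `PortCheck` is hypothetical and not part of the SDK:

```java
import java.io.IOException;
import java.net.ServerSocket;

// Hypothetical pre-check for the RTSP service port.
final class PortCheck {
    private PortCheck() {}

    /** TCP ports are 1..65535; 0 means "any port" and cannot be used in a fixed URL. */
    static boolean isValidPort(int port) {
        return port >= 1 && port <= 65535;
    }

    /** Briefly binds the port; returns false if it is invalid or already bound. */
    static boolean isPortFree(int port) {
        if (!isValidPort(port)) {
            return false;
        }
        try (ServerSocket probe = new ServerSocket(port)) {
            return true; // bound successfully; the socket is closed again here
        } catch (IOException e) {
            return false; // typically BindException: address already in use
        }
    }
}
```

The probe is only advisory. Another process can take the port between the check and `StartRtspServer()`, so the SDK's return codes must still be checked, as the listener above does. Regular Android apps cannot bind ports below 1024, so a high port such as 28554 is a safe choice.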

```java
// Publish/stop the RTSP stream
class ButtonRtspPublisherListener implements View.OnClickListener {
    public void onClick(View v) {
        if (stream_publisher_.is_rtsp_publishing()) {
            stopRtspPublisher();
            btnRtspPublisher.setText("Publish RTSP stream");
            btnGetRtspSessionNumbers.setEnabled(false);
            btnRtspService.setEnabled(true);
            return;
        }

        Log.i(TAG, "onClick start rtsp publisher..");

        InitAndSetConfig();

        String rtsp_stream_name = "stream1";
        stream_publisher_.SetRtspStreamName(rtsp_stream_name);
        stream_publisher_.ClearRtspStreamServer();
        stream_publisher_.AddRtspStreamServer(rtsp_handle_);

        if (!stream_publisher_.StartRtspStream()) {
            stream_publisher_.try_release();
            Log.e(TAG, "Failed to call the RTSP stream publish interface!");
            return;
        }

        startLayerPostThread();

        btnRtspPublisher.setText("Stop RTSP stream");
        btnGetRtspSessionNumbers.setEnabled(true);
        btnRtspService.setEnabled(false);
    }
}
```
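With the service on port 28554 and the stream name `stream1`, players on the same network pull the watermarked stream from a URL made of the device address, the port and the stream name. The article does not show how the SDK reports this URL. The sketch below therefore assumes the standard `rtsp://host:port/streamName` form. `RtspUrl` and the example address are hypothetical:

```java
// Hypothetical helper: builds the pull URL for the lightweight RTSP service,
// assuming the standard rtsp://host:port/streamName form. The host is the
// device's LAN address, which the article does not show how to obtain.
final class RtspUrl {
    private RtspUrl() {}

    static String build(String host, int port, String streamName) {
        if (port < 1 || port > 65535) {
            throw new IllegalArgumentException("port out of range: " + port);
        }
        if (streamName == null || streamName.isEmpty() || streamName.contains("/")) {
            throw new IllegalArgumentException("invalid stream name: " + streamName);
        }
        // IPv6 literals must be wrapped in brackets inside a URL
        String hostPart = host.indexOf(':') >= 0 ? "[" + host + "]" : host;
        return "rtsp://" + hostPart + ":" + port + "/" + streamName;
    }
}
```

For example, a device at 192.168.0.105 would serve `rtsp://192.168.0.105:28554/stream1`. That URL can be opened in any RTSP player on the LAN to verify the watermark.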

Getting the number of RTSP sessions:

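```java
// Get the number of RTSP client sessions
class ButtonGetRtspSessionNumbersListener implements View.OnClickListener {
    public void onClick(View v) {
        if (libPublisher != null && rtsp_handle_ != 0) {
            int session_numbers = libPublisher.GetRtspServerClientSessionNumbers(rtsp_handle_);
            Log.i(TAG, "GetRtspSessionNumbers: " + session_numbers);
            PopRtspSessionNumberDialog(session_numbers);
        }
    }
}
```

Besides feeding the data into the lightweight RTSP service, the customer also wanted a local recording of the watermarked stream. The recorder saves the re-encoded MP4 files into a configurable directory.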
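`ConfigRecorderParam()` is where the recording directory and related options are set, but its body is not shown. On Android the directory would typically come from something like `Context.getExternalFilesDir()`. Independent of the SDK, the directory can be created and checked up front, as in this sketch. `RecordPaths` is hypothetical, and the SDK may name the files itself; `fileName()` only illustrates a timestamped scheme:

```java
import java.io.File;
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;

// Hypothetical helpers for preparing a recording directory; not part of the SDK.
final class RecordPaths {
    private static final DateTimeFormatter STAMP =
            DateTimeFormatter.ofPattern("yyyyMMdd_HHmmss");

    private RecordPaths() {}

    /** Creates the directory (and parents) if needed; returns null if it is not usable. */
    static File ensureDir(File dir) {
        if (!dir.isDirectory() && !dir.mkdirs()) {
            return null; // could not create it
        }
        return dir.canWrite() ? dir : null;
    }

    /** Example name for a recording that started at the given local time. */
    static String fileName(String prefix, LocalDateTime start) {
        return prefix + "_" + STAMP.format(start) + ".mp4";
    }
}
```

Checking the directory before `StartRecorder()` turns a storage problem into an explicit error, instead of a recording that silently fails to appear.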

```java
class ButtonPusherStartRecorderListener implements View.OnClickListener {
    public void onClick(View v) {
        if (layer_post_thread_ != null)
            layer_post_thread_.update_layers();

        if (stream_publisher_.is_recording()) {
            stopPusherRecorder();
            btnPusherRecorder.setText("Start recording");
            btnPusherPauseRecorder.setText("Pause recording");
            btnPusherPauseRecorder.setEnabled(false);
            isPauseRecording = true;
            return;
        }

        Log.i(TAG, "onClick start recorder..");

        InitAndSetConfig();
        ConfigRecorderParam();

        boolean start_ret = stream_publisher_.StartRecorder();
        if (!start_ret) {
            stream_publisher_.try_release();
            Log.e(TAG, "Failed to start recorder.");
            return;
        }

        startLayerPostThread();

        btnPusherRecorder.setText("Stop recording");
        btnPusherPauseRecorder.setEnabled(true);
        isPauseRecording = true;
    }
}

class ButtonPauseRecorderListener implements View.OnClickListener {
    public void onClick(View v) {
        if (stream_publisher_.is_recording()) {
            // isPauseRecording == true means the next click pauses; false means it resumes
            if (isPauseRecording) {
                boolean ret = stream_publisher_.PauseRecorder(true);
                if (ret) {
                    isPauseRecording = false;
                    btnPusherPauseRecorder.setText("Resume recording");
                } else {
                    Log.e(TAG, "Pause recorder failed..");
                }
            } else {
                boolean ret = stream_publisher_.PauseRecorder(false);
                if (ret) {
                    isPauseRecording = true;
                    btnPusherPauseRecorder.setText("Pause recording");
                } else {
                    Log.e(TAG, "Resume recorder failed..");
                }
            }
        }
    }
}
```

The processed data can also be relayed to an RTMP server. Set the push URL and start the publisher.
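The push URL in the listener below is hard-coded as `rtmp://192.168.0.108:1935/hls/stream1`. If the URL comes from user input instead, validate it before `SetURL()`; that gives a clearer error than a failed push. Here is a sketch using `java.net.URI`, assuming the usual `app/stream` path layout (`RtmpUrl` is hypothetical):

```java
import java.net.URI;

// Hypothetical helper: splits an RTMP push URL into host, port, application
// and stream name, following the common rtmp://host[:port]/app/stream layout.
// Query strings (e.g. auth tokens) are ignored in this sketch.
final class RtmpUrl {
    static final int DEFAULT_PORT = 1935;

    final String host;
    final int port;
    final String app;
    final String stream;

    private RtmpUrl(String host, int port, String app, String stream) {
        this.host = host;
        this.port = port;
        this.app = app;
        this.stream = stream;
    }

    static RtmpUrl parse(String url) {
        URI uri = URI.create(url); // throws IllegalArgumentException if malformed
        if (!"rtmp".equalsIgnoreCase(uri.getScheme()) || uri.getHost() == null) {
            throw new IllegalArgumentException("not an rtmp://host/... URL: " + url);
        }
        int port = uri.getPort() == -1 ? DEFAULT_PORT : uri.getPort();
        String path = uri.getPath() == null ? "" : uri.getPath();
        String trimmed = path.startsWith("/") ? path.substring(1) : path;
        int slash = trimmed.indexOf('/');
        if (slash <= 0 || slash == trimmed.length() - 1) {
            throw new IllegalArgumentException("expected /app/stream in path: " + path);
        }
        return new RtmpUrl(uri.getHost(), port,
                trimmed.substring(0, slash), trimmed.substring(slash + 1));
    }
}
```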

```java
btnRTMPPusher.setOnClickListener(new Button.OnClickListener() {
    @Override
    public void onClick(View v) {
        if (stream_publisher_.is_rtmp_publishing()) {
            stopPush();
            btnRTMPPusher.setText("Push RTMP");
            return;
        }

        Log.i(TAG, "onClick start push rtmp..");

        InitAndSetConfig();

        String rtmp_pusher_url = "rtmp://192.168.0.108:1935/hls/stream1";
        if (!stream_publisher_.SetURL(rtmp_pusher_url))
            Log.e(TAG, "Failed to set publish stream URL..");

        boolean start_ret = stream_publisher_.StartPublisher();
        if (!start_ret) {
            stream_publisher_.try_release();
            Log.e(TAG, "Failed to start push stream..");
            return;
        }

        startLayerPostThread();

        btnRTMPPusher.setText("Stop pushing");
    }
});
```

Summary

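The code above is sample code for pulling RTSP data on Android and adding dynamic watermarks. The result is output again to a lightweight RTSP service and pushed to an RTMP server, and it can also be recorded locally if needed.

Used together with our RTMP and RTSP players, the overall latency is at the millisecond level. In our measurements it is very low; developers who need it can contact me privately for a test build. This makes the pipeline suitable for camera control.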
