Java Big Data: Installing Hadoop 2.9.2 as a Pseudo-Distributed File System

Reference: Apache Hadoop 3.3.6 – Hadoop: Setting up a Single Node Cluster.

1. Extract the archive to a directory of your choice

/usr/local/hadoop
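
For readers who script this step, a minimal sketch follows. It assumes the download is a gzip-compressed tarball whose contents sit under a single top-level directory; the function name `extract_hadoop` and both of its arguments are hypothetical.

```shell
# Sketch: unpack a Hadoop release tarball into a target directory.
# Assumption: the archive has one top-level directory, which
# --strip-components=1 drops so files land directly in the target.
extract_hadoop() {
    archive="$1"   # path to the downloaded .tar.gz (hypothetical)
    target="$2"    # e.g. /usr/local/hadoop
    mkdir -p "$target" &&
    tar -xzf "$archive" -C "$target" --strip-components=1
}
```

For example, `extract_hadoop hadoop-2.9.2.tar.gz /usr/local/hadoop` (the archive name is an assumption) would leave `bin/`, `sbin/` and `etc/` directly under `/usr/local/hadoop`.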

2. Edit the environment configuration file

/usr/local/hadoop/etc/hadoop/hadoop-env.sh

export JAVA_HOME=/usr/local/javajdk
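
A wrong `JAVA_HOME` is a common reason the daemons fail to start, so it can help to check the path before continuing. The sketch below is only an illustration; `check_java_home` is a hypothetical helper, and it merely tests that an executable `bin/java` exists under the given directory.

```shell
# Sketch: succeed only if the given directory looks like a JDK/JRE home,
# i.e. it is non-empty and contains an executable bin/java.
check_java_home() {
    [ -n "$1" ] && [ -x "$1/bin/java" ]
}
```

Usage, with the path from this article: `check_java_home /usr/local/javajdk || echo "JAVA_HOME looks wrong"`.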

3. Edit the site configuration files

/usr/local/hadoop/etc/hadoop/core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

</configuration>

/usr/local/hadoop/etc/hadoop/hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

</configuration>

4. Test SSH login

cd /usr/local/hadoop/etc/hadoop

ssh localhost

5. Add an SSH key so that later logins do not require a password

ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

chmod 0600 ~/.ssh/authorized_keys
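
Running the `cat ... >>` line more than once appends the same key again each time. As a hedged alternative, the sketch below appends a public key only if that exact line is not already present; `authorize_key` and its two arguments are hypothetical names introduced for illustration.

```shell
# Sketch: append a public key to an authorized_keys file only if that exact
# line is not already present, then restrict the file to its owner.
authorize_key() {
    pub="$1"    # e.g. ~/.ssh/id_rsa.pub
    auth="$2"   # e.g. ~/.ssh/authorized_keys
    touch "$auth" &&
    { grep -qxF -f "$pub" "$auth" || cat "$pub" >> "$auth"; } &&
    chmod 0600 "$auth"
}
```

Usage: `authorize_key ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys`. Calling it repeatedly leaves a single copy of the key in the file.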

Subsequent logins no longer prompt for a password:

ssh localhost

6. Format the NameNode

cd /usr/local/hadoop/bin

Press Enter, then run the command below:

./hdfs namenode -format

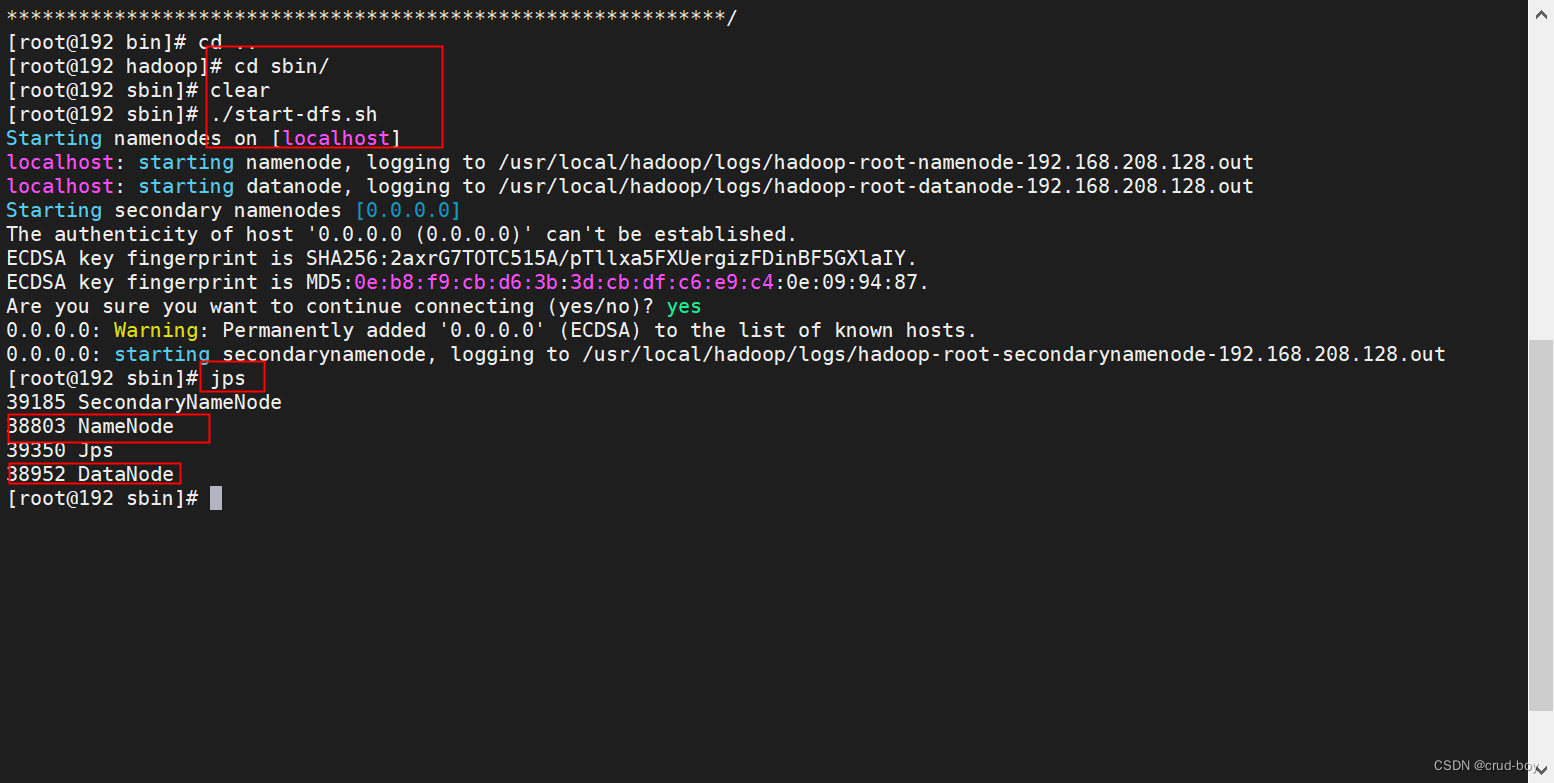
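
Formatting is meant to be done once; formatting again creates a new, empty file system. The sketch below guards against that by checking for the `current/VERSION` file that a formatted NameNode directory contains. `namenode_formatted` is a hypothetical helper, and the directory in the usage line assumes the default `hadoop.tmp.dir` (`/tmp/hadoop-<user>`), since the configuration above does not override it.

```shell
# Sketch: report whether a NameNode storage directory already holds a
# formatted file system, detected by the presence of current/VERSION.
namenode_formatted() {
    [ -f "$1/current/VERSION" ]
}
```

Usage: `namenode_formatted "/tmp/hadoop-$(whoami)/dfs/name" || ./hdfs namenode -format`.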

7. Start the Hadoop pseudo-distributed file system

cd /usr/local/hadoop/sbin

./start-dfs.sh

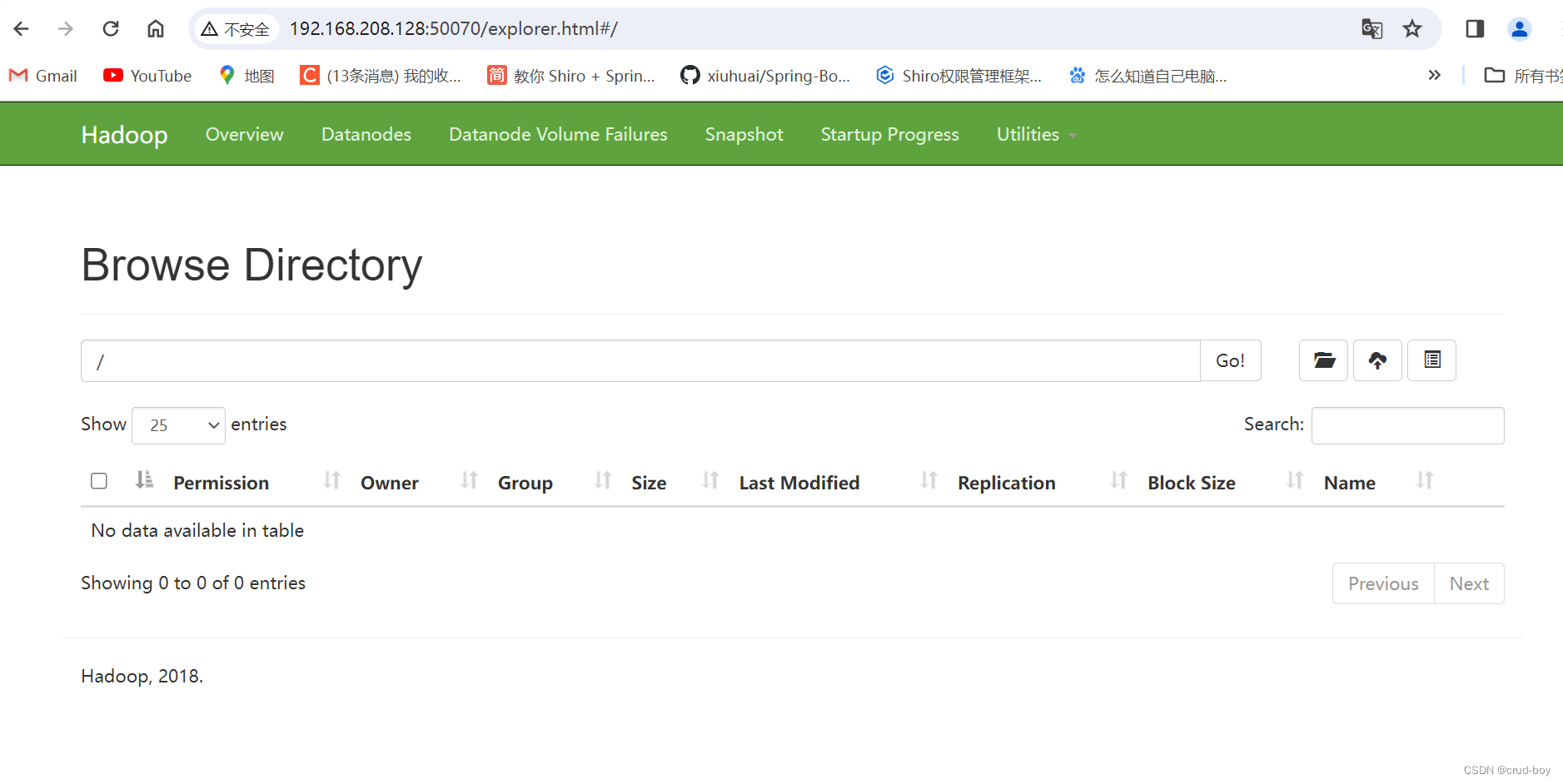
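
One way to confirm that the daemons came up is to inspect the output of the JDK's `jps` tool. The sketch below is an illustration only: `check_hdfs_daemons` is a hypothetical helper that reads `jps` output on standard input and looks for the three processes a single-node HDFS normally runs.

```shell
# Sketch: read `jps` output on stdin and verify that the three HDFS daemons
# expected on a single node are listed.
check_hdfs_daemons() {
    out=$(cat)
    for d in NameNode DataNode SecondaryNameNode; do
        # jps prints "<pid> <main class>"; match the class name exactly
        printf '%s\n' "$out" |
            awk -v d="$d" '$2 == d { found = 1 } END { exit !found }' || {
            echo "missing: $d" >&2
            return 1
        }
    done
}
```

Usage: `jps | check_hdfs_daemons && echo "HDFS is up"`.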

Then open the NameNode web UI in a browser.

8. Create a directory and transfer files

cd /usr/local/hadoop/bin

Create the directory demo:

./hdfs dfs -mkdir /demo

Upload a file to the demo directory:

./hdfs dfs -put ./hdfs.cmd /demo

Download the uploaded file to the local Linux file system:

./hdfs dfs -get /demo/hdfs.cmd /root/
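
The upload and download steps can be combined into a simple round-trip check. This is a sketch under assumptions: `HDFS_BIN` and `hdfs_roundtrip` are hypothetical names, the launcher path defaults to the install location used in this article, and the output directory is expected not to contain a file of the same name already.

```shell
# Sketch: upload a local file to HDFS, download it again, and compare bytes.
# HDFS_BIN is a hypothetical variable pointing at the hdfs launcher.
HDFS_BIN="${HDFS_BIN:-/usr/local/hadoop/bin/hdfs}"

hdfs_roundtrip() {
    src="$1"        # local file to upload
    hdfs_dir="$2"   # HDFS directory, e.g. /demo
    out_dir="$3"    # local directory for the downloaded copy
    name=$(basename "$src")
    "$HDFS_BIN" dfs -mkdir -p "$hdfs_dir" &&
    "$HDFS_BIN" dfs -put -f "$src" "$hdfs_dir" &&
    "$HDFS_BIN" dfs -get "$hdfs_dir/$name" "$out_dir/" &&
    cmp -s "$src" "$out_dir/$name"
}
```

Usage: `hdfs_roundtrip ./hdfs.cmd /demo /root && echo "round trip OK"`. The `-p` and `-f` flags make the directory creation and the upload safe to repeat.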

9. Configure the environment globally so the commands can be run from any directory

vi /etc/profile

Add the following lines:

HADOOP_HOME=/usr/local/hadoop

export HADOOP_HOME

PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

export PATH

Save and exit with :wq.

Run the command below to apply the configuration:

source /etc/profile
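
To confirm that the change took effect in the current shell, the `PATH` entries can be checked directly. The helper below is a minimal sketch; `path_contains` is a hypothetical name, and it only tests for an exact entry in `$PATH`.

```shell
# Sketch: succeed if the given directory is an exact entry in $PATH.
path_contains() {
    case ":$PATH:" in
        *":$1:"*) return 0 ;;
        *)        return 1 ;;
    esac
}
```

Usage: `path_contains /usr/local/hadoop/bin && path_contains /usr/local/hadoop/sbin && echo "PATH is set"`. Running `command -v start-dfs.sh` is another quick check.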

After that, the following can be run from any directory:

start-dfs.sh

to start Hadoop, and

stop-dfs.sh

to stop Hadoop.
