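XTuner InternLM-Chat: Fine-Tuning a Personal Assistant's Self-Identity

1. Overview

Goal: fine-tune the model so that it knows its own identity.

Method: fine-tune with XTuner.

2. Hands-on

2.1 Preparing the fine-tuning environment

Reference commands:

# On the InternStudio platform, clone a local environment that already has PyTorch 2.0.1 (everything below runs in this environment; on other platforms, use these steps as a reference)

# After entering the environment, run bash first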

bash

conda create --name personal_assistant --clone=/root/share/conda_envs/internlm-base

# On other platforms:

# conda create --name personal_assistant python=3.10 -y

# Activate the environment

conda activate personal_assistant

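# Go to the home directory (~ means "the current user's home path")

cd ~

# Create a versioned folder and enter it, to follow along with this tutorial

# personal_assistant holds everything used in this tutorial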

mkdir /root/personal_assistant && cd /root/personal_assistant

mkdir /root/personal_assistant/xtuner019 && cd /root/personal_assistant/xtuner019

# Fetch the v0.1.9 source code

git clone -b v0.1.9 https://github.com/InternLM/xtuner

# If you cannot access GitHub, fetch from Gitee instead:

# git clone -b v0.1.9 https://gitee.com/Internlm/xtuner

# Enter the source directory

cd xtuner

# Install XTuner from source

pip install -e '.[all]'

2.2 Data preparation

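Create a data folder to hold the training dataset:

mkdir -p /root/personal_assistant/data && cd /root/personal_assistant/data

In the data directory, create a JSON file, personal_assistant.json, as the dataset for this fine-tuning run. Its content follows the format described below. A handful of entries is too little data to fine-tune the model effectively, so the entries are copied n times as data augmentation.

Here conversation holds the content of one dialogue, input is the input (the question the user will ask), and output is the output (the answer the model should give).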
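To make the format concrete, here is a minimal sketch of one record plus a small structural check. It assumes the file is a JSON list of records, each with a conversation list of input/output pairs as described above. The sample answer and the helper name validate_dataset are illustrative placeholders, not part of XTuner.

```python
import json

# Hypothetical sample record; the assistant name and wording are placeholders.
sample = [
    {
        "conversation": [
            {
                "input": "请介绍一下你自己",
                "output": "我是某某的小助手",
            }
        ]
    }
]


def validate_dataset(records):
    """Return True if records is a non-empty list of conversations
    whose turns all carry string 'input' and 'output' fields."""
    if not isinstance(records, list) or not records:
        return False
    for record in records:
        turns = record.get("conversation") if isinstance(record, dict) else None
        if not isinstance(turns, list) or not turns:
            return False
        for turn in turns:
            if not isinstance(turn, dict):
                return False
            if not isinstance(turn.get("input"), str):
                return False
            if not isinstance(turn.get("output"), str):
                return False
    return True


if __name__ == "__main__":
    # ensure_ascii=False keeps the Chinese text readable in the output.
    print(json.dumps(sample, ensure_ascii=False, indent=4))
    print(validate_dataset(sample))
```

Running a check like this on personal_assistant.json before training can catch a misspelled key early, which is cheaper than discovering it after the model has been loaded.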

The dataset can be generated with a Python script: create a file named generate_data.py in the data directory, put the generation code in it, and run it.
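A minimal sketch of what such a generate_data.py could look like is shown here. It is not the author's original script: the assistant name, the answer wording, and the repeat count are placeholders to replace with your own, and the two questions are the ones this tutorial later uses as evaluation_inputs.

```python
import json

# Hypothetical placeholders: replace with your own name and wording.
NAME = "某某"
QUESTIONS = ["请介绍一下你自己", "请做一下自我介绍"]
N_REPEATS = 1000  # assumed repeat count; the tutorial only says "n times"


def build_dataset(name, questions, n_repeats):
    """Build single-turn conversations, repeating the question set n_repeats times."""
    answer = f"我是{name}的小助手"
    one_pass = [
        {"conversation": [{"input": question, "output": answer}]}
        for question in questions
    ]
    return one_pass * n_repeats


def write_dataset(path, records):
    """Write records as UTF-8 JSON, keeping Chinese characters readable."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(records, f, ensure_ascii=False, indent=4)


if __name__ == "__main__":
    write_dataset("personal_assistant.json", build_dataset(NAME, QUESTIONS, N_REPEATS))
```

Run from the data directory, this writes personal_assistant.json next to the script, which matches the data_path used in the configuration later on.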

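2.3 Configuration preparation

Download the InternLM-chat-7B model

The share directory on the InternStudio platform already provides the full series of InternLM models, so internlm-chat-7b can be copied with the following commands: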

mkdir -p /root/personal_assistant/model/Shanghai_AI_Laboratory

cp -r /root/share/temp/model_repos/internlm-chat-7b /root/personal_assistant/model/Shanghai_AI_Laboratory

XTuner provides several out-of-the-box configuration files, which can be listed with the following command:

# List all built-in configs

xtuner list-cfg

# Create a config folder to hold the configuration and enter it

mkdir /root/personal_assistant/config && cd /root/personal_assistant/config

Copy a configuration file to the current directory with xtuner copy-cfg ${CONFIG_NAME} ${SAVE_PATH}. In this example (note the trailing period, which means "copy to the current path"):

xtuner copy-cfg internlm_chat_7b_qlora_oasst1_e3 .

Edit the copied file, internlm_chat_7b_qlora_oasst1_e3_copy.py, in place:

# In PART 1

# Location of the pretrained model

pretrained_model_name_or_path = '/root/personal_assistant/model/Shanghai_AI_Laboratory/internlm-chat-7b'

# Location of the fine-tuning data

data_path = '/root/personal_assistant/data/personal_assistant.json'

# Maximum text length during training

max_length = 512

# Size of each training batch

batch_size = 2

# Maximum number of training epochs

max_epochs = 3

# Evaluation frequency, in iterations

evaluation_freq = 90

# Questions used to evaluate the output (keep them consistent with the questions in the dataset where possible)

evaluation_inputs = [ '请介绍一下你自己', '请做一下自我介绍' ]

# In PART 3

dataset=dict(type=load_dataset, path='json', data_files=dict(train=data_path))

dataset_map_fn=None

The modified file reads:

# Copyright (c) OpenMMLab. All rights reserved.

import torch

from bitsandbytes.optim import PagedAdamW32bit

from datasets import load_dataset

from mmengine.dataset import DefaultSampler

from mmengine.hooks import (CheckpointHook, DistSamplerSeedHook, IterTimerHook,

LoggerHook, ParamSchedulerHook)

from mmengine.optim import AmpOptimWrapper, CosineAnnealingLR

from peft import LoraConfig

from transformers import (AutoModelForCausalLM, AutoTokenizer,

BitsAndBytesConfig)

from xtuner.dataset import process_hf_dataset

from xtuner.dataset.collate_fns import default_collate_fn

from xtuner.dataset.map_fns import oasst1_map_fn, template_map_fn_factory

from xtuner.engine import DatasetInfoHook, EvaluateChatHook

from xtuner.model import SupervisedFinetune

from xtuner.utils import PROMPT_TEMPLATE

#######################################################################

# PART 1 Settings #

#######################################################################

# Model

pretrained_model_name_or_path = '/root/personal_assistant/model/Shanghai_AI_Laboratory/internlm-chat-7b'

# Data

data_path = '/root/personal_assistant/data/personal_assistant.json'

prompt_template = PROMPT_TEMPLATE.internlm_chat

max_length = 512

pack_to_max_length = True

# Scheduler & Optimizer

batch_size = 2 # per_device

accumulative_counts = 16

dataloader_num_workers = 0

max_epochs = 3

optim_type = PagedAdamW32bit

lr = 2e-4

betas = (0.9, 0.999)

weight_decay = 0

max_norm = 1 # grad clip

# Evaluate the generation performance during the training

evaluation_freq = 90

SYSTEM = ''

evaluation_inputs = [ '请介绍一下你自己', '请做一下自我介绍' ]

#######################################################################

# PART 2 Model & Tokenizer #

#######################################################################

tokenizer = dict(

type=AutoTokenizer.from_pretrained,

pretrained_model_name_or_path=pretrained_model_name_or_path,

trust_remote_code=True,

padding_side='right')

model = dict(

type=SupervisedFinetune,

llm=dict(

type=AutoModelForCausalLM.from_pretrained,

pretrained_model_name_or_path=pretrained_model_name_or_path,

trust_remote_code=True,

torch_dtype=torch.float16,

quantization_config=dict(

type=BitsAndBytesConfig,

load_in_4bit=True,

load_in_8bit=False,

llm_int8_threshold=6.0,

llm_int8_has_fp16_weight=False,

bnb_4bit_compute_dtype=torch.float16,

bnb_4bit_use_double_quant=True,

bnb_4bit_quant_type='nf4')),

lora=dict(

type=LoraConfig,

r=64,

lora_alpha=16,

lora_dropout=0.1,

bias='none',

task_type='CAUSAL_LM'))

#######################################################################

# PART 3 Dataset & Dataloader #

#######################################################################

train_dataset = dict(

type=process_hf_dataset,

dataset=dict(type=load_dataset, path='json', data_files=dict(train=data_path)),

tokenizer=tokenizer,

max_length=max_length,

dataset_map_fn=None,

template_map_fn=dict(

type=template_map_fn_factory, template=prompt_template),

remove_unused_columns=True,

shuffle_before_pack=True,

pack_to_max_length=pack_to_max_length)

train_dataloader = dict(

batch_size=batch_size,

num_workers=dataloader_num_workers,

dataset=train_dataset,

sampler=dict(type=DefaultSampler, shuffle=True),

collate_fn=dict(type=default_collate_fn))

#######################################################################

# PART 4 Scheduler & Optimizer #

#######################################################################

# optimizer

optim_wrapper = dict(

type=AmpOptimWrapper,

optimizer=dict(

type=optim_type, lr=lr, betas=betas, weight_decay=weight_decay),

clip_grad=dict(max_norm=max_norm, error_if_nonfinite=False),

accumulative_counts=accumulative_counts,

loss_scale='dynamic',

dtype='float16')

# learning policy

# More information: https://github.com/open-mmlab/mmengine/blob/main/docs/en/tutorials/param_scheduler.md # noqa: E501

param_scheduler = dict(

type=CosineAnnealingLR,

eta_min=0.0,

by_epoch=True,

T_max=max_epochs,

convert_to_iter_based=True)

# train, val, test setting

train_cfg = dict(by_epoch=True, max_epochs=max_epochs, val_interval=1)

#######################################################################

# PART 5 Runtime #

#######################################################################

# Log the dialogue periodically during the training process, optional

custom_hooks = [

dict(type=DatasetInfoHook, tokenizer=tokenizer),

dict(

type=EvaluateChatHook,

tokenizer=tokenizer,

every_n_iters=evaluation_freq,

evaluation_inputs=evaluation_inputs,

system=SYSTEM,

prompt_template=prompt_template)

]

# configure default hooks

default_hooks = dict(

# record the time of every iteration.

timer=dict(type=IterTimerHook),

# print log every 10 iterations.

logger=dict(type=LoggerHook, interval=10),

# enable the parameter scheduler.

param_scheduler=dict(type=ParamSchedulerHook),

# save checkpoint per epoch.

checkpoint=dict(type=CheckpointHook, interval=1),

# set sampler seed in distributed environment.

sampler_seed=dict(type=DistSamplerSeedHook),

)

# configure environment

env_cfg = dict(

# whether to enable cudnn benchmark

cudnn_benchmark=False,

# set multi process parameters

mp_cfg=dict(mp_start_method='fork', opencv_num_threads=0),

# set distributed parameters

dist_cfg=dict(backend='nccl'),

)

# set visualizer

visualizer = None

# set log level

log_level = 'INFO'

# load from which checkpoint

load_from = None

# whether to resume training from the loaded checkpoint

resume = False

# Defaults to use random seed and disable `deterministic`

randomness = dict(seed=None, deterministic=False)
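The scheduler settings in PART 1 interact: batch_size is per device and accumulative_counts adds gradient accumulation, so one optimizer update sees more samples than one dataloader batch. A minimal sketch of the arithmetic follows. It assumes a single GPU, that the last partial batch is kept, and a hypothetical count of 200 training samples after packing (with pack_to_max_length = True, the count that matters is the number of packed sequences, not raw JSON records).

```python
import math

# Values taken from PART 1 of the config above.
batch_size = 2            # per device
accumulative_counts = 16  # gradient accumulation steps
max_epochs = 3


def effective_batch_size(per_device, accumulation, num_devices=1):
    """Number of samples that contribute to one optimizer update."""
    return per_device * accumulation * num_devices


def iterations_per_epoch(num_samples, per_device, num_devices=1):
    """Dataloader iterations in one epoch, assuming the last partial batch is kept."""
    return math.ceil(num_samples / (per_device * num_devices))


if __name__ == "__main__":
    # Hypothetical: 200 packed training samples on a single GPU.
    num_packed_samples = 200
    print(effective_batch_size(batch_size, accumulative_counts))
    print(iterations_per_epoch(num_packed_samples, batch_size) * max_epochs)
```

Comparing the total iteration count with evaluation_freq = 90 shows roughly how many times EvaluateChatHook prints sample answers during a run; if the total is below 90, the periodic evaluation may never trigger.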

2.4 Starting fine-tuning

Start training with the xtuner train command:

xtuner train /root/personal_assistant/config/internlm_chat_7b_qlora_oasst1_e3_copy.py

2.5 Converting and merging the fine-tuned parameters

Convert the trained .pth parameters to Hugging Face format

# Create the hf folder to hold the Hugging Face format parameters

cd /root/personal_assistant/config/

mkdir work_dirs

cd work_dirs

mkdir hf

export MKL_SERVICE_FORCE_INTEL=1

# Location of the configuration file

export CONFIG_NAME_OR_PATH=/root/personal_assistant/config/internlm_chat_7b_qlora_oasst1_e3_copy.py

# Location of the .pth parameters produced by training (adjust this if your run wrote work_dirs somewhere else, for example under config/)

export PTH=/root/personal_assistant/work_dirs/internlm_chat_7b_qlora_oasst1_e3_copy/epoch_3.pth

# Where to store the parameters after converting the .pth file to Hugging Face format

export SAVE_PATH=/root/personal_assistant/config/work_dirs/hf

# Run the conversion

xtuner convert pth_to_hf $CONFIG_NAME_OR_PATH $PTH $SAVE_PATH
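The PTH value above hard-codes epoch_3.pth, which matches max_epochs = 3 with a checkpoint saved every epoch. If the epoch count changes, a small helper can pick the newest checkpoint instead. This is a sketch under the assumption that checkpoints are named epoch_<N>.pth inside the run's work directory; latest_epoch_checkpoint is a hypothetical helper, not an XTuner function.

```python
import re
from pathlib import Path


def latest_epoch_checkpoint(work_dir):
    """Return the epoch_<N>.pth entry with the largest N in work_dir,
    or None if no such entry exists."""
    pattern = re.compile(r"^epoch_(\d+)\.pth$")
    best_epoch, best_path = -1, None
    for path in Path(work_dir).iterdir():
        match = pattern.match(path.name)
        if match and int(match.group(1)) > best_epoch:
            best_epoch, best_path = int(match.group(1)), path
    return best_path
```

For example, latest_epoch_checkpoint("/root/personal_assistant/work_dirs/internlm_chat_7b_qlora_oasst1_e3_copy") would return the path to export as PTH. The numeric comparison matters because a plain string sort would rank epoch_9.pth above epoch_10.pth.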

Merge the model parameters
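Merging folds the trained LoRA adapter back into the base model's weights so that the result can be loaded as an ordinary model. As a rough illustration of the arithmetic, the standard LoRA formulation adds a scaled low-rank product to each adapted weight matrix: W' = W + (lora_alpha / r) * B A. The toy example below uses plain Python lists and a rank-1 adapter. It only sketches the formula and does not claim to reproduce what xtuner convert merge does internally, which also covers loading, sharding, and saving.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, lora_b, lora_a, r, lora_alpha):
    """Return W + (lora_alpha / r) * (B @ A) as a new matrix."""
    scale = lora_alpha / r
    delta = matmul(lora_b, lora_a)
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(weight, delta)
    ]


if __name__ == "__main__":
    # Toy 2x2 weight with a rank-1 adapter; the tutorial's config uses r=64, lora_alpha=16.
    W = [[1.0, 0.0], [0.0, 1.0]]
    B = [[1.0], [2.0]]  # shape (2, r)
    A = [[0.5, 0.5]]    # shape (r, 2)
    print(merge_lora(W, B, A, r=1, lora_alpha=2))
```

With the tutorial's values, r=64 and lora_alpha=16, the scale factor would be 16 / 64 = 0.25.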

export MKL_SERVICE_FORCE_INTEL=1

export MKL_THREADING_LAYER='GNU'

# Location of the original model parameters

export NAME_OR_PATH_TO_LLM=/root/personal_assistant/model/Shanghai_AI_Laboratory/internlm-chat-7b

# Location of the Hugging Face format parameters

export NAME_OR_PATH_TO_ADAPTER=/root/personal_assistant/config/work_dirs/hf

# Where to store the final merged parameters

mkdir /root/personal_assistant/config/work_dirs/hf_merge

export SAVE_PATH=/root/personal_assistant/config/work_dirs/hf_merge

# Run the merge

xtuner convert merge \
    $NAME_OR_PATH_TO_LLM \
    $NAME_OR_PATH_TO_ADAPTER \
    $SAVE_PATH \
    --max-shard-size 2GB

2.6 Web demo

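Install the dependencies required by the web demo

pip install streamlit==1.24.0

Download the InternLM project code

# Create a code folder to hold the InternLM project code

mkdir /root/personal_assistant/code && cd /root/personal_assistant/code

git clone https://github.com/InternLM/InternLM.git

In /root/personal_assistant/code/InternLM/web_demo.py, change the model paths on lines 29 and 33 to the path where the merged parameters are stored, /root/personal_assistant/config/work_dirs/hf_merge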

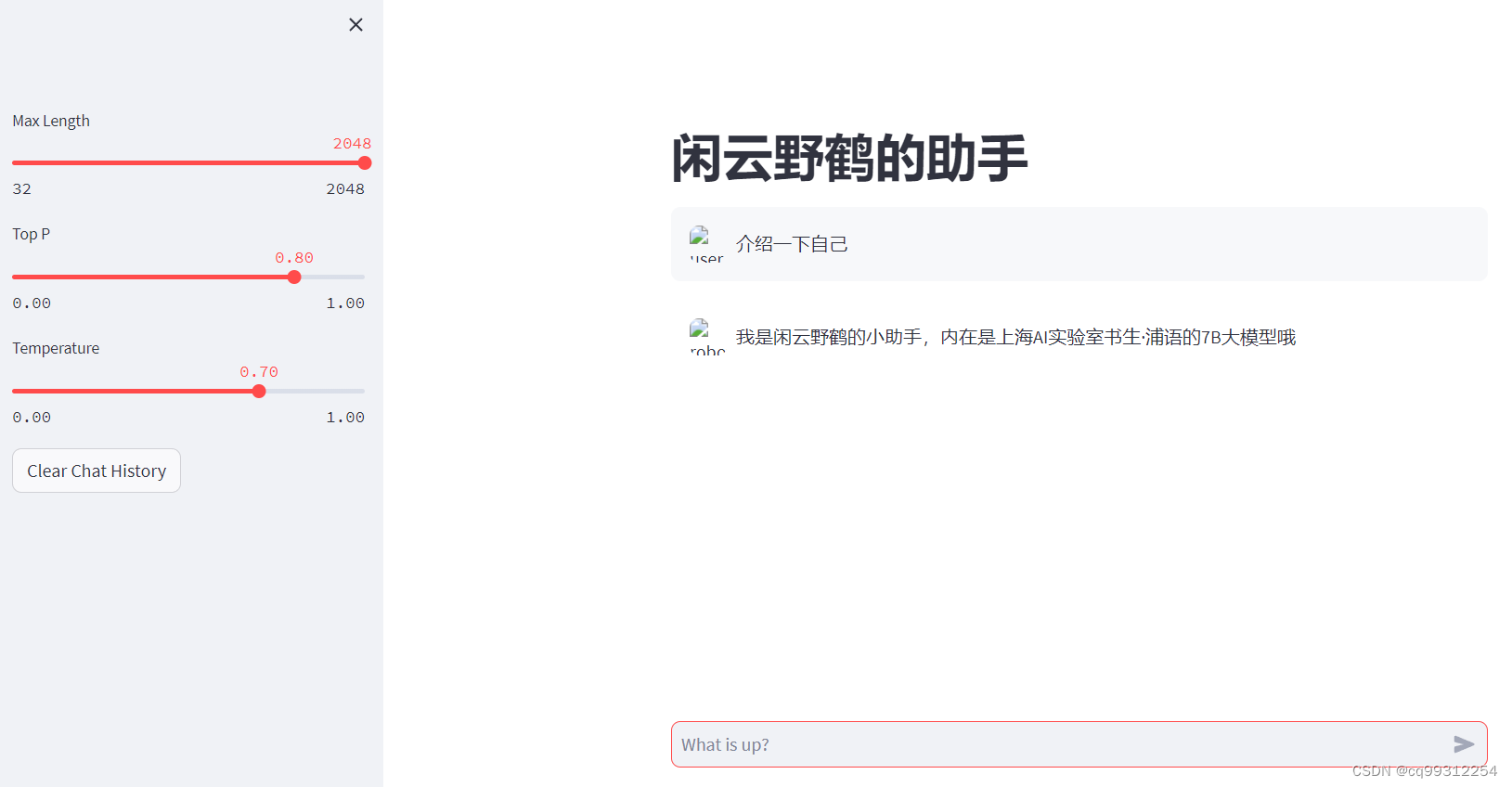
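The same edit can be scripted instead of done by hand. The sketch below replaces a quoted model path wherever it appears in a source string and reports how many replacements were made. replace_model_path is a hypothetical helper, and the old path has to be read from your copy of web_demo.py, since this tutorial does not state what it is.

```python
def replace_model_path(source, old_path, new_path):
    """Replace every quoted occurrence of old_path in source.

    Returns (new_source, count), where count is the number of replacements."""
    count = 0
    for quote in ('"', "'"):
        old = f"{quote}{old_path}{quote}"
        new = f"{quote}{new_path}{quote}"
        count += source.count(old)
        source = source.replace(old, new)
    return source, count


if __name__ == "__main__":
    # In-memory stand-in for the two lines that load the model and the tokenizer.
    snippet = 'model = load("old/model")\ntokenizer = load_tok("old/model")\n'
    merged = "/root/personal_assistant/config/work_dirs/hf_merge"
    updated, replaced = replace_model_path(snippet, "old/model", merged)
    print(replaced)
    print(updated)
```

A count of 2 would be consistent with the two lines mentioned above; any other count is a sign that the file differs from the version this tutorial used and should be edited manually.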

Run web_demo.py in the /root/personal_assistant/code/InternLM directory with the command below, then map the port to your local machine (for port mapping, see the CSDN blog post "轻松玩转书生·浦语大模型internlm-demo 配置验证过程"). Finally, open http://127.0.0.1:6006 in a local browser.

streamlit run /root/personal_assistant/code/InternLM/web_demo.py --server.address 127.0.0.1 --server.port 6006
