Deploying Kubernetes v1.20 from binaries

k8s cluster layout

| Hostname | IP address | Services |
| --- | --- | --- |
| k8smaster01 | 192.168.10.10 | apiserver, controller-manager, scheduler, etcd |
| k8smaster02 | 192.168.10.20 | apiserver, controller-manager, scheduler |
| node01 | 192.168.10.30 | kubelet, kube-proxy, etcd |
| node02 | 192.168.10.40 | kubelet, kube-proxy, etcd |
| Load balancer (master) | 192.168.10.50 | nginx + keepalived |
| Load balancer (backup) | 192.168.10.60 | nginx + keepalived |

Run on k8smaster01, node01 and node02:

systemctl stop firewalld

systemctl disable firewalld

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

setenforce 0

sed -i 's/enforcing/disabled/' /etc/selinux/config

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab
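kubelet refuses to start while swap is active unless it is run with `--fail-swap-on=false`, so it is worth confirming that both swap steps above took effect. A minimal sketch of such a check; the helper names are hypothetical, and the file paths are parameters so the functions can be tried on copies first:

```shell
# Hypothetical preflight helpers (not part of the original scripts).

# active_swap_count: number of active swap areas listed in /proc/swaps (line 1 is a header).
active_swap_count() {
  awk 'NR > 1 { n++ } END { print n + 0 }' "${1:-/proc/swaps}"
}

# uncommented_swap_entries: print fstab lines whose filesystem type (3rd field) is swap
# and that are not commented out, i.e. entries that would re-enable swap at boot.
uncommented_swap_entries() {
  awk '!/^[[:space:]]*#/ && $3 == "swap"' "${1:-/etc/fstab}"
}
```

On a prepared node, `active_swap_count` should print 0 and `uncommented_swap_entries` should print nothing.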

#Run each of the following on its own host (master01, node01 and node02 respectively)
hostnamectl set-hostname master01

hostnamectl set-hostname node01

hostnamectl set-hostname node02
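Since the preparation steps are run on all three hosts, the hostname can also be derived from each host's own address instead of typing a different command per host. A sketch under two assumptions: the IP-to-name mapping is hard-coded from the table above, and the cluster address is the first one printed by `hostname -I`. Both function names are hypothetical:

```shell
# hostname_for_ip: map this guide's node IPs to the hostnames used above.
hostname_for_ip() {
  case "$1" in
    192.168.10.10) echo master01 ;;
    192.168.10.30) echo node01 ;;
    192.168.10.40) echo node02 ;;
    *) return 1 ;;
  esac
}

# set_hostname_from_ip: assumes the first address from `hostname -I` is the cluster address.
set_hostname_from_ip() {
  local ip name
  ip=$(hostname -I | awk '{ print $1 }')
  if ! name=$(hostname_for_ip "$ip"); then
    echo "no hostname defined for $ip" >&2
    return 1
  fi
  hostnamectl set-hostname "$name"
}
```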

vim /etc/hosts

192.168.10.10 master01

192.168.10.30 node01

192.168.10.40 node02
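Editing /etc/hosts by hand appends duplicates if the step is repeated. A sketch of an idempotent alternative; `add_host_entry` and `add_cluster_hosts` are hypothetical names, and the file is a parameter so it can be tested on a copy:

```shell
# add_host_entry: append "IP NAME" to a hosts file unless NAME is already mapped.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  touch "$file"
  # Skip comment lines; on other lines fields 2..NF are host names. Exit 0 means NAME was found.
  if awk -v n="$name" '/^[[:space:]]*#/ { next } { for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$file"; then
    echo "skip: $name is already in $file"
  else
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# add_cluster_hosts: add the three entries used in this guide to FILE (default /etc/hosts).
add_cluster_hosts() {
  local file="${1:-/etc/hosts}"
  add_host_entry "$file" 192.168.10.10 master01
  add_host_entry "$file" 192.168.10.30 node01
  add_host_entry "$file" 192.168.10.40 node02
}
```

Running `add_cluster_hosts` a second time only prints "skip" lines.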

--------------------------------------------------------------------------------

vim /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv6.conf.all.disable_ipv6=1

net.ipv4.ip_forward=1

--------------------------------------------------------------------------------

sysctl --system
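The two `net.bridge.*` keys only exist once the `br_netfilter` kernel module is loaded; otherwise `sysctl --system` reports them as missing files under /proc/sys. A minimal sketch that writes the same settings and then checks the live values; the helper names are hypothetical, and the output path is a parameter so it can be pointed at a scratch file first:

```shell
# write_k8s_sysctl: write the same four settings as /etc/sysctl.d/k8s.conf above.
write_k8s_sysctl() {
  cat > "$1" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv6.conf.all.disable_ipv6=1
net.ipv4.ip_forward=1
EOF
}

# sysctl_path: map a sysctl key to its file under /proc/sys (dots become slashes).
sysctl_path() {
  printf '/proc/sys/%s\n' "${1//.//}"
}

# check_sysctl: compare the live value of KEY with EXPECTED and report mismatches.
check_sysctl() {
  local key="$1" expected="$2" file value
  file=$(sysctl_path "$key")
  if [ ! -r "$file" ]; then
    echo "$key: $file does not exist (is br_netfilter loaded?)" >&2
    return 1
  fi
  value=$(cat "$file")
  if [ "$value" != "$expected" ]; then
    echo "$key = $value, expected $expected" >&2
    return 1
  fi
  echo "$key = $value"
}
```

On a node this would be used as `modprobe br_netfilter`, then `write_k8s_sysctl /etc/sysctl.d/k8s.conf`, `sysctl --system` and finally `check_sysctl net.bridge.bridge-nf-call-iptables 1`.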

yum -y install ntpdate

ntpdate ntp.aliyun.com

#Deploy the Docker engine

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum install -y docker-ce docker-ce-cli containerd.io

systemctl start docker.service

systemctl enable docker.service

#This is the end of the steps run on all three hosts

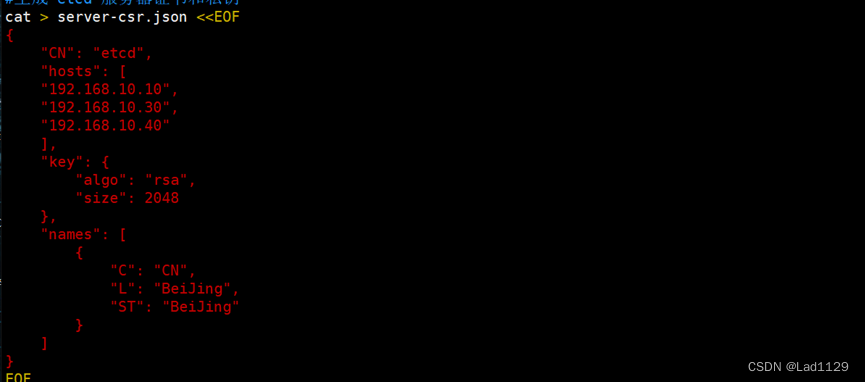

Deploy the etcd cluster (on master01)

mkdir -p /opt/k8s

cd /opt/k8s/

#Upload cfssl, cfssljson and cfssl-certinfo to /opt/k8s/

mv cfssl cfssljson cfssl-certinfo /usr/local/bin/

chmod 777 /usr/local/bin/cfssl*

Upload etcd-cert.sh and etcd.sh to the /opt/k8s/ directory

chmod 777 etcd-cert.sh etcd.sh

mkdir /opt/k8s/etcd-cert

mv etcd-cert.sh etcd-cert/

cd /opt/k8s/etcd-cert/

#Edit the script to match your environment (for example the etcd node IP addresses)
vim etcd-cert.sh

./etcd-cert.sh

#Upload etcd-v3.4.9-linux-amd64.tar.gz to /opt/k8s, then start the etcd service

cd /opt/k8s/

tar zxvf etcd-v3.4.9-linux-amd64.tar.gz

mkdir -p /opt/etcd/{cfg,bin,ssl}

cd /opt/k8s/etcd-v3.4.9-linux-amd64/

mv etcd etcdctl /opt/etcd/bin/

cp /opt/k8s/etcd-cert/*.pem /opt/etcd/ssl/

cd /opt/k8s/

#Arguments: this node's etcd name, this node's IP, and the other cluster members
./etcd.sh etcd01 192.168.10.10 etcd02=https://192.168.10.30:2380,etcd03=https://192.168.10.40:2380
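The third argument repeats the pattern `name=https://IP:2380` for each of the other members. A small sketch that builds it from `name=ip` pairs; `build_peer_list` and `PEER_PORT` are hypothetical names, with the port defaulting to the 2380 used in the command:

```shell
# build_peer_list: turn "name=ip" pairs into the comma-separated member list
# passed to etcd.sh, e.g. etcd02=https://192.168.10.30:2380.
build_peer_list() {
  local port="${PEER_PORT:-2380}" out="" pair
  for pair in "$@"; do
    # ${pair%%=*} is the member name, ${pair#*=} the IP address.
    out+="${out:+,}${pair%%=*}=https://${pair#*=}:${port}"
  done
  printf '%s\n' "$out"
}
```

With it, the command becomes `./etcd.sh etcd01 192.168.10.10 "$(build_peer_list etcd02=192.168.10.30 etcd03=192.168.10.40)"`.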

#The command may appear to hang until the other members join; check the etcd process from a second terminal

ps -ef | grep etcd

#Copy the etcd certificates, binaries and systemd unit file to the other two etcd nodes

scp -r /opt/etcd/ root@192.168.10.30:/opt/

scp -r /opt/etcd/ root@192.168.10.40:/opt/

scp /usr/lib/systemd/system/etcd.service root@192.168.10.30:/usr/lib/systemd/system/

scp /usr/lib/systemd/system/etcd.service root@192.168.10.40:/usr/lib/systemd/system/

On node01:

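#Change the member name from etcd01 to etcd02 and this node's listen/advertise IP addresses to 192.168.10.30
vim /opt/etcd/cfg/etcd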
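The hand edit can also be scripted. This sketch assumes the file generated by etcd.sh uses etcd's standard environment variable names (`ETCD_NAME`, and variables ending in `_URLS` for the listen/advertise addresses) and keeps the full member list in `ETCD_INITIAL_CLUSTER`, which must stay identical on every member; check your copy before relying on it. `retarget_etcd_conf` is a hypothetical name, and `sed -i` as used here is GNU sed:

```shell
# retarget_etcd_conf FILE NAME IP: set ETCD_NAME and point every *_URLS line at IP,
# leaving ETCD_INITIAL_CLUSTER (which contains no "_URLS=") untouched.
retarget_etcd_conf() {
  local file="$1" name="$2" ip="$3"
  sed -i \
    -e "s/^ETCD_NAME=.*/ETCD_NAME=\"${name}\"/" \
    -e "/_URLS=/s#https://[0-9.]*:#https://${ip}:#g" \
    "$file"
}
```

On node01 this would be `retarget_etcd_conf /opt/etcd/cfg/etcd etcd02 192.168.10.30`; on node02, `retarget_etcd_conf /opt/etcd/cfg/etcd etcd03 192.168.10.40`.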

systemctl start etcd

systemctl enable etcd

systemctl status etcd

On node02:

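#Change the member name from etcd01 to etcd03 and this node's listen/advertise IP addresses to 192.168.10.40
vim /opt/etcd/cfg/etcd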

systemctl start etcd

systemctl enable etcd

systemctl status etcd

#Check the health of the etcd cluster

ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.10.10:2379,https://192.168.10.30:2379,https://192.168.10.40:2379" endpoint health --write-out=table

#List the etcd cluster members

ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.10.10:2379,https://192.168.10.30:2379,https://192.168.10.40:2379" --write-out=table member list
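Both checks repeat the same TLS and endpoint options. A sketch of a wrapper that builds them once; the paths and IPs are copied from the commands above, while `ETCD_BIN`, `ETCD_SSL`, `ETCD_IPS`, `build_endpoints` and `etcdctl_tls` are hypothetical names:

```shell
# Assumed values, taken from the commands above; ETCD_BIN and ETCD_SSL can be overridden.
ETCD_BIN="${ETCD_BIN:-/opt/etcd/bin/etcdctl}"
ETCD_SSL="${ETCD_SSL:-/opt/etcd/ssl}"
ETCD_IPS=(192.168.10.10 192.168.10.30 192.168.10.40)

# build_endpoints: "https://IP:2379" for each IP, joined with commas.
build_endpoints() {
  local ip out=""
  for ip in "$@"; do
    out+="${out:+,}https://${ip}:2379"
  done
  printf '%s\n' "$out"
}

# etcdctl_tls: run etcdctl (v3 API) against all members with the cluster's TLS files;
# any extra arguments are passed through unchanged.
etcdctl_tls() {
  ETCDCTL_API=3 "$ETCD_BIN" \
    --cacert="$ETCD_SSL/ca.pem" \
    --cert="$ETCD_SSL/server.pem" \
    --key="$ETCD_SSL/server-key.pem" \
    --endpoints="$(build_endpoints "${ETCD_IPS[@]}")" \
    "$@"
}
```

The two checks then shorten to `etcdctl_tls endpoint health --write-out=table` and `etcdctl_tls --write-out=table member list`.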

On master01:

#Upload master.zip and k8s-cert.sh to /opt/k8s, then unzip master.zip

cd /opt/k8s/

unzip master.zip

chmod 777 *.sh

#Update the IP addresses in admin.sh and scheduler.sh to match your master
vim /opt/k8s/admin.sh

vim /opt/k8s/scheduler.sh

#Create the Kubernetes working directories

mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

#Create a directory for generating the CA certificate and the component certificates and private keys

mkdir /opt/k8s/k8s-cert

mv /opt/k8s/k8s-cert.sh /opt/k8s/k8s-cert

cd /opt/k8s/k8s-cert/

vim /opt/k8s/k8s-cert/k8s-cert.sh

./k8s-cert.sh

cp ca*pem apiserver*pem /opt/kubernetes/ssl/

#Upload kubernetes-server-linux-amd64.tar.gz to /opt/k8s/ and extract it

cd /opt/k8s/

tar zxvf kubernetes-server-linux-amd64.tar.gz

cd /opt/k8s/kubernetes/server/bin

cp kube-apiserver kubectl kube-controller-manager kube-scheduler /opt/kubernetes/bin/

ln -s /opt/kubernetes/bin/* /usr/local/bin/

cd /opt/k8s/

vim token.sh

************************************************

#!/bin/bash

#Read 16 bytes of random data, print them in hexadecimal and delete the spaces

BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')

#Write token.csv in the format: token,user name,UID,user group

cat > /opt/kubernetes/cfg/token.csv <<EOF

${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

***********************************************

chmod 777 token.sh

./token.sh

cat /opt/kubernetes/cfg/token.csv
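The file follows the CSV layout that kube-apiserver's `--token-auth-file` option expects (token, user name, UID, then groups); presumably apiserver.sh passes it that way, although the script itself is not shown here. A small sanity check of the generated line, with a hypothetical function name:

```shell
# check_token_csv: verify the first non-empty line of token.csv has a 32-character
# lowercase hex token (16 random bytes from token.sh) and the kubelet-bootstrap user.
check_token_csv() {
  local file="${1:-/opt/kubernetes/cfg/token.csv}" line token user uid group
  line=$(grep -m 1 . "$file") || { echo "no entries in $file" >&2; return 1; }
  IFS=, read -r token user uid group <<< "$line"
  if [[ ! $token =~ ^[0-9a-f]{32}$ ]]; then
    echo "unexpected token format: $token" >&2
    return 1
  fi
  if [[ $user != kubelet-bootstrap ]]; then
    echo "unexpected user: $user" >&2
    return 1
  fi
  echo "ok: user=$user uid=$uid groups=$group"
}
```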

cd /opt/k8s/

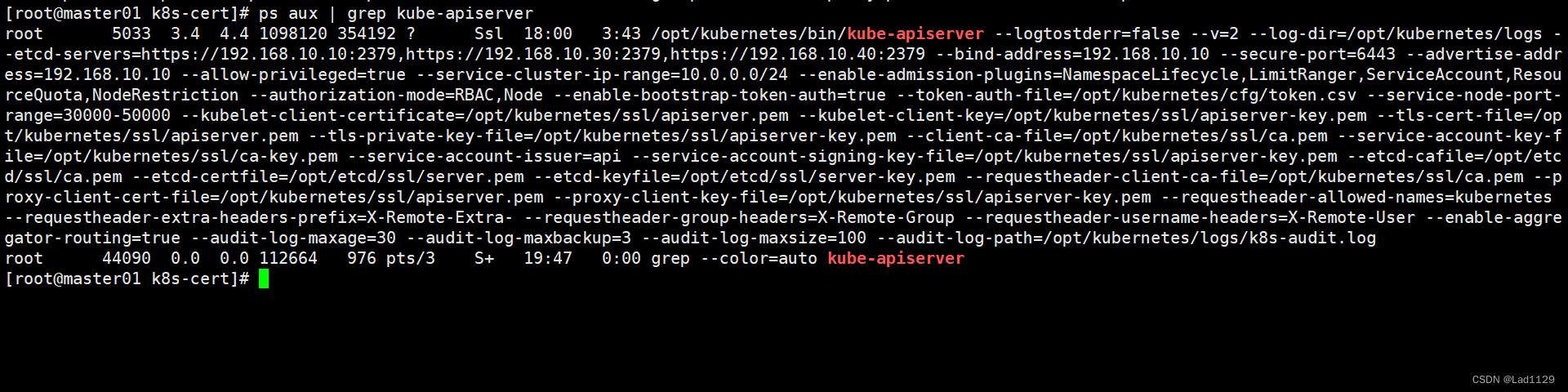

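#Start kube-apiserver; the arguments are the master IP and the etcd client endpoints
./apiserver.sh 192.168.10.10 https://192.168.10.10:2379,https://192.168.10.30:2379,https://192.168.10.40:2379

#Check that the process started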

ps aux | grep kube-apiserver

#The secure port 6443 accepts HTTPS requests and authenticates clients by token file or client certificate

netstat -natp | grep 6443
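`netstat` belongs to the net-tools package, which minimal CentOS installs may not include (`ss -ntlp` from iproute2 lists listening ports too). As another option, a sketch that waits until a port accepts TCP connections using only bash's `/dev/tcp` redirection and coreutils `timeout`; the function names are hypothetical:

```shell
# port_open: succeed if a TCP connection to HOST:PORT can be opened within 1 second.
port_open() {
  timeout 1 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# wait_for_port: poll HOST:PORT up to TRIES times (default 30), one second apart.
wait_for_port() {
  local host="$1" port="$2" tries="${3:-30}" i
  for ((i = 1; i <= tries; i++)); do
    if port_open "$host" "$port"; then
      echo "$host:$port is accepting connections"
      return 0
    fi
    sleep 1
  done
  echo "$host:$port not reachable after $tries tries" >&2
  return 1
}
```

For example, `wait_for_port 192.168.10.10 6443` after `./apiserver.sh` blocks until the secure port is up or about 30 seconds pass.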

#Start the scheduler service

cd /opt/k8s/

./scheduler.sh

ps aux | grep kube-scheduler

#Start the controller-manager service

./controller-manager.sh

ps aux | grep kube-controller-manager

#Generate the kubeconfig file kubectl uses to connect to the cluster

./admin.sh

#Check the status of the cluster components with kubectl

kubectl get cs

#Show version information

kubectl version

On all node hosts:

#Create the Kubernetes working directories

mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

#Upload node.zip to /opt and unzip it to get kubelet.sh and proxy.sh

cd /opt/

unzip node.zip

chmod 777 kubelet.sh proxy.sh

On master01:

#Copy kubelet and kube-proxy to the node hosts

cd /opt/k8s/kubernetes/server/bin

scp kubelet kube-proxy root@192.168.10.30:/opt/kubernetes/bin/

scp kubelet kube-proxy root@192.168.10.40:/opt/kubernetes/bin/

#Create /opt/k8s/kubeconfig and upload kubeconfig.sh into it, then generate the bootstrap kubeconfig the kubelet uses to join the cluster for the first time, plus kube-proxy.kubeconfig

mkdir /opt/k8s/kubeconfig

cd /opt/k8s/kubeconfig

chmod 777 kubeconfig.sh

./kubeconfig.sh 192.168.10.10 /opt/k8s/k8s-cert/

#Copy bootstrap.kubeconfig and kube-proxy.kubeconfig to the node hosts

scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.10.30:/opt/kubernetes/cfg/

scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.10.40:/opt/kubernetes/cfg/

#RBAC: allow the user kubelet-bootstrap to submit CSRs (certificate signing requests)

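kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

#If that fails, first bind the default cluster-admin role to the anonymous user (system:anonymous). This gives unauthenticated requests full admin rights over the whole cluster, so use it only in a test environment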

kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=system:anonymous

On node01:

#Start the kubelet service

cd /opt/


./kubelet.sh 192.168.10.30

ps aux | grep kubelet

#Load the ip_vs kernel modules

for i in $(ls /usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs | grep -o "^[^.]*"); do
  echo $i
  /sbin/modinfo -F filename $i >/dev/null 2>&1 && /sbin/modprobe $i
done

#Start the kube-proxy service

cd /opt/

./proxy.sh 192.168.10.30

ps aux | grep kube-proxy

On master01, approve the CSR:

#Check the CSR submitted by node01's kubelet; Pending means it is waiting for the cluster to issue the node's certificate

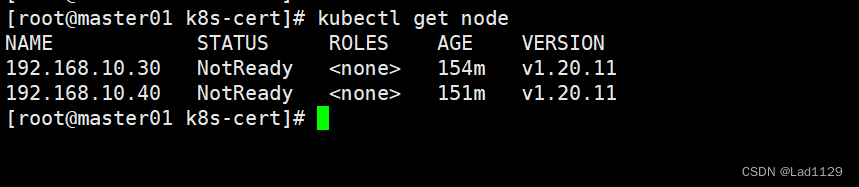

kubectl get csr

#Approve it, using the CSR name from your own output
kubectl certificate approve node-csr-RUWMAWXqd9drfutcgwEiBZdFoxeYQ1G0S_n64sbItrk
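Before repeating the node steps on node02: the ip_vs loop is pasted unchanged on every node, so it can be kept as two functions instead. This is a hypothetical refactor with the same modinfo/modprobe calls and the same name-up-to-the-first-dot rule as `grep -o "^[^.]*"`; note that modules loaded with modprobe this way are not reloaded after a reboot:

```shell
# ipvs_modules: print the module names found in the kernel's ipvs directory,
# stripping everything from the first dot (ip_vs_rr.ko.xz becomes ip_vs_rr).
ipvs_modules() {
  local dir="${1:-/usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs}" f
  for f in "$dir"/*; do
    [ -e "$f" ] || continue   # the glob stays literal when the directory is empty or missing
    f="${f##*/}"
    printf '%s\n' "${f%%.*}"
  done
}

# load_ipvs_modules: load each module that modinfo can resolve (needs root).
load_ipvs_modules() {
  local m
  for m in $(ipvs_modules "$@"); do
    if /sbin/modinfo -F filename "$m" >/dev/null 2>&1; then
      /sbin/modprobe "$m" && echo "loaded $m"
    fi
  done
}
```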

On node02:

#Start the kubelet service

cd /opt/


./kubelet.sh 192.168.10.40

ps aux | grep kubelet

#Load the ip_vs kernel modules

for i in $(ls /usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs | grep -o "^[^.]*"); do
  echo $i
  /sbin/modinfo -F filename $i >/dev/null 2>&1 && /sbin/modprobe $i
done

#Start the kube-proxy service

cd /opt/

./proxy.sh 192.168.10.40

ps aux | grep kube-proxy

On master01, approve the CSR:

#Check the CSR submitted by node02's kubelet; Pending means it is waiting for the cluster to issue the node's certificate

kubectl get csr

#Approve it, using the CSR name from your own output
kubectl certificate approve node-csr-fLl1kgIHeqVyETUqSeqHlNmHthIQJGbuWfFm8WUXLVg
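With several nodes joining, the pending requests can be approved in one pass instead of copying each name by hand. This sketch assumes the CONDITION column is the last one in `kubectl get csr` output (as in the v1.20 layout NAME, AGE, SIGNERNAME, REQUESTOR, CONDITION), and the function names are hypothetical. It approves every pending request, so only run it when all of them are expected:

```shell
# pending_csrs: read `kubectl get csr` output on stdin and print the names of requests
# whose last column (CONDITION) is exactly "Pending"; the header line is skipped.
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# approve_pending_csrs: approve every pending CSR (run on master01, where kubectl is configured).
approve_pending_csrs() {
  local name
  kubectl get csr | pending_csrs | while read -r name; do
    kubectl certificate approve "$name"
  done
}
```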
