[AI] Using Netron, a Model Structure Visualization Tool

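As AI models evolve, their architectures keep getting more complex and harder to understand. A structure diagram would help here. Like the UML class diagrams used in software development, it would show at a glance how the layers relate to each other. That is what Netron provides.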

Netron visualizes neural network, deep learning, and machine learning models. It supports ONNX, TensorFlow Lite, Core ML, Keras, Caffe, Darknet, MXNet, PaddlePaddle, ncnn, MNN, and TensorFlow.js. It also has experimental support for PyTorch, TorchScript, TensorFlow, OpenVINO, RKNN, MediaPipe, ML.NET, and scikit-learn.

1. Installation and Running

Netron can be used online or offline. The online version runs directly in the browser:

Online address: https://netron.app/

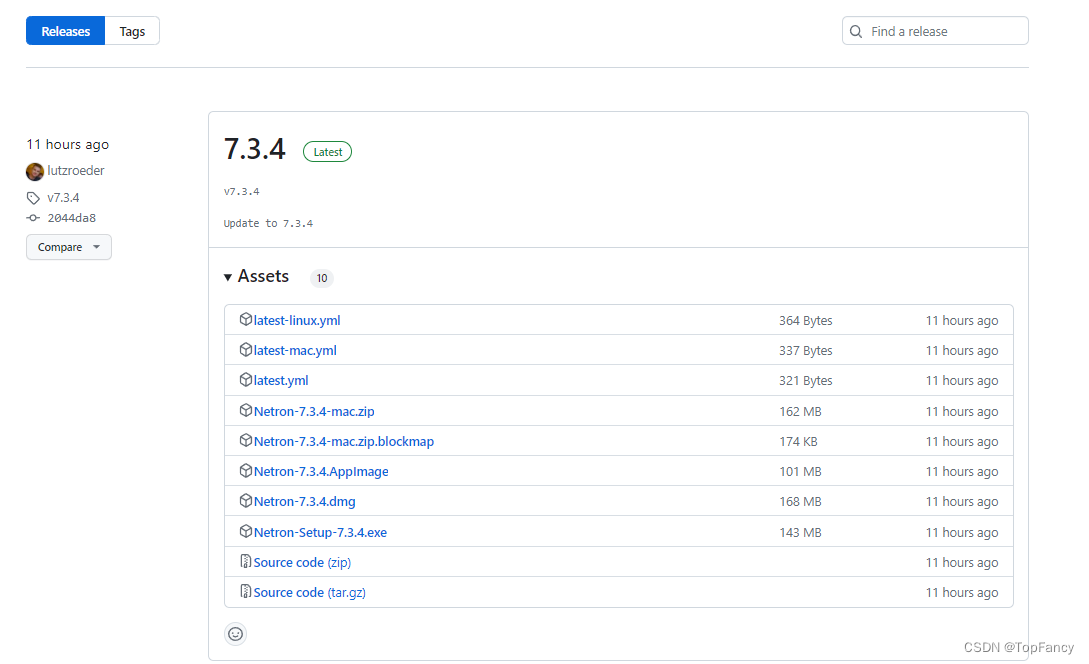

You can also install it locally with the official installers. The latest release can be downloaded from GitHub:

GitHub releases: https://github.com/lutzroeder/netron/releases/

2. Usage

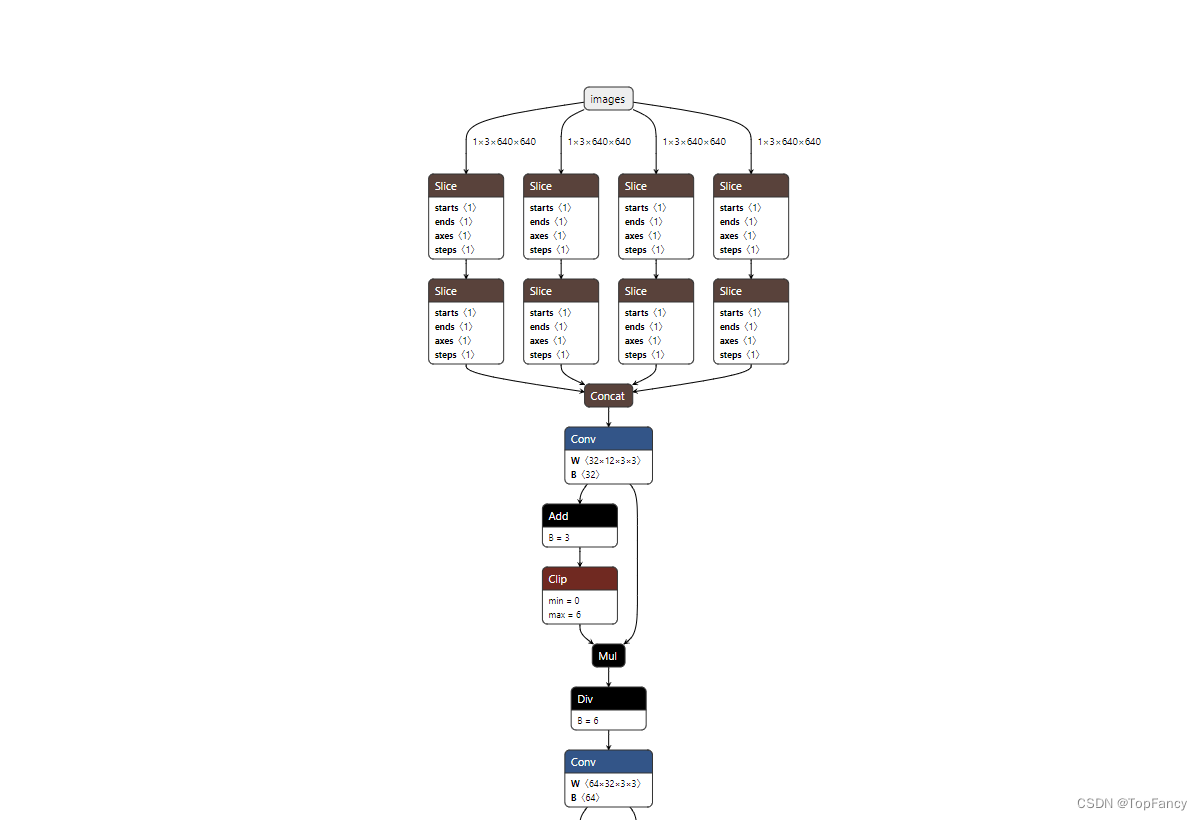

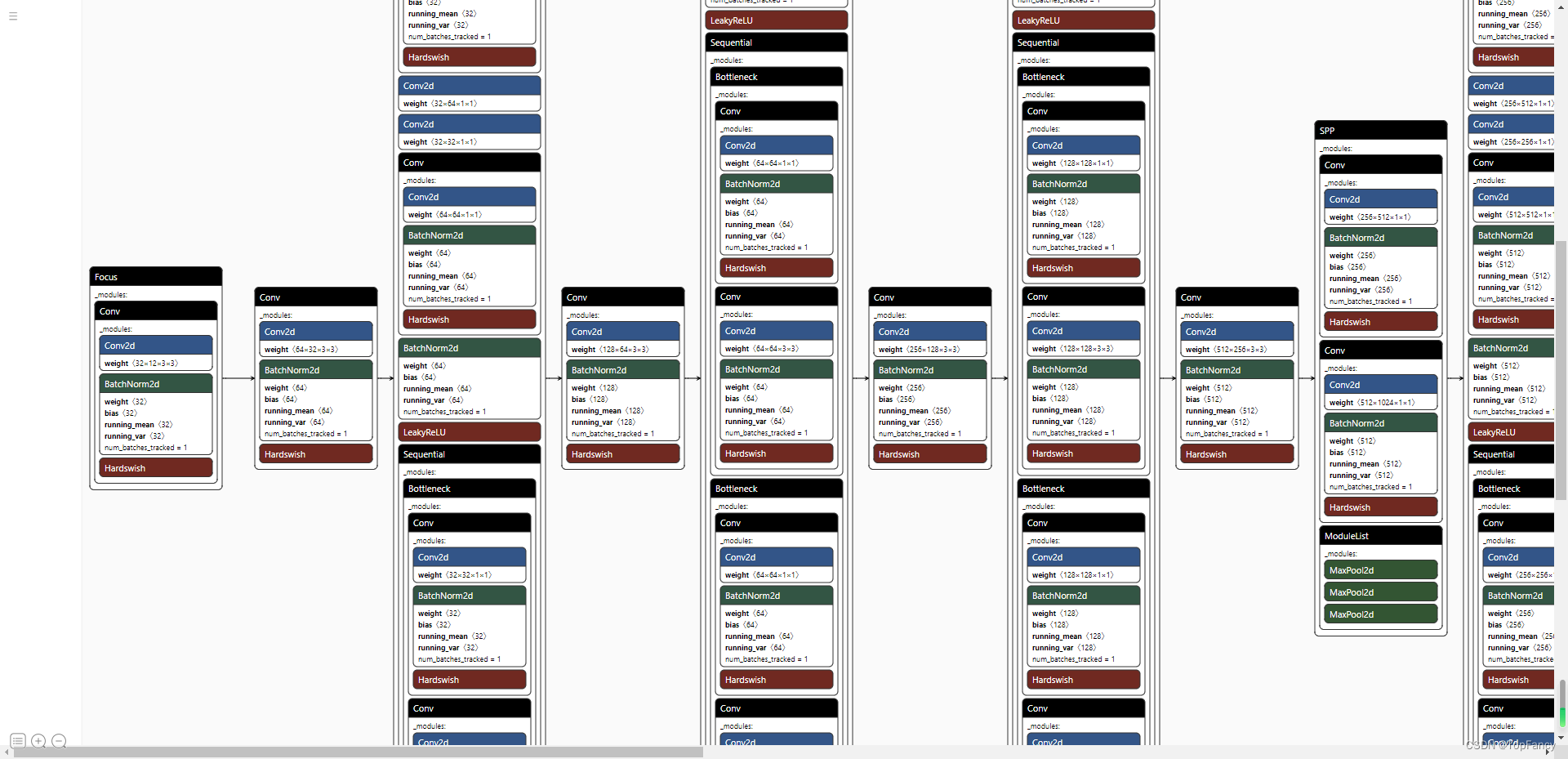

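Taking the web version as an example, the interface is very simple. Click Open Model and choose the model file you want to visualize. Here I use a YOLOv5 model for the demonstration.

Netron shows every layer of the network directly: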

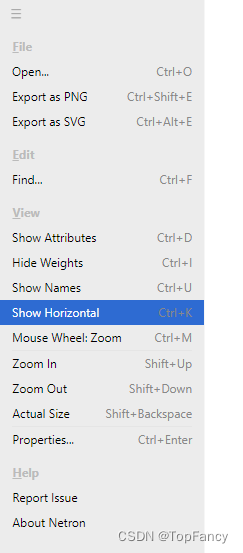

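If you prefer not to read the graph top to bottom, open the menu in the upper-left corner and switch to the horizontal layout.

The menu also includes options such as showing Attributes and showing Weights. Enable whichever ones you need.

Finally, you can export the network graph as an image file (PNG or SVG) for later use.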

3. Converting a .pt Model to ONNX

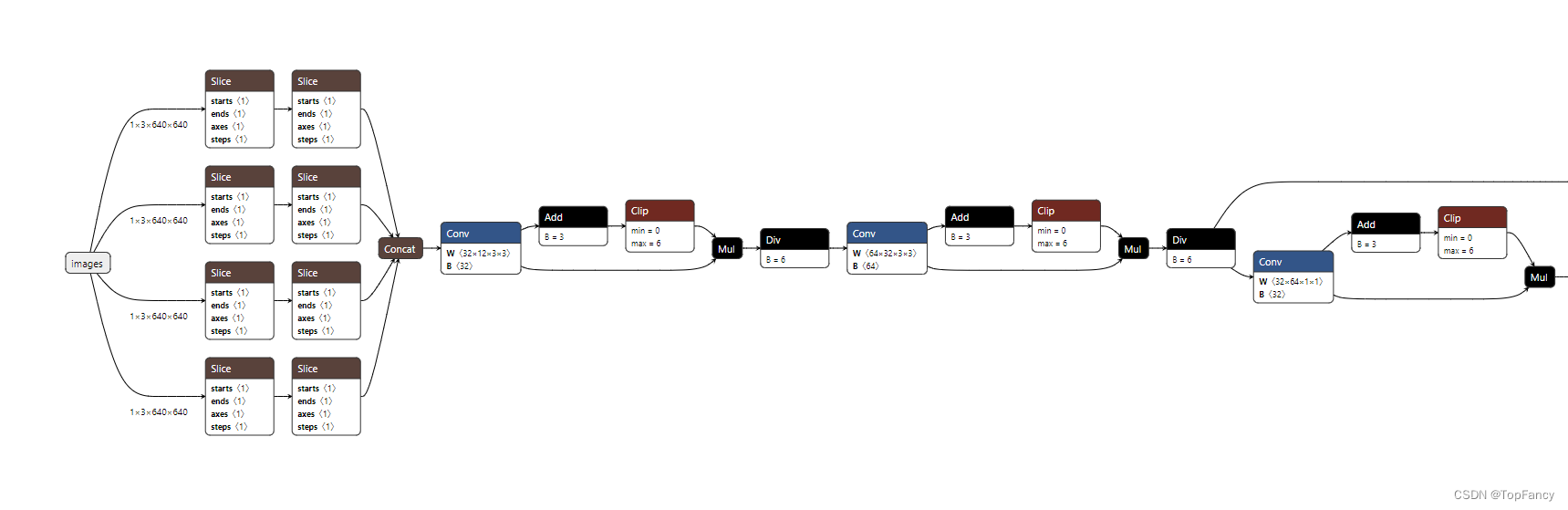

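Netron's support for .pt models is limited. As the figure shows, the same YOLOv5 model looks like this when opened as a .pt file.

This view is not very intuitive, so it is better to convert the .pt model to ONNX before viewing it. The conversion code here is adapted from the official YOLOv5 export script.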

First, install the onnx package in the current environment with pip:

    pip install onnx

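Then run the script below to do the conversion. It imports `models` and `utils` from the YOLOv5 repository, so run it from inside that repository (see the usage line in its docstring). Besides ONNX, it also attempts TorchScript and CoreML exports. Each step is wrapped in try/except, so a missing package such as coremltools only prints a failure message. The script accepts three arguments, all with defaults:

- `--weights`: path to the .pt model

- `--img-size`: input image size, either one value for a square input or a height and a width

- `--batch-size`: batch size, usually left at the default of 1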
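The export script handles these arguments in two ways that are easy to miss. First, a single `--img-size` value is duplicated into `[height, width]`. Second, each side is then rounded up to a multiple of the model's largest stride by YOLOv5's `check_img_size`. Also, Python's argparse accepts unambiguous option prefixes, which is why the docstring's `--img` and `--batch` work. The sketch below reproduces this behavior with the standard library only. `parse_export_args` and `round_up_to_stride` are hypothetical helper names. The stride of 32 is an assumption matching YOLOv5s, and `round_up_to_stride` is a stand-in for `check_img_size`, not its actual code.

```python
import argparse
import math


def parse_export_args(argv):
    """Parse the three export flags the same way the export script does."""
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', type=str, default='./yolov5s.pt', help='weights path')
    parser.add_argument('--img-size', nargs='+', type=int, default=[640, 640], help='image size')  # height, width
    parser.add_argument('--batch-size', type=int, default=1, help='batch size')
    opt = parser.parse_args(argv)
    # A single value such as "--img-size 320" becomes [320, 320]
    # (equivalent to the script's one-line "*= 2 if ... else 1")
    if len(opt.img_size) == 1:
        opt.img_size *= 2
    return opt


def round_up_to_stride(size, stride=32):
    """Hypothetical stand-in for YOLOv5's check_img_size: round up to a multiple of stride."""
    return math.ceil(size / stride) * stride


if __name__ == '__main__':
    # "--img" and "--batch" are accepted as abbreviations of "--img-size" and "--batch-size"
    opt = parse_export_args(['--weights', 'yolov5s.pt', '--img', '300', '--batch', '1'])
    print(opt.img_size)
    print([round_up_to_stride(x) for x in opt.img_size])
```

With `--img 300`, the dummy input the script traces would therefore be `(1, 3, 320, 320)`, and that is the input shape shown on the `images` node in Netron.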

    """Exports a YOLOv5 *.pt model to ONNX and TorchScript formats

    Usage:
        $ export PYTHONPATH="$PWD" && python models/export.py --weights ./weights/yolov5s.pt --img 640 --batch 1
    """

    # First run: pip install onnx
    import argparse
    import sys
    import time

    sys.path.append('./')  # to run '$ python *.py' files in subdirectories
    sys.path.append('../')

    import torch
    import torch.nn as nn

    import models
    from models.experimental import attempt_load
    from utils.activations import Hardswish
    from utils.general import set_logging, check_img_size

    if __name__ == '__main__':
        parser = argparse.ArgumentParser()
        parser.add_argument('--weights', type=str, default='./yolov5s.pt', help='weights path')  # from yolov5/models/
        parser.add_argument('--img-size', nargs='+', type=int, default=[640, 640], help='image size')  # height, width
        parser.add_argument('--batch-size', type=int, default=1, help='batch size')
        opt = parser.parse_args()
        opt.img_size *= 2 if len(opt.img_size) == 1 else 1  # expand
        print(opt)
        set_logging()
        t = time.time()

        # Load PyTorch model
        model = attempt_load(opt.weights, map_location=torch.device('cpu'))  # load FP32 model
        labels = model.names

        # Checks
        gs = int(max(model.stride))  # grid size (max stride)
        opt.img_size = [check_img_size(x, gs) for x in opt.img_size]  # verify img_size are gs-multiples

        # Input
        img = torch.zeros(opt.batch_size, 3, *opt.img_size)  # image size(1,3,320,192) iDetection

        # Update model
        for k, m in model.named_modules():
            m._non_persistent_buffers_set = set()  # pytorch 1.6.0 compatibility
            if isinstance(m, models.common.Conv) and isinstance(m.act, nn.Hardswish):
                m.act = Hardswish()  # assign activation
            # if isinstance(m, models.yolo.Detect):
            #     m.forward = m.forward_export  # assign forward (optional)
        model.model[-1].export = True  # set Detect() layer export=True
        y = model(img)  # dry run

        # TorchScript export
        try:
            print('\nStarting TorchScript export with torch %s...' % torch.__version__)
            f = opt.weights.replace('.pt', '.torchscript.pt')  # filename
            ts = torch.jit.trace(model, img)
            ts.save(f)
            print('TorchScript export success, saved as %s' % f)
        except Exception as e:
            print('TorchScript export failure: %s' % e)

        # ONNX export
        try:
            import onnx

            print('\nStarting ONNX export with onnx %s...' % onnx.__version__)
            f = opt.weights.replace('.pt', '.onnx')  # filename
            torch.onnx.export(model, img, f, verbose=False, opset_version=12, input_names=['images'],
                              output_names=['classes', 'boxes'] if y is None else ['output'])

            # Checks
            onnx_model = onnx.load(f)  # load onnx model
            onnx.checker.check_model(onnx_model)  # check onnx model
            # print(onnx.helper.printable_graph(onnx_model.graph))  # print a human readable model
            print('ONNX export success, saved as %s' % f)
        except Exception as e:
            print('ONNX export failure: %s' % e)

        # CoreML export
        try:
            import coremltools as ct

            print('\nStarting CoreML export with coremltools %s...' % ct.__version__)
            # convert model from torchscript and apply pixel scaling as per detect.py
            model = ct.convert(ts, inputs=[ct.ImageType(name='image', shape=img.shape, scale=1 / 255.0, bias=[0, 0, 0])])
            f = opt.weights.replace('.pt', '.mlmodel')  # filename
            model.save(f)
            print('CoreML export success, saved as %s' % f)
        except Exception as e:
            print('CoreML export failure: %s' % e)

        # Finish
        print('\nExport complete (%.2fs). Visualize with https://github.com/lutzroeder/netron.' % (time.time() - t))
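The script sets `model.model[-1].export = True`. In the YOLOv5 version it is based on, this makes the exported graph end at the raw outputs of the three detection heads rather than at decoded boxes. To know which output shapes to look for in Netron, you can compute them from the input size. The sketch below assumes YOLOv5s defaults that the script does not spell out: strides of 8, 16 and 32, 3 anchors per head, and the 80 COCO classes (4 box values, 1 objectness score and 80 class scores per anchor). `detect_output_shapes` and `total_predictions` are hypothetical helper names.

```python
def detect_output_shapes(img_h, img_w, batch=1, strides=(8, 16, 32), anchors=3, num_classes=80):
    """Return the expected shape of each Detect-head output as
    (batch, anchors, grid_h, grid_w, 5 + num_classes).

    Assumes img_h and img_w are already multiples of the largest stride,
    which the export script enforces with check_img_size.
    """
    values_per_anchor = num_classes + 5  # x, y, w, h, objectness, then one score per class
    # Each head predicts on a grid downsampled from the input by its stride
    return [(batch, anchors, img_h // s, img_w // s, values_per_anchor) for s in strides]


def total_predictions(shapes):
    """Count anchor predictions across all heads (anchors * grid_h * grid_w per head)."""
    return sum(a * gh * gw for _, a, gh, gw, _ in shapes)


if __name__ == '__main__':
    shapes = detect_output_shapes(640, 640)
    for shape in shapes:
        print(shape)
    print(total_predictions(shapes))
```

For a 640x640 input this gives (1, 3, 80, 80, 85), (1, 3, 40, 40, 85) and (1, 3, 20, 20, 85), or 25200 predictions in total. If your model was trained on a different number of classes, pass that value as `num_classes`.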
