K8S: Deploying Nacos

Original source: K8S--部署Nacos (CSDN blog)

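Introduction

This article describes how to deploy Nacos on K8S. The Nacos version is 2.2.3.

Deployment approach

To keep things simple, this article uses a local PV plus a ConfigMap to deploy a single-node Nacos in embedded mode, and exposes the ports via NodePort.

A production environment could instead use NFS storage, a Nacos cluster backed by MySQL, and Ingress to expose the ports.

Official reference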

https://github.com/nacos-group/nacos-k8s/blob/master/deploy/nacos/nacos-pvc-nfs.yaml

Deployment result

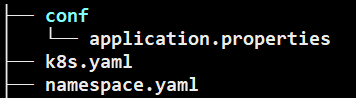

My working directory: /work/devops/k8s/app/nacos

1. Create the namespace

Create a namespace.yaml file with the following content:

    # Create the namespace
    apiVersion: v1
    kind: Namespace
    metadata:
      name: middle
      labels:
        name: middle

Create the namespace:

    kubectl apply -f namespace.yaml

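2. Create the configuration with a ConfigMap

A ConfigMap is used here because a PV is normally mounted as a whole directory, whereas a ConfigMap entry can be mounted as an individual configuration file. (A hostPath volume is another way to mount a single file.)

1. Modify the configuration

First download the Nacos release archive from GitHub.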

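Modify the configuration file (nacos-server-2.2.3\nacos\conf\application.properties). The settings changed are nacos.core.auth.enabled, nacos.core.auth.server.identity.key, nacos.core.auth.server.identity.value and nacos.core.auth.plugin.nacos.token.secret.key.

Note: the first setting must be changed to true; the values of the others are arbitrary (the last one must be longer than 32 characters, otherwise an error is reported).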
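Since the secret key only has to be long enough, it can be generated rather than typed by hand. The following is a minimal sketch, assuming a Base64 string built from random bytes is acceptable (the config file describes the value as a Base64 string). `generate_token_secret` is a hypothetical helper and not part of Nacos.

```python
import base64
import secrets


def generate_token_secret(num_bytes: int = 48) -> str:
    """Return a Base64 string built from num_bytes random bytes.

    Hypothetical helper: 48 random bytes encode to 64 characters,
    which is comfortably above the 32-character minimum noted above.
    """
    if num_bytes < 32:
        raise ValueError("use at least 32 random bytes")
    return base64.b64encode(secrets.token_bytes(num_bytes)).decode("ascii")


# Print a line that could be pasted into application.properties.
print("nacos.core.auth.plugin.nacos.token.secret.key=" + generate_token_secret())
```

Each run prints a different key, so the value should be generated once and then kept with the rest of the configuration.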

The modified configuration file is as follows:

#

# Copyright 1999-2021 Alibaba Group Holding Ltd.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

#*************** Spring Boot Related Configurations ***************#

### Default web context path:

server.servlet.contextPath=/nacos

### Include message field

server.error.include-message=ALWAYS

### Default web server port:

server.port=8848

#*************** Network Related Configurations ***************#

### If prefer hostname over ip for Nacos server addresses in cluster.conf:

# nacos.inetutils.prefer-hostname-over-ip=false

### Specify local server's IP:

# nacos.inetutils.ip-address=

#*************** Config Module Related Configurations ***************#

### If use MySQL as datasource:

### Deprecated configuration property, it is recommended to use `spring.sql.init.platform` replaced.

# spring.datasource.platform=mysql

# spring.sql.init.platform=mysql

### Count of DB:

# db.num=1

### Connect URL of DB:

# db.url.0=jdbc:mysql://127.0.0.1:3306/nacos?characterEncoding=utf8&connectTimeout=1000&socketTimeout=3000&autoReconnect=true&useUnicode=true&useSSL=false&serverTimezone=UTC

# db.user.0=nacos

# db.password.0=nacos

### Connection pool configuration: hikariCP

db.pool.config.connectionTimeout=30000

db.pool.config.validationTimeout=10000

db.pool.config.maximumPoolSize=20

db.pool.config.minimumIdle=2

#*************** Naming Module Related Configurations ***************#

### If enable data warmup. If set to false, the server would accept request without local data preparation:

# nacos.naming.data.warmup=true

### If enable the instance auto expiration, kind like of health check of instance:

# nacos.naming.expireInstance=true

### Add in 2.0.0

### The interval to clean empty service, unit: milliseconds.

# nacos.naming.clean.empty-service.interval=60000

### The expired time to clean empty service, unit: milliseconds.

# nacos.naming.clean.empty-service.expired-time=60000

### The interval to clean expired metadata, unit: milliseconds.

# nacos.naming.clean.expired-metadata.interval=5000

### The expired time to clean metadata, unit: milliseconds.

# nacos.naming.clean.expired-metadata.expired-time=60000

### The delay time before push task to execute from service changed, unit: milliseconds.

# nacos.naming.push.pushTaskDelay=500

### The timeout for push task execute, unit: milliseconds.

# nacos.naming.push.pushTaskTimeout=5000

### The delay time for retrying failed push task, unit: milliseconds.

# nacos.naming.push.pushTaskRetryDelay=1000

### Since 2.0.3

### The expired time for inactive client, unit: milliseconds.

# nacos.naming.client.expired.time=180000

#*************** CMDB Module Related Configurations ***************#

### The interval to dump external CMDB in seconds:

# nacos.cmdb.dumpTaskInterval=3600

### The interval of polling data change event in seconds:

# nacos.cmdb.eventTaskInterval=10

### The interval of loading labels in seconds:

# nacos.cmdb.labelTaskInterval=300

### If turn on data loading task:

# nacos.cmdb.loadDataAtStart=false

#*************** Metrics Related Configurations ***************#

### Metrics for prometheus

#management.endpoints.web.exposure.include=*

### Metrics for elastic search

management.metrics.export.elastic.enabled=false

#management.metrics.export.elastic.host=http://localhost:9200

### Metrics for influx

management.metrics.export.influx.enabled=false

#management.metrics.export.influx.db=springboot

#management.metrics.export.influx.uri=http://localhost:8086

#management.metrics.export.influx.auto-create-db=true

#management.metrics.export.influx.consistency=one

#management.metrics.export.influx.compressed=true

#*************** Access Log Related Configurations ***************#

### If turn on the access log:

server.tomcat.accesslog.enabled=true

### The access log pattern:

server.tomcat.accesslog.pattern=%h %l %u %t "%r" %s %b %D %{User-Agent}i %{Request-Source}i

### The directory of access log:

server.tomcat.basedir=file:.

#*************** Access Control Related Configurations ***************#

### If enable spring security, this option is deprecated in 1.2.0:

#spring.security.enabled=false

### The ignore urls of auth

nacos.security.ignore.urls=/,/error,/**/*.css,/**/*.js,/**/*.html,/**/*.map,/**/*.svg,/**/*.png,/**/*.ico,/console-ui/public/**,/v1/auth/**,/v1/console/health/**,/actuator/**,/v1/console/server/**

### The auth system to use, currently only 'nacos' and 'ldap' is supported:

nacos.core.auth.system.type=nacos

### If turn on auth system:

#nacos.core.auth.enabled=false

nacos.core.auth.enabled=true

### Turn on/off caching of auth information. By turning on this switch, the update of auth information would have a 15 seconds delay.

nacos.core.auth.caching.enabled=true

### Since 1.4.1, Turn on/off white auth for user-agent: nacos-server, only for upgrade from old version.

nacos.core.auth.enable.userAgentAuthWhite=false

### Since 1.4.1, worked when nacos.core.auth.enabled=true and nacos.core.auth.enable.userAgentAuthWhite=false.

### The two properties is the white list for auth and used by identity the request from other server.

#nacos.core.auth.server.identity.key=

#nacos.core.auth.server.identity.value=

nacos.core.auth.server.identity.key=exampleKey

nacos.core.auth.server.identity.value=exampleValue

### worked when nacos.core.auth.system.type=nacos

### The token expiration in seconds:

nacos.core.auth.plugin.nacos.token.cache.enable=false

nacos.core.auth.plugin.nacos.token.expire.seconds=18000

### The default token (Base64 String):

#nacos.core.auth.plugin.nacos.token.secret.key=

nacos.core.auth.plugin.nacos.token.secret.key=Ho9pJlDFurhga1847fhj3jtlsvc18jguehfjgkhh17365jdf8

### worked when nacos.core.auth.system.type=ldap,{0} is Placeholder,replace login username

#nacos.core.auth.ldap.url=ldap://localhost:389

#nacos.core.auth.ldap.basedc=dc=example,dc=org

#nacos.core.auth.ldap.userDn=cn=admin,${nacos.core.auth.ldap.basedc}

#nacos.core.auth.ldap.password=admin

#nacos.core.auth.ldap.userdn=cn={0},dc=example,dc=org

#nacos.core.auth.ldap.filter.prefix=uid

#nacos.core.auth.ldap.case.sensitive=true

#*************** Istio Related Configurations ***************#

### If turn on the MCP server:

nacos.istio.mcp.server.enabled=false

#*************** Core Related Configurations ***************#

### set the WorkerID manually

# nacos.core.snowflake.worker-id=

### Member-MetaData

# nacos.core.member.meta.site=

# nacos.core.member.meta.adweight=

# nacos.core.member.meta.weight=

### MemberLookup

### Addressing pattern category, If set, the priority is highest

# nacos.core.member.lookup.type=[file,address-server]

## Set the cluster list with a configuration file or command-line argument

# nacos.member.list=192.168.16.101:8847?raft_port=8807,192.168.16.101?raft_port=8808,192.168.16.101:8849?raft_port=8809

## for AddressServerMemberLookup

# Maximum number of retries to query the address server upon initialization

# nacos.core.address-server.retry=5

## Server domain name address of [address-server] mode

# address.server.domain=jmenv.tbsite.net

## Server port of [address-server] mode

# address.server.port=8080

## Request address of [address-server] mode

# address.server.url=/nacos/serverlist

#*************** JRaft Related Configurations ***************#

### Sets the Raft cluster election timeout, default value is 5 second

# nacos.core.protocol.raft.data.election_timeout_ms=5000

### Sets the amount of time the Raft snapshot will execute periodically, default is 30 minute

# nacos.core.protocol.raft.data.snapshot_interval_secs=30

### raft internal worker threads

# nacos.core.protocol.raft.data.core_thread_num=8

### Number of threads required for raft business request processing

# nacos.core.protocol.raft.data.cli_service_thread_num=4

### raft linear read strategy. Safe linear reads are used by default, that is, the Leader tenure is confirmed by heartbeat

# nacos.core.protocol.raft.data.read_index_type=ReadOnlySafe

### rpc request timeout, default 5 seconds

# nacos.core.protocol.raft.data.rpc_request_timeout_ms=5000

#*************** Distro Related Configurations ***************#

### Distro data sync delay time, when sync task delayed, task will be merged for same data key. Default 1 second.

# nacos.core.protocol.distro.data.sync.delayMs=1000

### Distro data sync timeout for one sync data, default 3 seconds.

# nacos.core.protocol.distro.data.sync.timeoutMs=3000

### Distro data sync retry delay time when sync data failed or timeout, same behavior with delayMs, default 3 seconds.

# nacos.core.protocol.distro.data.sync.retryDelayMs=3000

### Distro data verify interval time, verify synced data whether expired for a interval. Default 5 seconds.

# nacos.core.protocol.distro.data.verify.intervalMs=5000

### Distro data verify timeout for one verify, default 3 seconds.

# nacos.core.protocol.distro.data.verify.timeoutMs=3000

### Distro data load retry delay when load snapshot data failed, default 30 seconds.

# nacos.core.protocol.distro.data.load.retryDelayMs=30000

### enable to support prometheus service discovery

#nacos.prometheus.metrics.enabled=true

### Since 2.3

#*************** Grpc Configurations ***************#

## sdk grpc(between nacos server and client) configuration

## Sets the maximum message size allowed to be received on the server.

#nacos.remote.server.grpc.sdk.max-inbound-message-size=10485760

## Sets the time(milliseconds) without read activity before sending a keepalive ping. The typical default is two hours.

#nacos.remote.server.grpc.sdk.keep-alive-time=7200000

## Sets a time(milliseconds) waiting for read activity after sending a keepalive ping. Defaults to 20 seconds.

#nacos.remote.server.grpc.sdk.keep-alive-timeout=20000

## Sets a time(milliseconds) that specify the most aggressive keep-alive time clients are permitted to configure. The typical default is 5 minutes

#nacos.remote.server.grpc.sdk.permit-keep-alive-time=300000

## cluster grpc(inside the nacos server) configuration

#nacos.remote.server.grpc.cluster.max-inbound-message-size=10485760

## Sets the time(milliseconds) without read activity before sending a keepalive ping. The typical default is two hours.

#nacos.remote.server.grpc.cluster.keep-alive-time=7200000

## Sets a time(milliseconds) waiting for read activity after sending a keepalive ping. Defaults to 20 seconds.

#nacos.remote.server.grpc.cluster.keep-alive-timeout=20000

## Sets a time(milliseconds) that specify the most aggressive keep-alive time clients are permitted to configure. The typical default is 5 minutes

#nacos.remote.server.grpc.cluster.permit-keep-alive-time=300000

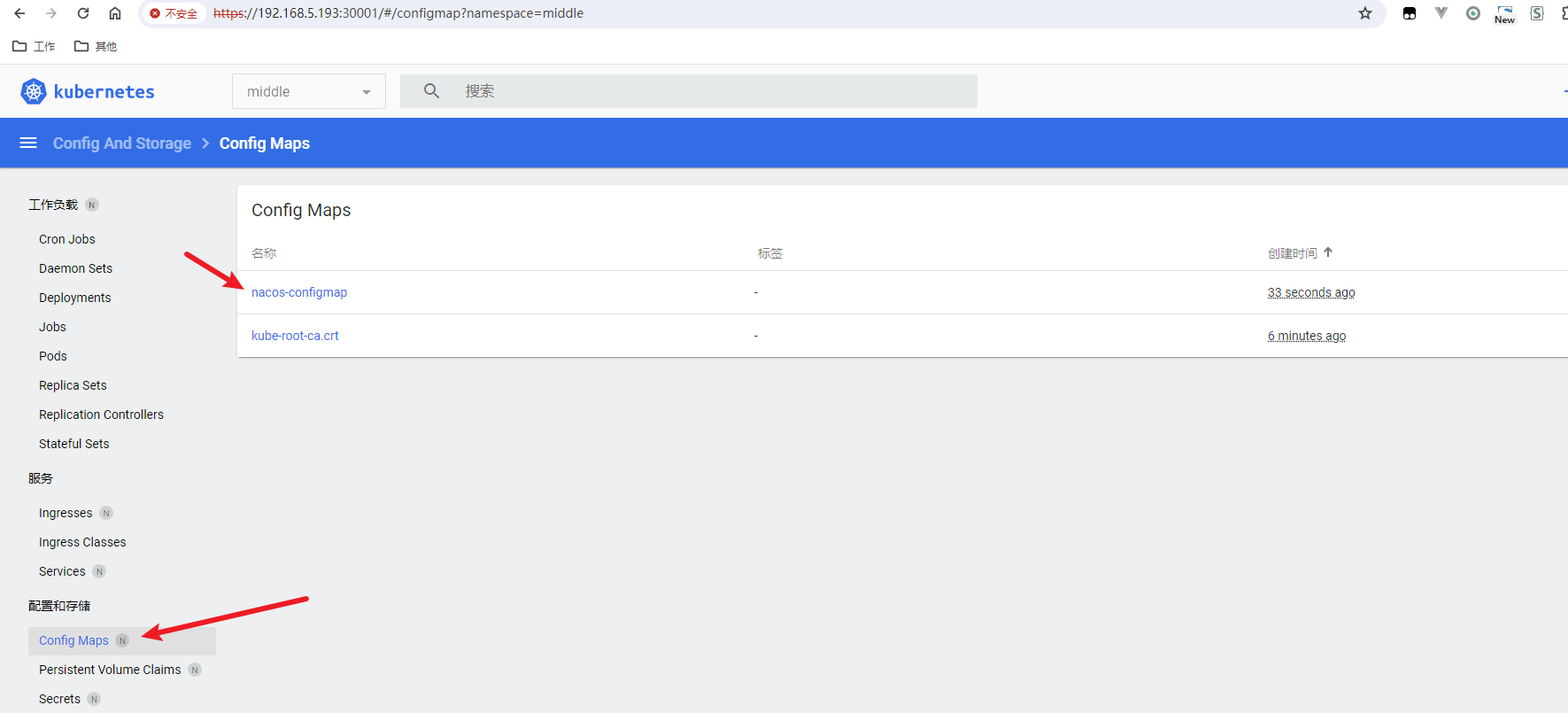
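Before turning the file into a ConfigMap, it may be worth checking that the four edited settings are really in place. The sketch below is only an illustration: it uses a deliberately simplified parser (plain `key=value` lines and `#` comments, not the full Java properties syntax), and both function names are hypothetical.

```python
def parse_properties(text: str) -> dict:
    """Parse simple key=value lines, skipping blank lines and '#' comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        # Split at the first '=' only, so values may contain '=' themselves.
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def check_auth_settings(props: dict) -> list:
    """Return a list of problems; an empty list means the checks passed."""
    problems = []
    if props.get("nacos.core.auth.enabled") != "true":
        problems.append("nacos.core.auth.enabled is not true")
    for key in ("nacos.core.auth.server.identity.key",
                "nacos.core.auth.server.identity.value"):
        if not props.get(key):
            problems.append(key + " is empty")
    secret = props.get("nacos.core.auth.plugin.nacos.token.secret.key", "")
    if len(secret) <= 32:
        problems.append("token secret key should be longer than 32 characters")
    return problems


# Small inline sample; in practice the text would be read from
# conf/application.properties.
sample = """
#nacos.core.auth.enabled=false
nacos.core.auth.enabled=true
nacos.core.auth.server.identity.key=exampleKey
nacos.core.auth.server.identity.value=exampleValue
nacos.core.auth.plugin.nacos.token.secret.key=short
"""
for problem in check_auth_settings(parse_properties(sample)):
    print("WARNING:", problem)
```

With the inline sample, only the secret key is flagged, because `short` is well under the required length.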

2. Generate the ConfigMap

Place the file from the previous step at this path: /work/devops/k8s/app/nacos/conf/application.properties

    kubectl create configmap nacos-configmap --namespace=middle --from-file=conf/application.properties

Result:

    configmap/nacos-configmap created

Check the result from the command line:

    kubectl get configmap -n middle

The ConfigMap can also be viewed in the dashboard.

3. Create the K8S configuration

Create a k8s.yaml file with the following content:

    # Create storage with a PV
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv-volume-nacos
      namespace: middle
      labels:
        type: local
        pv-name: pv-volume-nacos
    spec:
      storageClassName: manual-nacos
      capacity:
        storage: 1Gi
      accessModes:
        - ReadWriteOnce
      hostPath:
        path: "/work/devops/k8s/app/nacos/pv"
    ---
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: pv-claim-nacos
      namespace: middle
    spec:
      storageClassName: manual-nacos
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 1Gi
      selector:
        matchLabels:
          pv-name: pv-volume-nacos

    # Create the Nacos container
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nacos-standalone
      namespace: middle
    spec:
      replicas: 1 # In standalone mode with more than one replica, registrations are spread across replicas; the web console shows only one replica's services, and refreshing the page can switch replicas
      selector:
        matchLabels:
          app: nacos-standalone
      template:
        metadata:
          labels:
            app: nacos-standalone
          annotations:
            pod.alpha.kubernetes.io/initialized: "true"
        spec:
          tolerations: # allow scheduling onto the master node
            - key: node-role.kubernetes.io/master
              operator: Exists
          initContainers:
            - name: peer-finder-plugin-install
              image: nacos/nacos-peer-finder-plugin:1.1
              imagePullPolicy: Always
              volumeMounts:
                - mountPath: /home/nacos/plugins/peer-finder
                  name: volume
                  subPath: peer-finder
          containers:
            - name: nacos
              # Note: the introduction mentions Nacos 2.2.3, while this tag pulls v2.3.0
              image: nacos/nacos-server:v2.3.0
              imagePullPolicy: IfNotPresent
              env:
                - name: TZ
                  value: Asia/Shanghai
                - name: MODE
                  value: standalone
                - name: EMBEDDED_STORAGE
                  value: embedded
              volumeMounts:
                - name: volume
                  mountPath: /home/nacos/plugins/peer-finder
                  subPath: peer-finder
                - name: volume
                  mountPath: /home/nacos/data
                  subPath: data
                - name: volume
                  mountPath: /home/nacos/logs
                  subPath: logs
                - name: config-map
                  mountPath: /home/nacos/conf/application.properties
                  subPath: application.properties
              ports:
                - containerPort: 8848
                  name: client
                - containerPort: 9848
                  name: client-rpc
                - containerPort: 9849
                  name: raft-rpc
                - containerPort: 7848
                  name: old-raft-rpc
          volumes:
            - name: volume
              persistentVolumeClaim:
                claimName: pv-claim-nacos
            - name: config-map
              configMap:
                name: nacos-configmap
          affinity:
            podAntiAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
                - labelSelector:
                    matchExpressions:
                      - key: "app"
                        operator: In
                        values:
                          - nacos-standalone
                  topologyKey: "kubernetes.io/hostname"

---

apiVersion: v1

kind: Service

metadata:

namespace: middle

name: nacos-service

labels:

app: nacos-service

spec:

type: NodePort

ports:

- port: 8848

name: server-regist

targetPort: 8848

nodePort: 30006

- port: 9848 # 必须开放出去,否则开发和互联网环境无法访问,这是rpc 服务调用,程序默认 端口 偏移 1000

name: server-grpc

targetPort: 9848

nodePort: 30007

- port: 9849 # 必须开放出去,否则开发和互联网环境无法访问,这是rpc 服务调用,程序默认 端口 偏移 1000

name: server-grpc-sync

targetPort: 9849

nodePort: 30008

selector:

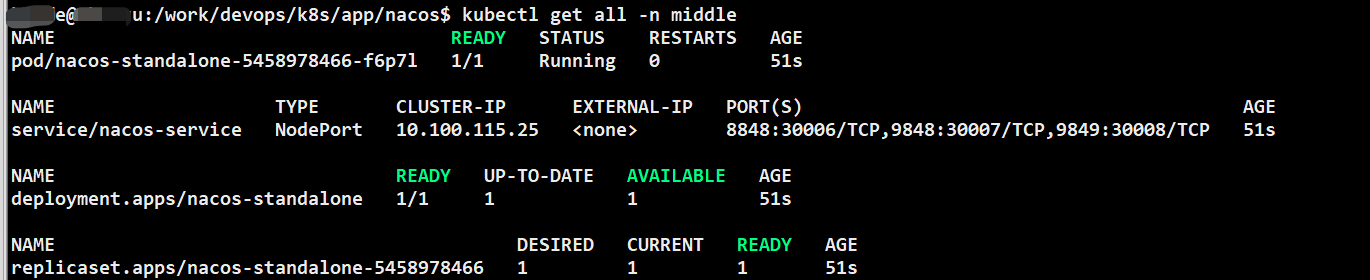

app: nacos-standalone4.启动Nacos

    kubectl apply -f k8s.yaml

Result:

    persistentvolume/pv-volume-nacos created
    persistentvolumeclaim/pv-claim-nacos created
    deployment.apps/nacos-standalone created
    service/nacos-service created

Check the result from the command line:

    kubectl get all -n middle

Result:

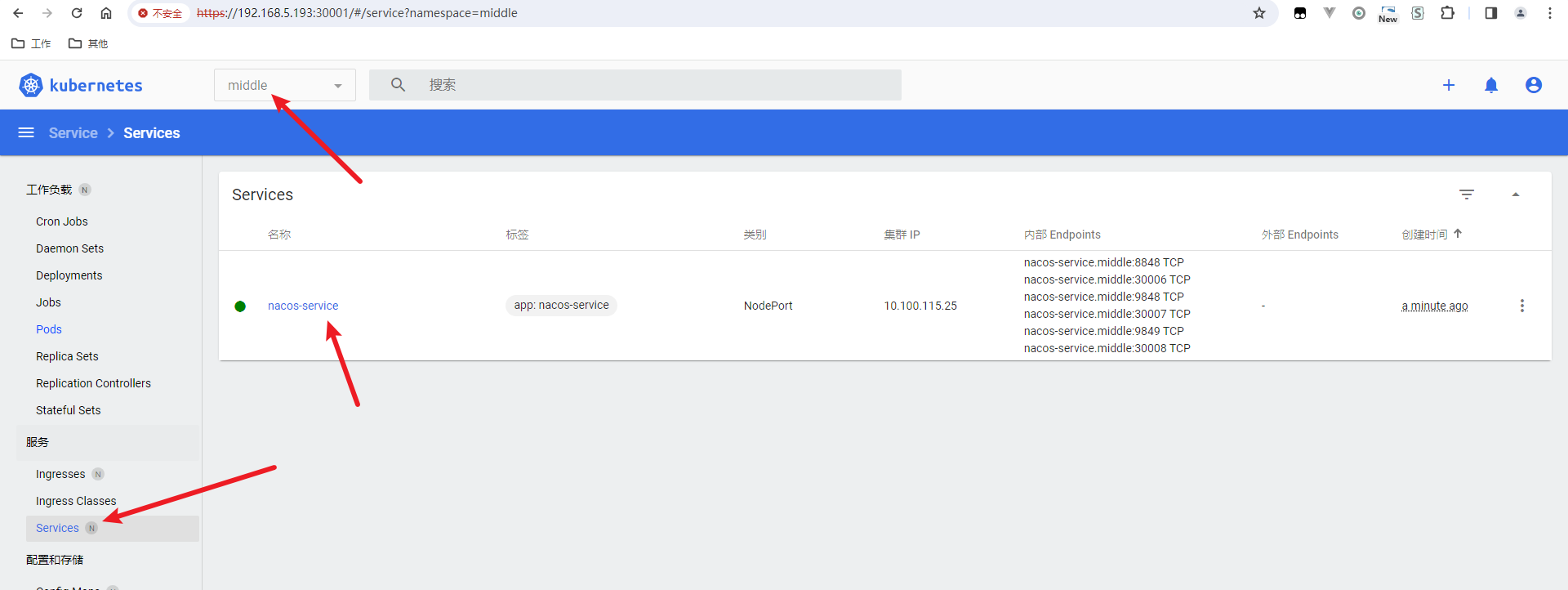
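Instead of re-running `kubectl get all` until the pod shows as ready, a script could wait for the Deployment rollout to finish. This is a minimal sketch, assuming `kubectl` is on the PATH and already configured for the cluster; the two helper functions are hypothetical wrappers around the standard `kubectl rollout status` command.

```python
import subprocess


def rollout_status_cmd(deployment: str, namespace: str, timeout_s: int) -> list:
    """Build the kubectl command that waits for a Deployment rollout."""
    return [
        "kubectl", "rollout", "status", f"deployment/{deployment}",
        "-n", namespace, f"--timeout={timeout_s}s",
    ]


def wait_for_rollout(deployment: str, namespace: str, timeout_s: int = 180) -> bool:
    """Run the command; return True if the rollout completed in time."""
    result = subprocess.run(rollout_status_cmd(deployment, namespace, timeout_s))
    return result.returncode == 0
```

For the Deployment in this article, the call would be `wait_for_rollout("nacos-standalone", "middle")`.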

View the result in the dashboard:

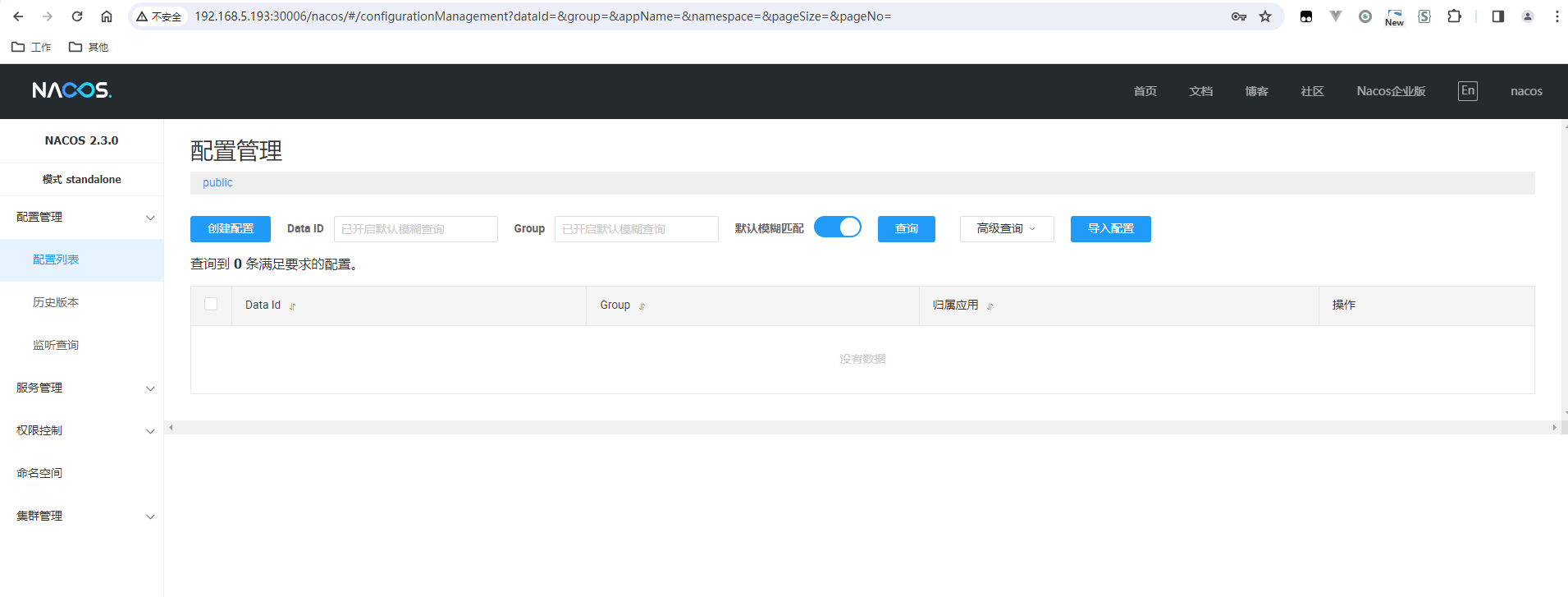

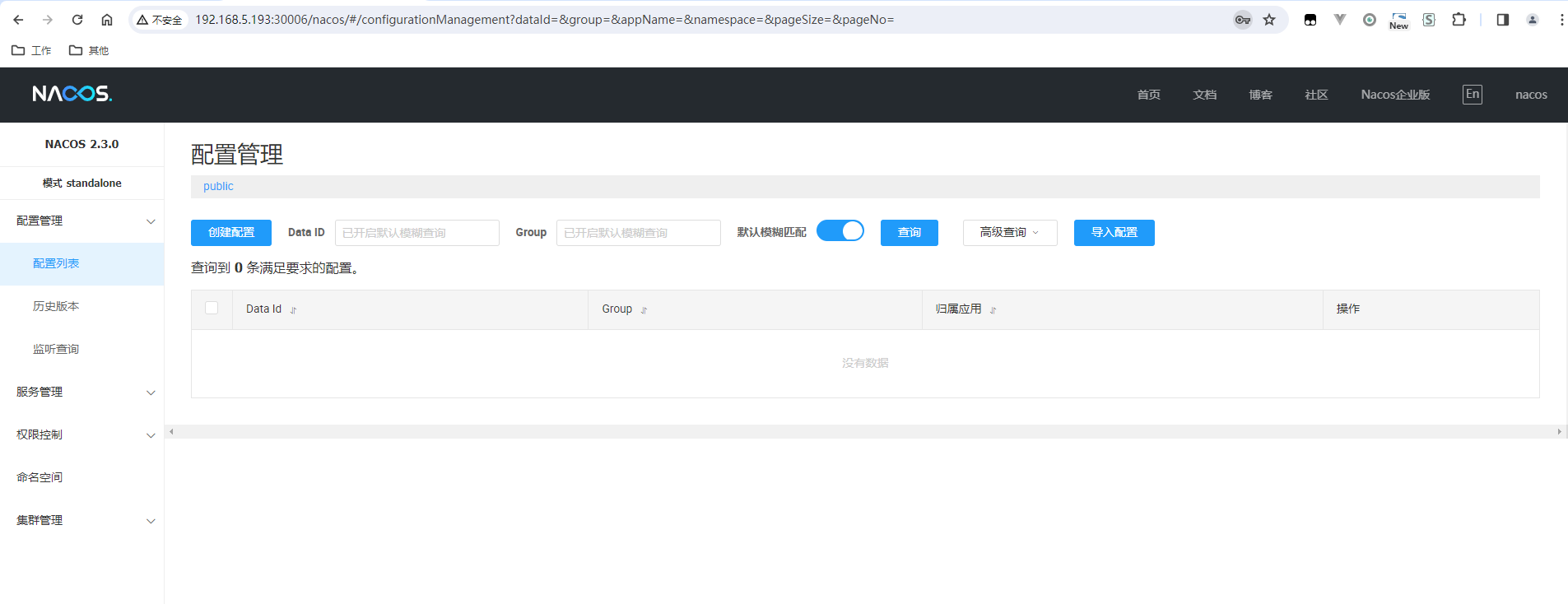

5. Access the Nacos page

Open: http://192.168.5.193:30006/nacos

On the page that opens, enter the default username and password (nacos/nacos) to log in.
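The console address combines a node IP with the NodePort mapped to 8848. For SDK clients outside the cluster there is one more thing to check: the comment in the Service says the gRPC port is the main port offset by 1000. The sketch below assumes a client applies that same offset to whatever port it is configured with; the helper names are hypothetical, and the NodePort values are copied from k8s.yaml.

```python
GRPC_CLIENT_OFFSET = 1000  # offset mentioned in the Service comment for port 9848

# Service port -> nodePort, copied from the Service in k8s.yaml.
NODE_PORTS = {8848: 30006, 9848: 30007, 9849: 30008}


def console_url(node_ip: str, http_node_port: int, context_path: str = "/nacos") -> str:
    """Build the console URL from a node IP and the HTTP NodePort."""
    return f"http://{node_ip}:{http_node_port}{context_path}"


def derived_grpc_port(server_port: int) -> int:
    """Port a client would try for gRPC if it adds the default offset."""
    return server_port + GRPC_CLIENT_OFFSET


print(console_url("192.168.5.193", NODE_PORTS[8848]))  # http://192.168.5.193:30006/nacos
print(derived_grpc_port(8848))                         # 9848, matches the in-cluster port
print(derived_grpc_port(NODE_PORTS[8848]), "expected,", NODE_PORTS[9848], "exposed")
```

Under that assumption, a client pointed at 30006 would look for gRPC on 31006 rather than the exposed 30007, so the NodePort mapping is worth verifying against the client version in use.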
