Anolis OS 8.8: Installing the NVIDIA GPU Driver, CUDA Toolkit, Container Runtime Support (containerd/Docker), and the Kubernetes GPU Device Plugin

Published: December 17, 2023

Installing the GPU driver and CUDA toolkit on Anolis OS 8.8

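I. Contents

1. Test environment

2. Install the GPU driver

3. Install the CUDA toolkit

4. Configure the container runtime

5. Install the NVIDIA plugin in the K8s cluster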

II. Test Environment

Operating system: Anolis OS 8.8

Kernel version: 5.10.134-13.an8.x86_64

GPU driver version: 525.147.05

CUDA version: V10.2.89

Internet access: required

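III. Installing the GPU Driver

3.1 Disable nouveau

The open-source nouveau driver must not be loaded when the NVIDIA driver is installed, so it is blacklisted first (the script's contents are listed at the end of this article).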

[root@localhost ~]# wget https://ops-publicread-1257137142.cos.ap-beijing.myqcloud.com/shell/disable_nouveau.sh

[root@localhost ~]# bash disable_nouveau.sh

[root@localhost ~]# lsmod | grep nouveau

# Reboot the server and check again; no output means nouveau is no longer loaded

[root@localhost ~]# reboot

[root@localhost ~]# lsmod | grep nouveau
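
When several GPU hosts are prepared with a script, the `lsmod | grep nouveau` check can be wrapped in a small function. This is a minimal sketch; the function name `nouveau_loaded` is hypothetical, and it reads `/proc/modules`, the file that `lsmod` itself formats.

```bash
#!/usr/bin/env bash
# Minimal sketch: succeed (exit status 0) if the nouveau module is loaded.
# lsmod formats /proc/modules, so reading that file gives the same answer;
# the optional first argument lets you point the check at another file.
nouveau_loaded() {
  local modules_file="${1:-/proc/modules}"
  # Each line of /proc/modules begins with the module name and a space.
  grep -q '^nouveau ' "$modules_file"
}

# Example, after the reboot:
# if nouveau_loaded; then echo "nouveau is still loaded"; else echo "nouveau is disabled"; fi
```

Anchoring the pattern at the start of the line avoids false matches on modules that merely list nouveau as a user (for example the `video` module).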

3.2 Download and install the GPU driver

Driver download page: https://www.nvidia.com/Download/Find.aspx?lang=en-us#

Note: select and download the driver that matches your GPU model!

[root@localhost src]# lspci |grep NVIDIA

13:00.0 3D controller: NVIDIA Corporation TU104GL [Tesla T4] (rev a1)
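
The model name needed on the download page is the bracketed part of the `lspci` line. A minimal sketch that extracts it; `nvidia_gpu_models` is a hypothetical helper, and it assumes the default `lspci` output format (no `-nn` or `-v` flags).

```bash
#!/usr/bin/env bash
# Minimal sketch: read plain `lspci` output on stdin and print the model name
# that lspci shows in square brackets for each NVIDIA device.
# Devices without a bracketed name (e.g. some audio functions) print nothing.
nvidia_gpu_models() {
  grep 'NVIDIA Corporation' | sed -n 's/.*\[\(.*\)\].*/\1/p'
}

# Example: lspci | nvidia_gpu_models
```

On the host above this prints `Tesla T4`, which is the product to select on the NVIDIA driver download page.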

[root@localhost src]# wget https://us.download.nvidia.cn/tesla/525.147.05/NVIDIA-Linux-x86_64-525.147.05.run

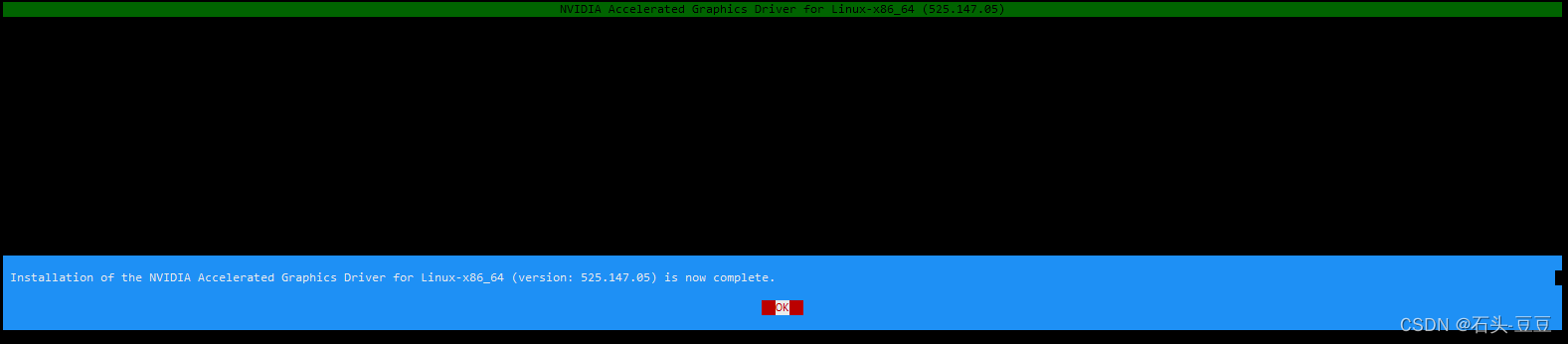

[root@localhost src]# bash NVIDIA-Linux-x86_64-525.147.05.run

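# Follow the prompts to complete the installation

The installation is complete when the check below shows output like the following.

Verify the installation: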

[root@localhost src]# nvidia-smi

    Tue Dec 12 10:16:35 2023
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 525.147.05   Driver Version: 525.147.05   CUDA Version: 12.0     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |                               |                      |               MIG M. |
    |===============================+======================+======================|
    |   0  Tesla T4            Off  | 00000000:13:00.0 Off |                    0 |
    | N/A   63C    P0    30W /  70W |      2MiB / 15360MiB |      5%      Default |
    |                               |                      |                  N/A |
    +-------------------------------+----------------------+----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                                  |
    |  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
    |        ID   ID                                                   Usage      |
    |=============================================================================|
    |  No running processes found                                                 |
    +-----------------------------------------------------------------------------+
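
The `CUDA Version: 12.0` field above is the newest CUDA release this 525.147.05 driver supports, not an installed toolkit; an older toolkit such as 10.2 only needs the driver to be at or above its minimum version. A minimal sketch of that check follows. The function name `version_ge` is hypothetical, and the 440.33 minimum for CUDA 10.2.89 is taken from NVIDIA's toolkit/driver compatibility table in the CUDA release notes.

```bash
#!/usr/bin/env bash
# Minimal sketch: succeed if dotted version $1 is greater than or equal to $2.
# `sort -V` orders version strings numerically field by field, so the smaller
# of the two sorts first.
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Per NVIDIA's compatibility table, the CUDA 10.2.89 toolkit needs Linux driver >= 440.33.
MIN_DRIVER_CUDA_10_2="440.33"

# Example on the GPU host:
# driver=$(nvidia-smi --query-gpu=driver_version --format=csv,noheader | head -n 1)
# version_ge "$driver" "$MIN_DRIVER_CUDA_10_2" && echo "driver $driver can run CUDA 10.2"
```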

IV. Installing the CUDA Toolkit

4.1 Download the required CUDA version from the official site

https://developer.nvidia.com/cuda-toolkit-archive

4.2 Install CUDA

[root@localhost src]# wget https://developer.download.nvidia.com/compute/cuda/10.2/Prod/local_installers/cuda_10.2.89_440.33.01_linux.run

[root@localhost src]# sh cuda_10.2.89_440.33.01_linux.run

# Loading the installer takes about 3 minutes

    End User License Agreement
    --------------------------

    Preface
    -------

    The Software License Agreement in Chapter 1 and the Supplement
    in Chapter 2 contain license terms and conditions that govern
    the use of NVIDIA software. By accepting this agreement, you
    agree to comply with all the terms and conditions applicable
    to the product(s) included herein.

    NVIDIA Driver

    Description

    This package contains the operating system driver and ...

    Do you accept the above EULA? (accept/decline/quit):
    accept

# Type accept and press Enter

    CUDA Installer
    - [ ] Driver
         [ ] 440.33.01
    + [X] CUDA Toolkit 10.2
      [X] CUDA Samples 10.2
      [X] CUDA Demo Suite 10.2
      [X] CUDA Documentation 10.2
      Options
      Install

    Up/Down: Move | Left/Right: Expand | 'Enter': Select | 'A': Advanced options

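# Deselect the Driver component (the newer 525.147.05 driver is already installed), then select Install to continue

4.3 Set the CUDA environment variables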

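# Single quotes keep $PATH and $LD_LIBRARY_PATH from being expanded when the lines are written, so they are evaluated at login instead

[root@localhost ~]# echo 'export PATH=/usr/local/cuda/bin:$PATH' >> /etc/profile

[root@localhost ~]# echo 'export LD_LIBRARY_PATH=/usr/local/cuda/lib64${LD_LIBRARY_PATH:+:$LD_LIBRARY_PATH}' >> /etc/profile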

[root@localhost ~]# source /etc/profile

[root@localhost ~]# nvcc --version

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2019 NVIDIA Corporation

Built on Wed_Oct_23_19:24:38_PDT_2019

Cuda compilation tools, release 10.2, V10.2.89
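
Each run of the `echo ... >> /etc/profile` commands appends another copy of the same lines. If these steps are scripted and may run more than once, a guard such as the hypothetical `append_once` helper below (a minimal sketch) keeps `/etc/profile` free of duplicates.

```bash
#!/usr/bin/env bash
# Minimal sketch: append a line to a file only if that exact line is not
# already there, so re-running the setup does not duplicate PATH entries.
append_once() {
  local line="$1" file="$2"
  # -x: whole-line match, -F: fixed string ($ and . are not treated as regex)
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Example (as root):
# append_once 'export PATH=/usr/local/cuda/bin:$PATH' /etc/profile
# append_once 'export LD_LIBRARY_PATH=/usr/local/cuda/lib64${LD_LIBRARY_PATH:+:$LD_LIBRARY_PATH}' /etc/profile
```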

V. Configuring the Container Runtime

5.1 Install the NVIDIA container runtime

# Add the Aliyun docker-ce repository

# Step 1: install some required system tools

[root@localhost ~]# yum install -y yum-utils device-mapper-persistent-data lvm2

# Step 2: add the repository information

[root@localhost ~]# yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Step 3: point the download.docker.com URLs in the repo file at the Aliyun mirror

[root@localhost ~]# sed -i 's+download.docker.com+mirrors.aliyun.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

# Step 4: refresh the yum metadata cache (Docker CE itself is installed in 5.3)

[root@localhost ~]# yum makecache

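# Step 5: install the NVIDIA container runtime (nvidia-docker2 is published in NVIDIA's own yum repository rather than in docker-ce, so that repository must be configured as well)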

[root@localhost ~]# yum -y install nvidia-docker2

5.2 Configure containerd for GPU support

# Step 1: install containerd

[root@localhost ~]# yum -y install containerd.io

# Step 2: generate the default configuration

[root@localhost ~]# containerd config default > /etc/containerd/config.toml

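# Step 3: edit /etc/containerd/config.toml as follows. Switching runc's runtime_type to the legacy io.containerd.runtime.v1.linux shim makes containerd use the [plugins."io.containerd.runtime.v1.linux"] section, whose runtime is then set to nvidia-container-runtime (this v1 shim is deprecated in newer containerd releases)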

###############################################################

...

[plugins."io.containerd.grpc.v1.cri".containerd]

snapshotter = "overlayfs"

default_runtime_name = "runc"

no_pivot = false

...

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes]

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]

runtime_type = "io.containerd.runtime.v1.linux" # change the value of runtime_type here to io.containerd.runtime.v1.linux

...

[plugins."io.containerd.runtime.v1.linux"]

shim = "containerd-shim"

runtime = "nvidia-container-runtime" # change the value of runtime here to nvidia-container-runtime

...

###########################################################
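
The two manual edits can also be applied with sed. This is a minimal sketch that assumes the file is the untouched output of `containerd config default` on a containerd 1.6-style release (runc's `runtime_type` is `io.containerd.runc.v2`, and the `[plugins."io.containerd.runtime.v1.linux"]` section contains `runtime = "runc"`). The function name `patch_containerd_nvidia` is hypothetical; review the file before starting containerd.

```bash
#!/usr/bin/env bash
# Minimal sketch of the two config.toml edits above, done with GNU sed.
patch_containerd_nvidia() {
  local cfg="${1:-/etc/containerd/config.toml}"
  cp "$cfg" "$cfg.bak"   # keep a copy of the original file
  # 1st expression: switch runc's runtime_type to the v1 linux shim
  #   (the default config has a single io.containerd.runc.v2 entry).
  # 2nd expression: only inside the v1.linux section (up to the next
  #   [section] header), point runtime at nvidia-container-runtime.
  sed -i \
    -e 's#runtime_type = "io\.containerd\.runc\.v2"#runtime_type = "io.containerd.runtime.v1.linux"#' \
    -e '/^[[:space:]]*\[plugins\."io\.containerd\.runtime\.v1\.linux"]/,/^[[:space:]]*\[/ s#runtime = "runc"#runtime = "nvidia-container-runtime"#' \
    "$cfg"
}

# Example (as root): patch_containerd_nvidia /etc/containerd/config.toml
```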

# Step 4: start containerd and enable it at boot

[root@localhost ~]# systemctl start containerd && systemctl enable containerd

# Step 5: run a test container (the argument just before nvidia-smi is the container ID, which is an arbitrary name)

[root@localhost ~]# ctr image pull docker.io/nvidia/cuda:11.2.2-base-ubuntu20.04

[root@localhost ~]# ctr run --rm -t \

> --runc-binary=/usr/bin/nvidia-container-runtime \

> --env NVIDIA_VISIBLE_DEVICES=all \

> docker.io/nvidia/cuda:11.2.2-base-ubuntu20.04 \

> cuda-11.6.2-base-ubuntu20.04 nvidia-smi

    Tue Dec 12 03:01:10 2023
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 525.147.05   Driver Version: 525.147.05   CUDA Version: 12.0     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |                               |                      |               MIG M. |
    |===============================+======================+======================|
    |   0  Tesla T4            Off  | 00000000:13:00.0 Off |                    0 |
    | N/A   66C    P0    30W /  70W |      2MiB / 15360MiB |      4%      Default |
    |                               |                      |                  N/A |
    +-------------------------------+----------------------+----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                                  |
    |  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
    |        ID   ID                                                   Usage      |
    |=============================================================================|
    |  No running processes found                                                 |
    +-----------------------------------------------------------------------------+

5.3 Configure Docker for GPU support

# Step 1: install Docker

[root@localhost ~]# yum install docker-ce-23.0.6 -y

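# Step 2: configure Docker's container runtimes, then start Docker

# Set the cgroup driver to systemd (recommended by Kubernetes), cap container log size, add a registry mirror, and register the nvidia runtime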

[root@localhost ~]# mkdir /etc/docker -p

[root@localhost ~]# cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors": [

"https://tf72mndn.mirror.aliyuncs.com"

],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-opts": {

"max-file": "3",

"max-size": "500m"

},

"runtimes": {

"nvidia": {

"path": "/usr/bin/nvidia-container-runtime",

"runtimeArgs": []

}

}

}

EOF
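
A malformed daemon.json prevents dockerd from starting, so it can be worth validating the file before reloading and restarting Docker. A minimal sketch; `check_daemon_json` is a hypothetical helper, and it assumes python3 is installed (only the standard-library `json.tool` module is used).

```bash
#!/usr/bin/env bash
# Minimal sketch: exit status 0 only if the file parses as JSON.
# json.tool prints the parse error to stderr and exits non-zero otherwise.
check_daemon_json() {
  local f="${1:-/etc/docker/daemon.json}"
  python3 -m json.tool "$f" > /dev/null
}

# Example: check_daemon_json && systemctl restart docker
```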

[root@localhost ~]# systemctl daemon-reload

[root@localhost ~]# systemctl restart docker

[root@localhost ~]# systemctl enable docker

# Step 3: start a Docker test container

[root@localhost ~]# docker run --runtime=nvidia --rm nvidia/cuda:11.0-base nvidia-smi

Unable to find image 'nvidia/cuda:11.0-base' locally

11.0-base: Pulling from nvidia/cuda

54ee1f796a1e: Pull complete

f7bfea53ad12: Pull complete

46d371e02073: Pull complete

b66c17bbf772: Pull complete

3642f1a6dfb3: Pull complete

e5ce55b8b4b9: Pull complete

155bc0332b0a: Pull complete

Digest: sha256:774ca3d612de15213102c2dbbba55df44dc5cf9870ca2be6c6e9c627fa63d67a

Status: Downloaded newer image for nvidia/cuda:11.0-base

    Tue Dec 12 03:10:32 2023
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 525.147.05   Driver Version: 525.147.05   CUDA Version: 12.0     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |                               |                      |               MIG M. |
    |===============================+======================+======================|
    |   0  Tesla T4            Off  | 00000000:13:00.0 Off |                    0 |
    | N/A   64C    P0    30W /  70W |      2MiB / 15360MiB |      5%      Default |
    |                               |                      |                  N/A |
    +-------------------------------+----------------------+----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                                  |
    |  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
    |        ID   ID                                                   Usage      |
    |=============================================================================|
    |  No running processes found                                                 |
    +-----------------------------------------------------------------------------+

VI. Installing the NVIDIA Plugin in the K8s Cluster

# Step 1: label the GPU host

[root@localhost ~]# kubectl label node node9 nvidia.com=gpu

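# Step 2: install the NVIDIA device plugin in the cluster (only needs to be done once per cluster; it runs as a DaemonSet on the nodes)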

[root@localhost ~]# kubectl apply -f https://raw.githubusercontent.com/NVIDIA/k8s-device-plugin/1.0.0-beta4/nvidia-device-plugin.yml

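# Step 3: check the GPU resources reported for the GPU host. In the output, gpu/type=nvidia is a node label (not the nvidia.com=gpu label applied in Step 1), the two nvidia.com/gpu: 1 lines are the node's Capacity and Allocatable, and nvidia.com/gpu 0 0 lists the GPU requests and limits currently allocated to Pods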

[root@localhost ~]# kubectl describe node node9 |grep gpu

gpu/type=nvidia

nvidia.com/gpu: 1

nvidia.com/gpu: 1

nvidia.com/gpu 0 0
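
To check this in a script rather than by eye, the Allocatable GPU count can be pulled out of the same `kubectl describe node` output. A minimal sketch; `gpu_allocatable` is a hypothetical helper that assumes the usual describe layout, where a top-level `Allocatable:` heading is followed by indented `resource: value` lines.

```bash
#!/usr/bin/env bash
# Minimal sketch: print the Allocatable nvidia.com/gpu count from
# `kubectl describe node` output read on stdin (0 if the resource is absent).
gpu_allocatable() {
  awk '
    /^Allocatable:/ { in_alloc = 1; next }
    in_alloc && /^[^ \t]/ { in_alloc = 0 }   # next top-level heading ends the block
    in_alloc && $1 == "nvidia.com/gpu:" { n = $2 }
    END { print (n == "" ? 0 : n) }
  '
}

# Example: kubectl describe node node9 | gpu_allocatable
```

A result of 0 after the plugin is installed usually means the device plugin Pod is not running on that node.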

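# Step 4: deploy a test Pod that requests a GPU (save the manifest below to a file such as cuda-vector-add.yaml and create it with kubectl apply -f cuda-vector-add.yaml)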

    apiVersion: v1
    kind: Pod
    metadata:
      name: cuda-vector-add
    spec:
      restartPolicy: OnFailure
      containers:
        - name: cuda-vector-add
          #image: "k8s.gcr.io/cuda-vector-add:v0.1"
          image: "docker.io/nvidia/cuda:11.0.3-base-ubuntu20.04"
          command:
            - nvidia-smi
          resources:
            limits:
              nvidia.com/gpu: 1

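Other: contents of the disable_nouveau.sh script

#!/bin/bash

# Install the build tools the NVIDIA .run installer needs to compile its kernel module; kernel-devel must match the running kernel (uname -r)

echo -e "\033[32m>>>>>>>>Installing dependencies, please wait!\033[0m"

yum -y install gcc make elfutils-libelf-devel libglvnd-devel "kernel-devel-$(uname -r)" epel-release

# dkms comes from EPEL on EL8, so it is installed after epel-release

yum -y install dkms

# Recreate the modprobe configuration files from scratch

rm -f /etc/modprobe.d/blacklist-nvidia-nouveau.conf /etc/modprobe.d/nvidia-unsupported-gpu.conf

# Blacklist nouveau and disable its kernel mode setting; the last option only matters for NVIDIA's open kernel modules on GPUs they do not officially support

echo blacklist nouveau | tee /etc/modprobe.d/blacklist-nvidia-nouveau.conf && \

echo options nouveau modeset=0 | tee -a /etc/modprobe.d/blacklist-nvidia-nouveau.conf && \

echo options nvidia NVreg_OpenRmEnableUnsupportedGpus=1 | tee /etc/modprobe.d/nvidia-unsupported-gpu.conf

# Rebuild the initramfs so the blacklist also applies during early boot, keeping the old image as a backup

mv /boot/initramfs-$(uname -r).img /boot/initramfs-$(uname -r)-nouveau.img

dracut /boot/initramfs-$(uname -r).img $(uname -r)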

Source: https://blog.csdn.net/xjjj064/article/details/134945680

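This article was contributed by an internet user; the views expressed are the author's own and do not represent this site. This site only provides information storage space, does not own the rights, and bears no related legal liability. If the content infringes rights, violates laws, or is factually inaccurate, please report it to this site by email at chenni525@qq.com; it will be removed promptly once verified.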

