K8s: Storage Volumes (Dynamic PV and PVC)

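With dynamic provisioning, creating a PVC automatically generates a matching PV and also creates the backing directory on the shared storage server. The PVC is then bound directly to that PV.

Dynamic PVs need two components:

1. A volume plugin: the dynamic PV provisioners built into k8s do not include NFS, so an external plugin has to be declared and installed.

Provisioner: the storage allocator. It creates PVs dynamically, which are then bound and used automatically in response to PVC requests.

2. A StorageClass: defines the properties of the PVs, such as storage type, size, and reclaim policy.

This walkthrough still uses NFS as the backend for dynamic PVs. NFS is supported through NFS-client, and a Provisioner is used to adapt to it:

the nfs-client-provisioner volume plugin.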

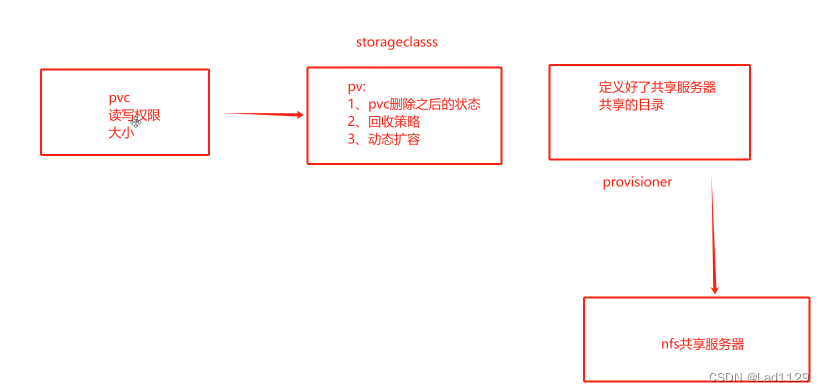

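Architecture

Lab setup:

master: 192.168.10.10

node01: 192.168.10.20

node02: 192.168.10.30

- Create a service account so the volume plugin can communicate inside the cluster: read resources, watch events, and create, delete, and update PVs.

- Create the volume plugin pod; this pod is what creates the PVs.

- StorageClass: assigns properties to the PVs (what happens to a PV after its PVC is deleted, and the reclaim policy).

- Create the PVC. Done.

node04 (NFS server): 192.168.10.40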

mkdir /opt/k8s
vim /etc/exports
# Add this line to /etc/exports
/opt/k8s 192.168.10.0/24(rw,no_root_squash,sync)
# Restart the services in this order
systemctl restart rpcbind
systemctl restart nfs
# List the exported NFS shares
showmount -e

master: 192.168.10.10

vim nfs-client-rbac.yaml

# Create the ServiceAccount that governs the permissions the NFS Provisioner runs with in the k8s cluster
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
---
# Create the cluster role
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: nfs-client-provisioner-role
rules:
  # apiGroups selects the API group a rule applies to; the empty string "" means the core API group
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    # verbs lists the operations permitted on these resources
    verbs: ["get","list","watch","create","delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["watch","get","list","update"]
  # StorageClasses define the PV properties
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get","watch","list"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["list","create","watch","update","patch"]
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["delete","create","get","watch","update","patch","list"]
---
# Cluster role binding; run kubectl explain ClusterRoleBinding for field details
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: nfs-client-provisioner-bind
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: default
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-role
  apiGroup: rbac.authorization.k8s.io

ServiceAccount: the NFS Provisioner is a plugin, and without permissions it cannot read any k8s information from the cluster. It needs permission to watch the apiserver and to get, list (fetch lists of cluster resources), create, and delete.

RBAC: Role-Based Access Control. It defines the permissions a role has in the cluster.

vim nfs-client-provisioner.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-provisioner
  labels:
    app: nfs1
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nfs1
  template:
    metadata:
      labels:
        app: nfs1
    spec:
      # Run under the ServiceAccount created above
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs1
          image: quay.io/external_storage/nfs-client-provisioner:latest
          volumeMounts:
            - name: nfs
              mountPath: /persistentvolumes
          env:
            # Provisioner name; must match the provisioner field of the StorageClass
            - name: PROVISIONER_NAME
              value: nfs-storage
            # Address of the NFS server
            - name: NFS_SERVER
              value: 192.168.10.40
            # Exported directory on the NFS server
            - name: NFS_PATH
              value: /opt/k8s
      # Declare the NFS volume
      volumes:
        - name: nfs
          nfs:
            server: 192.168.10.40
            path: /opt/k8s

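Kubernetes 1.20 changed how selfLink is handled.

selfLink is a field on API resource objects that holds the object's own link within the cluster. It serves as a unique identifier for each resource object.

The value of selfLink is a URL pointing to the object's path in the k8s API, which makes resource objects easier to look up and reference. From 1.20 on the API server no longer populates this field by default, but this provisioner image still relies on it, so the field has to be re-enabled on the API server.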
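Re-enabling selfLink through the RemoveSelfLink feature gate, as this walkthrough does, is one way to keep the nfs-client-provisioner image working. A possible alternative, not tested in this lab, is the image published by its successor project (kubernetes-sigs/nfs-subdir-external-provisioner). According to that project's documentation it reads the same PROVISIONER_NAME, NFS_SERVER, and NFS_PATH variables and does not depend on selfLink. A minimal sketch of the only part of the Deployment that would change, assuming the v4.0.2 tag is still published:

```yaml
# Sketch only: everything else in nfs-client-provisioner.yaml stays the same.
# Assumption: this registry path and tag come from the
# kubernetes-sigs/nfs-subdir-external-provisioner project; check for the current tag.
spec:
  template:
    spec:
      containers:
        - name: nfs1
          image: registry.k8s.io/sig-storage/nfs-subdir-external-provisioner:v4.0.2
```

This may matter on newer clusters: as far as the upstream release notes indicate, the RemoveSelfLink gate was locked in Kubernetes 1.24, so setting it to false is no longer accepted there.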

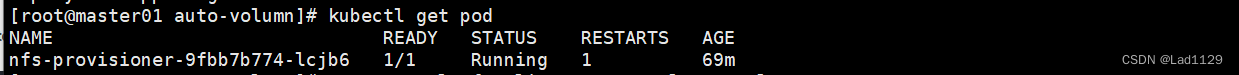

kubectl apply -f nfs-client-provisioner.yaml

kubectl get pod

NAME READY STATUS RESTARTS AGE

nfs-client-provisioner-cd6ff67-sp8qd 1/1 Running 0 14s

vim /etc/kubernetes/manifests/kube-apiserver.yaml

......
spec:
  containers:
  - command:
    - kube-apiserver
    - --feature-gates=RemoveSelfLink=false    # add this line
    - --advertise-address=192.168.10.10
......

kubectl apply -f /etc/kubernetes/manifests/kube-apiserver.yaml
kubectl delete pods kube-apiserver -n kube-system
kubectl get pods -n kube-system | grep apiserver

vim nfs-client-storageclass.yaml

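apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-client-storageclass
# Must match PROVISIONER_NAME in the provisioner Deployment
provisioner: nfs-storage
parameters:
  # Controls what happens to the data when the provisioner deletes a volume.
  # "false": the backing directory on the NFS share is deleted.
  # "true": the directory is kept and renamed with an archived- prefix, so the data can be recovered by hand.
  archiveOnDelete: "false"
# Reclaim policy for the PVs: Retain or Delete; Recycle is not supported for dynamic PVs
reclaimPolicy: Delete
# Allow the PV capacity to be expanded (only effective on cloud platforms)
allowVolumeExpansion: true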

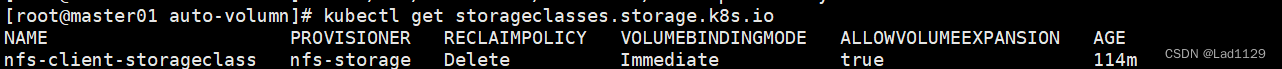

kubectl apply -f nfs-client-storageclass.yaml

[root@master01 auto-volumn]# kubectl get storageclasses.storage.k8s.io

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

nfs-client-storageclass nfs-storage Delete Immediate true 114m
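The class above uses reclaimPolicy: Delete. For comparison, here is a minimal sketch of a second class that keeps PVs after their claim is gone. The name nfs-client-retain is made up for this example, and the provisioner value assumes the same nfs-storage provisioner deployed earlier.

```yaml
# Hypothetical variant of nfs-client-storageclass.yaml.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-client-retain        # illustrative name, not part of the original lab
provisioner: nfs-storage         # must match PROVISIONER_NAME in the provisioner Deployment
# Retain: when the PVC is deleted, the PV object and its data stay;
# the PV moves to Released and has to be cleaned up or reused by hand.
reclaimPolicy: Retain
```

A PVC would select this class by setting storageClassName: nfs-client-retain. The reclaim policy is copied onto each PV when it is created, so changing the class later does not affect PVs that already exist.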

vim test-pvc-pod.yaml

# Create a PVC named nfs-pvc whose PV properties come from nfs-client-storageclass
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nfs-pvc
spec:
  accessModes:
    - ReadWriteMany
  storageClassName: nfs-client-storageclass
  resources:
    requests:
      storage: 2Gi
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx1
  name: nginx1
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx1
  template:
    metadata:
      labels:
        app: nginx1
    spec:
      containers:
        - image: nginx:1.22
          name: nginx1
          volumeMounts:
            - name: html
              mountPath: /usr/share/nginx/html
      volumes:
        - name: html
          persistentVolumeClaim:
            claimName: nfs-pvc

kubectl apply -f test-pvc-pod.yaml

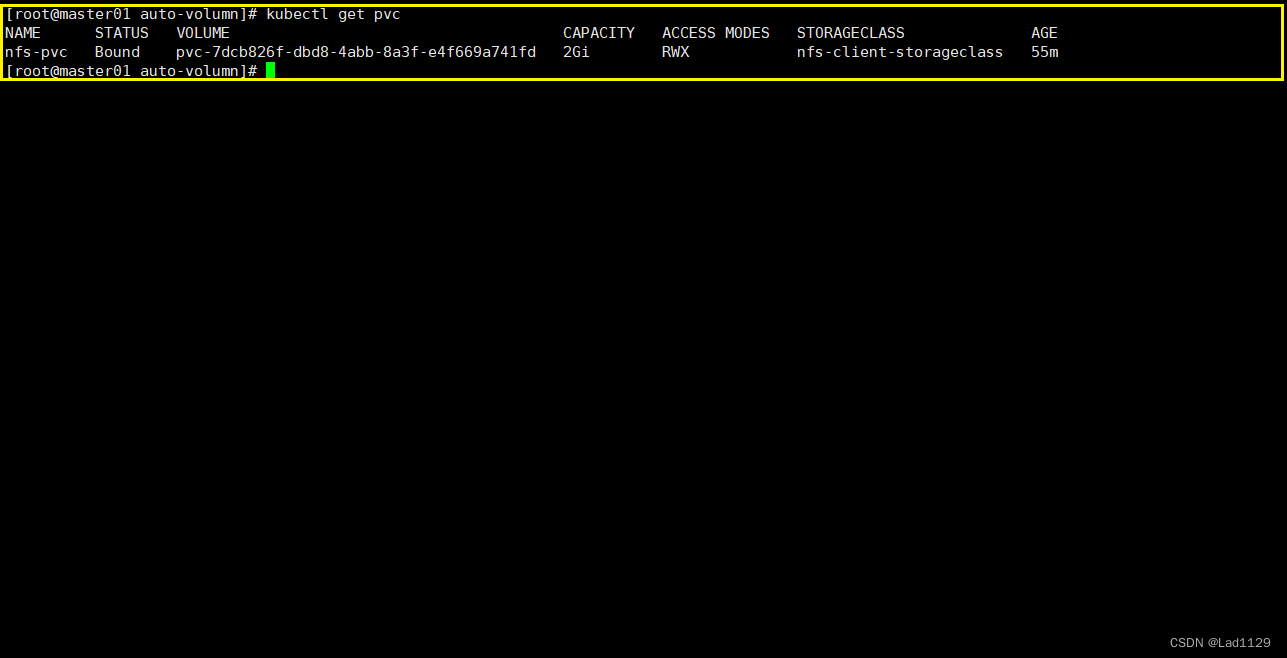

# The PVC gets its storage automatically through the StorageClass

kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

nfs-pvc Bound pvc-7dcb826f-dbd8-4abb-8a3f-e4f669a741fd 2Gi RWX nfs-client-storageclass 55m
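Once the PVC shows Bound, one way to confirm that the volume really lands on the NFS share is a throwaway pod that writes a file into it. This is only a sketch: the pod name pvc-write-test, the busybox image tag, and the /data mount path are assumptions chosen for the example; only the claim name nfs-pvc comes from the lab.

```yaml
# Hypothetical one-off pod that writes a test page into the claim.
apiVersion: v1
kind: Pod
metadata:
  name: pvc-write-test           # illustrative name
spec:
  restartPolicy: Never           # run once and exit
  containers:
    - name: writer
      image: busybox:1.36        # assumed tag; any small image with a shell works
      command: ["sh", "-c", "echo hello from nfs-pvc > /data/index.html"]
      volumeMounts:
        - name: html
          mountPath: /data
  volumes:
    - name: html
      persistentVolumeClaim:
        claimName: nfs-pvc       # the PVC created in this lab
```

After the pod completes, index.html should appear on the NFS server under /opt/k8s, in a subdirectory the provisioner names after the namespace, PVC, and PV. Because the nginx1 pods mount the same claim at /usr/share/nginx/html, they should serve the same file.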

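Cleanup:

kubectl delete deployments.apps nginx1
kubectl delete pvc nfs-pvc

After the PVC is deleted, what happens depends on the reclaim policy. With Retain, the PV is kept and can be reused. With Delete, the PV is removed right away.

The default reclaim policy for dynamic PVs is Delete.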

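Summary:

Provisioner plugin: adds NFS support for dynamic PVs.

StorageClass: defines the properties of the PVs.

The default reclaim policy for dynamic PVs is Delete; Recycle is not available.

After its PVC is deleted, a dynamic PV goes to the Released state.

- Create a service account so the volume plugin can communicate inside the cluster: read resources, watch events, and create, delete, and update PVs.

- Create the volume plugin pod; this pod is what creates the PVs.

- StorageClass: assigns properties to the PVs (what happens to a PV after its PVC is deleted, and the reclaim policy).

- Create the PVC. Done.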
