NNDL 作业13 优化算法3D可视化 [HBU]

老师作业原博客:【23-24 秋学期】NNDL 作业13 优化算法3D可视化-CSDN博客

编程实现优化算法,并3D可视化

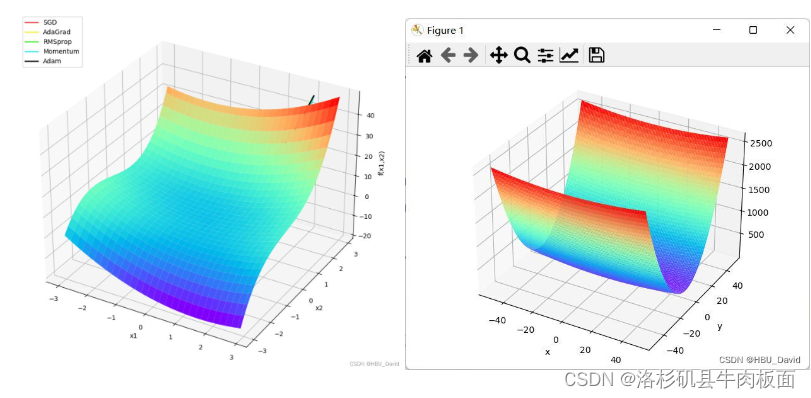

1. 函数3D可视化

分别画出?和?

的3D图

NNDL实验 优化算法3D轨迹 鱼书例题3D版_优化算法3d展示-CSDN博客

代码:

from mpl_toolkits.mplot3d import Axes3D

import numpy as np

from matplotlib import pyplot as plt

import torch

from nndl.op import Op

# 画出x**2

class OptimizedFunction3D(Op):

def __init__(self):

super(OptimizedFunction3D, self).__init__()

self.params = {'x': 0}

self.grads = {'x': 0}

def forward(self, x):

self.params['x'] = x

return x[0] ** 2 + x[1] ** 2 + x[1] ** 3 + x[0] * x[1]

def backward(self):

x = self.params['x']

gradient1 = 2 * x[0] + x[1]

gradient2 = 2 * x[1] + 3 * x[1] ** 2 + x[0]

grad1 = torch.Tensor([gradient1])

grad2 = torch.Tensor([gradient2])

self.grads['x'] = torch.cat([grad1, grad2])

# 使用numpy.meshgrid生成x1,x2矩阵,矩阵的每一行为[-3, 3],以0.1为间隔的数值

x1 = np.arange(-3, 3, 0.1)

x2 = np.arange(-3, 3, 0.1)

x1, x2 = np.meshgrid(x1, x2)

init_x = torch.Tensor(np.array([x1, x2]))

model = OptimizedFunction3D()

# 绘制 f_3d函数 的 三维图像

fig = plt.figure()

ax = plt.axes(projection='3d')

X = init_x[0].numpy()

Y = init_x[1].numpy()

Z = model(init_x).numpy()

ax.plot_surface(X, Y, Z, cmap='plasma')

ax.set_xlabel('x1')

ax.set_ylabel('x2')

ax.set_zlabel('f(x1,x2)')

plt.show()

# 画出x * x / 20 + y * y

def func(x, y):

return x * x / 20 + y * y

def paint_loss_func():

x = np.linspace(-50, 50, 100) # x的绘制范围是-50到50,从改区间均匀取100个数

y = np.linspace(-50, 50, 100) # y的绘制范围是-50到50,从改区间均匀取100个数

X, Y = np.meshgrid(x, y)

Z = func(X, Y)

fig = plt.figure() # figsize=(10, 10))

ax = Axes3D(fig)

plt.xlabel('x')

plt.ylabel('y')

ax.plot_surface(X, Y, Z, rstride=1, cstride=1, cmap='plasma')

plt.show()

paint_loss_func()结果:

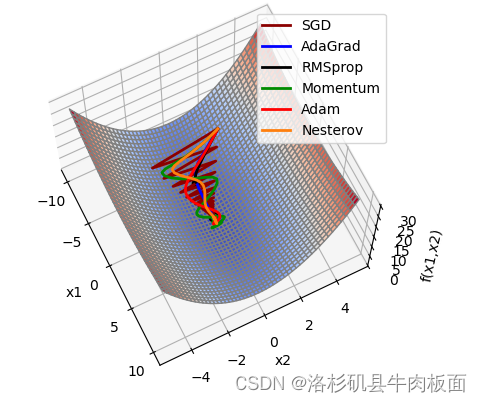

2.加入优化算法,画出轨迹

分别画出?和?

的3D轨迹图

结合3D动画,用自己的语言,从轨迹、速度等多个角度讲解各个算法优缺点

NNDL实验 优化算法3D轨迹 pytorch版_nndl 实验三 将数据转换为 pytorch 张量-CSDN博客

代码为:

?

import torch

import numpy as np

import copy

from matplotlib import pyplot as plt

from matplotlib import animation

from itertools import zip_longest

from nndl.op import Op

class Optimizer(object): # 优化器基类

def __init__(self, init_lr, model):

"""

优化器类初始化

"""

# 初始化学习率,用于参数更新的计算

self.init_lr = init_lr

# 指定优化器需要优化的模型

self.model = model

def step(self):

"""

定义每次迭代如何更新参数

"""

pass

class SimpleBatchGD(Optimizer):

def __init__(self, init_lr, model):

super(SimpleBatchGD, self).__init__(init_lr=init_lr, model=model)

def step(self):

# 参数更新

if isinstance(self.model.params, dict):

for key in self.model.params.keys():

self.model.params[key] = self.model.params[key] - self.init_lr * self.model.grads[key]

class Adagrad(Optimizer):

def __init__(self, init_lr, model, epsilon):

"""

Adagrad 优化器初始化

输入:

- init_lr: 初始学习率 - model:模型,model.params存储模型参数值 - epsilon:保持数值稳定性而设置的非常小的常数

"""

super(Adagrad, self).__init__(init_lr=init_lr, model=model)

self.G = {}

for key in self.model.params.keys():

self.G[key] = 0

self.epsilon = epsilon

def adagrad(self, x, gradient_x, G, init_lr):

"""

adagrad算法更新参数,G为参数梯度平方的累计值。

"""

G += gradient_x ** 2

x -= init_lr / torch.sqrt(G + self.epsilon) * gradient_x

return x, G

def step(self):

"""

参数更新

"""

for key in self.model.params.keys():

self.model.params[key], self.G[key] = self.adagrad(self.model.params[key],

self.model.grads[key],

self.G[key],

self.init_lr)

class RMSprop(Optimizer):

def __init__(self, init_lr, model, beta, epsilon):

"""

RMSprop优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- beta:衰减率

- epsilon:保持数值稳定性而设置的常数

"""

super(RMSprop, self).__init__(init_lr=init_lr, model=model)

self.G = {}

for key in self.model.params.keys():

self.G[key] = 0

self.beta = beta

self.epsilon = epsilon

def rmsprop(self, x, gradient_x, G, init_lr):

"""

rmsprop算法更新参数,G为迭代梯度平方的加权移动平均

"""

G = self.beta * G + (1 - self.beta) * gradient_x ** 2

x -= init_lr / torch.sqrt(G + self.epsilon) * gradient_x

return x, G

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.G[key] = self.rmsprop(self.model.params[key],

self.model.grads[key],

self.G[key],

self.init_lr)

class Momentum(Optimizer):

def __init__(self, init_lr, model, rho):

"""

Momentum优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- rho:动量因子

"""

super(Momentum, self).__init__(init_lr=init_lr, model=model)

self.delta_x = {}

for key in self.model.params.keys():

self.delta_x[key] = 0

self.rho = rho

def momentum(self, x, gradient_x, delta_x, init_lr):

"""

momentum算法更新参数,delta_x为梯度的加权移动平均

"""

delta_x = self.rho * delta_x - init_lr * gradient_x

x += delta_x

return x, delta_x

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.delta_x[key] = self.momentum(self.model.params[key],

self.model.grads[key],

self.delta_x[key],

self.init_lr)

class Nesterov(Optimizer):

def __init__(self, init_lr, model, rho):

"""

Nesterov优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- rho:动量因子

"""

super(Nesterov, self).__init__(init_lr=init_lr, model=model)

self.delta_x = {}

for key in self.model.params.keys():

self.delta_x[key] = 0

self.rho = rho

def nesterov(self, x, gradient_x, delta_x, init_lr):

"""

Nesterov算法更新参数,delta_x为梯度的加权移动平均

"""

delta_x_prev = delta_x

delta_x = self.rho * delta_x - init_lr * gradient_x

x += -self.rho * delta_x_prev + (1 + self.rho) * delta_x

return x, delta_x

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.delta_x[key] = self.nesterov(self.model.params[key],

self.model.grads[key],

self.delta_x[key],

self.init_lr)

class Adam(Optimizer):

def __init__(self, init_lr, model, beta1, beta2, epsilon):

"""

Adam优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- beta1, beta2:移动平均的衰减率

- epsilon:保持数值稳定性而设置的常数

"""

super(Adam, self).__init__(init_lr=init_lr, model=model)

self.beta1 = beta1

self.beta2 = beta2

self.epsilon = epsilon

self.M, self.G = {}, {}

for key in self.model.params.keys():

self.M[key] = 0

self.G[key] = 0

self.t = 1

def adam(self, x, gradient_x, G, M, t, init_lr):

"""

adam算法更新参数

输入:

- x:参数

- G:梯度平方的加权移动平均

- M:梯度的加权移动平均

- t:迭代次数

- init_lr:初始学习率

"""

M = self.beta1 * M + (1 - self.beta1) * gradient_x

G = self.beta2 * G + (1 - self.beta2) * gradient_x ** 2

M_hat = M / (1 - self.beta1 ** t)

G_hat = G / (1 - self.beta2 ** t)

t += 1

x -= init_lr / torch.sqrt(G_hat + self.epsilon) * M_hat

return x, G, M, t

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.G[key], self.M[key], self.t = self.adam(self.model.params[key],

self.model.grads[key],

self.G[key],

self.M[key],

self.t,

self.init_lr)

class OptimizedFunction3D(Op):

def __init__(self):

super(OptimizedFunction3D, self).__init__()

self.params = {'x': 0}

self.grads = {'x': 0}

def forward(self, x):

self.params['x'] = x

return x[0] ** 2 + x[1] ** 2 + x[1] ** 3 + x[0] * x[1]

def backward(self):

x = self.params['x']

gradient1 = 2 * x[0] + x[1]

gradient2 = 2 * x[1] + 3 * x[1] ** 2 + x[0]

grad1 = torch.Tensor([gradient1])

grad2 = torch.Tensor([gradient2])

self.grads['x'] = torch.cat([grad1, grad2])

class Visualization3D(animation.FuncAnimation):

""" 绘制动态图像,可视化参数更新轨迹 """

def __init__(self, *xy_values, z_values, labels=[], colors=[], fig, ax, interval=600, blit=True, **kwargs):

"""

初始化3d可视化类

输入:

xy_values:三维中x,y维度的值

z_values:三维中z维度的值

labels:每个参数更新轨迹的标签

colors:每个轨迹的颜色

interval:帧之间的延迟(以毫秒为单位)

blit:是否优化绘图

"""

self.fig = fig

self.ax = ax

self.xy_values = xy_values

self.z_values = z_values

frames = max(xy_value.shape[0] for xy_value in xy_values)

self.lines = [ax.plot([], [], [], label=label, color=color, lw=2)[0]

for _, label, color in zip_longest(xy_values, labels, colors)]

super(Visualization3D, self).__init__(fig, self.animate, init_func=self.init_animation, frames=frames,

interval=interval, blit=blit, **kwargs)

def init_animation(self):

# 数值初始化

for line in self.lines:

line.set_data([], [])

# line.set_3d_properties(np.asarray([])) # 源程序中有这一行,加上会报错。 Edit by David 2022.12.4

return self.lines

def animate(self, i):

# 将x,y,z三个数据传入,绘制三维图像

for line, xy_value, z_value in zip(self.lines, self.xy_values, self.z_values):

line.set_data(xy_value[:i, 0], xy_value[:i, 1])

line.set_3d_properties(z_value[:i])

return self.lines

def train_f(model, optimizer, x_init, epoch):

x = x_init

all_x = []

losses = []

for i in range(epoch):

all_x.append(copy.deepcopy(x.numpy())) # 浅拷贝 改为 深拷贝, 否则List的原值会被改变。 Edit by David 2022.12.4.

loss = model(x)

losses.append(loss)

model.backward()

optimizer.step()

x = model.params['x']

return torch.Tensor(np.array(all_x)), losses

# 构建6个模型,分别配备不同的优化器

model1 = OptimizedFunction3D()

opt_gd = SimpleBatchGD(init_lr=0.01, model=model1)

model2 = OptimizedFunction3D()

opt_adagrad = Adagrad(init_lr=0.5, model=model2, epsilon=1e-7)

model3 = OptimizedFunction3D()

opt_rmsprop = RMSprop(init_lr=0.1, model=model3, beta=0.9, epsilon=1e-7)

model4 = OptimizedFunction3D()

opt_momentum = Momentum(init_lr=0.01, model=model4, rho=0.9)

model5 = OptimizedFunction3D()

opt_adam = Adam(init_lr=0.1, model=model5, beta1=0.9, beta2=0.99, epsilon=1e-7)

model6 = OptimizedFunction3D()

opt_Nesterov = Nesterov(init_lr=0.1, model=model6, rho=0.9)

models = [model1, model2, model3, model4, model5, model6]

opts = [opt_gd, opt_adagrad, opt_rmsprop, opt_momentum, opt_adam, opt_Nesterov]

x_all_opts = []

z_all_opts = []

# 使用不同优化器训练

for model, opt in zip(models, opts):

x_init = torch.FloatTensor([2, 3])

x_one_opt, z_one_opt = train_f(model, opt, x_init, 150) # epoch

# 保存参数值

x_all_opts.append(x_one_opt.numpy())

z_all_opts.append(np.squeeze(z_one_opt))

# 使用numpy.meshgrid生成x1,x2矩阵,矩阵的每一行为[-3, 3],以0.1为间隔的数值

x1 = np.arange(-3, 3, 0.1)

x2 = np.arange(-3, 3, 0.1)

x1, x2 = np.meshgrid(x1, x2)

init_x = torch.Tensor(np.array([x1, x2]))

model = OptimizedFunction3D()

# 绘制 f_3d函数 的 三维图像

fig = plt.figure()

ax = plt.axes(projection='3d')

X = init_x[0].numpy()

Y = init_x[1].numpy()

Z = model(init_x).numpy() # 改为 model(init_x).numpy() David 2022.12.4

ax.plot_surface(X, Y, Z, cmap='plasma')

ax.set_xlabel('x1')

ax.set_ylabel('x2')

ax.set_zlabel('f(x1,x2)')

labels = ['SGD', 'AdaGrad', 'RMSprop', 'Momentum', 'Adam', 'Nesterov']

colors = ['#8B0000', '#0000FF', '#000000', '#008B00', '#FF0000']

animator = Visualization3D(*x_all_opts, z_values=z_all_opts, labels=labels, colors=colors, fig=fig, ax=ax)

ax.legend(loc='upper left')

plt.show()

animator.save('animation.gif')

(一直整不出来动态图,先攒着,等过了期末考试再回来研究,最近实在是太忙了)

代码:

import torch

import numpy as np

import copy

from matplotlib import pyplot as plt

from matplotlib import animation

from itertools import zip_longest

from matplotlib import cm

class Op(object):

def __init__(self):

pass

def __call__(self, inputs):

return self.forward(inputs)

# 输入:张量inputs

# 输出:张量outputs

def forward(self, inputs):

# return outputs

raise NotImplementedError

# 输入:最终输出对outputs的梯度outputs_grads

# 输出:最终输出对inputs的梯度inputs_grads

def backward(self, outputs_grads):

# return inputs_grads

raise NotImplementedError

class Optimizer(object): # 优化器基类

def __init__(self, init_lr, model):

"""

优化器类初始化

"""

# 初始化学习率,用于参数更新的计算

self.init_lr = init_lr

# 指定优化器需要优化的模型

self.model = model

def step(self):

"""

定义每次迭代如何更新参数

"""

pass

class SimpleBatchGD(Optimizer):

def __init__(self, init_lr, model):

super(SimpleBatchGD, self).__init__(init_lr=init_lr, model=model)

def step(self):

# 参数更新

if isinstance(self.model.params, dict):

for key in self.model.params.keys():

self.model.params[key] = self.model.params[key] - self.init_lr * self.model.grads[key]

class Adagrad(Optimizer):

def __init__(self, init_lr, model, epsilon):

"""

Adagrad 优化器初始化

输入:

- init_lr: 初始学习率 - model:模型,model.params存储模型参数值 - epsilon:保持数值稳定性而设置的非常小的常数

"""

super(Adagrad, self).__init__(init_lr=init_lr, model=model)

self.G = {}

for key in self.model.params.keys():

self.G[key] = 0

self.epsilon = epsilon

def adagrad(self, x, gradient_x, G, init_lr):

"""

adagrad算法更新参数,G为参数梯度平方的累计值。

"""

G += gradient_x ** 2

x -= init_lr / torch.sqrt(G + self.epsilon) * gradient_x

return x, G

def step(self):

"""

参数更新

"""

for key in self.model.params.keys():

self.model.params[key], self.G[key] = self.adagrad(self.model.params[key],

self.model.grads[key],

self.G[key],

self.init_lr)

class RMSprop(Optimizer):

def __init__(self, init_lr, model, beta, epsilon):

"""

RMSprop优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- beta:衰减率

- epsilon:保持数值稳定性而设置的常数

"""

super(RMSprop, self).__init__(init_lr=init_lr, model=model)

self.G = {}

for key in self.model.params.keys():

self.G[key] = 0

self.beta = beta

self.epsilon = epsilon

def rmsprop(self, x, gradient_x, G, init_lr):

"""

rmsprop算法更新参数,G为迭代梯度平方的加权移动平均

"""

G = self.beta * G + (1 - self.beta) * gradient_x ** 2

x -= init_lr / torch.sqrt(G + self.epsilon) * gradient_x

return x, G

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.G[key] = self.rmsprop(self.model.params[key],

self.model.grads[key],

self.G[key],

self.init_lr)

class Momentum(Optimizer):

def __init__(self, init_lr, model, rho):

"""

Momentum优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- rho:动量因子

"""

super(Momentum, self).__init__(init_lr=init_lr, model=model)

self.delta_x = {}

for key in self.model.params.keys():

self.delta_x[key] = 0

self.rho = rho

def momentum(self, x, gradient_x, delta_x, init_lr):

"""

momentum算法更新参数,delta_x为梯度的加权移动平均

"""

delta_x = self.rho * delta_x - init_lr * gradient_x

x += delta_x

return x, delta_x

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.delta_x[key] = self.momentum(self.model.params[key],

self.model.grads[key],

self.delta_x[key],

self.init_lr)

class Nesterov(Optimizer):

def __init__(self, init_lr, model, rho):

"""

Nesterov优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- rho:动量因子

"""

super(Nesterov, self).__init__(init_lr=init_lr, model=model)

self.delta_x = {}

for key in self.model.params.keys():

self.delta_x[key] = 0

self.rho = rho

def nesterov(self, x, gradient_x, delta_x, init_lr):

"""

Nesterov算法更新参数,delta_x为梯度的加权移动平均

"""

delta_x_prev = delta_x

delta_x = self.rho * delta_x - init_lr * gradient_x

x += -self.rho * delta_x_prev + (1 + self.rho) * delta_x

return x, delta_x

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.delta_x[key] = self.nesterov(self.model.params[key],

self.model.grads[key],

self.delta_x[key],

self.init_lr)

class Adam(Optimizer):

def __init__(self, init_lr, model, beta1, beta2, epsilon):

"""

Adam优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- beta1, beta2:移动平均的衰减率

- epsilon:保持数值稳定性而设置的常数

"""

super(Adam, self).__init__(init_lr=init_lr, model=model)

self.beta1 = beta1

self.beta2 = beta2

self.epsilon = epsilon

self.M, self.G = {}, {}

for key in self.model.params.keys():

self.M[key] = 0

self.G[key] = 0

self.t = 1

def adam(self, x, gradient_x, G, M, t, init_lr):

"""

adam算法更新参数

输入:

- x:参数

- G:梯度平方的加权移动平均

- M:梯度的加权移动平均

- t:迭代次数

- init_lr:初始学习率

"""

M = self.beta1 * M + (1 - self.beta1) * gradient_x

G = self.beta2 * G + (1 - self.beta2) * gradient_x ** 2

M_hat = M / (1 - self.beta1 ** t)

G_hat = G / (1 - self.beta2 ** t)

t += 1

x -= init_lr / torch.sqrt(G_hat + self.epsilon) * M_hat

return x, G, M, t

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.G[key], self.M[key], self.t = self.adam(self.model.params[key],

self.model.grads[key],

self.G[key],

self.M[key],

self.t,

self.init_lr)

class OptimizedFunction3D(Op):

def __init__(self):

super(OptimizedFunction3D, self).__init__()

self.params = {'x': 0}

self.grads = {'x': 0}

def forward(self, x):

self.params['x'] = x

return x[0] * x[0] / 20 + x[1] * x[1] / 1

# return x[0] ** 2 + x[1] ** 2 + x[1] ** 3 + x[0] * x[1]

def backward(self):

x = self.params['x']

gradient1 = 2 * x[0] / 20

gradient2 = 2 * x[1] / 1

grad1 = torch.Tensor([gradient1])

grad2 = torch.Tensor([gradient2])

self.grads['x'] = torch.cat([grad1, grad2])

class Visualization3D(animation.FuncAnimation):

""" 绘制动态图像,可视化参数更新轨迹 """

def __init__(self, *xy_values, z_values, labels=[], colors=[], fig, ax, interval=100, blit=True, **kwargs):

"""

初始化3d可视化类

输入:

xy_values:三维中x,y维度的值

z_values:三维中z维度的值

labels:每个参数更新轨迹的标签

colors:每个轨迹的颜色

interval:帧之间的延迟(以毫秒为单位)

blit:是否优化绘图

"""

self.fig = fig

self.ax = ax

self.xy_values = xy_values

self.z_values = z_values

frames = max(xy_value.shape[0] for xy_value in xy_values)

self.lines = [ax.plot([], [], [], label=label, color=color, lw=2)[0]

for _, label, color in zip_longest(xy_values, labels, colors)]

self.points = [ax.plot([], [], [], color=color, markeredgewidth=1, markeredgecolor='black', marker='o')[0]

for _, color in zip_longest(xy_values, colors)]

# print(self.lines)

super(Visualization3D, self).__init__(fig, self.animate, init_func=self.init_animation, frames=frames,

interval=interval, blit=blit, **kwargs)

def init_animation(self):

# 数值初始化

for line in self.lines:

line.set_data_3d([], [], [])

for point in self.points:

point.set_data_3d([], [], [])

return self.points + self.lines

def animate(self, i):

# 将x,y,z三个数据传入,绘制三维图像

for line, xy_value, z_value in zip(self.lines, self.xy_values, self.z_values):

line.set_data_3d(xy_value[:i, 0], xy_value[:i, 1], z_value[:i])

for point, xy_value, z_value in zip(self.points, self.xy_values, self.z_values):

point.set_data_3d(xy_value[i, 0], xy_value[i, 1], z_value[i])

return self.points + self.lines

def train_f(model, optimizer, x_init, epoch):

x = x_init

all_x = []

losses = []

for i in range(epoch):

all_x.append(copy.deepcopy(x.numpy())) # 浅拷贝 改为 深拷贝, 否则List的原值会被改变。 Edit by David 2022.12.4.

loss = model(x)

losses.append(loss)

model.backward()

optimizer.step()

x = model.params['x']

return torch.Tensor(np.array(all_x)), losses

# 构建6个模型,分别配备不同的优化器

model1 = OptimizedFunction3D()

opt_gd = SimpleBatchGD(init_lr=0.95, model=model1)

model2 = OptimizedFunction3D()

opt_adagrad = Adagrad(init_lr=1.5, model=model2, epsilon=1e-7)

model3 = OptimizedFunction3D()

opt_rmsprop = RMSprop(init_lr=0.05, model=model3, beta=0.9, epsilon=1e-7)

model4 = OptimizedFunction3D()

opt_momentum = Momentum(init_lr=0.1, model=model4, rho=0.9)

model5 = OptimizedFunction3D()

opt_adam = Adam(init_lr=0.3, model=model5, beta1=0.9, beta2=0.99, epsilon=1e-7)

model6 = OptimizedFunction3D()

opt_Nesterov = Nesterov(init_lr=0.1, model=model6, rho=0.9) # 将 model4 改为 model6

models = [model1, model2, model3, model4, model5, model6]

opts = [opt_gd, opt_adagrad, opt_rmsprop, opt_momentum, opt_adam, opt_Nesterov]

x_all_opts = []

z_all_opts = []

# 使用不同优化器训练

for model, opt in zip(models, opts):

x_init = torch.FloatTensor([-7, 2])

x_one_opt, z_one_opt = train_f(model, opt, x_init, 100) # epoch

# 保存参数值

x_all_opts.append(x_one_opt.numpy())

z_all_opts.append(np.squeeze(z_one_opt))

# 使用numpy.meshgrid生成x1,x2矩阵,矩阵的每一行为[-3, 3],以0.1为间隔的数值

x1 = np.arange(-10, 10, 0.01)

x2 = np.arange(-5, 5, 0.01)

x1, x2 = np.meshgrid(x1, x2)

init_x = torch.Tensor(np.array([x1, x2]))

model = OptimizedFunction3D()

# 绘制 f_3d函数 的 三维图像

fig = plt.figure()

ax = plt.axes(projection='3d')

X = init_x[0].numpy()

Y = init_x[1].numpy()

Z = model(init_x).numpy() # 改为 model(init_x).numpy() David 2022.12.4

surf = ax.plot_surface(X, Y, Z, edgecolor='grey', cmap=cm.coolwarm)

# fig.colorbar(surf, shrink=0.5, aspect=1)

# ax.set_zlim(-3, 2)

ax.set_xlabel('x1')

ax.set_ylabel('x2')

ax.set_zlabel('f(x1,x2)')

labels = ['SGD', 'AdaGrad', 'RMSprop', 'Momentum', 'Adam', 'Nesterov']

colors = ['#8B0000', '#0000FF', '#000000', '#008B00', '#FF0000']

animator = Visualization3D(*x_all_opts, z_values=z_all_opts, labels=labels, colors=colors, fig=fig, ax=ax)

ax.legend(loc='upper right')

plt.show()

# animator.save('teaser' + '.gif', writer='imagemagick',fps=10) # 效果不好,估计被挡住了…… 有待进一步提高 Edit by David 2022.12.4图像结果:

3.复现CS231经典动画

结合3D动画,用自己的语言,从轨迹、速度等多个角度讲解各个算法优缺点

NNDL实验 优化算法3D轨迹 复现cs231经典动画_深度学习 优化算法 动画展示-CSDN博客

Animations that may help your intuitions about the learning process dynamics.?

Left: Contours of a loss surface and time evolution of different optimization algorithms. Notice the "overshooting" behavior of momentum-based methods, which make the optimization look like a ball rolling down the hill.?

![]()

import torch

import numpy as np

import copy

from matplotlib import pyplot as plt

from matplotlib import animation

from itertools import zip_longest

from matplotlib import cm

class Op(object):

def __init__(self):

pass

def __call__(self, inputs):

return self.forward(inputs)

# 输入:张量inputs

# 输出:张量outputs

def forward(self, inputs):

# return outputs

raise NotImplementedError

# 输入:最终输出对outputs的梯度outputs_grads

# 输出:最终输出对inputs的梯度inputs_grads

def backward(self, outputs_grads):

# return inputs_grads

raise NotImplementedError

class Optimizer(object): # 优化器基类

def __init__(self, init_lr, model):

"""

优化器类初始化

"""

# 初始化学习率,用于参数更新的计算

self.init_lr = init_lr

# 指定优化器需要优化的模型

self.model = model

def step(self):

"""

定义每次迭代如何更新参数

"""

pass

class SimpleBatchGD(Optimizer):

def __init__(self, init_lr, model):

super(SimpleBatchGD, self).__init__(init_lr=init_lr, model=model)

def step(self):

# 参数更新

if isinstance(self.model.params, dict):

for key in self.model.params.keys():

self.model.params[key] = self.model.params[key] - self.init_lr * self.model.grads[key]

class Adagrad(Optimizer):

def __init__(self, init_lr, model, epsilon):

"""

Adagrad 优化器初始化

输入:

- init_lr: 初始学习率 - model:模型,model.params存储模型参数值 - epsilon:保持数值稳定性而设置的非常小的常数

"""

super(Adagrad, self).__init__(init_lr=init_lr, model=model)

self.G = {}

for key in self.model.params.keys():

self.G[key] = 0

self.epsilon = epsilon

def adagrad(self, x, gradient_x, G, init_lr):

"""

adagrad算法更新参数,G为参数梯度平方的累计值。

"""

G += gradient_x ** 2

x -= init_lr / torch.sqrt(G + self.epsilon) * gradient_x

return x, G

def step(self):

"""

参数更新

"""

for key in self.model.params.keys():

self.model.params[key], self.G[key] = self.adagrad(self.model.params[key],

self.model.grads[key],

self.G[key],

self.init_lr)

class RMSprop(Optimizer):

def __init__(self, init_lr, model, beta, epsilon):

"""

RMSprop优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- beta:衰减率

- epsilon:保持数值稳定性而设置的常数

"""

super(RMSprop, self).__init__(init_lr=init_lr, model=model)

self.G = {}

for key in self.model.params.keys():

self.G[key] = 0

self.beta = beta

self.epsilon = epsilon

def rmsprop(self, x, gradient_x, G, init_lr):

"""

rmsprop算法更新参数,G为迭代梯度平方的加权移动平均

"""

G = self.beta * G + (1 - self.beta) * gradient_x ** 2

x -= init_lr / torch.sqrt(G + self.epsilon) * gradient_x

return x, G

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.G[key] = self.rmsprop(self.model.params[key],

self.model.grads[key],

self.G[key],

self.init_lr)

class Momentum(Optimizer):

def __init__(self, init_lr, model, rho):

"""

Momentum优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- rho:动量因子

"""

super(Momentum, self).__init__(init_lr=init_lr, model=model)

self.delta_x = {}

for key in self.model.params.keys():

self.delta_x[key] = 0

self.rho = rho

def momentum(self, x, gradient_x, delta_x, init_lr):

"""

momentum算法更新参数,delta_x为梯度的加权移动平均

"""

delta_x = self.rho * delta_x - init_lr * gradient_x

x += delta_x

return x, delta_x

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.delta_x[key] = self.momentum(self.model.params[key],

self.model.grads[key],

self.delta_x[key],

self.init_lr)

class Nesterov(Optimizer):

def __init__(self, init_lr, model, rho):

"""

Nesterov优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- rho:动量因子

"""

super(Nesterov, self).__init__(init_lr=init_lr, model=model)

self.delta_x = {}

for key in self.model.params.keys():

self.delta_x[key] = 0

self.rho = rho

def nesterov(self, x, gradient_x, delta_x, init_lr):

"""

Nesterov算法更新参数,delta_x为梯度的加权移动平均

"""

delta_x_prev = delta_x

delta_x = self.rho * delta_x - init_lr * gradient_x

x += -self.rho * delta_x_prev + (1 + self.rho) * delta_x

return x, delta_x

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.delta_x[key] = self.nesterov(self.model.params[key],

self.model.grads[key],

self.delta_x[key],

self.init_lr)

class Adam(Optimizer):

def __init__(self, init_lr, model, beta1, beta2, epsilon):

"""

Adam优化器初始化

输入:

- init_lr:初始学习率

- model:模型,model.params存储模型参数值

- beta1, beta2:移动平均的衰减率

- epsilon:保持数值稳定性而设置的常数

"""

super(Adam, self).__init__(init_lr=init_lr, model=model)

self.beta1 = beta1

self.beta2 = beta2

self.epsilon = epsilon

self.M, self.G = {}, {}

for key in self.model.params.keys():

self.M[key] = 0

self.G[key] = 0

self.t = 1

def adam(self, x, gradient_x, G, M, t, init_lr):

"""

adam算法更新参数

输入:

- x:参数

- G:梯度平方的加权移动平均

- M:梯度的加权移动平均

- t:迭代次数

- init_lr:初始学习率

"""

M = self.beta1 * M + (1 - self.beta1) * gradient_x

G = self.beta2 * G + (1 - self.beta2) * gradient_x ** 2

M_hat = M / (1 - self.beta1 ** t)

G_hat = G / (1 - self.beta2 ** t)

t += 1

x -= init_lr / torch.sqrt(G_hat + self.epsilon) * M_hat

return x, G, M, t

def step(self):

"""参数更新"""

for key in self.model.params.keys():

self.model.params[key], self.G[key], self.M[key], self.t = self.adam(self.model.params[key],

self.model.grads[key],

self.G[key],

self.M[key],

self.t,

self.init_lr)

class OptimizedFunction3D(Op):

def __init__(self):

super(OptimizedFunction3D, self).__init__()

self.params = {'x': 0}

self.grads = {'x': 0}

def forward(self, x):

self.params['x'] = x

return - x[0] * x[0] / 2 + x[1] * x[1] / 1 # x[0] ** 2 + x[1] ** 2 + x[1] ** 3 + x[0] * x[1]

def backward(self):

x = self.params['x']

gradient1 = - 2 * x[0] / 2

gradient2 = 2 * x[1] / 1

grad1 = torch.Tensor([gradient1])

grad2 = torch.Tensor([gradient2])

self.grads['x'] = torch.cat([grad1, grad2])

class Visualization3D(animation.FuncAnimation):

""" 绘制动态图像,可视化参数更新轨迹 """

def __init__(self, *xy_values, z_values, labels=[], colors=[], fig, ax, interval=100, blit=True, **kwargs):

"""

初始化3d可视化类

输入:

xy_values:三维中x,y维度的值

z_values:三维中z维度的值

labels:每个参数更新轨迹的标签

colors:每个轨迹的颜色

interval:帧之间的延迟(以毫秒为单位)

blit:是否优化绘图

"""

self.fig = fig

self.ax = ax

self.xy_values = xy_values

self.z_values = z_values

frames = max(xy_value.shape[0] for xy_value in xy_values)

self.lines = [ax.plot([], [], [], label=label, color=color, lw=2)[0]

for _, label, color in zip_longest(xy_values, labels, colors)]

self.points = [ax.plot([], [], [], color=color, markeredgewidth=1, markeredgecolor='black', marker='o')[0]

for _, color in zip_longest(xy_values, colors)]

# print(self.lines)

super(Visualization3D, self).__init__(fig, self.animate, init_func=self.init_animation, frames=frames,

interval=interval, blit=blit, **kwargs)

def init_animation(self):

# 数值初始化

for line in self.lines:

line.set_data_3d([], [], [])

for point in self.points:

point.set_data_3d([], [], [])

return self.points + self.lines

def animate(self, i):

# 将x,y,z三个数据传入,绘制三维图像

for line, xy_value, z_value in zip(self.lines, self.xy_values, self.z_values):

line.set_data_3d(xy_value[:i, 0], xy_value[:i, 1], z_value[:i])

for point, xy_value, z_value in zip(self.points, self.xy_values, self.z_values):

point.set_data_3d(xy_value[i, 0], xy_value[i, 1], z_value[i])

return self.points + self.lines

def train_f(model, optimizer, x_init, epoch):

x = x_init

all_x = []

losses = []

for i in range(epoch):

all_x.append(copy.deepcopy(x.numpy())) # 浅拷贝 改为 深拷贝, 否则List的原值会被改变。 Edit by David 2022.12.4.

loss = model(x)

losses.append(loss)

model.backward()

optimizer.step()

x = model.params['x']

return torch.Tensor(np.array(all_x)), losses

# 构建5个模型,分别配备不同的优化器

model1 = OptimizedFunction3D()

opt_gd = SimpleBatchGD(init_lr=0.05, model=model1)

model2 = OptimizedFunction3D()

opt_adagrad = Adagrad(init_lr=0.05, model=model2, epsilon=1e-7)

model3 = OptimizedFunction3D()

opt_rmsprop = RMSprop(init_lr=0.05, model=model3, beta=0.9, epsilon=1e-7)

model4 = OptimizedFunction3D()

opt_momentum = Momentum(init_lr=0.05, model=model4, rho=0.9)

model5 = OptimizedFunction3D()

opt_adam = Adam(init_lr=0.05, model=model5, beta1=0.9, beta2=0.99, epsilon=1e-7)

model6 = OptimizedFunction3D()

opt_Nesterov = Nesterov(init_lr=0.1, model=model6, rho=0.9)

models = [model1, model2, model3, model4, model5, model6]

opts = [opt_gd, opt_adagrad, opt_rmsprop, opt_momentum, opt_adam, opt_Nesterov]

x_all_opts = []

z_all_opts = []

# 使用不同优化器训练

for model, opt in zip(models, opts):

x_init = torch.FloatTensor([0.00001, 0.5])

x_one_opt, z_one_opt = train_f(model, opt, x_init, 100) # epoch

# 保存参数值

x_all_opts.append(x_one_opt.numpy())

z_all_opts.append(np.squeeze(z_one_opt))

# 使用numpy.meshgrid生成x1,x2矩阵,矩阵的每一行为[-3, 3],以0.1为间隔的数值

x1 = np.arange(-1, 2, 0.01)

x2 = np.arange(-1, 1, 0.05)

x1, x2 = np.meshgrid(x1, x2)

init_x = torch.Tensor(np.array([x1, x2]))

model = OptimizedFunction3D()

# 绘制 f_3d函数 的 三维图像

fig = plt.figure()

ax = plt.axes(projection='3d')

X = init_x[0].numpy()

Y = init_x[1].numpy()

Z = model(init_x).numpy() # 改为 model(init_x).numpy() David 2022.12.4

surf = ax.plot_surface(X, Y, Z, edgecolor='grey', cmap=cm.coolwarm)

# fig.colorbar(surf, shrink=0.5, aspect=1)

ax.set_zlim(-3, 2)

ax.set_xlabel('x1')

ax.set_ylabel('x2')

ax.set_zlabel('f(x1,x2)')

labels = ['SGD', 'AdaGrad', 'RMSprop', 'Momentum', 'Adam', 'Nesterov']

colors = ['#8B0000', '#0000FF', '#000000', '#008B00', '#FF0000']

animator = Visualization3D(*x_all_opts, z_values=z_all_opts, labels=labels, colors=colors, fig=fig, ax=ax)

ax.legend(loc='upper right')

plt.show()

# animator.save('teaser' + '.gif', writer='imagemagick',fps=10) # 效果不好,估计被挡住了…… 有待进一步提高 Edit by David 2022.12.4

# save不好用,不费劲了,安装个软件做gif https://pc.qq.com/detail/13/detail_23913.html

4. 结合3D动画,用自己的语言,从轨迹、速度等多个角度讲解各个算法优缺点

SGD

SGD较于其他几个算法,速度相对较慢,会呈现“之”字型的轨迹,并且在cs231经典动画中,SGD出现了陷入局部最小值,出不来的情况。所以根据动画可以看出SGD的缺点有:

(1)容易陷入局部最优

(2)速度相对较慢且需要调整学习率

AdaGrad

可以看出,AdaGrad图中的轨迹图都是刚开始速度明显大于RMSprop和SGD算法的,偶尔比Momentum和Nesterov还要快,但是随着时间的增长,AdaGrad会成为图中速度最慢的算法。方向上,该算法的方向一直都很准确,并且明显解决了SGD的“之”字型问题,收敛稳定。相较于SGD算法,AdaGrad的优点:

(1)自适应算法:AdaGrad算法根据每个参数的历史梯度信息来自适应地调整学习率,使得梯度不会太大或太小。

(2)“之”字形的变动程度衰减,呈现稳定的向最优点收敛

缺点:

学习率衰减过快,可能发生早停现象:随着训练的进行,AdaGrad会累积历史梯度的平方和,导致学习率不断减小。在训练后期,学习率可能会变得非常小,甚至接近于零,导致训练过早停止。

RMSprop

RMSprop的轨迹图,速度上很稳定,在前期比AdaGrad要慢,但是后期AdaGrad很慢的时候,RMSprop依然稳定前进。在轨迹方向上,基本和AdaGrad是一样的。所以相较于AdaGrad而言,RMSprop在它的基础上进行改进,优点为:

收敛速度快解决了AdaGrad算法的早停问题: 引入了衰减率,不会一直累积梯度平方,而是通过梯度平方的指数衰减移动平均来调整学习率,解决了AdaGrad的早衰问题。

Momentum

Momentum算法在速度上,要明显快于前几个函数,跟Nesterov差不多,但是在方向上,Momentum算法每次都是去错的方向转几次,然后才能修正过来。所以Momentum的优点为:很快的收敛速度,特别是对于类似鞍点的问题,由于动量维持了运动,能够更有效地收敛至局部最小值或平坦区域。但是方向要相对差些,之前的动量仍然会对下一次的下降造成影响,导致Momentum其实有一点大幅度的“之”字型的轨迹。

Nesterov

Nesterov算法的方向和速度效果都是很好的,速度上,它是最快的;方向上,轨迹正确性要好于Momentum,但是仍然要比AdaGrad、RMSprop要差些。Nesterov是对Momentum进行的改进,不仅仅根据当前梯度调整位置,而是根据当前动量在预期的未来位置计算梯度。它的优点为速度快且轨迹呈现出更加平滑、更有方向性的路径朝向最优点。

Adam

根据3D轨迹图:Adam算法的轨迹为稳定,快速的向最小值收敛,就速度和方向的正确性、稳定性而言,都是居中。所以Adam算法的优点就是结合了调整学习率的算法:RMSprop和梯度估计修正算法:Momentum二者的优点:稳定、快速,实用性较高。?

?

这是我的另一篇博客总结的优化算法,需要期末复习的同学可以点击链接:

动图我一直贴不上去,考完试了回来研究研究,怎么才能贴上动图。

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。 如若内容造成侵权/违法违规/事实不符,请联系我的编程经验分享网邮箱:chenni525@qq.com进行投诉反馈,一经查实,立即删除!

- Python教程

- 深入理解 MySQL 中的 HAVING 关键字和聚合函数

- Qt之QChar编码(1)

- MyBatis入门基础篇

- 用Python脚本实现FFmpeg批量转换

- 【自然语言处理】【深度学习】文本向量化、one-hot、word embedding编码

- 三层架构——工业控制领域简单理解

- 数据警务技术专业毕业设计一体化平台-计算机毕业设计源码64192

- 网络管理员推荐的网络监控软件-OpManager

- 【用pandas,写入内容到excel工作表的问题】

- 基于SSH的酒店管理系统的设计与实现 (含源码+sql+视频导入教程+文档+PPT)

- 设计模式——备忘录模式

- ESC云服务器使用

- 面试题:进程与线程的关系和区别到底是什么?

- 学习python仅此一篇就够了(python容器:列表)