Training YOLOv8 on Your Own Dataset

1. Creating the Dataset

- Environment:

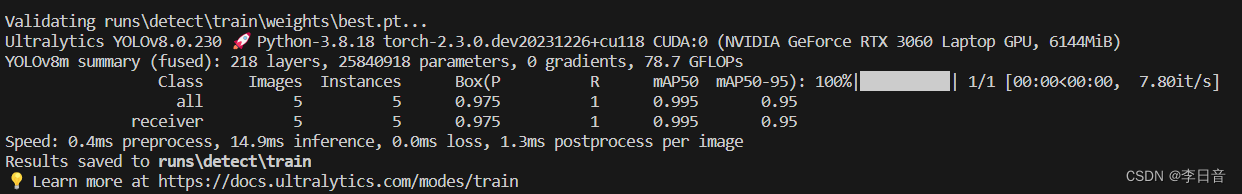

Ultralytics YOLOv8.0.230 🚀 Python-3.8.18 torch-2.3.0.dev20231226+cu118 CUDA:0 (NVIDIA GeForce RTX 3060 Laptop GPU, 6144MiB)

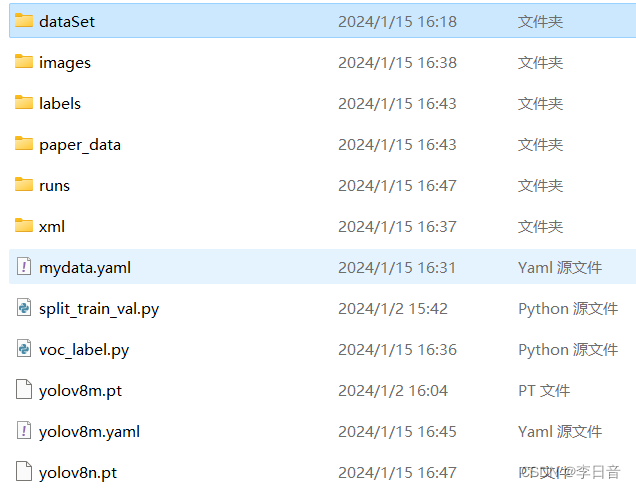

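File structure

- mydata
  - dataSet: holds the auto-generated train.txt, val.txt, test.txt and trainval.txt, which list the names of the training, validation and test images
  - images: the images to train on
  - labels: the label files (written by the annotation tool / by voc_label.py)
  - xml: the XML annotation file of each image
  - run: training results
  - split_train_val.py: script that splits the dataset
  - voc_label.py: converts the annotations to yolo_txt format for YOLO training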
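The folder skeleton above can be created in one go. Below is a minimal sketch. The helper name `make_layout` is hypothetical. The folder names come from the layout, and `run` is left out because it holds training output.

```python
from pathlib import Path
from typing import List

# Data folders from the layout above ("mydata" is the project root)
SUBDIRS = ["dataSet", "images", "labels", "xml"]


def make_layout(root: str = "mydata") -> List[Path]:
    """Create the mydata/ skeleton; folders that already exist are left untouched."""
    created = []
    for name in SUBDIRS:
        p = Path(root) / name
        p.mkdir(parents=True, exist_ok=True)
        created.append(p)
    return created
```

Calling `make_layout()` from the parent folder is safe to repeat, because `exist_ok=True` skips folders that are already there.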

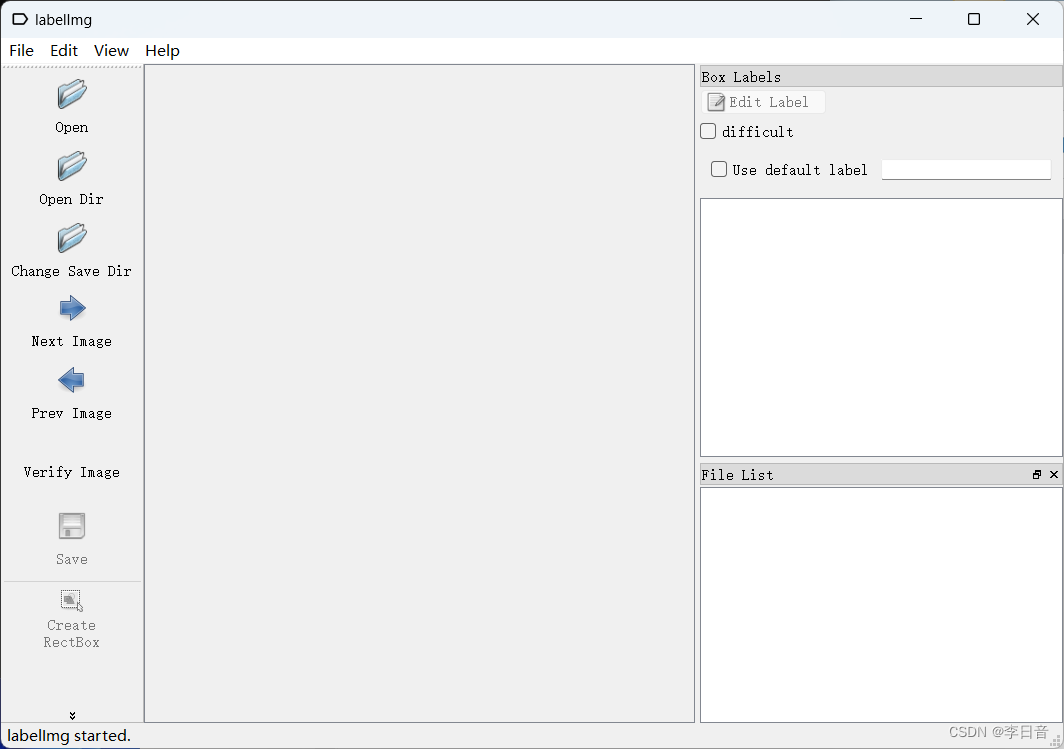

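Dataset annotation

Annotate the images with the labelImg tool, which is easy to find online.

- open dir: select the images folder
- change save dir: select the labels folder
- create rectbox: draw a bounding box
- save: save the annotation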
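The split script builds its lists from the file names in xml/. An image without a matching XML file is therefore silently left out. Below is a quick pre-check. It is a sketch that assumes the XML annotations have been placed in xml/, the folder both scripts read from. The helper name `find_unannotated` and the extension list are hypothetical choices.

```python
from pathlib import Path
from typing import List

# Image extensions to look for (an assumption; extend as needed)
IMAGE_EXTS = {".jpg", ".jpeg", ".png", ".bmp"}


def find_unannotated(images_dir: str, xml_dir: str) -> List[str]:
    """Return the stems of images that have no matching <stem>.xml annotation."""
    xml_stems = {p.stem for p in Path(xml_dir).glob("*.xml")}
    return sorted(
        p.stem
        for p in Path(images_dir).iterdir()
        if p.suffix.lower() in IMAGE_EXTS and p.stem not in xml_stems
    )
```

For example, `find_unannotated("images", "xml")` run inside mydata should return an empty list before you move on to splitting.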

Splitting the dataset with a script

The script split_train_val.py splits the data into training, validation and test sets:

```python
# coding:utf-8
import os
import random
import argparse

parser = argparse.ArgumentParser()
# Path of the XML files; adjust to your data (in the VOC layout they usually live in Annotations)
parser.add_argument('--xml_path', default='xml', type=str, help='input xml label path')
# Output folder for the split lists (in the VOC layout this is ImageSets/Main)
parser.add_argument('--txt_path', default='dataSet', type=str, help='output txt label path')
opt = parser.parse_args()

trainval_percent = 1.0  # share of images for train+val; 1.0 leaves test.txt empty
train_percent = 0.9     # share of train+val used for training; the rest goes to val
xmlfilepath = opt.xml_path
txtsavepath = opt.txt_path
# Only count .xml files so stray files are not treated as samples
total_xml = [f for f in os.listdir(xmlfilepath) if f.endswith('.xml')]
os.makedirs(txtsavepath, exist_ok=True)

num = len(total_xml)
list_index = range(num)
tv = int(num * trainval_percent)
tr = int(tv * train_percent)
trainval = random.sample(list_index, tv)
train = random.sample(trainval, tr)

with open(os.path.join(txtsavepath, 'trainval.txt'), 'w') as file_trainval, \
        open(os.path.join(txtsavepath, 'test.txt'), 'w') as file_test, \
        open(os.path.join(txtsavepath, 'train.txt'), 'w') as file_train, \
        open(os.path.join(txtsavepath, 'val.txt'), 'w') as file_val:
    for i in list_index:
        name = total_xml[i][:-4] + '\n'  # file name without the .xml extension
        if i in trainval:
            file_trainval.write(name)
            if i in train:
                file_train.write(name)
            else:
                file_val.write(name)
        else:
            file_test.write(name)
```

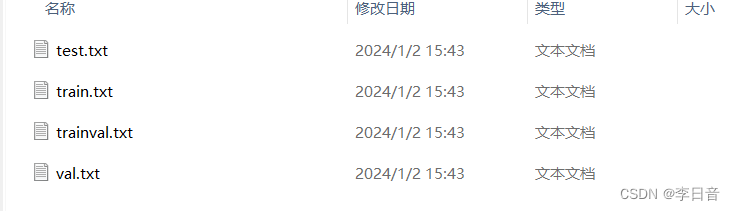

After the script runs, the following four txt files are generated in the dataSet folder:

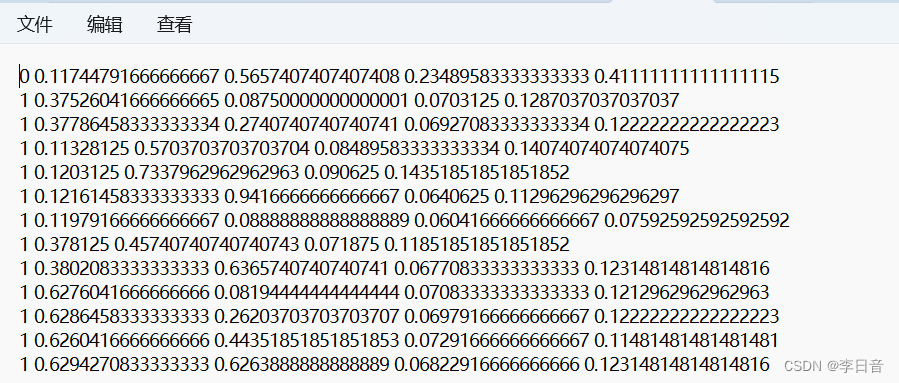
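The sizes of the four lists follow directly from `trainval_percent` and `train_percent`, with `int()` truncating at each step. The sketch below reproduces only that arithmetic. The function name `split_counts` is hypothetical. It shows why test.txt stays empty with the default `trainval_percent = 1.0`.

```python
from typing import Tuple


def split_counts(num: int, trainval_percent: float = 1.0,
                 train_percent: float = 0.9) -> Tuple[int, int, int]:
    """Return (train, val, test) sizes computed the same way as split_train_val.py."""
    tv = int(num * trainval_percent)  # images drawn into trainval
    tr = int(tv * train_percent)      # part of trainval used for training
    return tr, tv - tr, num - tv      # val = rest of trainval, test = everything else
```

For example, `split_counts(100)` gives `(90, 10, 0)`. To get a real test set you would lower `trainval_percent`: `split_counts(57, 0.9, 0.9)` gives `(45, 6, 6)`.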

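Converting the annotation format

The XML files produced by labelImg must be converted to the yolo_txt format that YOLOv8 trains on. The bbox information in each XML annotation is extracted into a txt file, one txt file per image, with one line per object in the form class, x_center, y_center, width, height.

Each line of a yolo_txt file looks like this, with the coordinates normalized to the range 0 to 1 by the image width and height:

`<class_id> <x_center> <y_center> <width> <height>`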
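Here is a worked example of the conversion for one box. The helper `voc_to_yolo` is a hypothetical sketch of the plain formula. It differs slightly from `convert()` in voc_label.py, which also subtracts 1 pixel from the box center (a leftover from darknet's original script).

```python
def voc_to_yolo(cls_id: int, img_w: int, img_h: int,
                xmin: float, ymin: float, xmax: float, ymax: float) -> str:
    """Format one VOC-style pixel box as a YOLO label line (normalized center and size)."""
    x_c = (xmin + xmax) / 2 / img_w   # box center, as a fraction of image width
    y_c = (ymin + ymax) / 2 / img_h   # box center, as a fraction of image height
    w = (xmax - xmin) / img_w         # box width, as a fraction of image width
    h = (ymax - ymin) / img_h         # box height, as a fraction of image height
    return f"{cls_id} {x_c:.6f} {y_c:.6f} {w:.6f} {h:.6f}"
```

Take a 640×480 image with a class-0 box from (100, 50) to (200, 150). Then `voc_to_yolo(0, 640, 480, 100, 50, 200, 150)` returns `"0 0.234375 0.208333 0.156250 0.208333"`.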

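Create voc_label.py. It generates the label files for the training, validation and test sets (these are used during training) and writes the image paths of each set to a txt file.

Problems at this step are almost always caused by wrong file paths, so check those first. The script expects to be run from the mydata folder. It reads the XML files from xml/ and the ID lists from dataSet/, and it writes to labels/ and paper_data/.

The code: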

```python
# -*- coding: utf-8 -*-
import os
import xml.etree.ElementTree as ET

sets = ['train', 'val', 'test']
classes = ["receiver", "laser"]  # change to your own classes
abs_path = os.getcwd()  # run this script from inside the mydata folder
print(abs_path)


def convert(size, box):
    """(img_w, img_h), (xmin, xmax, ymin, ymax) -> normalized (x_center, y_center, w, h)."""
    dw = 1. / size[0]
    dh = 1. / size[1]
    # The -1 is inherited from darknet's original voc_label.py (VOC pixel coordinates are 1-based)
    x = (box[0] + box[1]) / 2.0 - 1
    y = (box[2] + box[3]) / 2.0 - 1
    w = box[1] - box[0]
    h = box[3] - box[2]
    return x * dw, y * dh, w * dw, h * dh


def convert_annotation(image_id):
    with open('xml/%s.xml' % image_id, encoding='UTF-8') as in_file:
        root = ET.parse(in_file).getroot()
    size = root.find('size')
    w = int(size.find('width').text)
    h = int(size.find('height').text)
    with open('labels/%s.txt' % image_id, 'w') as out_file:
        for obj in root.iter('object'):
            # Some tools write <Difficult> or omit the tag; a missing tag counts as not difficult
            difficult = obj.find('difficult')
            if difficult is None:
                difficult = obj.find('Difficult')
            is_difficult = difficult is not None and int(difficult.text) == 1
            cls = obj.find('name').text
            if cls not in classes or is_difficult:
                continue
            cls_id = classes.index(cls)
            xmlbox = obj.find('bndbox')
            b1, b2, b3, b4 = (float(xmlbox.find(tag).text) for tag in ('xmin', 'xmax', 'ymin', 'ymax'))
            # Clamp boxes that extend past the image border
            b2 = min(b2, w)
            b4 = min(b4, h)
            bb = convert((w, h), (b1, b2, b3, b4))
            out_file.write(str(cls_id) + " " + " ".join(str(a) for a in bb) + '\n')


os.makedirs('labels', exist_ok=True)
os.makedirs('paper_data', exist_ok=True)  # the list files go here; open() fails if it is missing
for image_set in sets:
    with open('dataSet/%s.txt' % image_set) as f:
        image_ids = f.read().strip().split()
    with open('paper_data/%s.txt' % image_set, 'w') as list_file:
        for image_id in image_ids:
            list_file.write(abs_path + '/images/%s.jpg\n' % image_id)  # assumes .jpg images
            convert_annotation(image_id)
```
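The list files in paper_data/ contain only image paths, so YOLOv8 has to find each label file itself. To my understanding, Ultralytics does this with its `img2label_paths` helper. That helper replaces the last `images` directory in the path with `labels` and swaps the extension for `.txt`. This is why labels/ must sit next to images/.

The sketch below re-implements that convention for illustration. It is not the library's own code. It also adds a hypothetical `missing_labels` check that you can run on paper_data/train.txt after voc_label.py finishes.

```python
import os
from typing import List


def image_to_label_path(img_path: str) -> str:
    """Swap the last /images/ directory for /labels/ and the extension for .txt."""
    sa, sb = f"{os.sep}images{os.sep}", f"{os.sep}labels{os.sep}"
    return sb.join(img_path.rsplit(sa, 1)).rsplit(".", 1)[0] + ".txt"


def missing_labels(list_file: str) -> List[str]:
    """Return image paths in list_file whose image or label file does not exist."""
    missing = []
    with open(list_file, encoding="utf-8") as f:
        for line in f:
            img = line.strip()
            if not img:
                continue  # skip blank lines
            if not (os.path.isfile(img) and os.path.isfile(image_to_label_path(img))):
                missing.append(img)
    return missing
```

For example, `missing_labels("paper_data/train.txt")` should return an empty list once every listed image has its label file.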

2. Configuration Files

2.1 Dataset configuration

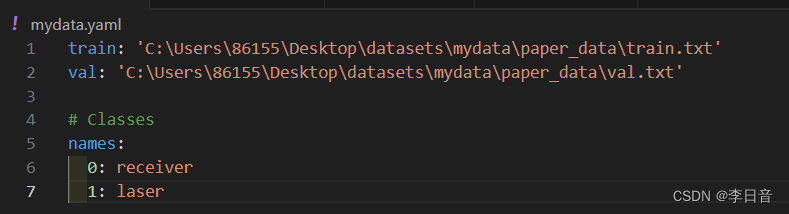

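Create a mydata.yaml file in the mydata folder. It points to the training and validation split files (train.txt and val.txt, generated by voc_label.py in paper_data/) and gives the number of classes and the list of class names.

Absolute paths are safest here: relative paths gave me an error. A likely cause is that Ultralytics resolves relative paths in the data yaml against the dataset root (the `path` key, or its `datasets_dir` setting) rather than the current working directory.

Contents of mydata.yaml: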

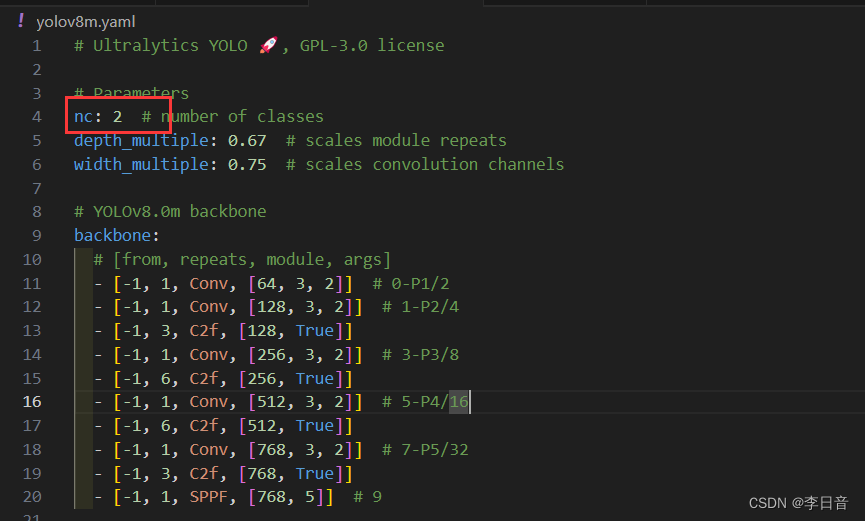
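Since absolute paths are recommended, it can help to generate mydata.yaml from a script rather than typing the paths by hand. The sketch below is a minimal example with a hypothetical helper `write_data_yaml`. It uses only the standard library and assumes the split lists are in mydata/paper_data/, where voc_label.py writes them. It emits the `train`, `val`, `nc` and `names` keys.

```python
from pathlib import Path
from typing import List


def write_data_yaml(root: str, names: List[str], out: str = "mydata.yaml") -> str:
    """Write a detection dataset yaml with absolute list-file paths; return its text."""
    root_path = Path(root).resolve()  # absolute path of the mydata folder
    lists = root_path / "paper_data"  # where voc_label.py writes train.txt / val.txt
    lines = [
        f"train: {lists / 'train.txt'}",
        f"val: {lists / 'val.txt'}",
        f"nc: {len(names)}",
        "names: [" + ", ".join(f"'{n}'" for n in names) + "]",
    ]
    text = "\n".join(lines) + "\n"
    (root_path / out).write_text(text, encoding="utf-8")
    return text
```

For the classes used in voc_label.py, `write_data_yaml("mydata", ["receiver", "laser"])` writes `nc: 2` and `names: ['receiver', 'laser']` after the two absolute paths.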

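2.2 Choosing a model

The model configuration files are in the ultralytics/models/v8/ directory (ultralytics/cfg/models/v8/ in newer releases). The s, m, l and x variants get progressively larger (there is also a smaller n variant), and training time grows with model size.

Assuming yolov8m.yaml, only one parameter needs changing: set nc to your number of classes (an integer), as shown below. This edit is optional, because during training Ultralytics overrides the nc in the model yaml with the class count from the dataset yaml.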

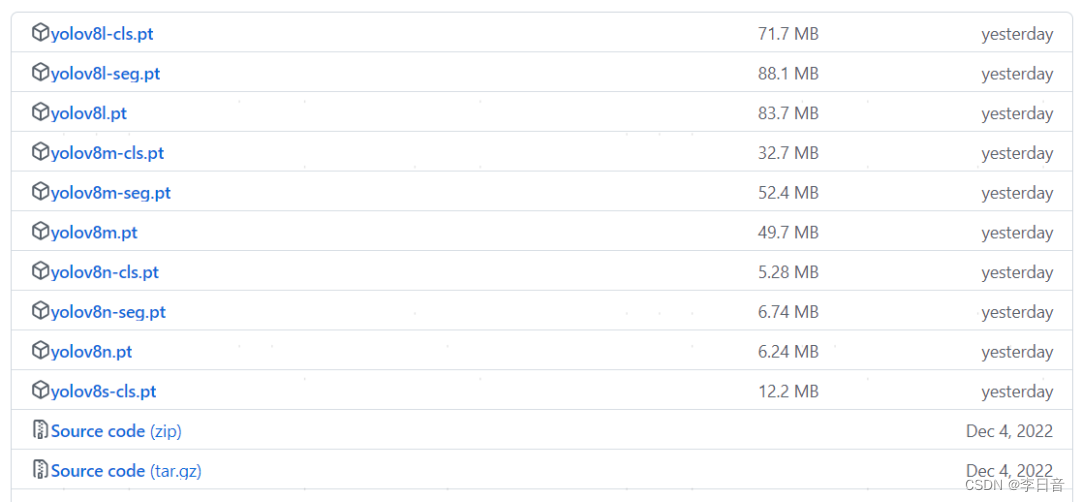

3. Training the Model

Download the pretrained weights for the chosen model (e.g. yolov8m.pt) from the Ultralytics GitHub releases page:

https://github.com/ultralytics/assets/releases

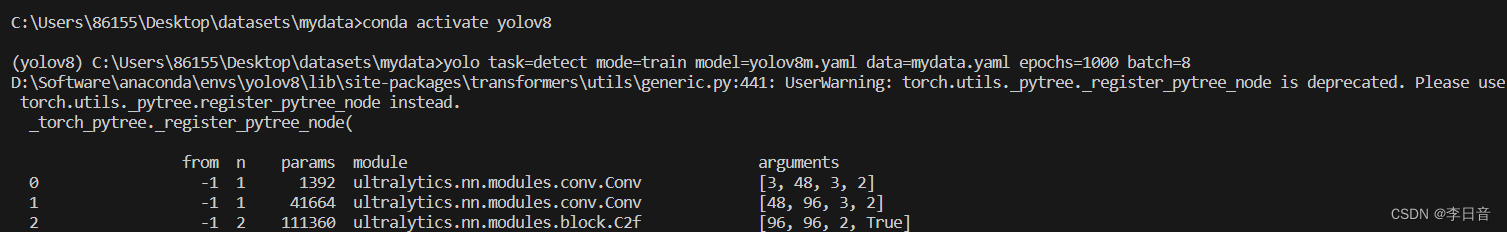

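Now training can begin. My GPU has only 6 GB of VRAM, so I used the m model with a batch size of just 8. The command:

```bash
yolo task=detect mode=train model=yolov8m.yaml data=mydata.yaml epochs=1000 batch=8
```

Note that model=yolov8m.yaml builds the network from scratch, so the downloaded weights are not used. To start from them, pass model=yolov8m.pt instead, or add pretrained=yolov8m.pt to build from the yaml and transfer the weights.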

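The parameters above are explained as follows:

- task: the task type; in 8.0.230 one of 'detect', 'segment', 'classify', 'pose'
- mode: whether to train, validate or predict: 'train', 'val', 'predict' (also 'export', 'track', 'benchmark')
- model: the YOLOv8 model configuration file, e.g. yolov8s.yaml, yolov8m.yaml, yolov8l.yaml, yolov8x.yaml (or a .pt weights file)
- data: the dataset configuration file created above
- epochs: how many times the whole training set is passed through during training; lower it if training takes too long on your GPU.
- batch: how many images are processed per forward/backward pass (the mini-batch size); lower it if you run out of GPU memory. Ultralytics accumulates gradients up to a nominal batch size of 64 (the nbs setting), so with batch=8 the weights are actually updated once every 8 batches.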
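The interaction between epochs, batch and gradient accumulation is easiest to see with numbers. The sketch below is illustrative only, and the function name is hypothetical. It assumes the last partial batch of an epoch is kept. It also assumes the accumulation rule `max(round(nbs / batch), 1)` with the default `nbs = 64`, which is my understanding of Ultralytics' trainer.

```python
import math
from typing import Tuple


def batch_schedule(num_train: int, batch: int, nbs: int = 64) -> Tuple[int, int]:
    """Return (batches per epoch, batches accumulated per optimizer step)."""
    batches = math.ceil(num_train / batch)   # forward/backward passes per epoch
    accumulate = max(round(nbs / batch), 1)  # gradients summed over this many batches
    return batches, accumulate
```

With 90 training images (a 100-image set split 0.9) and batch=8, `batch_schedule(90, 8)` gives `(12, 8)`. That is 12 passes per epoch, with an optimizer step every 8 batches. At batch=16 it gives `(6, 4)`.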

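The training progress looks like this:

Training stops early once the validation metrics have not improved for `patience` epochs, and the best model is saved as best.pt (by default under runs/detect/train*/weights/).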
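The same run can also be started from Python instead of the CLI. Below is a minimal sketch using the Ultralytics `YOLO` class. The wrapper function `train` and the constant `TRAIN_ARGS` are hypothetical names. `ultralytics` is imported inside the function so the snippet loads even where the package is not installed.

```python
from typing import Optional

# Same settings as the CLI command: data=mydata.yaml epochs=1000 batch=8
TRAIN_ARGS = {"data": "mydata.yaml", "epochs": 1000, "batch": 8}


def train(model_cfg: str = "yolov8m.yaml", weights: Optional[str] = None):
    """Build the model from its yaml, optionally transfer pretrained weights, then train."""
    from ultralytics import YOLO  # requires `pip install ultralytics`

    model = YOLO(model_cfg)
    if weights is not None:
        model = model.load(weights)  # e.g. the downloaded yolov8m.pt
    return model.train(**TRAIN_ARGS)
```

`train()` matches the command above. `train(weights="yolov8m.pt")` corresponds to adding pretrained=yolov8m.pt.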

4. Testing

You can test directly with the computer's webcam. The code:

```python
import time

import cv2
from ultralytics import YOLO

# Load the model
model = YOLO('path/to/best.pt')  # load the custom-trained model

# Video path, used by the video-file example at the end
# video_path = 'test.mp4'
video_path = ""

cap = cv2.VideoCapture(0)  # change the index to switch between cameras

# Loop over frames
while cap.isOpened():
    success, frame = cap.read()
    if success:
        start = time.perf_counter()
        # Run YOLOv8 inference on the frame
        results = model(frame)
        end = time.perf_counter()
        fps = 1 / (end - start)  # inference-only FPS

        # Visualize the results and the FPS on the frame
        annotated_frame = results[0].plot()
        cv2.putText(annotated_frame, f"FPS: {fps:.1f}", (10, 30),
                    cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 255, 0), 2)

        # Display the annotated frame
        cv2.imshow("YOLOv8 Inference", annotated_frame)

        # Break the loop if 'q' is pressed
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break
    else:
        # Break the loop if no frame could be read (e.g. end of a video)
        break

# Release the video capture object and close the display windows
cap.release()
cv2.destroyAllWindows()

# To detect (and track) objects in a video file instead; only boxes with confidence above 0.75 are kept
# results = model.track(source=video_path, conf=0.75, show=True, save=True)
```
