diffusers-Image-to-image

Published: December 19, 2023

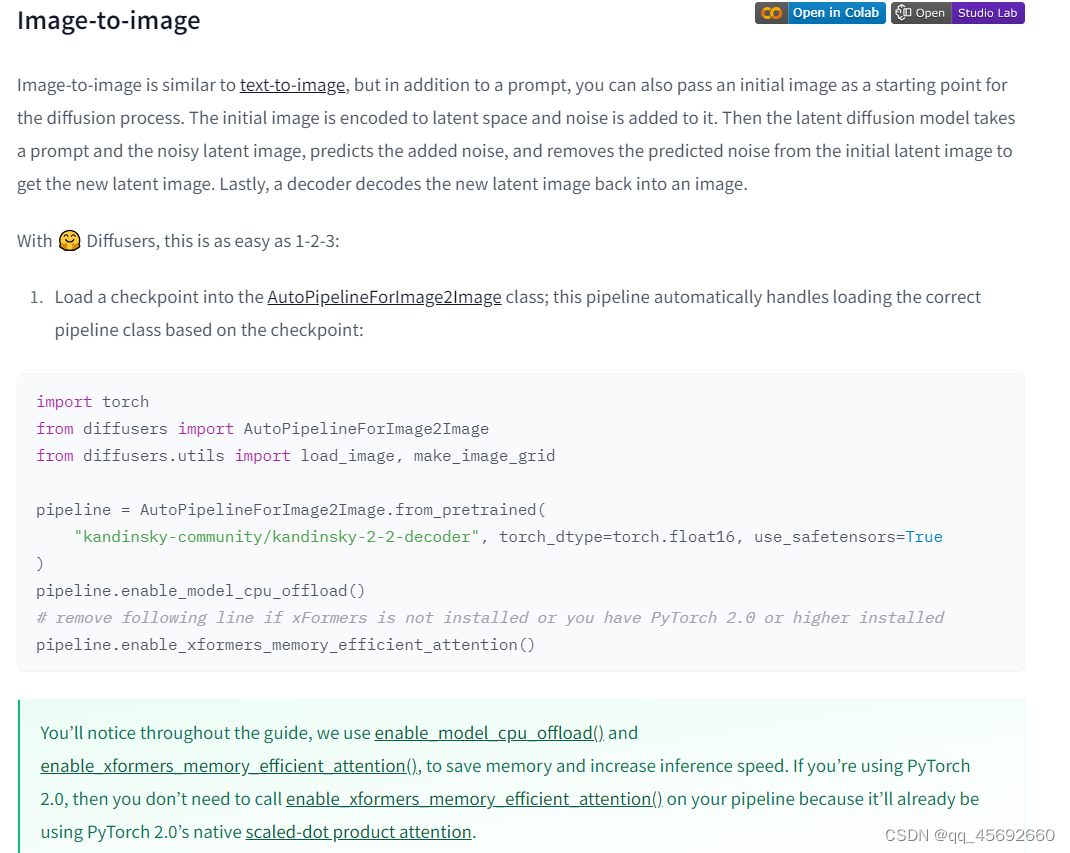

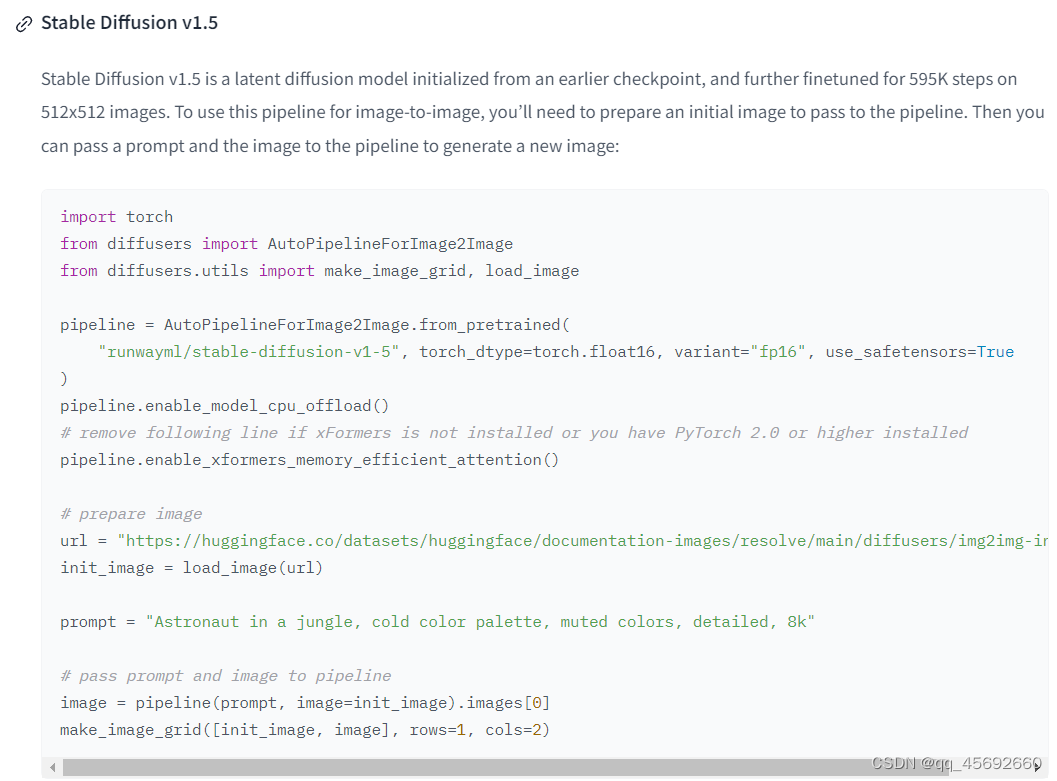

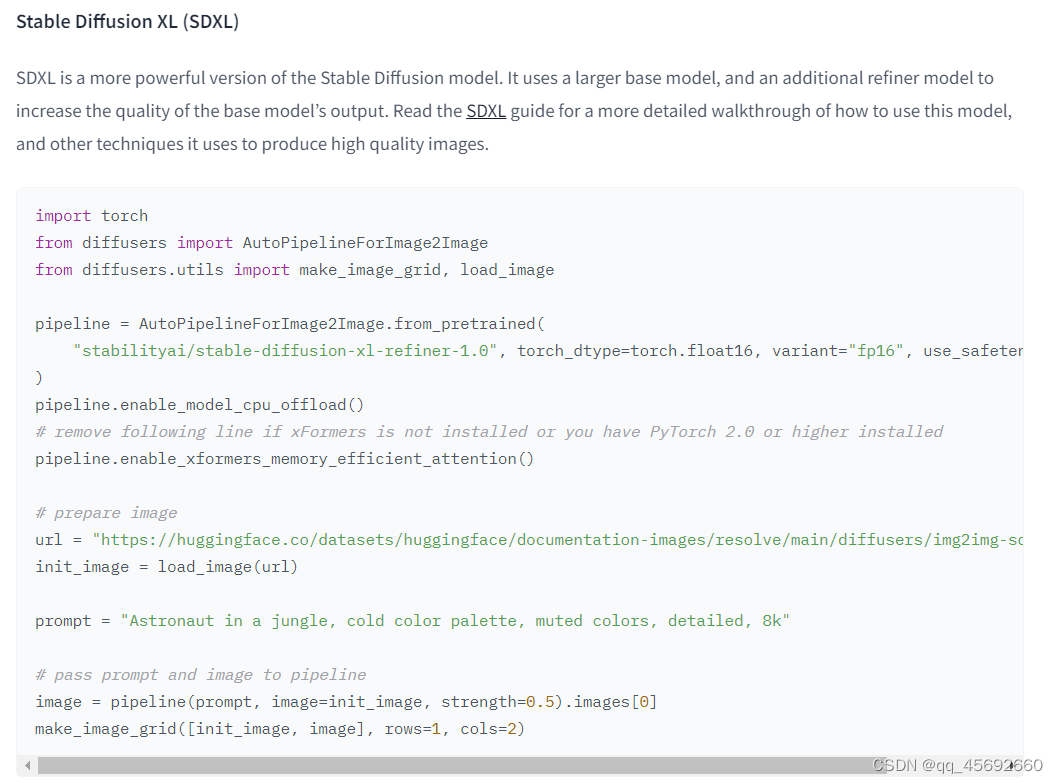

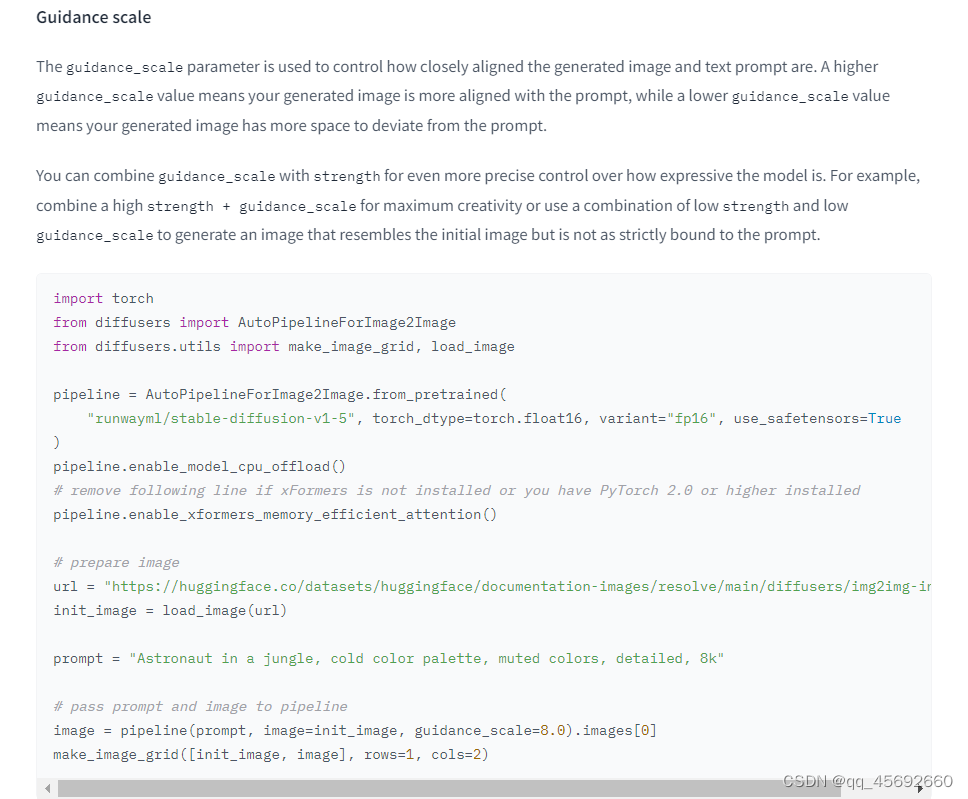

    import torch
    from diffusers import AutoPipelineForImage2Image
    from diffusers.utils import make_image_grid, load_image

    pipeline = AutoPipelineForImage2Image.from_pretrained(
        "runwayml/stable-diffusion-v1-5", torch_dtype=torch.float16, variant="fp16", use_safetensors=True
    )
    pipeline.enable_model_cpu_offload()
    # remove following line if xFormers is not installed or you have PyTorch 2.0 or higher installed
    pipeline.enable_xformers_memory_efficient_attention()

    # prepare image
    url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/img2img-init.png"
    init_image = load_image(url)

    prompt = "Astronaut in a jungle, cold color palette, muted colors, detailed, 8k"

    # pass prompt and image to pipeline
    image = pipeline(prompt, image=init_image, strength=0.8).images[0]
    make_image_grid([init_image, image], rows=1, cols=2)
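The call above passes `strength=0.8` without saying what it does. As far as I understand the Stable Diffusion image-to-image pipeline in diffusers, `strength` controls how much noise is added to the input image, and therefore how many of the scheduled denoising steps actually run. Below is a minimal sketch of that arithmetic in plain Python. The function name `img2img_denoising_steps` is made up for illustration, and the default of 50 inference steps is an assumption; treat it as an approximation of the library's behavior, not its actual code.

```python
def img2img_denoising_steps(strength: float, num_inference_steps: int = 50) -> int:
    """Approximate how many denoising steps run for a given strength.

    Hypothetical helper for illustration only; it mirrors the idea that
    roughly `num_inference_steps * strength` steps are executed.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    # Number of steps' worth of noise added to the input image.
    init_timestep = min(int(num_inference_steps * strength), num_inference_steps)
    # Steps skipped at the start of the schedule.
    t_start = max(num_inference_steps - init_timestep, 0)
    return num_inference_steps - t_start


# strength=0.8 as in the example above, with the assumed 50 steps
steps = img2img_denoising_steps(0.8)
```

Under these assumptions, a higher `strength` means more noise and more steps, so the result drifts further from the input image; a value close to 0 leaves the input almost unchanged.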

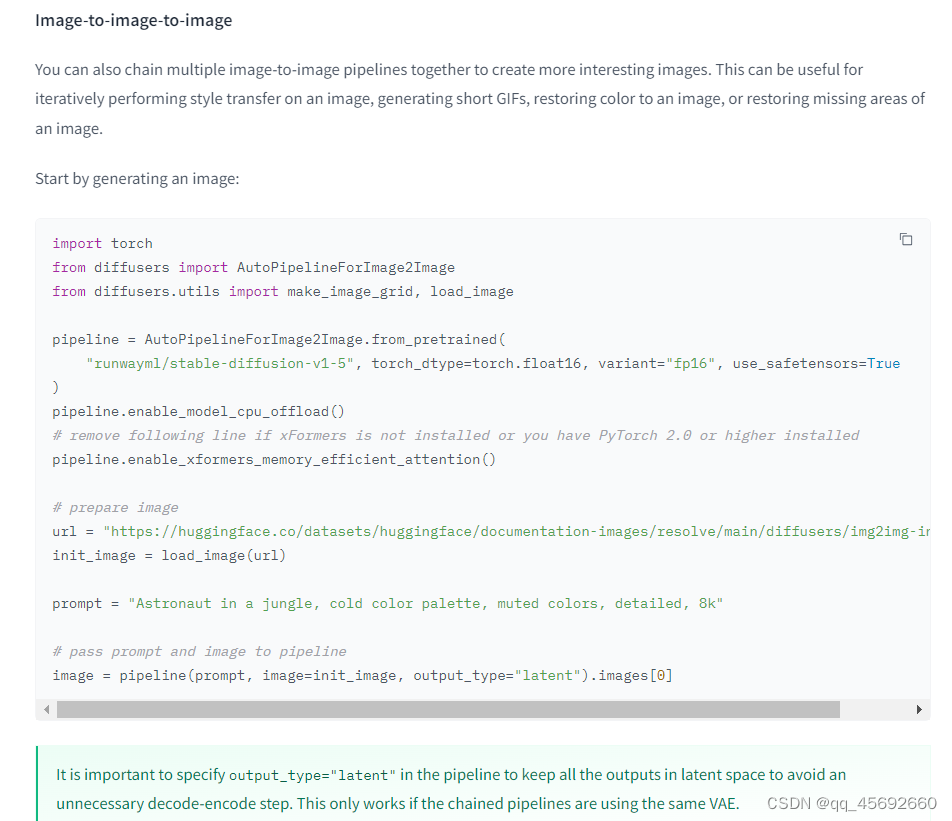

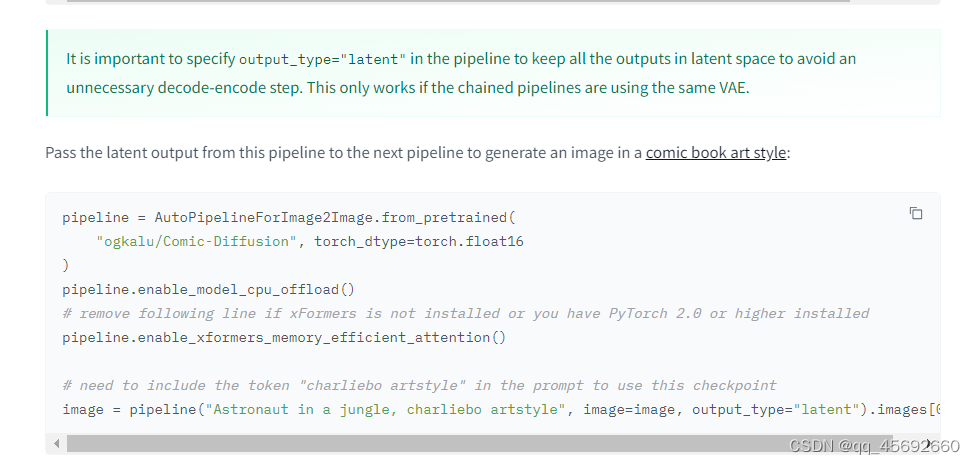

Now you can pass this generated image to the image-to-image pipeline:

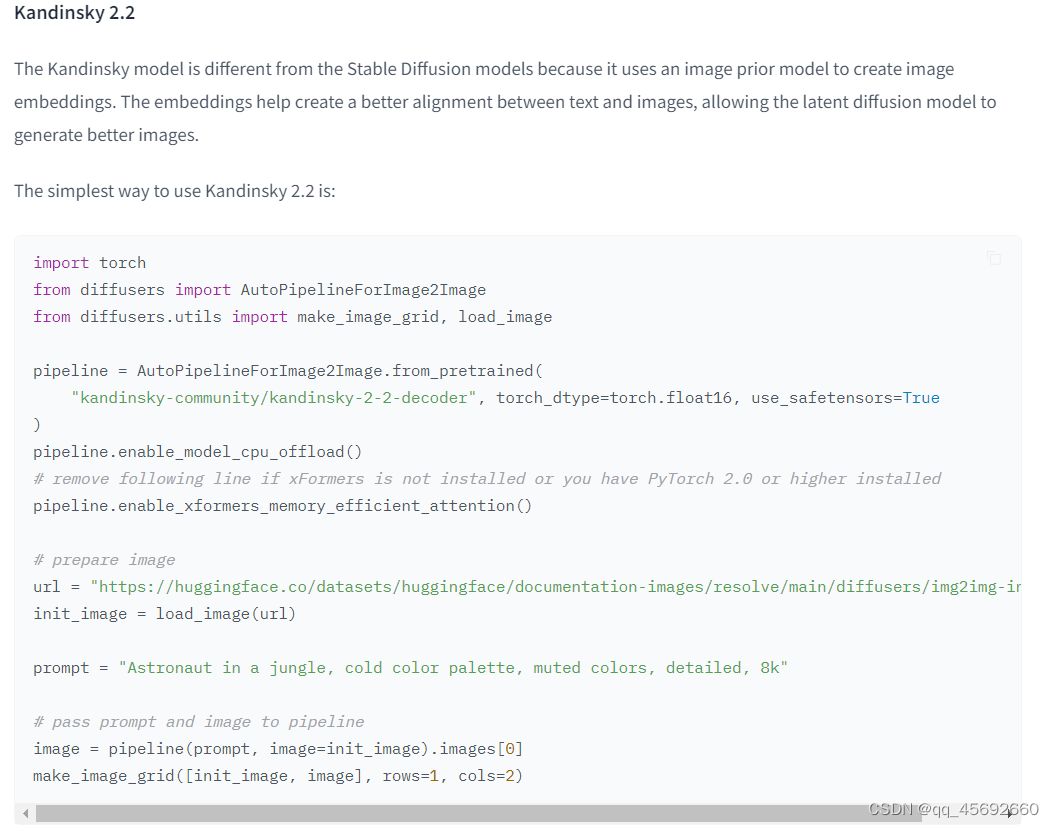

    import torch
    from diffusers import AutoPipelineForImage2Image
    from diffusers.utils import make_image_grid

    pipeline = AutoPipelineForImage2Image.from_pretrained(
        "kandinsky-community/kandinsky-2-2-decoder", torch_dtype=torch.float16, use_safetensors=True
    )
    pipeline.enable_model_cpu_offload()
    # remove following line if xFormers is not installed or you have PyTorch 2.0 or higher installed
    pipeline.enable_xformers_memory_efficient_attention()

    # `text2image` is the image produced by the preceding text-to-image step (not shown here)
    image2image = pipeline("Astronaut in a jungle, cold color palette, muted colors, detailed, 8k", image=text2image).images[0]
    make_image_grid([text2image, image2image], rows=1, cols=2)
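The snippet above uses `text2image` without showing where it comes from. One plausible way to produce it is a text-to-image pipeline run on the same prompt. The sketch below is an assumption, not the original author's code: the helper name `generate_text2image` is hypothetical, and the checkpoint is simply the Stable Diffusion v1.5 one from the first example.

```python
def generate_text2image(prompt: str):
    """Generate a starting image from a text prompt.

    Hypothetical helper, not from the original post. Assumes the same
    Stable Diffusion v1.5 checkpoint as the first example.
    """
    # Imported lazily so that defining this helper does not itself
    # import torch/diffusers or download any model weights.
    import torch
    from diffusers import AutoPipelineForText2Image

    pipeline = AutoPipelineForText2Image.from_pretrained(
        "runwayml/stable-diffusion-v1-5",
        torch_dtype=torch.float16,
        variant="fp16",
        use_safetensors=True,
    )
    pipeline.enable_model_cpu_offload()
    # The pipeline returns a list of PIL images; take the first one.
    return pipeline(prompt).images[0]
```

Calling `text2image = generate_text2image("Astronaut in a jungle, cold color palette, muted colors, detailed, 8k")` before the image-to-image step would then give that step an input to work on.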

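It starts to feel a bit like recursive nesting dolls: the output of one pipeline becomes the input of the next.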

Source: https://blog.csdn.net/qq_45692660/article/details/135073942

