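TensorRT: A Walkthrough of NVIDIA's Official Samples (Part 3)

Articles in this series

TensorRT: A Walkthrough of NVIDIA's Official Samples (Part 1)

TensorRT: A Walkthrough of NVIDIA's Official Samples (Part 2)

TensorRT: A Walkthrough of NVIDIA's Official Samples (Part 3)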


1. 04-BuildEngineByONNXParser: pyTorch-ONNX-TensorRT

This part uses the pyTorch-ONNX-TensorRT sample as the example. Run it with:

python3 main.py

#
# Copyright (c) 2021-2023, NVIDIA CORPORATION. All rights reserved.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
#

import os
from datetime import datetime as dt
from glob import glob

import calibrator
import cv2
import numpy as np
import tensorrt as trt
import torch as t
import torch.nn.functional as F
from cuda import cudart
from torch.autograd import Variable

np.random.seed(31193)
t.manual_seed(97)
t.cuda.manual_seed_all(97)
t.backends.cudnn.deterministic = True
nTrainBatchSize = 128
nHeight = 28
nWidth = 28
onnxFile = "./model.onnx"
trtFile = "./model.plan"
dataPath = os.path.dirname(os.path.realpath(__file__)) + "/../../00-MNISTData/"
trainFileList = sorted(glob(dataPath + "train/*.jpg"))
testFileList = sorted(glob(dataPath + "test/*.jpg"))
inferenceImage = dataPath + "8.png"

# for FP16 mode
bUseFP16Mode = False
# for INT8 model
bUseINT8Mode = False
nCalibration = 1
cacheFile = "./int8.cache"
calibrationDataPath = dataPath + "test/"

os.system("rm -rf ./*.onnx ./*.plan ./*.cache")
np.set_printoptions(precision=3, linewidth=200, suppress=True)
cudart.cudaDeviceSynchronize()

# Create network and train model in pyTorch ------------------------------------
class Net(t.nn.Module):

    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = t.nn.Conv2d(1, 32, (5, 5), padding=(2, 2), bias=True)
        self.conv2 = t.nn.Conv2d(32, 64, (5, 5), padding=(2, 2), bias=True)
        self.fc1 = t.nn.Linear(64 * 7 * 7, 1024, bias=True)
        self.fc2 = t.nn.Linear(1024, 10, bias=True)

    def forward(self, x):
        x = F.max_pool2d(F.relu(self.conv1(x)), (2, 2))
        x = F.max_pool2d(F.relu(self.conv2(x)), (2, 2))
        x = x.reshape(-1, 64 * 7 * 7)
        x = F.relu(self.fc1(x))
        y = self.fc2(x)
        z = F.softmax(y, dim=1)
        z = t.argmax(z, dim=1)
        return y, z

class MyData(t.utils.data.Dataset):

    def __init__(self, isTrain=True):
        if isTrain:
            self.data = trainFileList
        else:
            self.data = testFileList

    def __getitem__(self, index):
        imageName = self.data[index]
        data = cv2.imread(imageName, cv2.IMREAD_GRAYSCALE)
        label = np.zeros(10, dtype=np.float32)
        index = int(imageName[-7])
        label[index] = 1
        return t.from_numpy(data.reshape(1, nHeight, nWidth).astype(np.float32)), t.from_numpy(label)

    def __len__(self):
        return len(self.data)

model = Net().cuda()
ceLoss = t.nn.CrossEntropyLoss()
opt = t.optim.Adam(model.parameters(), lr=0.001)
trainDataset = MyData(True)
testDataset = MyData(False)
trainLoader = t.utils.data.DataLoader(dataset=trainDataset, batch_size=nTrainBatchSize, shuffle=True)
testLoader = t.utils.data.DataLoader(dataset=testDataset, batch_size=nTrainBatchSize, shuffle=True)

for epoch in range(10):
    for xTrain, yTrain in trainLoader:
        xTrain = Variable(xTrain).cuda()
        yTrain = Variable(yTrain).cuda()
        opt.zero_grad()
        y_, z = model(xTrain)
        loss = ceLoss(y_, yTrain)
        loss.backward()
        opt.step()

    with t.no_grad():
        acc = 0
        n = 0
        for xTest, yTest in testLoader:
            xTest = Variable(xTest).cuda()
            yTest = Variable(yTest).cuda()
            y_, z = model(xTest)
            acc += t.sum(z == t.matmul(yTest, t.Tensor([0, 1, 2, 3, 4, 5, 6, 7, 8, 9]).to("cuda:0"))).cpu().numpy()
            n += xTest.shape[0]
        print("%s, epoch %2d, loss = %f, test acc = %f" % (dt.now(), epoch + 1, loss.data, acc / n))

print("Succeeded building model in pyTorch!")

# Export model as ONNX file ----------------------------------------------------
t.onnx.export(model, t.randn(1, 1, nHeight, nWidth, device="cuda"), onnxFile, input_names=["x"], output_names=["y", "z"], do_constant_folding=True, verbose=True, keep_initializers_as_inputs=True, opset_version=12, dynamic_axes={"x": {0: "nBatchSize"}, "z": {0: "nBatchSize"}})

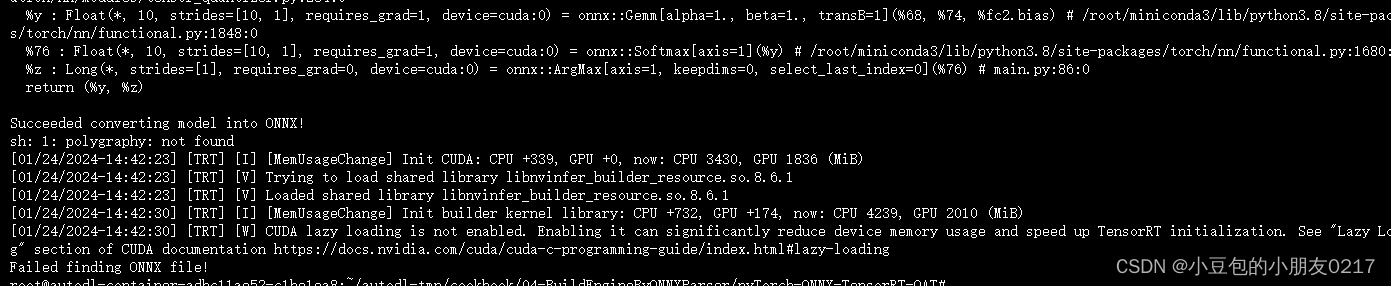

print("Succeeded converting model into ONNX!")

# Parse network, rebuild network and do inference in TensorRT ------------------

logger = trt.Logger(trt.Logger.VERBOSE)

builder = trt.Builder(logger)

network = builder.create_network(1 << int(trt.NetworkDefinitionCreationFlag.EXPLICIT_BATCH))

profile = builder.create_optimization_profile()

config = builder.create_builder_config()

if bUseFP16Mode:

config.set_flag(trt.BuilderFlag.FP16)

if bUseINT8Mode:

config.set_flag(trt.BuilderFlag.INT8)

config.int8_calibrator = calibrator.MyCalibrator(calibrationDataPath, nCalibration, (1, 1, nHeight, nWidth), cacheFile)

parser = trt.OnnxParser(network, logger)

if not os.path.exists(onnxFile):

print("Failed finding ONNX file!")

exit()

print("Succeeded finding ONNX file!")

with open(onnxFile, "rb") as model:

if not parser.parse(model.read()):

print("Failed parsing .onnx file!")

for error in range(parser.num_errors):

print(parser.get_error(error))

exit()

print("Succeeded parsing .onnx file!")

inputTensor = network.get_input(0)

profile.set_shape(inputTensor.name, [1, 1, nHeight, nWidth], [4, 1, nHeight, nWidth], [8, 1, nHeight, nWidth])

config.add_optimization_profile(profile)

network.unmark_output(network.get_output(0)) # remove output tensor "y"

engineString = builder.build_serialized_network(network, config)

if engineString == None:

print("Failed building engine!")

exit()

print("Succeeded building engine!")

with open(trtFile, "wb") as f:

f.write(engineString)

engine = trt.Runtime(logger).deserialize_cuda_engine(engineString)

nIO = engine.num_io_tensors

lTensorName = [engine.get_tensor_name(i) for i in range(nIO)]

nInput = [engine.get_tensor_mode(lTensorName[i]) for i in range(nIO)].count(trt.TensorIOMode.INPUT)

context = engine.create_execution_context()

context.set_input_shape(lTensorName[0], [1, 1, nHeight, nWidth])

for i in range(nIO):

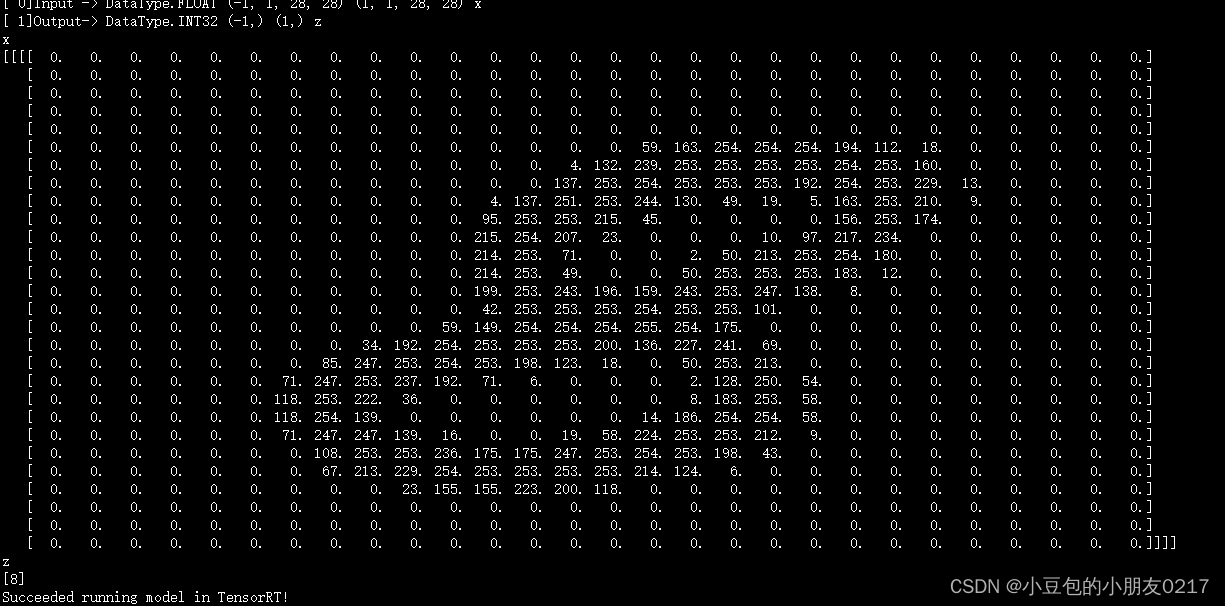

print("[%2d]%s->" % (i, "Input " if i < nInput else "Output"), engine.get_tensor_dtype(lTensorName[i]), engine.get_tensor_shape(lTensorName[i]), context.get_tensor_shape(lTensorName[i]), lTensorName[i])

bufferH = []

data = cv2.imread(inferenceImage, cv2.IMREAD_GRAYSCALE).astype(np.float32).reshape(1, 1, nHeight, nWidth)

bufferH.append(np.ascontiguousarray(data))

for i in range(nInput, nIO):

bufferH.append(np.empty(context.get_tensor_shape(lTensorName[i]), dtype=trt.nptype(engine.get_tensor_dtype(lTensorName[i]))))

bufferD = []

for i in range(nIO):

bufferD.append(cudart.cudaMalloc(bufferH[i].nbytes)[1])

for i in range(nInput):

cudart.cudaMemcpy(bufferD[i], bufferH[i].ctypes.data, bufferH[i].nbytes, cudart.cudaMemcpyKind.cudaMemcpyHostToDevice)

for i in range(nIO):

context.set_tensor_address(lTensorName[i], int(bufferD[i]))

context.execute_async_v3(0)

for i in range(nInput, nIO):

cudart.cudaMemcpy(bufferH[i].ctypes.data, bufferD[i], bufferH[i].nbytes, cudart.cudaMemcpyKind.cudaMemcpyDeviceToHost)

for i in range(nIO):

print(lTensorName[i])

print(bufferH[i])

for b in bufferD:

cudart.cudaFree(b)

print("Succeeded running model in TensorRT!")

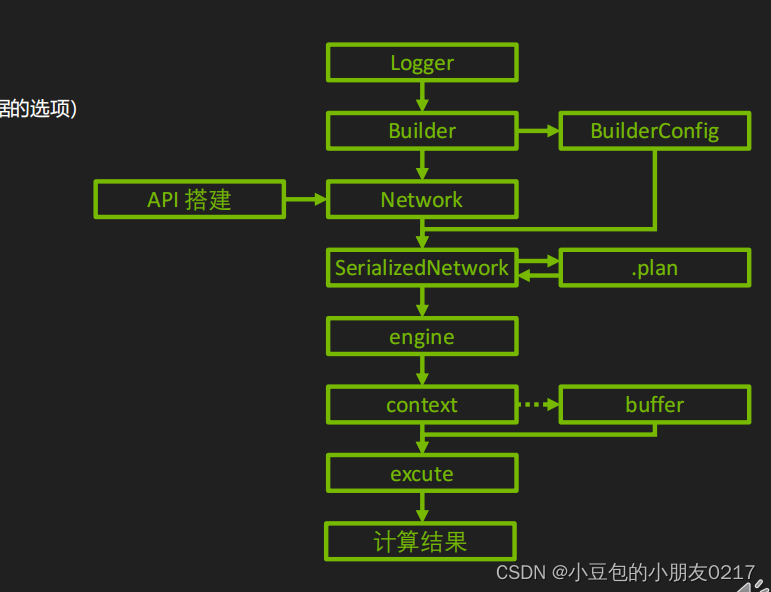

Running the script produces two files: model.onnx and model.plan.
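The `64 * 7 * 7` input size of `fc1` in the listing follows from the layer settings: each 5x5 convolution with padding 2 keeps the 28x28 spatial size, and each 2x2 max pool halves it. The sketch below is a minimal check of that arithmetic in plain Python. The helper names `conv2d_out`, `pool2d_out`, and `feature_map_size` are made up for illustration and are not part of the sample.

```python
def conv2d_out(size, kernel, padding=0, stride=1):
    """Output length of one spatial dimension after a convolution."""
    return (size + 2 * padding - kernel) // stride + 1


def pool2d_out(size, kernel, stride=None):
    """Output length after max pooling (stride defaults to the kernel size)."""
    stride = kernel if stride is None else stride
    return (size - kernel) // stride + 1


def feature_map_size(size=28):
    """Spatial size after conv1 -> pool -> conv2 -> pool, as in Net.forward."""
    size = conv2d_out(size, kernel=5, padding=2)  # 28 -> 28
    size = pool2d_out(size, kernel=2)             # 28 -> 14
    size = conv2d_out(size, kernel=5, padding=2)  # 14 -> 14
    size = pool2d_out(size, kernel=2)             # 14 -> 7
    return size


side = feature_map_size(28)
flattened = 64 * side * side  # 64 output channels from conv2
print(side, flattened)  # 7 3136
```

If the input resolution or padding changes, the same helpers give the new value to use in `fc1` and in the `reshape` call.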

Building the TRT internal representation (the serialized network)

engineString = builder.build_serialized_network(network, config)
if engineString == None:
    print("Failed building engine!")
    exit()
print("Succeeded building engine!")
with open(trtFile, "wb") as f:
    f.write(engineString)

Creating the Engine

engine = trt.Runtime(logger).deserialize_cuda_engine(engineString)
nIO = engine.num_io_tensors
lTensorName = [engine.get_tensor_name(i) for i in range(nIO)]
nInput = [engine.get_tensor_mode(lTensorName[i]) for i in range(nIO)].count(trt.TensorIOMode.INPUT)

Creating the Context

context = engine.create_execution_context()
context.set_input_shape(lTensorName[0], [1, 1, nHeight, nWidth])
for i in range(nIO):
    print("[%2d]%s->" % (i, "Input " if i < nInput else "Output"), engine.get_tensor_dtype(lTensorName[i]), engine.get_tensor_shape(lTensorName[i]), context.get_tensor_shape(lTensorName[i]), lTensorName[i])

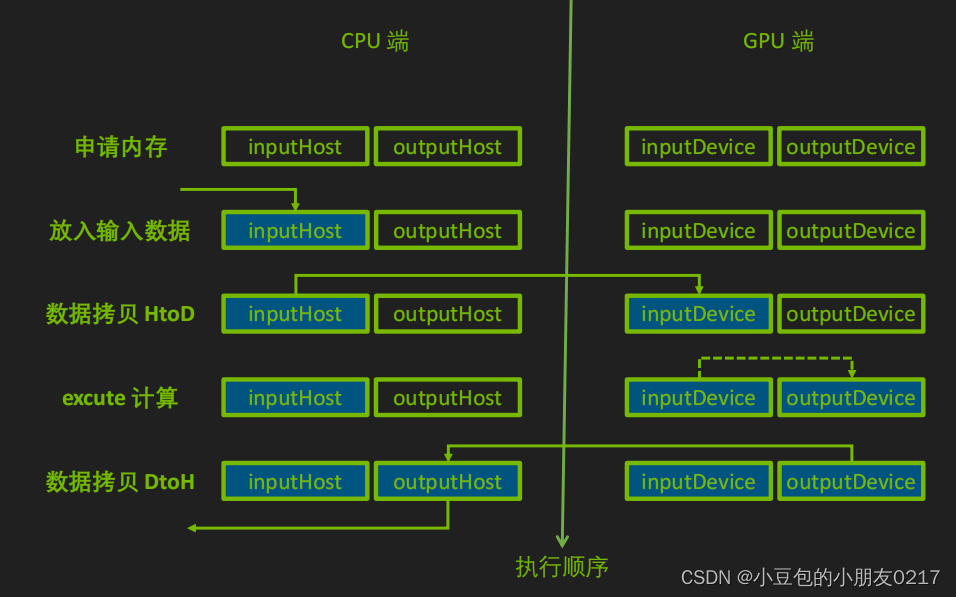
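The shape given to `context.set_input_shape` has to fall inside the min/max range registered with `profile.set_shape` when the engine was built (here batch sizes 1 to 8). The following is a plain-Python illustration of that range check, not a TensorRT call. The function name `shape_in_profile` is hypothetical.

```python
def shape_in_profile(shape, min_shape, max_shape):
    """Return True if every dimension lies within [min, max] of the profile."""
    if not (len(shape) == len(min_shape) == len(max_shape)):
        return False
    return all(lo <= d <= hi for d, lo, hi in zip(shape, min_shape, max_shape))


# Ranges taken from the profile.set_shape(...) call in the listing
MIN_SHAPE = (1, 1, 28, 28)
MAX_SHAPE = (8, 1, 28, 28)

ok = shape_in_profile((1, 1, 28, 28), MIN_SHAPE, MAX_SHAPE)
too_big = shape_in_profile((16, 1, 28, 28), MIN_SHAPE, MAX_SHAPE)
print(ok, too_big)  # True False
```

A check like this before calling into the runtime can give a clearer error message than a failed shape assignment.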

Buffer

Allocating host memory and device memory

inputHost = np.ascontiguousarray(inputData)  # do not forget ascontiguousarray!
outputHost = np.empty(context.get_tensor_shape(lTensorName[1]), trt.nptype(engine.get_tensor_dtype(lTensorName[1])))
inputDevice = cudart.cudaMalloc(inputHost.nbytes)[1]
outputDevice = cudart.cudaMalloc(outputHost.nbytes)[1]
context.set_tensor_address(lTensorName[0], inputDevice)
context.set_tensor_address(lTensorName[1], outputDevice)

Copying between host memory and device memory

cudart.cudaMemcpy(inputDevice, inputHost.ctypes.data, inputHost.nbytes, cudart.cudaMemcpyKind.cudaMemcpyHostToDevice)
cudart.cudaMemcpy(outputHost.ctypes.data, outputDevice, outputHost.nbytes, cudart.cudaMemcpyKind.cudaMemcpyDeviceToHost)

Freeing device memory after inference

cudart.cudaFree(inputDevice)
cudart.cudaFree(outputDevice)
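The reminder about `ascontiguousarray` matters because `cudaMemcpy` copies raw bytes starting at `ctypes.data`. If the NumPy array is a non-contiguous view (for example a transpose), those raw bytes are not in the array's logical order. A small NumPy-only sketch of the difference, using made-up array contents:

```python
import numpy as np

# A transposed view shares memory with the original and is not C-contiguous
original = np.arange(6, dtype=np.float32).reshape(2, 3)
view = original.T  # logical shape (3, 2), same underlying buffer

print(view.flags["C_CONTIGUOUS"])                 # False
print(view.ctypes.data == original.ctypes.data)   # True: same start address

# np.ascontiguousarray copies the data into a new row-major buffer
host = np.ascontiguousarray(view)
print(host.flags["C_CONTIGUOUS"])                 # True
print(np.shares_memory(host, original))           # False: it is a fresh copy

# nbytes is the size that would be handed to cudaMalloc / cudaMemcpy
print(host.nbytes)  # 24 (6 float32 values)
```

For an array that is already contiguous, `np.ascontiguousarray` returns it without copying, so calling it unconditionally costs nothing in the common case.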

bufferH = []
data = cv2.imread(inferenceImage, cv2.IMREAD_GRAYSCALE).astype(np.float32).reshape(1, 1, nHeight, nWidth)
bufferH.append(np.ascontiguousarray(data))
for i in range(nInput, nIO):
    bufferH.append(np.empty(context.get_tensor_shape(lTensorName[i]), dtype=trt.nptype(engine.get_tensor_dtype(lTensorName[i]))))
bufferD = []
for i in range(nIO):
    bufferD.append(cudart.cudaMalloc(bufferH[i].nbytes)[1])

for i in range(nInput):
    cudart.cudaMemcpy(bufferD[i], bufferH[i].ctypes.data, bufferH[i].nbytes, cudart.cudaMemcpyKind.cudaMemcpyHostToDevice)

for i in range(nIO):
    context.set_tensor_address(lTensorName[i], int(bufferD[i]))

context.execute_async_v3(0)

for i in range(nInput, nIO):
    cudart.cudaMemcpy(bufferH[i].ctypes.data, bufferD[i], bufferH[i].nbytes, cudart.cudaMemcpyKind.cudaMemcpyDeviceToHost)

for i in range(nIO):
    print(lTensorName[i])
    print(bufferH[i])

for b in bufferD:
    cudart.cudaFree(b)

2. 04-BuildEngineByONNXParser: pyTorch-ONNX-TensorRT-QAT

pip install --no-deps pytorch_quantization

pip install -i https://pypi.tuna.tsinghua.edu.cn/simple pyyaml

python main.py

Model quantization: the model is quantized with the pytorch_quantization library, using either the max calibrator or the histogram calibrator.

# Calibrate model in pyTorch ---------------------------------------------------
# Imports used by this excerpt; model, trainLoader, nCalibrationBatch,
# percentileList and calibrator are defined earlier in main.py.
from pytorch_quantization import calib
from pytorch_quantization import nn as qnn
from pytorch_quantization import quant_modules

# Patches torch.nn layers with quantized versions; call before the model is constructed
quant_modules.initialize()

with t.no_grad():
    # turn on calibration tool
    for name, module in model.named_modules():
        if isinstance(module, qnn.TensorQuantizer):
            if module._calibrator is not None:
                module.disable_quant()
                module.enable_calib()
            else:
                module.disable()

    for i, (xTrain, yTrain) in enumerate(trainLoader):
        if i >= nCalibrationBatch:
            break
        model(Variable(xTrain).cuda())

    # turn off calibration tool
    for name, module in model.named_modules():
        if isinstance(module, qnn.TensorQuantizer):
            if module._calibrator is not None:
                module.enable_quant()
                module.disable_calib()
            else:
                module.enable()

    def computeArgMax(model, **kwargs):
        for _, module in model.named_modules():
            if isinstance(module, qnn.TensorQuantizer) and module._calibrator is not None:
                if isinstance(module._calibrator, calib.MaxCalibrator):
                    module.load_calib_amax()
                else:
                    module.load_calib_amax(**kwargs)

    if calibrator == "max":
        computeArgMax(model, method="max")
        #modelName = "./model-max-%d.pth" % (nCalibrationBatch * trainLoader.batch_size)
    else:
        for percentile in percentileList:
            # pass the loop value through; otherwise every iteration uses the default percentile
            computeArgMax(model, method="percentile", percentile=percentile)
            #modelName = "./model-percentile-%f-%d.pth" % (percentile, nCalibrationBatch * trainLoader.batch_size)

        for method in ["mse", "entropy"]:
            computeArgMax(model, method=method)
            #modelName = "./model-%s-%f.pth" % (method, percentile)

    #t.save(model.state_dict(), modelName)

print("Succeeded calibrating model in pyTorch!")

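This excerpt shows how the model is calibrated in PyTorch. Calibration is one step of quantization: it determines the quantization parameters, here the dynamic range (amax) of each tensor, derived either from the maximum value or from a histogram statistic such as a percentile.

First, quant_modules.initialize() sets up the quantization modules by replacing supported torch.nn layers with their quantized counterparts. The code then walks every module of the model, finds the TensorQuantizer modules, and switches on calibration for each one that has a calibrator. Quantizers without a calibrator are disabled entirely.

Next comes the calibration itself. The code runs nCalibrationBatch batches from the training set through a forward pass so that the calibrators can collect statistics. During this phase the quantization operation is disabled and only calibration is enabled.

Once calibration is done, the code switches calibration off and quantization back on, then computes and loads the calibration parameters according to the chosen method. With the max calibrator, load_calib_amax() is called without arguments. With the histogram calibrator, the method name (percentile, mse, or entropy) is forwarded as a keyword argument, and the percentile method additionally takes a percentile value.

Finally, the excerpt contains the lines that would save the calibrated model parameters, with a file name that depends on the calibration method. They are commented out here, so nothing is written unless they are re-enabled.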
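To make the difference between max and percentile calibration concrete, here is a simplified pure-Python sketch. It is an illustration only: pytorch_quantization's histogram calibrator works on histogram bins rather than on sorted raw values, and the function names `amax_max`, `amax_percentile`, and `fake_quantize` as well as the sample activations are invented for this example.

```python
import math


def amax_max(values):
    """Max calibration: the largest absolute value seen."""
    return max(abs(v) for v in values)


def amax_percentile(values, percentile):
    """Percentile calibration (nearest rank): ignore the largest outliers."""
    ordered = sorted(abs(v) for v in values)
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    return ordered[rank - 1]


def fake_quantize(x, amax, num_bits=8):
    """Symmetric quantize-dequantize of one value using the chosen amax."""
    bound = 2 ** (num_bits - 1) - 1  # 127 for INT8
    scale = bound / amax
    q = max(-bound, min(bound, round(x * scale)))  # round, then clip
    return q / scale


# Made-up activations with a single large outlier
activations = [0.1, -0.2, 0.3, 0.25, -0.15, 0.05, 0.2, -0.1, 0.12, 8.0]
a_max = amax_max(activations)
a_pct = amax_percentile(activations, 90)
print(a_max, a_pct)  # 8.0 0.3

# The smaller range represents typical values more precisely,
# at the cost of clipping the outlier to a_pct
err_max = abs(fake_quantize(0.1, a_max) - 0.1)
err_pct = abs(fake_quantize(0.1, a_pct) - 0.1)
print(err_pct < err_max)  # True
```

This is the trade-off behind trying several percentiles, mse, and entropy in the sample: each method picks a different amax, and the best choice depends on how heavy the tails of the activation distribution are.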

For more on model quantization, see: https://blog.csdn.net/m0_70420861/article/details/135559743

Summary

04-BuildEngineByONNXParser
