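Reproducing XTuner

1. Introduction to XTuner

XTuner is a toolbox for fine-tuning large language models, developed by Shanghai AI Laboratory. For the list of supported open-source LLMs (as of 2023.11.01), see the project's Bilibili official account.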

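2. Experiment procedure

The environment is set up on a compute platform (InternStudio, which was provided free of charge during the event).

2.1 Installation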

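# On the InternStudio platform, clone an existing local environment that already has PyTorch 2.0.1:
/root/share/install_conda_env_internlm_base.sh xtuner0.1.9

# On any other platform:
conda create --name xtuner0.1.9 python=3.10 -y

# Activate the environment
conda activate xtuner0.1.9

# Go to the home directory (~ means the current user's home path)
cd ~

# Create a version folder and enter it, to follow along with this tutorial
mkdir xtuner019 && cd xtuner019

# Fetch the source code for version 0.1.9
git clone -b v0.1.9 https://github.com/InternLM/xtuner

# Users who cannot reach GitHub can clone from Gitee instead:
# git clone -b v0.1.9 https://gitee.com/Internlm/xtuner

# Enter the source directory
cd xtuner

# Install XTuner from source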

pip install -e '.[all]'

Once installation finishes, create a working directory for fine-tuning on the oasst1 dataset and enter it:

mkdir ~/ft-oasst1 && cd ~/ft-oasst1

2.3 Fine-tuning

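2.3.1 Preparing the configuration file

XTuner provides several ready-to-use configuration files, which can be listed with the following command:

xtuner list-cfg

If the shell reports bash: xtuner: command not found, try running the following in the terminal:

export PATH=$PATH:'/root/.local/bin'

The available configuration files are then displayed.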
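When the xtuner command is not found, it can help to check where (or whether) the executable was installed before editing PATH. The following is a minimal sketch using only the Python standard library; find_cli is a hypothetical helper name, and /root/.local/bin is simply the directory mentioned above, not a guaranteed install location.

```python
import os
import shutil


def find_cli(name, extra_dirs=()):
    """Return the full path of an executable, or None if it cannot be found.

    The regular PATH is searched first, then each directory in extra_dirs.
    """
    found = shutil.which(name)
    if found:
        return found
    for directory in extra_dirs:
        # shutil.which accepts an explicit search path via its `path` argument.
        found = shutil.which(name, path=directory)
        if found:
            return found
    return None


if __name__ == "__main__":
    location = find_cli("xtuner", extra_dirs=["/root/.local/bin"])
    if location is None:
        print("xtuner not found; check that the installation succeeded")
    else:
        print(f"xtuner found at {location}")
        print(f"directory to add to PATH if needed: {os.path.dirname(location)}")
```

If the executable turns up only in one of the extra directories, that directory is the one to append to PATH.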

Copy a configuration file to the current directory:

# xtuner copy-cfg ${CONFIG_NAME} ${SAVE_PATH}

In this example (note the trailing period, which means "copy to the current path"):

xtuner copy-cfg internlm_chat_7b_qlora_oasst1_e3 .

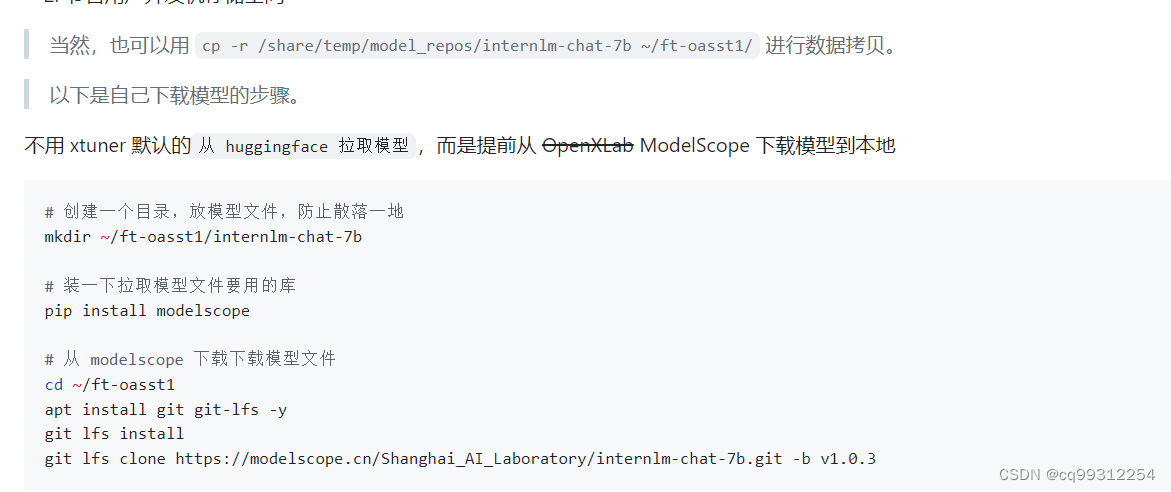
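Later steps in this walkthrough refer to the copied file as internlm_chat_7b_qlora_oasst1_e3_copy.py, which suggests that the copy is named after the config with a _copy.py suffix. A small sketch under that assumption (copied_config_path is a hypothetical helper, not part of XTuner):

```python
from pathlib import Path


def copied_config_path(config_name, save_dir="."):
    """Predict where the copied config ends up.

    Assumption drawn from this walkthrough: the copy is named
    "<config_name>_copy.py" inside save_dir.
    """
    return Path(save_dir) / f"{config_name}_copy.py"


if __name__ == "__main__":
    path = copied_config_path("internlm_chat_7b_qlora_oasst1_e3")
    print(path)           # internlm_chat_7b_qlora_oasst1_e3_copy.py
    print(path.exists())  # True only after copy-cfg has been run here
```

Checking path.exists() after running copy-cfg is a quick way to confirm the copy landed where the following steps expect it.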

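2.3.2 Downloading the model

Downloading the model is slow, so on the teaching platform you can use the copy that is already there by linking it into the working directory: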

ln -s /share/temp/model_repos/internlm-chat-7b ~/ft-oasst1/
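Note that ln -s creates a symbolic link rather than a second copy, so no extra disk space is used and the working directory simply points at the shared model files. The sketch below shows the same idea with the Python standard library on throwaway temporary directories; link_into is a hypothetical helper and the directory names are placeholders.

```python
import os
import tempfile
from pathlib import Path


def link_into(source, target_dir):
    """Create target_dir/<source name> as a symlink to source and return it."""
    source = Path(source)
    link = Path(target_dir) / source.name
    # Leave an existing entry (including a dangling link) untouched.
    if not (link.is_symlink() or link.exists()):
        os.symlink(source, link, target_is_directory=source.is_dir())
    return link


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        model_dir = Path(tmp) / "model_repos" / "internlm-chat-7b"
        model_dir.mkdir(parents=True)
        work_dir = Path(tmp) / "ft-oasst1"
        work_dir.mkdir()
        link = link_into(model_dir, work_dir)
        # The link is a pointer to the original directory, not a copy of it.
        print(link.is_symlink(), link.resolve() == model_dir.resolve())
```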

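2.3.3 Downloading the dataset

Because Hugging Face is hard to reach over the network, the platform already holds a copy of the dataset; just copy it to the right location: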

cd ~/ft-oasst1

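# Note the space and the period after ...-guanaco

cp -r /root/share/temp/datasets/openassistant-guanaco .

2.3.4 Modifying the configuration file

Change the model and the dataset in the configuration file to local paths:

vim internlm_chat_7b_qlora_oasst1_e3_copy.py

A minus sign marks a line to delete, and a plus sign marks a line to add.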

# Change the model to the local path
- pretrained_model_name_or_path = 'internlm/internlm-chat-7b'
+ pretrained_model_name_or_path = './internlm-chat-7b'

# Change the training dataset to the local path
- data_path = 'timdettmers/openassistant-guanaco'
+ data_path = './openassistant-guanaco'
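The same two substitutions can also be applied without opening an editor. The following is a rough sketch, assuming the copied config contains the two original lines exactly as written in the diff (same quoting and spacing); localize_config and patch_config_file are hypothetical helper names.

```python
from pathlib import Path

# (old text, new text) pairs taken from the diff in this section.
REPLACEMENTS = [
    ("pretrained_model_name_or_path = 'internlm/internlm-chat-7b'",
     "pretrained_model_name_or_path = './internlm-chat-7b'"),
    ("data_path = 'timdettmers/openassistant-guanaco'",
     "data_path = './openassistant-guanaco'"),
]


def localize_config(text):
    """Return config text with the hub names swapped for local paths.

    Raises ValueError if an expected line is missing, so an unchanged
    config is not mistaken for a patched one.
    """
    for old, new in REPLACEMENTS:
        if old not in text:
            raise ValueError(f"expected line not found: {old}")
        text = text.replace(old, new)
    return text


def patch_config_file(path):
    """Rewrite the config file at `path` in place."""
    config = Path(path)
    patched = localize_config(config.read_text(encoding="utf-8"))
    config.write_text(patched, encoding="utf-8")


if __name__ == "__main__":
    target = Path("internlm_chat_7b_qlora_oasst1_e3_copy.py")
    if target.exists():
        patch_config_file(target)
```

Failing loudly on a missing line is a deliberate choice here: if the config template differs from the one shown, it is better to stop than to train against the wrong paths.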

2.3.5 Starting fine-tuning

Training:

xtuner train ${CONFIG_NAME_OR_PATH}

DeepSpeed can also be enabled to speed up training:

xtuner train ${CONFIG_NAME_OR_PATH} --deepspeed deepspeed_zero2

For example, we can fine-tune InternLM-7B on the oasst1 dataset with the QLoRA algorithm:

# Single GPU
## Train with the config file modified above
xtuner train ./internlm_chat_7b_qlora_oasst1_e3_copy.py

# Multiple GPUs
NPROC_PER_NODE=${GPU_NUM} xtuner train ./internlm_chat_7b_qlora_oasst1_e3_copy.py

# To enable DeepSpeed acceleration, just append --deepspeed deepspeed_zero2
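The single-GPU, multi-GPU, and DeepSpeed variants differ only in one environment variable and one flag. The sketch below assembles the command from those pieces so the combinations are explicit; build_train_command is a hypothetical helper, and it only prints the command rather than launching training.

```python
import os
import shlex


def build_train_command(config, gpu_num=1, deepspeed=None):
    """Return (env, argv) for an `xtuner train` run.

    gpu_num > 1 sets NPROC_PER_NODE, mirroring the multi-GPU form;
    deepspeed, if given, is passed through --deepspeed.
    """
    argv = ["xtuner", "train", config]
    if deepspeed:
        argv += ["--deepspeed", deepspeed]
    env = dict(os.environ)
    if gpu_num > 1:
        env["NPROC_PER_NODE"] = str(gpu_num)
    return env, argv


if __name__ == "__main__":
    env, argv = build_train_command(
        "./internlm_chat_7b_qlora_oasst1_e3_copy.py",
        gpu_num=1,
        deepspeed="deepspeed_zero2",
    )
    # Print instead of running; subprocess.run(argv, env=env, check=True)
    # would launch the actual training job.
    print(shlex.join(argv))
```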

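By default, the PTH model files produced by fine-tuning, together with other miscellaneous outputs, are written to ./work_dirs under the current directory once training finishes.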
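To pick a checkpoint for the conversion step, it can be handy to locate the newest one in the run directory. This sketch assumes checkpoints are named epoch_<N>.pth, which matches the epoch_1.pth path used in this walkthrough but is otherwise an assumption; latest_checkpoint is a hypothetical helper.

```python
import re
from pathlib import Path

EPOCH_PATTERN = re.compile(r"epoch_(\d+)\.pth$")


def latest_checkpoint(run_dir):
    """Return the epoch_<N>.pth entry with the largest N, or None."""
    best = None
    best_epoch = -1
    for entry in Path(run_dir).iterdir():
        match = EPOCH_PATTERN.search(entry.name)
        # Compare epochs numerically so that epoch_10 ranks above epoch_2.
        if match and int(match.group(1)) > best_epoch:
            best_epoch = int(match.group(1))
            best = entry
    return best


if __name__ == "__main__":
    run_dir = Path("./work_dirs/internlm_chat_7b_qlora_oasst1_e3_copy")
    if run_dir.is_dir():
        print(latest_checkpoint(run_dir))
```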

2.3.6 Converting the resulting PTH model to a HuggingFace model, i.e. generating the Adapter folder

xtuner convert pth_to_hf ${CONFIG_NAME_OR_PATH} ${PTH_file_dir} ${SAVE_PATH}

In this example:

mkdir hf

export MKL_SERVICE_FORCE_INTEL=1

xtuner convert pth_to_hf ./internlm_chat_7b_qlora_oasst1_e3_copy.py ./work_dirs/internlm_chat_7b_qlora_oasst1_e3_copy/epoch_1.pth ./hf

At this point, the hf folder is what people usually mean by the "LoRA model files".
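For intuition about what such LoRA files hold and what folding them into a base model means, here is a toy sketch of the standard LoRA formulation: the adapter stores two small matrices A and B, and merging adds their scaled product to the frozen weight, W' = W + (alpha / r) * B A. This is an illustration in pure Python on 2 x 2 matrices, not XTuner's implementation; the function names and the numbers are made up for the example.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def merge_lora(weight, lora_a, lora_b, alpha, rank):
    """Return weight + (alpha / rank) * lora_b @ lora_a.

    weight: d_out x d_in, lora_b: d_out x rank, lora_a: rank x d_in.
    """
    scale = alpha / rank
    delta = matmul(lora_b, lora_a)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(weight, delta)]


if __name__ == "__main__":
    weight = [[1.0, 0.0], [0.0, 1.0]]  # frozen base weight (2 x 2)
    lora_a = [[1.0, 2.0]]              # rank-1 factor A (1 x 2)
    lora_b = [[0.5], [0.0]]            # rank-1 factor B (2 x 1)
    print(merge_lora(weight, lora_a, lora_b, alpha=2, rank=1))
    # [[2.0, 2.0], [0.0, 1.0]]
```

Because A and B are much smaller than W, the adapter folder stays small compared with the full model, while the merged result has the same shape as the original weights.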

2.4 Deployment and testing

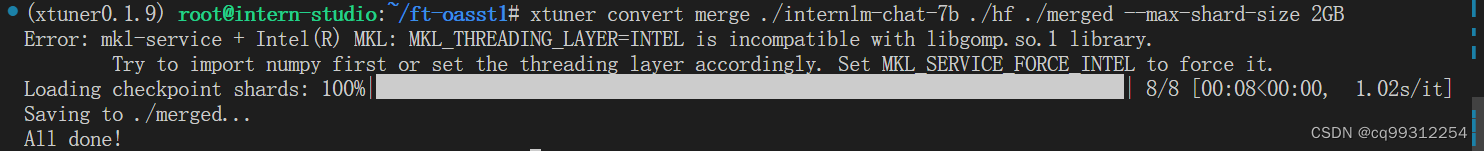

2.4.1 Merging the HuggingFace adapter into the large language model:

Basic syntax

xtuner convert merge ./internlm-chat-7b ./hf ./merged --max-shard-size 2GB

# xtuner convert merge \
#     ${NAME_OR_PATH_TO_LLM} \
#     ${NAME_OR_PATH_TO_ADAPTER} \
#     ${SAVE_PATH} \
#     --max-shard-size 2GB

2.4.2 Chatting with the merged model:

# Load the merged model and chat with it (Float 16)

xtuner chat ./merged --prompt-template internlm_chat

# Load with 4-bit quantization

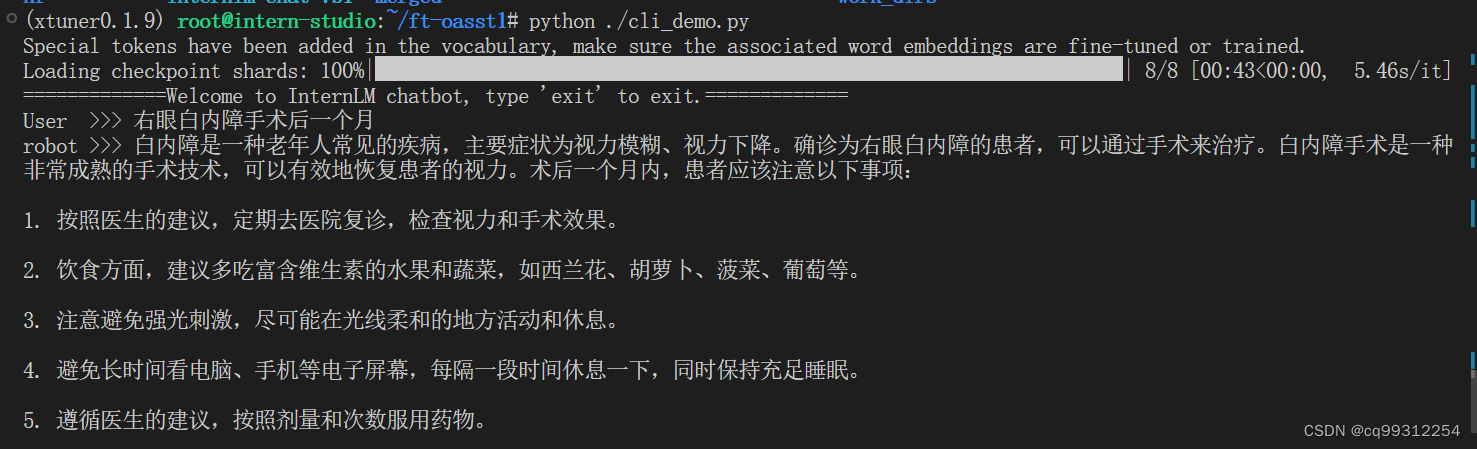

# xtuner chat ./merged --bits 4 --prompt-template internlm_chat

2.4.3 Demo

Create a cli_demo.py file in the ~/ft-oasst1/ directory with the following code:

import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

# Path of the model directory to load.
model_name_or_path = "/root/model/Shanghai_AI_Laboratory/internlm-chat-7b"

# trust_remote_code=True lets transformers run the modeling code shipped with the model.
tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True)
model = AutoModelForCausalLM.from_pretrained(model_name_or_path, trust_remote_code=True, torch_dtype=torch.bfloat16, device_map='auto')
model = model.eval()

system_prompt = """You are an AI assistant whose name is InternLM (书生·浦语).
- InternLM (书生·浦语) is a conversational language model that is developed by Shanghai AI Laboratory (上海人工智能实验室). It is designed to be helpful, honest, and harmless.
- InternLM (书生·浦语) can understand and communicate fluently in the language chosen by the user such as English and 中文.
"""

# The history starts with the system prompt paired with an empty reply.
messages = [(system_prompt, '')]

print("=============Welcome to InternLM chatbot, type 'exit' to exit.=============")

while True:
    input_text = input("User >>> ")
    # Trim surrounding whitespace so that "exit " still ends the session.
    input_text = input_text.strip()
    if input_text == "exit":
        break
    response, history = model.chat(tokenizer, input_text, history=messages)
    # Keep each (user input, reply) pair so later turns see the conversation so far.
    messages.append((input_text, response))
    print(f"robot >>> {response}")

Modify the code

Change model_name_or_path = "/root/model/Shanghai_AI_Laboratory/internlm-chat-7b"

to model_name_or_path = "merged", so that the demo loads the merged model directory produced in 2.4.1.
