Flink定制化功能开发,demo代码

前言:

? ? ? ?这是一个Flink自定义开发的基础教学。本文将通过flink的DataStream模块API,以kafka为数据源,构建一个基础测试环境;包含一个kafka生产者线程工具,一个自定义FilterFunction算子,一个自定义MapFunction算子,用一个flink任务的代码逻辑,将实时读kafka并多层处理串起来;让读者体会通过Flink构建自定义函数的技巧。

一、Flink的开发模块分析

Flink提供四个基础模块:核心SDK开发API分别是处理实时计算的DataStream和处理离线计算的DataSet;基于这两个SDK,在其上包装了TableAPI开发模块的SDK;在Table API之上,定义了高度抽象可用SQL开发任务的FlinkSQL。在核心开发API之下,还有基础API的接口,可用于对时间,状态,算子等最细粒度的特性对象做操作,如包装自定义算子的ProcessWindowFunction和ProcessFunction等基础函数以及内置的对象状态StateTtlConfig;

FLINK开发API关系结构如下:

二、定制化开发Demo演示

2.1 场景介绍

Flink实时任务的的通用技术架构是消息队列中间件+Flink任务:

将数据采集到Kafka或pulser这类队列中间件的Topic,然后使用Flink内置的kafkaSource,监控Topic的数据情况,做实时处理。

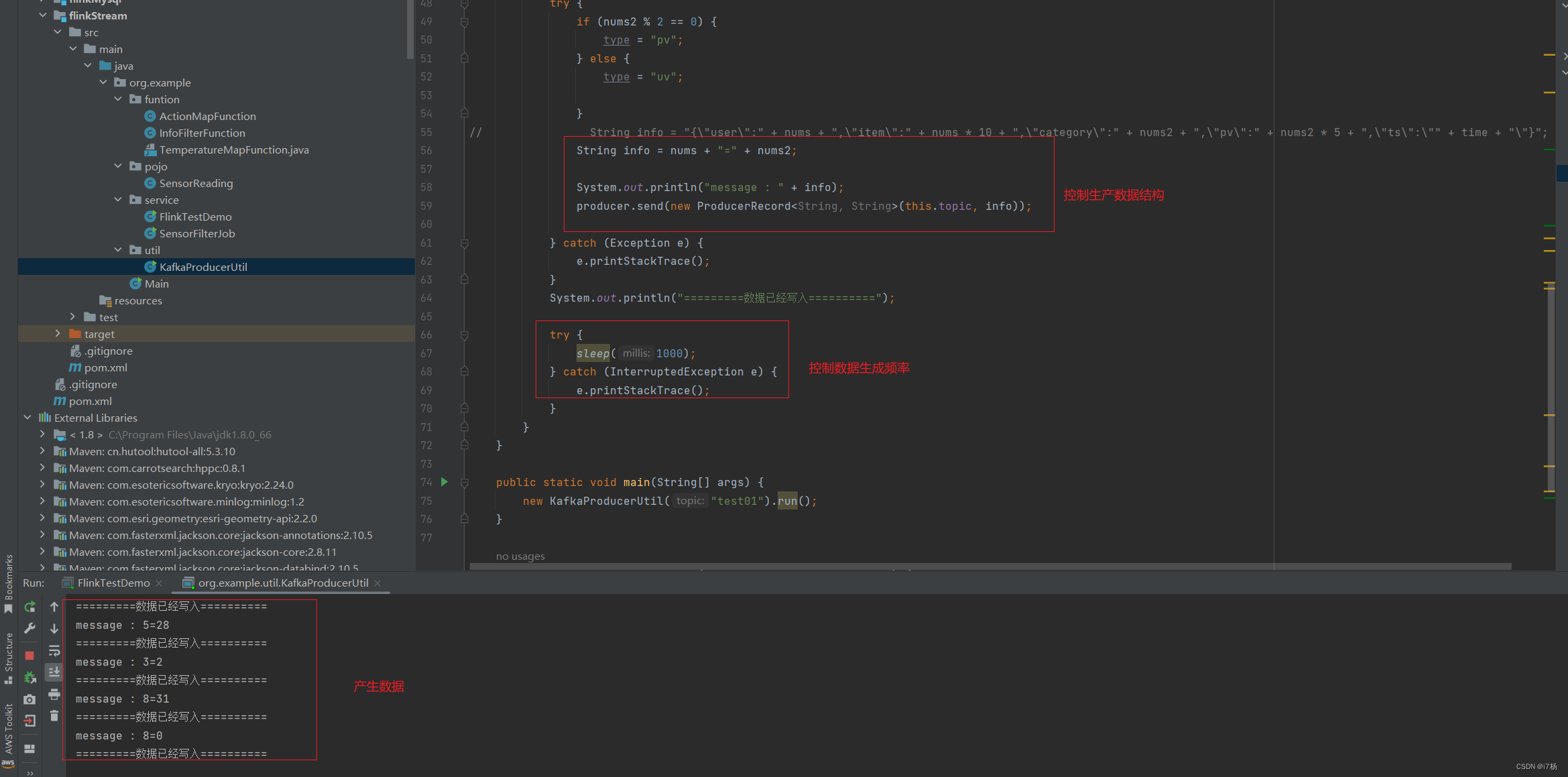

- 这里提供一个kafka的生产者线程,可以自定义构建需要的数据和上传时间,用于控制写入kafka的数据源;

- 重写两个DataStream的基础算子:FilterFunction和MapFunction,用于让读者体会,如何对FLINK函数的重新包装,后续更基础的函数原理一样;我这里用String数据对象做处理,减少对象转换的SDK引入,通常要基于业务做数据polo的加工,这个自己处理,将对象换成业务对象;

- 然后使用Flink将整个业务串起来,从kafka读数据,经过两层处理,最终输出需要的结果;

2.2 本地demo演示

2.2.1 pom文件

这里以flink1.14.6+scala1.12版本为例:

2.2.2 kafka生产者线程方法

package org.example.util;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.Producer;

import org.apache.kafka.clients.producer.ProducerRecord;

import java.util.*;

/**

* 向kafka生产数据

*

* @author i7杨

* @date 2024/01/12 13:02:29

*/

public class KafkaProducerUtil extends Thread {

private String topic;

public KafkaProducerUtil(String topic) {

super();

this.topic = topic;

}

private static Producer<String, String> createProducer() {

// 通过Properties类设置Producer的属性

Properties properties = new Properties();

// 测试环境 kafka 配置

properties.put("bootstrap.servers", "ip2:9092,ip:9092,ip3:9092");

properties.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");

properties.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

return new KafkaProducer<String, String>(properties);

}

@Override

public void run() {

Producer<String, String> producer = createProducer();

Random random = new Random();

Random random2 = new Random();

while (true) {

int nums = random.nextInt(10);

int nums2 = random.nextInt(50);

// double nums2 = random2.nextDouble();

String time = new Date().getTime() / 1000 + 5 + "";

String type = "pv";

try {

if (nums2 % 2 == 0) {

type = "pv";

} else {

type = "uv";

}

// String info = "{\"user\":" + nums + ",\"item\":" + nums * 10 + ",\"category\":" + nums2 + ",\"pv\":" + nums2 * 5 + ",\"ts\":\"" + time + "\"}";

String info = nums + "=" + nums2;

System.out.println("message : " + info);

producer.send(new ProducerRecord<String, String>(this.topic, info));

} catch (Exception e) {

e.printStackTrace();

}

System.out.println("=========数据已经写入==========");

try {

sleep(1000);

} catch (InterruptedException e) {

e.printStackTrace();

}

}

}

public static void main(String[] args) {

new KafkaProducerUtil("test01").run();

}

public static void sendMessage(String topic, String message) {

Producer<String, String> producer = createProducer();

producer.send(new ProducerRecord<String, String>(topic, message));

}

}

2.2.3 自定义基础函数

这里自定义了filter和map两个算子函数,测试逻辑按照数据结构变化:

自定义FilterFunction函数算子:阈值小于40的过滤掉

package org.example.funtion;

import org.apache.flink.api.common.functions.FilterFunction;

/**

* FilterFunction重构

*

* @author i7杨

* @date 2024/01/12 13:02:29

*/

public class InfoFilterFunction implements FilterFunction<String> {

private double threshold;

public InfoFilterFunction(double threshold) {

this.threshold = threshold;

}

@Override

public boolean filter(String value) throws Exception {

if (value.split("=").length == 2)

// 阈值过滤

return Double.valueOf(value.split("=")[1]) > threshold;

else return false;

}

}

自定义MapFunction函数:后缀为2的,添加上特殊信息

package org.example.funtion;

import org.apache.flink.api.common.functions.MapFunction;

public class ActionMapFunction implements MapFunction<String, String> {

@Override

public String map(String value) throws Exception {

System.out.println("value:" + value);

if (value.endsWith("2"))

return value.concat(":Special processing information");

else return value;

}

}

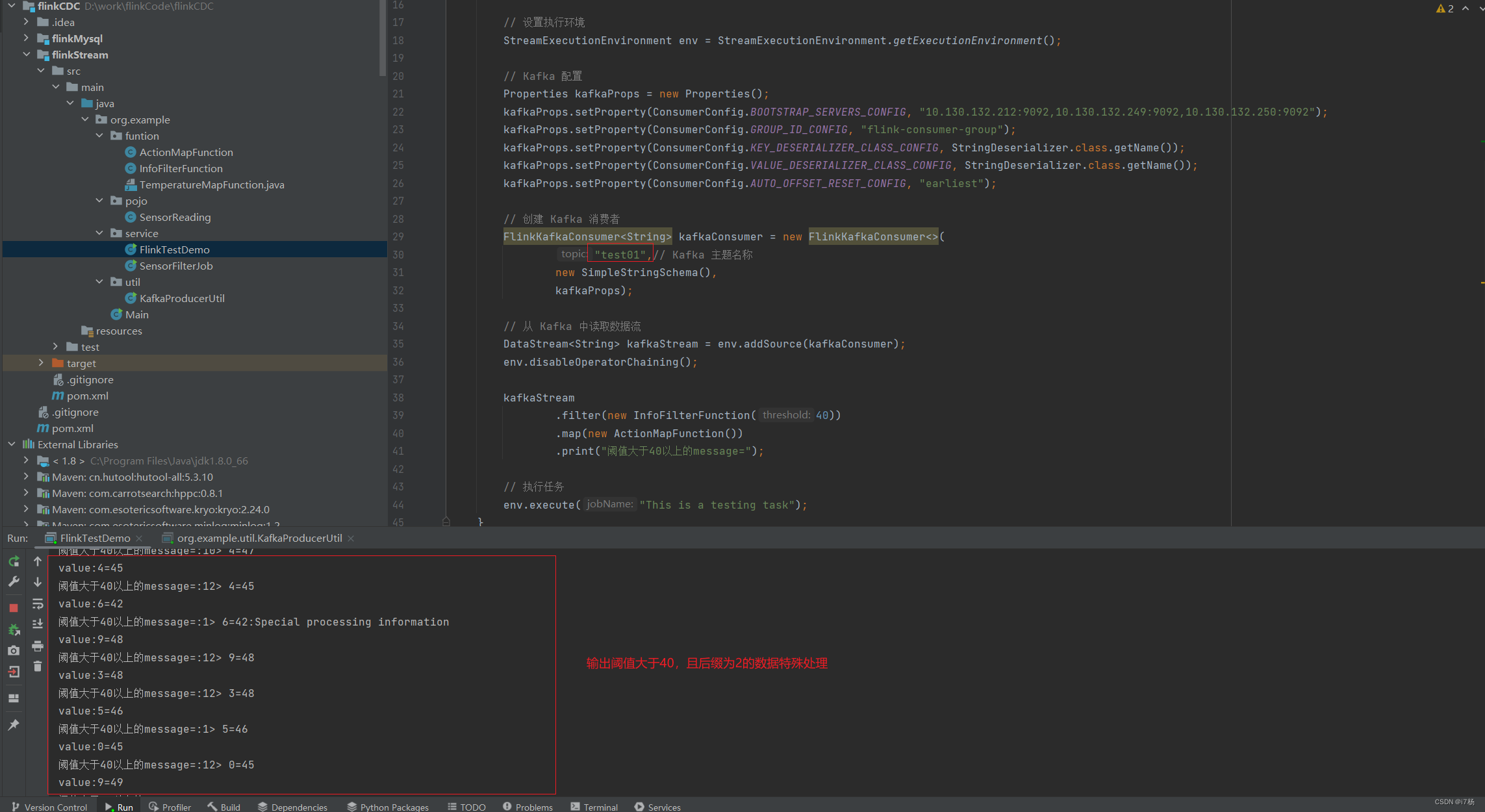

2.2.4 flink任务代码

任务逻辑:使用kafka工具产生数据,然后监控kafka的topic,讲几个函数串起来,输出结果;

package org.example.service;

import org.apache.flink.api.common.serialization.SimpleStringSchema;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer;

import org.apache.kafka.clients.consumer.ConsumerConfig;

import org.apache.kafka.common.serialization.StringDeserializer;

import org.example.funtion.ActionMapFunction;

import org.example.funtion.InfoFilterFunction;

import java.util.*;

public class FlinkTestDemo {

public static void main(String[] args) throws Exception {

// 设置执行环境

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Kafka 配置

Properties kafkaProps = new Properties();

kafkaProps.setProperty(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "ip1:9092,ip2:9092,ip3:9092");

kafkaProps.setProperty(ConsumerConfig.GROUP_ID_CONFIG, "flink-consumer-group");

kafkaProps.setProperty(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());

kafkaProps.setProperty(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());

kafkaProps.setProperty(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, "earliest");

// 创建 Kafka 消费者

FlinkKafkaConsumer<String> kafkaConsumer = new FlinkKafkaConsumer<>(

"test01",// Kafka 主题名称

new SimpleStringSchema(),

kafkaProps);

// 从 Kafka 中读取数据流

DataStream<String> kafkaStream = env.addSource(kafkaConsumer);

env.disableOperatorChaining();

kafkaStream

.filter(new InfoFilterFunction(40))

.map(new ActionMapFunction())

.print("阈值大于40以上的message=");

// 执行任务

env.execute("This is a testing task");

}

}

运行结果:

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。 如若内容造成侵权/违法违规/事实不符,请联系我的编程经验分享网邮箱:chenni525@qq.com进行投诉反馈,一经查实,立即删除!

- Python教程

- 深入理解 MySQL 中的 HAVING 关键字和聚合函数

- Qt之QChar编码(1)

- MyBatis入门基础篇

- 用Python脚本实现FFmpeg批量转换

- HTML5网页设计作业:明星网站设计与实现——明星薛之谦介绍网页设计7个页面HTML+CSS+JavaScript

- 安全生产信息化平台是如何实现“五要素”的动态管理的

- java参数校验 @Validated和@Valid区别

- Go语言认识

- 【2023年度总结】蜕变与挑战

- sqrt(1./x)和sqrt(1.0/x)得到的结果不同 matlab

- Python实现基于广义线性回归模型进行Meta分析(meta_analysis算法)项目实战

- WeNet语音识别分词制作词云图

- 个人网站微信支付 V免签部署

- 深入理解Vue3中的watch与watchEffect的使用与区别