The StorageClass API Resource Object; Ceph Storage; Building a Ceph Cluster; Using Ceph from k8s


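The StorageClass API Resource Object

The main purpose of a StorageClass (SC) is to create PVs automatically, so that a PVC is bound to a PV on demand.

Below, we create an NFS-based SC to demonstrate how this works.

To use an NFS-backed SC, you also need to install an NFS provisioner. The provisioner's configuration holds the NFS details (server IP, shared directory, and so on).

GitHub repository: https://github.com/kubernetes-sigs/nfs-subdir-external-provisioner

Download the source: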

git clone https://github.com/kubernetes-sigs/nfs-subdir-external-provisioner

cd nfs-subdir-external-provisioner/deploy

sed -i 's/namespace: default/namespace: kube-system/' rbac.yaml ## change the namespace to kube-system

kubectl apply -f rbac.yaml ## create the RBAC authorization
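Because `sed -i` rewrites the file in place, it can help to preview the substitution first. The following is a minimal sketch that runs the same expression on a throwaway file; the sample manifest content is an illustrative assumption, not the real rbac.yaml.

```shell
# Create a throwaway file with a made-up manifest snippet (not the real rbac.yaml).
tmp=$(mktemp)
cat > "$tmp" <<'EOF'
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  namespace: default
EOF

# Same expression as above, but without -i, so the result only goes to stdout.
preview=$(sed 's/namespace: default/namespace: kube-system/' "$tmp")
echo "$preview"

rm -f "$tmp"
```

Once the preview looks right, the `-i` form applies the same change to the file itself.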

Edit deployment.yaml

sed -i 's/namespace: default/namespace: kube-system/' deployment.yaml ## change the namespace to kube-system

## You need to modify the lines annotated with ## comments below

spec:
  serviceAccountName: nfs-client-provisioner
  containers:
    - name: nfs-client-provisioner
      image: chronolaw/nfs-subdir-external-provisioner:v4.0.2 ## changed to a Docker Hub image
      volumeMounts:
        - name: nfs-client-root
          mountPath: /persistentvolumes
      env:
        - name: PROVISIONER_NAME
          value: k8s-sigs.io/nfs-subdir-external-provisioner
        - name: NFS_SERVER
          value: 192.168.222.99 ## NFS server address
        - name: NFS_PATH
          value: /data/nfs ## NFS shared directory
  volumes:
    - name: nfs-client-root
      nfs:
        server: 192.168.222.99 ## NFS server address
        path: /data/nfs ## NFS shared directory

Apply the YAML

kubectl apply -f deployment.yaml

kubectl apply -f class.yaml ## create the StorageClass

SC YAML example

cat class.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-client
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner # or choose another name; it must match the deployment's PROVISIONER_NAME env
parameters:
  archiveOnDelete: "false" ## do not archive; the data is removed when the PVC is deleted

With the SC in place, we still need a PVC

vi nfsPvc.yaml

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nfspvc
spec:
  storageClassName: nfs-client
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 500Mi

Next, create a Pod that uses the PVC

vi nfsPod.yaml

apiVersion: v1
kind: Pod
metadata:
  name: nfspod
spec:
  containers:
    - name: nfspod
      image: nginx:1.23.2
      volumeMounts:
        - name: nfspv
          mountPath: "/usr/share/nginx/html"
  volumes:
    - name: nfspv
      persistentVolumeClaim:
        claimName: nfspvc
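With this provisioner, each dynamically provisioned PV is backed by a subdirectory of the NFS export, named `${namespace}-${pvcName}-${pvName}` according to the project's README. A minimal sketch of how that path is composed follows; the PV name is a hypothetical placeholder (read the real one from `kubectl get pvc nfspvc`), and the namespace is assumed to be default because nfsPvc.yaml sets none.

```shell
nfs_path=/data/nfs     # NFS_PATH from deployment.yaml
namespace=default      # assumed: nfsPvc.yaml does not set a namespace
pvc_name=nfspvc        # metadata.name from nfsPvc.yaml
pv_name=pvc-00000000-0000-0000-0000-000000000000   # hypothetical placeholder

# Directory on the NFS server that should hold the Pod's /usr/share/nginx/html data.
backing_dir="${nfs_path}/${namespace}-${pvc_name}-${pv_name}"
echo "$backing_dir"
```

Writing an index.html into that directory on the NFS server is a quick way to check that the Pod really serves content from the share.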

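Ceph Storage

Note: there are two ways for Kubernetes to use Ceph as storage. One is to deploy Ceph inside Kubernetes, which requires a tool called rook; the other is to use an external Ceph cluster, which means Ceph has to be deployed separately.

Below, we use the second approach.

Building a Ceph Cluster

1) Preparation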

| Machine No. | Hostname | IP |
|---|---|---|
| 1 | ceph1 | 192.168.222.111 |
| 2 | ceph2 | 192.168.222.112 |
| 3 | ceph3 | 192.168.222.113 |

Disable SELinux and firewalld, and configure the hostname and /etc/hosts on each machine
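As a small sketch of the /etc/hosts part, the entries can be generated from the table above. The helper name `hosts_entries` is hypothetical, and appending to /etc/hosts is left as a comment so the snippet itself changes nothing.

```shell
# hosts_entries: print one /etc/hosts line per node from the table above.
hosts_entries() {
  cat <<'EOF'
192.168.222.111 ceph1
192.168.222.112 ceph2
192.168.222.113 ceph3
EOF
}

hosts_entries
# To apply (as root, on every node): hosts_entries >> /etc/hosts
# Set each node's own name with, e.g.:  hostnamectl set-hostname ceph1
```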

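Give every machine at least one dedicated disk (adding a virtual disk is easy under VMware); it does not need to be formatted.

Install the chrony time synchronization service on all machines

yum install -y chrony

systemctl start chronyd

systemctl enable chronyd

Set up the yum repository (on ceph1)

vi /etc/yum.repos.d/ceph.repo # with the following content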

cat /etc/yum.repos.d/ceph.repo

[ceph]
name=ceph
baseurl=http://mirrors.aliyun.com/ceph/rpm-pacific/el8/x86_64/
gpgcheck=0
priority=1

[ceph-noarch]
name=cephnoarch
baseurl=http://mirrors.aliyun.com/ceph/rpm-pacific/el8/noarch/
gpgcheck=0
priority=1

[ceph-source]
name=Ceph source packages
baseurl=http://mirrors.aliyun.com/ceph/rpm-pacific/el8/SRPMS
gpgcheck=0
priority=1

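Install docker-ce on all machines (Ceph is deployed as Docker containers)

First install the yum-utils package

yum install -y yum-utils

Add the official Docker yum repository; skip this if it is already configured

yum-config-manager \
    --add-repo \
    https://download.docker.com/linux/centos/docker-ce.repo

Install docker-ce

yum install -y docker-ce

Start the service

systemctl start docker

systemctl enable docker

Install python3 and lvm2 (on all three machines)

yum install -y python3 lvm2

2) Install cephadm (run on ceph1)

yum install -y cephadm

3) Deploy Ceph with cephadm (on ceph1)

cephadm bootstrap --mon-ip 192.168.222.111

Note the dashboard username and password printed in the output

4) Access the dashboard

https://192.168.222.111:8443

After changing the password, log in to the console with the new password

5) Add hosts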

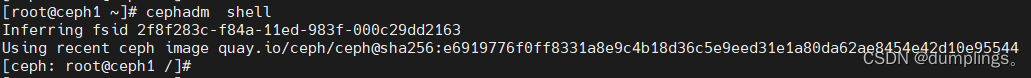

First enter the ceph shell (on ceph1)

cephadm shell ## drops you into the ceph shell

Export the cluster's SSH public key

[ceph: root@ceph1 /]# ceph cephadm get-pub-key > ~/ceph.pub

Set up passwordless login to the other two machines

[ceph: root@ceph1 /]# ssh-copy-id -f -i ~/ceph.pub root@ceph2

[ceph: root@ceph1 /]# ssh-copy-id -f -i ~/ceph.pub root@ceph3

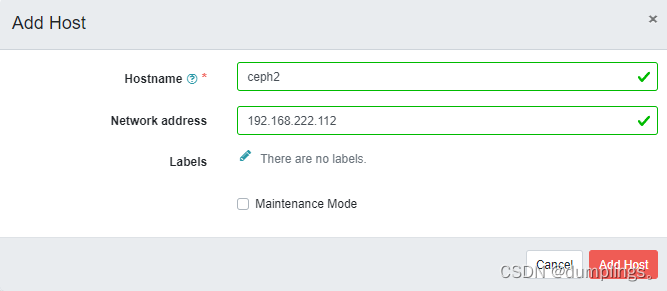

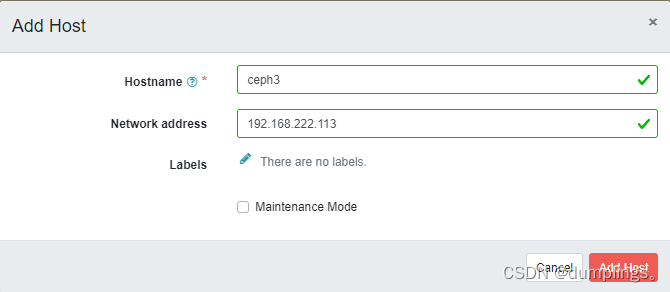

In the browser (the dashboard), add the hosts

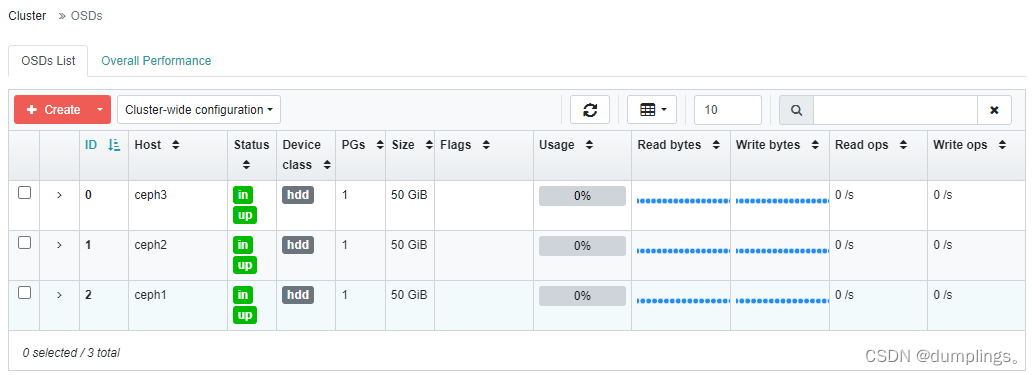

6) Create OSDs (in the ceph shell, on ceph1)

Assume the newly added disk on each of the three machines is /dev/sdb

ceph orch daemon add osd ceph1:/dev/sdb

ceph orch daemon add osd ceph2:/dev/sdb

ceph orch daemon add osd ceph3:/dev/sdb

List the devices:

ceph orch device ls

They are now visible in the dashboard as well

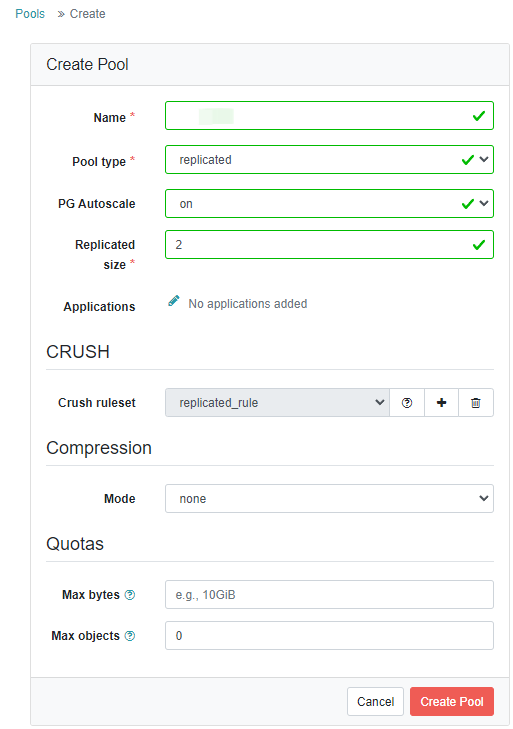

7) Create a pool (the following steps use a pool named tang-1)
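No command is listed for this step. As an assumption, one possible CLI equivalent inside `cephadm shell` is `ceph osd pool create`; the pool name tang-1 is taken from the later steps, the function name `create_pool` is hypothetical, and the guard only keeps the snippet harmless on machines without the ceph CLI.

```shell
# create_pool NAME: create a replicated pool with the cluster's default settings.
create_pool() {
  pool=$1
  ceph osd pool create "$pool"
}

if command -v ceph >/dev/null 2>&1; then
  create_pool tang-1
else
  echo "ceph CLI not found; run this inside 'cephadm shell' on ceph1"
fi
```

The pool can equally be created from the dashboard; either way it should appear in `ceph osd pool ls` afterwards.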

8) Check the cluster status

ceph -s

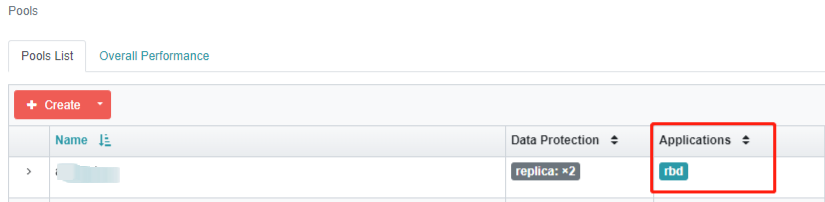

9) Enable the rbd application on the tang-1 pool

ceph osd pool application enable tang-1 rbd

10) Initialize the pool

rbd pool init tang-1

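Using Ceph from k8s

1) Get the Ceph cluster information and the admin user's key (on the Ceph side)

# Get the cluster information

[ceph: root@ceph1 /]# ceph mon dump

epoch 3

fsid 2f8f283c-f84a-11ed-983f-000c29dd2163 ## this value is used later as the clusterID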

last_changed 2023-05-22T04:08:00.684893+0000

created 2023-05-22T02:44:15.871443+0000

min_mon_release 16 (pacific)

election_strategy: 1

0: [v2:192.168.222.111:3300/0,v1:192.168.222.111:6789/0] mon.ceph1

1: [v2:192.168.222.112:3300/0,v1:192.168.222.112:6789/0] mon.ceph2

2: [v2:192.168.222.113:3300/0,v1:192.168.222.113:6789/0] mon.ceph3

dumped monmap epoch 3

# Get the admin user's key

[ceph: root@ceph1 /]# ceph auth get-key client.admin ; echo

AQD/1mpkHaTTCxAAqETAy48Z/aChnMso92d+ug== # this key is used later
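The two pieces of `ceph mon dump` output needed later are the fsid and the v1 (port 6789) monitor addresses. A minimal sketch of pulling them out with awk and sed is shown below, run against lines copied from the output above; the helper names `extract_fsid` and `extract_v1_mons` are hypothetical.

```shell
# Lines copied from the `ceph mon dump` output above.
mon_dump='epoch 3
fsid 2f8f283c-f84a-11ed-983f-000c29dd2163
0: [v2:192.168.222.111:3300/0,v1:192.168.222.111:6789/0] mon.ceph1
1: [v2:192.168.222.112:3300/0,v1:192.168.222.112:6789/0] mon.ceph2
2: [v2:192.168.222.113:3300/0,v1:192.168.222.113:6789/0] mon.ceph3'

# extract_fsid: print the value that follows the "fsid" keyword.
extract_fsid() {
  awk '$1 == "fsid" { print $2 }'
}

# extract_v1_mons: print each monitor's v1 address as ip:port, one per line.
extract_v1_mons() {
  sed -n 's/.*v1:\([0-9.]*:[0-9]*\)\/.*/\1/p'
}

fsid=$(printf '%s\n' "$mon_dump" | extract_fsid)
mons=$(printf '%s\n' "$mon_dump" | extract_v1_mons)
echo "clusterID: $fsid"
echo "$mons"
```

On a live cluster the same helpers can be fed directly, for example `ceph mon dump 2>/dev/null | extract_fsid`.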

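2) Download and import the images

Download the required images in advance, so that container startup is not held up by slow or failed image pulls

You can download them on one node and then copy them to the other nodes

# Download the images (on one node)

wget -P /tmp/ https://d.frps.cn/file/tools/ceph-csi/k8s_1.24_ceph-csi.tar

# Copy to the other nodes

scp /tmp/k8s_1.24_ceph-csi.tar tanglinux02:/tmp/

scp /tmp/k8s_1.24_ceph-csi.tar tanglinux03:/tmp/

# Import the images (on every k8s node)

ctr -n k8s.io i import /tmp/k8s_1.24_ceph-csi.tar

3) Create the Ceph provisioner

Create a ceph directory; all the YAML files that follow go into it

mkdir ceph

cd ceph

Create secret.yaml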

cat > secret.yaml <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: csi-rbd-secret
  namespace: default
stringData:
  userID: admin
  userKey: AQBnanBkRTh0DhAAJVBdxOySVUasyOJiMAibYQ== # the admin key obtained above; use the value from your own cluster
EOF
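`stringData` lets you write the values in plain text; Kubernetes stores them base64-encoded under `data`, which is what `kubectl get secret csi-rbd-secret -o yaml` displays. A small sketch of that encoding, using the `userID` value from above:

```shell
# Encode the plain-text userID the way it appears under .data in the stored Secret.
encoded=$(printf '%s' 'admin' | base64)
echo "$encoded"

# Decode it again to confirm the round trip.
decoded=$(printf '%s' "$encoded" | base64 -d)
echo "$decoded"
```

The same `base64 -d` step is handy for checking that the stored `userKey` matches the output of `ceph auth get-key client.admin`.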

Create csi-config-map.yaml (clusterID is the fsid reported by `ceph mon dump` on your cluster; monitors are the v1 addresses of the mons)

cat > csi-config-map.yaml <<EOF
apiVersion: v1
kind: ConfigMap
metadata:
  name: "ceph-csi-config"
data:
  config.json: |-
    [
      {
        "clusterID": "0fd45688-fb9d-11ed-b585-000c29dd2163",
        "monitors": [
          "192.168.222.111:6789",
          "192.168.222.112:6789",
          "192.168.222.113:6789"
        ]
      }
    ]
EOF
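Since the clusterID and the monitor list differ per cluster, the `config.json` body can also be generated instead of typed by hand. The following is a minimal sketch; `render_csi_config` is a hypothetical helper, and the arguments simply repeat the values used above.

```shell
# render_csi_config CLUSTER_ID MON [MON...]
# Prints a config.json body in the same shape as the one above.
render_csi_config() {
  cluster_id=$1
  shift
  printf '[\n  {\n    "clusterID": "%s",\n    "monitors": [\n' "$cluster_id"
  first=1
  for mon in "$@"; do
    # Emit the separating comma before every entry except the first,
    # so the last entry is never followed by a comma.
    [ "$first" -eq 1 ] || printf ',\n'
    printf '      "%s"' "$mon"
    first=0
  done
  printf '\n    ]\n  }\n]\n'
}

config_json=$(render_csi_config 0fd45688-fb9d-11ed-b585-000c29dd2163 \
  192.168.222.111:6789 192.168.222.112:6789 192.168.222.113:6789)
echo "$config_json"
```

The printed JSON would then be pasted (indented) under the `config.json: |-` key of the ConfigMap.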

Create ceph-conf.yaml

cat > ceph-conf.yaml <<EOF
apiVersion: v1
kind: ConfigMap
data:
  ceph.conf: |
    [global]
    auth_cluster_required = cephx
    auth_service_required = cephx
    auth_client_required = cephx
  # keyring is a required key and its value should be empty
  keyring: |
metadata:
  name: ceph-config
EOF

Create csi-kms-config-map.yaml (this config is intentionally empty)

cat > csi-kms-config-map.yaml <<EOF
---
apiVersion: v1
kind: ConfigMap
data:
  config.json: |-
    {}
metadata:
  name: ceph-csi-encryption-kms-config
EOF

Download the remaining RBAC and provisioner YAML files

wget https://d.frps.cn/file/tools/ceph-csi/csi-provisioner-rbac.yaml

wget https://d.frps.cn/file/tools/ceph-csi/csi-nodeplugin-rbac.yaml

wget https://d.frps.cn/file/tools/ceph-csi/csi-rbdplugin.yaml

wget https://d.frps.cn/file/tools/ceph-csi/csi-rbdplugin-provisioner.yaml

Apply all the YAML files (note: the current directory must be the ceph directory)

for f in *.yaml; do echo "$f"; kubectl apply -f "$f"; done

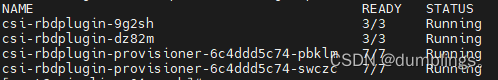

Check the provisioner pods; they should all be in the Running state

kubectl get po
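With several pods in the listing, filtering for anything that is not yet Running can save some squinting. The sketch below works on the text output of `kubectl get po`; the sample listing, pod names and READY counts are purely illustrative, and `not_running` is a hypothetical helper.

```shell
# Hypothetical `kubectl get po` output; pod names and counts are illustrative only.
sample='NAME                                        READY   STATUS              RESTARTS   AGE
csi-rbdplugin-abcde                         3/3     Running             0          2m
csi-rbdplugin-provisioner-0123456789-fghij  7/7     Running             0          2m
csi-rbdplugin-provisioner-0123456789-klmno  0/7     ContainerCreating   0          2m'

# not_running: print name and status of every pod whose STATUS column is not "Running".
not_running() {
  awk 'NR > 1 && $3 != "Running" { print $1, $3 }'
}

pending=$(printf '%s\n' "$sample" | not_running)
echo "$pending"
# Against a real cluster: kubectl get po | not_running
```

An empty result means every listed pod has reached Running.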

4) Create the StorageClass

On the k8s side, create ceph-sc.yaml

cat > ceph-sc.yaml <<EOF
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: csi-rbd-sc # StorageClass name
provisioner: rbd.csi.ceph.com # CSI driver
parameters:
  clusterID: 0fd45688-fb9d-11ed-b585-000c29dd2163 # Ceph cluster ID (the fsid of your cluster)
  pool: tang-1 # pool name
  imageFeatures: layering # RBD image features
  csi.storage.k8s.io/provisioner-secret-name: csi-rbd-secret
  csi.storage.k8s.io/provisioner-secret-namespace: default
  csi.storage.k8s.io/controller-expand-secret-name: csi-rbd-secret
  csi.storage.k8s.io/controller-expand-secret-namespace: default
  csi.storage.k8s.io/node-stage-secret-name: csi-rbd-secret
  csi.storage.k8s.io/node-stage-secret-namespace: default
reclaimPolicy: Delete # reclaim policy for PVs created from this class
allowVolumeExpansion: true # allow volumes to be expanded
mountOptions: # PersistentVolumes dynamically created by this StorageClass use the mount options listed here
  - discard
EOF

## Apply the YAML

kubectl apply -f ceph-sc.yaml

5) Create the PVC

On the k8s side, create ceph-pvc.yaml

cat > ceph-pvc.yaml <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ceph-pvc # PVC name
spec:
  accessModes:
    - ReadWriteOnce # access mode
  resources:
    requests:
      storage: 1Gi # requested capacity
  storageClassName: csi-rbd-sc
EOF

# Apply the YAML

kubectl apply -f ceph-pvc.yaml

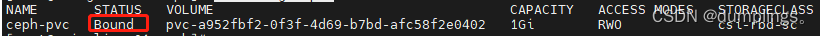

Check the PVC status; STATUS must be Bound

kubectl get pvc

6) Create a Pod that uses the Ceph storage

cat > ceph-pod.yaml <<EOF
apiVersion: v1
kind: Pod
metadata:
  name: ceph-pod
spec:
  containers:
    - name: ceph-ng
      image: nginx:1.23.2
      volumeMounts:
        - name: ceph-mnt
          mountPath: /mnt
          readOnly: false
  volumes:
    - name: ceph-mnt
      persistentVolumeClaim:
        claimName: ceph-pvc
EOF

kubectl apply -f ceph-pod.yaml

Check the PV

kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

pvc-a952fbf2-0f3f-4d69-b7bd-afc58f2e0402 1Gi RWO Delete Bound default/ceph-pvc csi-rbd-sc 1h

On the Ceph side, list the RBD images

[ceph: root@ceph1 /]# rbd ls tang-1

tang-img1

csi-vol-ca03a97d-f985-11ed-ab20-9e95af1f105a

Check the mount inside the Pod

kubectl exec -it ceph-pod -- df

Filesystem 1K-blocks Used Available Use% Mounted on

overlay 18375680 11418696 6956984 63% /

tmpfs 65536 0 65536 0% /dev

tmpfs 914088 0 914088 0% /sys/fs/cgroup

/dev/rbd0 996780 24 980372 1% /mnt

/dev/sda3 18375680 1141866 6956984 63% /etc/hosts

shm 65536 0 65536 0% /dev/shm

tmpfs 1725780 12 1725768 1% /run/secrets/kubernetes.io/serviceaccount

tmpfs 914088 0 914088 0% /proc/acpi

tmpfs 914088 0 914088 0% /proc/scsi

tmpfs 914088 0 914088 0% /sys/firmware
