Image Fusion Paper Reading: DDFM: Denoising Diffusion Model for Multi-Modality Image Fusion

@article{zhao2023ddfm,

title={DDFM: denoising diffusion model for multi-modality image fusion},

author={Zhao, Zixiang and Bai, Haowen and Zhu, Yuanzhi and Zhang, Jiangshe and Xu, Shuang and Zhang, Yulun and Zhang, Kai and Meng, Deyu and Timofte, Radu and Van Gool, Luc},

journal={arXiv preprint arXiv:2303.06840},

year={2023}

}

Venue: ICCV 2023

Impact factor: -

📖Paper Overview

This paper comes from the same team as CDDFuse.

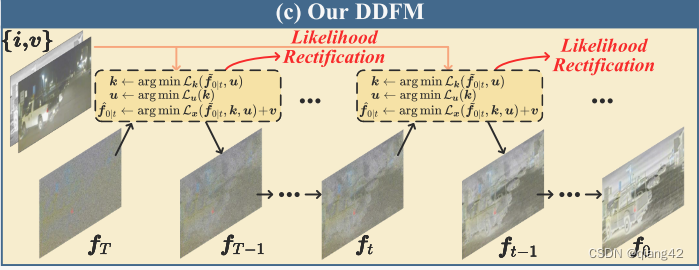

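The authors apply the denoising diffusion probabilistic model (DDPM) to multi-modality image fusion and propose the Denoising Diffusion image Fusion Model (DDFM). Fusion is formulated as a conditional generation problem under the DDPM sampling framework, which is further split into two subproblems: unconditional generation and maximum likelihood.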
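To make the two subproblems a bit more concrete, here is a minimal NumPy sketch of a DDPM-style reverse loop in which each step first estimates the clean image from the current noisy sample (the unconditional generation part) and then nudges that estimate toward the source images (standing in for the maximum likelihood part). Everything named here is an assumption for illustration: `toy_eps_model` is a hypothetical placeholder for a pre-trained noise predictor, and `rectify` is a simple blend toward the mean of the two sources, not the update derived in the paper.

```python
import numpy as np


def make_schedule(num_steps=50, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule (illustrative values, not the paper's settings)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    return betas, alphas, alpha_bars


def toy_eps_model(x_t, t):
    """Hypothetical stand-in for a pre-trained DDPM noise predictor."""
    return np.zeros_like(x_t)


def rectify(x0_hat, ir, vis, weight=0.5):
    """Placeholder for the maximum likelihood subproblem.

    Blends the clean-image estimate with the mean of the two sources.
    This is NOT the paper's update, only a simple substitute.
    """
    target = 0.5 * (ir + vis)
    return (1.0 - weight) * x0_hat + weight * target


def sample_fused(ir, vis, eps_model=toy_eps_model, num_steps=50, seed=0):
    """DDPM-style ancestral sampling with a per-step rectification of x0."""
    rng = np.random.default_rng(seed)
    betas, alphas, alpha_bars = make_schedule(num_steps)
    x = rng.standard_normal(ir.shape)  # start from Gaussian noise
    for t in range(num_steps - 1, -1, -1):
        # Unconditional part: predict noise, then estimate the clean image.
        eps_hat = eps_model(x, t)
        x0_hat = (x - np.sqrt(1.0 - alpha_bars[t]) * eps_hat) / np.sqrt(alpha_bars[t])
        x0_hat = np.clip(x0_hat, -1.0, 1.0)
        # Likelihood part: pull the estimate toward the source images.
        x0_hat = rectify(x0_hat, ir, vis)
        if t == 0:
            return x0_hat
        # Sample x_{t-1} from the DDPM posterior q(x_{t-1} | x_t, x0_hat).
        ab_prev = alpha_bars[t - 1]
        coef_x0 = np.sqrt(ab_prev) * betas[t] / (1.0 - alpha_bars[t])
        coef_xt = np.sqrt(alphas[t]) * (1.0 - ab_prev) / (1.0 - alpha_bars[t])
        var = betas[t] * (1.0 - ab_prev) / (1.0 - alpha_bars[t])
        x = coef_x0 * x0_hat + coef_xt * x + np.sqrt(var) * rng.standard_normal(x.shape)
    return x


# Usage: two random "source images" scaled to [-1, 1].
_rng = np.random.default_rng(1)
ir_img = _rng.uniform(-1.0, 1.0, size=(8, 8))
vis_img = _rng.uniform(-1.0, 1.0, size=(8, 8))
fused = sample_fused(ir_img, vis_img)
```

The point of the sketch is only the control flow: the denoiser never sees the source images, and the conditioning enters solely through the correction applied to the clean-image estimate at each step.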

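🔑Keywords

Diffusion probabilistic model, multi-modality image fusion

💭Core Idea

To be filled in later; there are too many formula derivations, crying.gif

🪢Network Architecture

The network architecture proposed by the authors is shown below.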

📉Loss Function

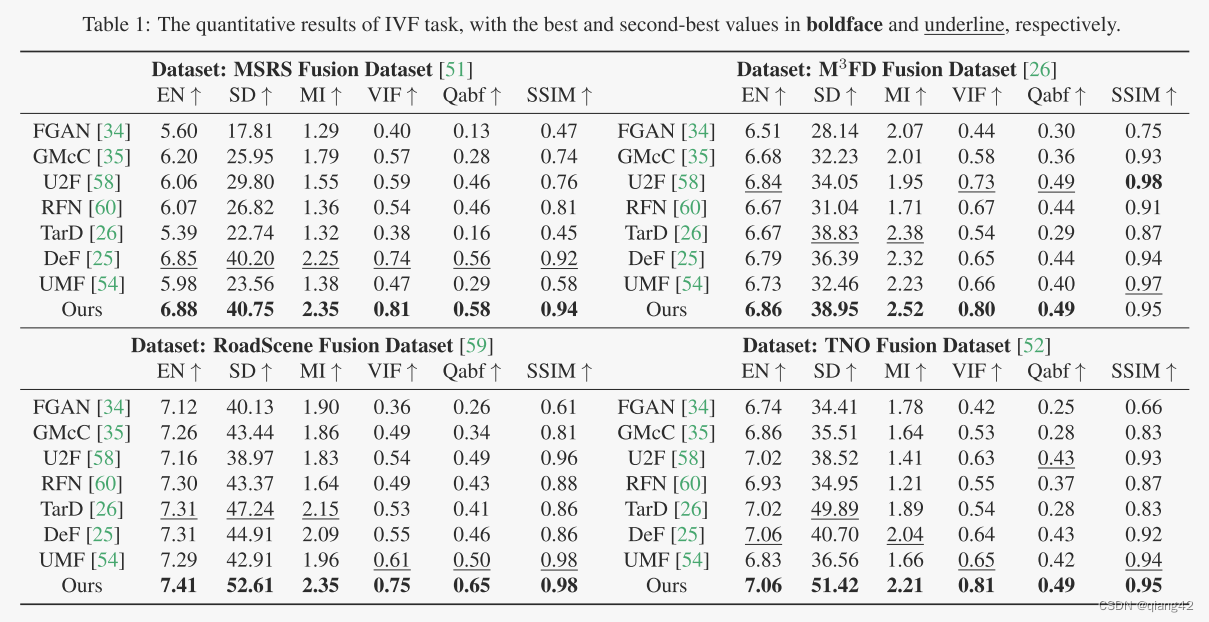

🔢Datasets

- TNO, RoadScene, MSRS, M3FD

Image fusion dataset links

[A roundup of commonly used image fusion datasets]

🎢Training Settings

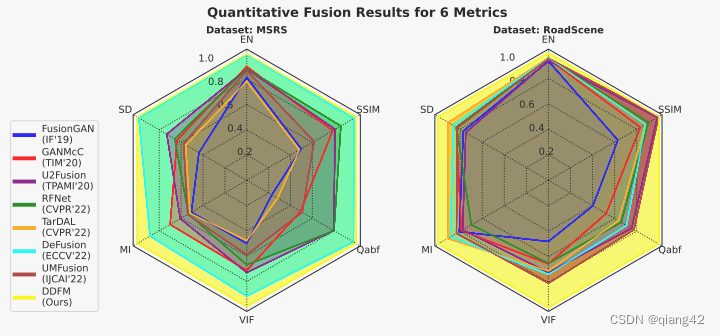

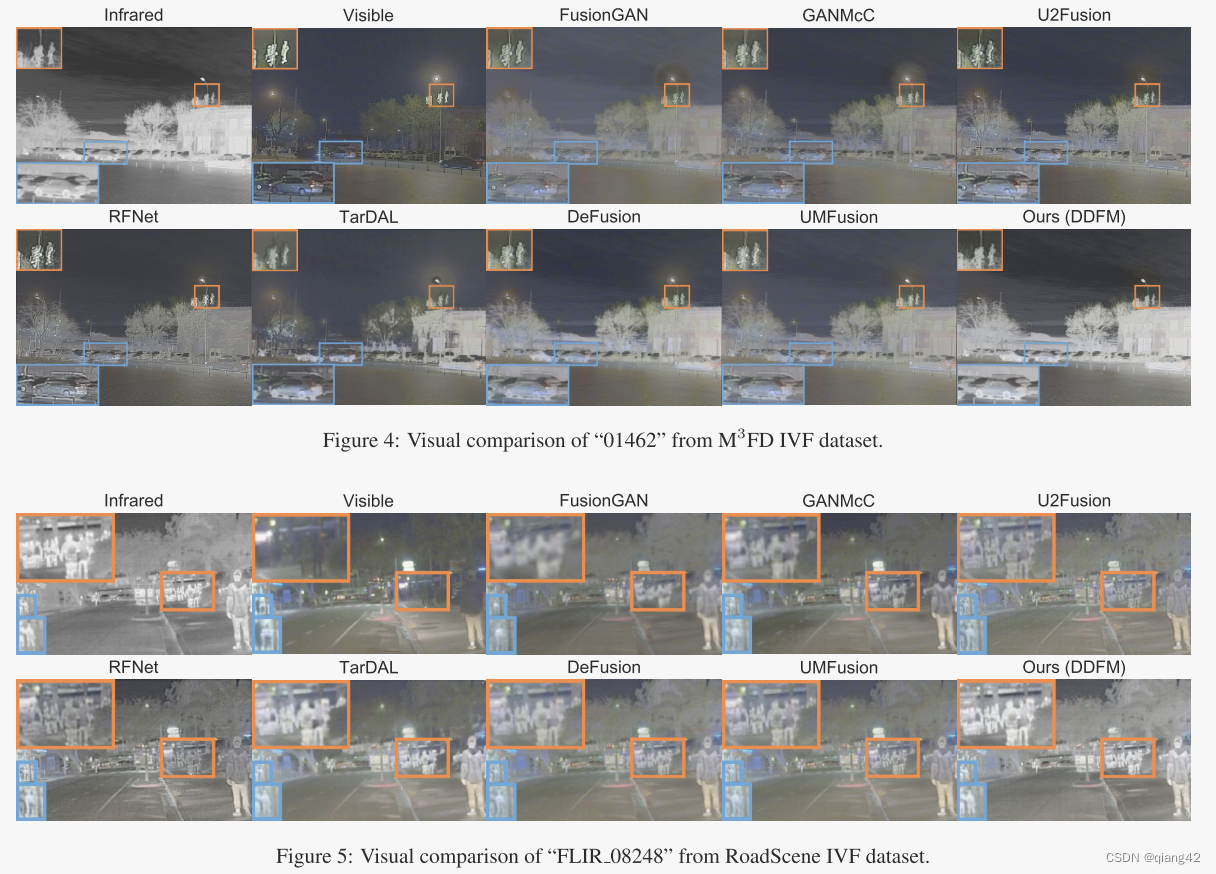

🔬Experiments

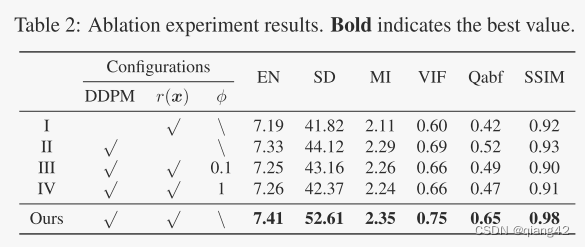

📏Evaluation Metrics

- EN

- SD

- MI

- VIF

- Qabf

- SSIM

References

[Analysis of quantitative metrics for image fusion]
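As a quick illustration of the simpler metrics in the list, the sketch below computes EN (Shannon entropy of the gray-level histogram), SD (standard deviation of pixel intensities), and a histogram-based MI between two images with NumPy. It assumes 8-bit grayscale inputs and 256 bins; the function names are my own, and fusion papers usually report MI between the fused image and each source image, so exact numbers may differ from a given paper's evaluation code. VIF, Qabf, and SSIM are more involved and are not covered here.

```python
import numpy as np


def entropy(img, bins=256):
    """EN: Shannon entropy (in bits) of the gray-level histogram."""
    hist, _ = np.histogram(img, bins=bins, range=(0, 256))
    p = hist / hist.sum()
    p = p[p > 0]  # skip empty bins so log2 is defined
    return float(-np.sum(p * np.log2(p)))


def standard_deviation(img):
    """SD: standard deviation of the pixel intensities."""
    return float(np.std(np.asarray(img, dtype=np.float64)))


def mutual_information(a, b, bins=256):
    """MI (in bits) between two images, from their joint histogram."""
    joint, _, _ = np.histogram2d(
        np.ravel(a), np.ravel(b), bins=bins, range=[[0, 256], [0, 256]]
    )
    pxy = joint / joint.sum()
    px = pxy.sum(axis=1, keepdims=True)  # marginal of a, shape (bins, 1)
    py = pxy.sum(axis=0, keepdims=True)  # marginal of b, shape (1, bins)
    nz = pxy > 0
    return float(np.sum(pxy[nz] * np.log2(pxy[nz] / (px @ py)[nz])))


# Usage: a tiny image that is half black, half white.
demo = np.zeros((4, 4), dtype=np.uint8)
demo[:, 2:] = 255
en_value = entropy(demo)
sd_value = standard_deviation(demo)
mi_value = mutual_information(demo, demo)
```

For all three, higher values are generally read as a fused image that carries more information or contrast, which is why they are reported together rather than on their own.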

🥅Baseline

- FusionGAN, GANMcC, TarDAL, UMFusion, U2Fusion, RFNet, DeFusion

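References

Highly recommended must-read blog post: [Image fusion paper baselines and their network models]

🔬Experimental Results

For more experimental results and analysis, see the original paper:

📖[Paper download link]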

🚀Quick Links

📑Reading notes on image fusion papers

📑[Dif-fusion: Towards high color fidelity in infrared and visible image fusion with diffusion models]

📑[Coconet: Coupled contrastive learning network with multi-level feature ensemble for multi-modality image fusion]

📑[LRRNet: A Novel Representation Learning Guided Fusion Network for Infrared and Visible Images]

📑[(DeFusion)Fusion from decomposition: A self-supervised decomposition approach for image fusion]

📑[ReCoNet: Recurrent Correction Network for Fast and Efficient Multi-modality Image Fusion]

📑[RFN-Nest: An end-to-end residual fusion network for infrared and visible images]

📑[SwinFuse: A Residual Swin Transformer Fusion Network for Infrared and Visible Images]

📑[SwinFusion: Cross-domain Long-range Learning for General Image Fusion via Swin Transformer]

📑[(MFEIF)Learning a Deep Multi-Scale Feature Ensemble and an Edge-Attention Guidance for Image Fusion]

📑[DenseFuse: A fusion approach to infrared and visible images]

📑[DeepFuse: A Deep Unsupervised Approach for Exposure Fusion with Extreme Exposure Image Pair]

📑[GANMcC: A Generative Adversarial Network With Multiclassification Constraints for IVIF]

📑[DIDFuse: Deep Image Decomposition for Infrared and Visible Image Fusion]

📑[IFCNN: A general image fusion framework based on convolutional neural network]

📑[(PMGI) Rethinking the image fusion: A fast unified image fusion network based on proportional maintenance of gradient and intensity]

📑[SDNet: A Versatile Squeeze-and-Decomposition Network for Real-Time Image Fusion]

📑[DDcGAN: A Dual-Discriminator Conditional Generative Adversarial Network for Multi-Resolution Image Fusion]

📑[FusionGAN: A generative adversarial network for infrared and visible image fusion]

📑[PIAFusion: A progressive infrared and visible image fusion network based on illumination aw]

📑[CDDFuse: Correlation-Driven Dual-Branch Feature Decomposition for Multi-Modality Image Fusion]

📑[U2Fusion: A Unified Unsupervised Image Fusion Network]

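📑Survey: [Visible and Infrared Image Fusion Using Deep Learning]

📚Summary of image fusion paper baselines

📑Other papers

📑[3D object detection survey: Multi-Modal 3D Object Detection in Autonomous Driving: A Survey]

🎈Other summaries

🎈[CVPR 2023 and ICCV 2023 paper titles with word-frequency statistics]

Featured article roundups

[The most complete collection of image fusion papers and code]

[A roundup of commonly used image fusion datasets]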

For questions, contact: 420269520@qq.com
