pytorch集智4-情绪分类器

发布时间:2024年01月15日

1 目标

从中文文本中识别出句子里的情绪。和上一章节单车预测回归问题相比,这个问题是分类问题,不是回归问题

2 神经网络分类器

2.1 如何用神经网络分类

第二章节用torch.nn.Sequantial做的回归预测器,输出神经元只有一个。分类器和其区别如下

1 分类器输出单元有几个,就有几个分类

2 分类器各输出单元输出值加和为1(值表示某个预测分类的概率,概率求和为1)

3 回归预测最后一层可以用sigmoid,分类不行,因为sigmoid无法保证分类器输出单元值求和为1,可以用softmax,表达式如下

2.2 分类问题损失函数

分类问题损失函数一般用交叉熵:

N表示样本数量,Ci表示第i个样本的类别标签,y表示每个样本的损失值

3 词袋模型分类器

直白理解:就是统计句子里各词语出现数量,忽略语法,语义等因素

思想:1 将句子转化为词袋,然后可以onehot处理,方便用神经网络预测;2 大概思想:在词袋里寻找和输出相关关键词语,然后统计词语数量,哪个输出类别的敏感词语统计数量高,结果就是哪个输出类别

数据预处理:可以归一化处理,网络计算会更快

3.1 简单文本分类器

背景与数据:获取网购商城顾客评论信息,从评论信息获取句子情绪

神经网络组成:用前馈神经网络,有3层,分别是输入,中间,输出层,输出层数量有两个,分别代表情绪是正面还是负面

3.2 程序实现

3.2.1 数据处理

主要步骤:处理标点,句子分词,建立词表

标点处理:正则,涉及的标点全部删除

句子分词:使用jieba模块对句子分词

建立词表:python字典建立词表

def deal_punc(sentence):

sentence = re.sub('[\s+\.\!\/_,$%^*(+\"\'“”《》?]+|[+——!,。?、~@#¥%……&*():)]+', '', sentence)

return sentence

def prepare_data(good_file, bad_file, is_filter=True):

print('prepare data begin')

all_words = []

pos_sen, neg_sen = [], []

for path, sen in zip((good_file, bad_file), (pos_sen, neg_sen)):

with open(path, 'r', encoding='utf-8') as f:

for index, line in enumerate(f):

if is_filter:

line = deal_punc(line)

words = jieba.lcut(line)

if len(words) > 0:

all_words += words

sen.append(words)

print(f'{path} include {index} rows, all words:{len(all_words)}')

print(f'pos_sen len:{len(pos_sen)}, neg_sen len:{len(neg_sen)}')

diction = {}

cnt = Counter(all_words)

for word, freq in cnt.items():

diction[word] = [len(diction), freq]

print(f'diction len:{len(diction)}')

return (pos_sen, neg_sen, diction)

def word2index(word, diction):

if word in diction:

value = diction[word][0]

else:

value = -1

return (value)

def index2word(index, diction):

for w, v in diction.items():

if v[0] == index:

return (w)

return (None)

3.2.2 文本数据向量化

pos_sen, neg_sen, diction = prepare_data_sample(good_file, bad_file)

st = sorted([(v[1], w) for w, v in diction.items()])

datasets, labels, sentences = [], [], []

'''

for sens, tag in zip((pos_sen, neg_sen), (0, 1)):

for sen in sens:

new_sen = []

for l in sen:

if l in diction:

new_sen.append(word2index_sample(1, diction))

datasets.append(sentence2vec_sample(new_sen, diction))

labels.append(tag)

sentences.append(sen)

'''

for sentence in pos_sen:

new_sentence = []

for l in sentence:

if l in diction:

new_sentence.append(word2index(l, diction))

datasets.append(sentence2vec_sample(new_sentence, diction))

labels.append(0) #正标签为0

sentences.append(sentence)

# 处理负向评论

for sentence in neg_sen:

new_sentence = []

for l in sentence:

if l in diction:

new_sentence.append(word2index(l, diction))

datasets.append(sentence2vec_sample(new_sentence, diction))

labels.append(1) #负标签为1

sentences.append(sentence)

indices = np.random.permutation(len(datasets))

datasets = [datasets[i] for i in indices]

labels = [labels[i] for i in indices]

sentences = [sentences[i] for i in indices]3.3.3 划分数据集

训练集,校验集,测试集,比例一般为10:1:1

# split train, test, verify datasets

test_size = int(len(datasets) // 10)

train_data = datasets[2 * test_size :]

train_label = labels[2 * test_size : ]

valid_data = datasets[: test_size]

valid_label = labels[: test_size]

test_data = datasets[test_size : 2 * test_size]

test_label = labels[test_size : 2 * test_size]3.3.4 建立神经网络

结构:输入层(约7000个样本),一个中间层(10个隐单元),输出层(2个单元)

注意,此处用的是relu,不是sigmoid。原因:虽然x>0时没处理数据,但x<0时为0让relu有了非线性的能力,且运算比sigmoid简单,速度更快,方便反向误差传导

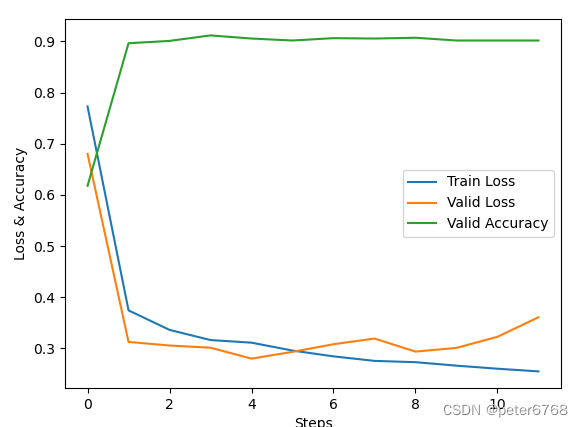

def plot(records):

print('plot begin')

a = [i[0] for i in records]

b = [i[1] for i in records]

c = [i[2] for i in records]

pyplot.plot(a, label='train loss')

pyplot.plot(b, label='verify loss')

pyplot.plot(c, label='valid accuracy')

pyplot.xlabel('step')

pyplot.ylabel('losses & accuracy')

pyplot.legend()

pyplot.show()

def main():

model = torch.nn.Sequential(

torch.nn.Linear(len(diction), 10),

torch.nn.ReLU(),

torch.nn.Linear(10, 2),

torch.nn.LogSoftmax(dim=1),

)

cost = torch.nn.NLLLoss()

optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

records = []

losses = []

for epoch in range(3):

for i, data in enumerate(zip(train_data, train_label)):

x, y = data

x = torch.tensor(x, requires_grad=True, dtype=torch.float).view(1, -1)

y = torch.tensor(np.array([y]), dtype=torch.long)

optimizer.zero_grad()

predict = model(x)

loss = cost(predict, y)

losses.append(loss.data.numpy())

loss.backward()

optimizer.step()

val_losses = []

rights = []

for j, val in enumerate(zip(valid_data, valid_label)):

x, y = val

x = torch.tensor(x, requires_grad=True, dtype=torch.float).view(1, -1)

y = torch.tensor(np.array([y]), dtype = torch.long)

predict = model(x)

right = rightness_sample(predict, y)

rights.append(right)

loss = cost(predict, y)

val_losses.append(loss.data.numpy())

right_ratio = 1.0 * np.sum([i[0] for i in rights]) / np.sum([i[1] for i in rights])

print(f'No.{epoch}, train loss:{np.mean(losses):.4f}, verify loss:{np.mean(val_losses):.4f}, verify accuracy:{right_ratio}')

records.append([np.mean(losses), np.mean(val_losses), right_ratio])

# plot

plot(records)3.3 运行结果

文章来源:https://blog.csdn.net/peter6768/article/details/135578326

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。 如若内容造成侵权/违法违规/事实不符,请联系我的编程经验分享网邮箱:chenni525@qq.com进行投诉反馈,一经查实,立即删除!

本文来自互联网用户投稿,该文观点仅代表作者本人,不代表本站立场。本站仅提供信息存储空间服务,不拥有所有权,不承担相关法律责任。 如若内容造成侵权/违法违规/事实不符,请联系我的编程经验分享网邮箱:chenni525@qq.com进行投诉反馈,一经查实,立即删除!

最新文章

- Python教程

- 深入理解 MySQL 中的 HAVING 关键字和聚合函数

- Qt之QChar编码(1)

- MyBatis入门基础篇

- 用Python脚本实现FFmpeg批量转换

- 吴恩达深度学习l2week1编程作业—Regularization(中文跑通版)

- P1016 [NOIP1999 提高组] 旅行家的预算

- 多层感知机

- 排序嘉年华———选择排序和快排原始版

- 【Unity】音频管理器---拿来即用

- ARM GIC (五)gicv3架构-LPI

- acwing 1358. 约数个数和(莫比乌斯函数)

- List<String> list = map.getOrDefault(key, new ArrayList<String>());中getOrDefault什么意思

- JDK各个版本特性讲解-JDK19特性

- 如何将文件名按1,2,3的顺序批量更改?