Configuring a Hive UDF on CDH 6.3.2

Published: January 22, 2024

Background

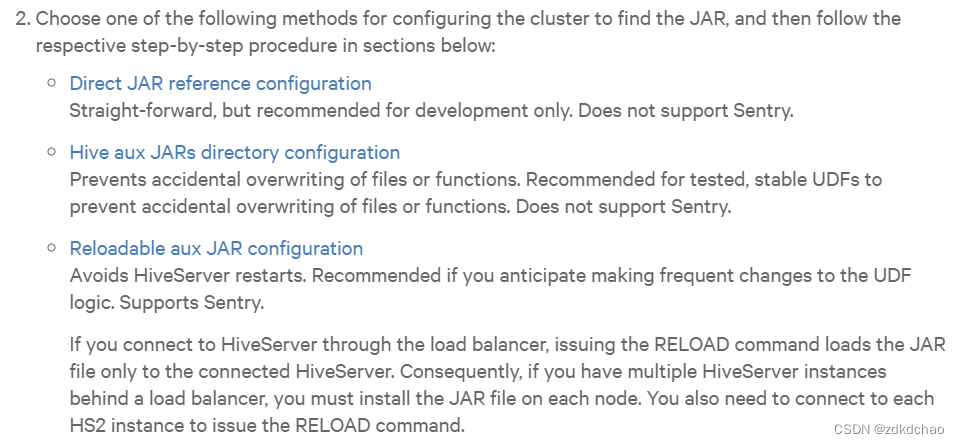

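The official CDH documentation says that setting up a UDF depends on whether you restart HiveServer2 (hs2) and whether Sentry is enabled, and it lists three approaches.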

https://docs.cloudera.com/documentation/enterprise/latest/topics/cm_mc_hive_udf.html

In practice, though, the traditional Hive way of creating a custom UDF worked without any of that:

https://cwiki.apache.org/confluence/display/Hive/LanguageManual+UDF#LanguageManualUDF-CreatingCustomUDFs

pom

    <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
      <modelVersion>4.0.0</modelVersion>
      <groupId>org.example</groupId>
      <artifactId>sm3UDF</artifactId>
      <version>1.0</version>
      <packaging>jar</packaging>
      <name>sm3UDF</name>
      <url>http://maven.apache.org</url>
      <properties>
        <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
      </properties>
      <dependencies>
        <dependency>
          <groupId>org.bouncycastle</groupId>
          <artifactId>bcprov-jdk15on</artifactId>
          <version>1.68</version>
        </dependency>
        <dependency>
          <groupId>org.apache.hadoop</groupId>
          <artifactId>hadoop-common</artifactId>
          <version>3.1.1</version>
        </dependency>
        <!-- <dependency>-->
        <!--   <groupId>junit</groupId>-->
        <!--   <artifactId>junit</artifactId>-->
        <!--   <version>4.13.2</version>-->
        <!--   <scope>test</scope>-->
        <!-- </dependency>-->
        <dependency>
          <groupId>org.apache.hive</groupId>
          <artifactId>hive-exec</artifactId>
          <version>2.1.1-cdh6.3.2</version>
        </dependency>
      </dependencies>
      <build>
        <plugins>
          <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-compiler-plugin</artifactId>
            <version>3.1</version>
            <configuration>
              <source>1.8</source>
              <target>1.8</target>
            </configuration>
          </plugin>
        </plugins>
      </build>
    </project>

java

    package org.picc.encrypt;

    import org.apache.commons.codec.binary.Hex;
    import org.apache.hadoop.hive.ql.exec.UDF;
    import org.apache.hadoop.io.Text;
    import org.bouncycastle.crypto.digests.SM3Digest;

    /** Hive UDF that returns the SM3 digest of a string as a lowercase hex string. */
    public class Sm3Fun extends UDF {

        public String evaluate(String text) {
            // NULL in, NULL out, as Hive users expect from scalar functions.
            if (text == null) {
                return null;
            }
            // Text holds the string as UTF-8; only the first getLength() bytes
            // of the backing array are valid.
            Text value = new Text(text);
            SM3Digest digest = new SM3Digest();
            byte[] hashData = new byte[digest.getDigestSize()]; // 32 bytes for SM3
            digest.update(value.getBytes(), 0, value.getLength());
            digest.doFinal(hashData, 0);
            return Hex.encodeHexString(hashData);
        }
    }
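
With the class above packaged into a jar, the "traditional Hive way" from the Apache wiki comes down to an `ADD JAR` followed by a `CREATE TEMPORARY FUNCTION`. The post does not show the exact statements that were run, so the following is only a sketch: the jar path `/tmp/sm3UDF-1.0.jar` and the function name `sm3` are assumptions chosen for illustration, and the helper class `RegisterSm3Udf` is hypothetical. It simply prints the statements one would paste into beeline or the Hive CLI.

```java
import java.util.Arrays;
import java.util.List;

public class RegisterSm3Udf {

    /**
     * Builds the HiveQL statements for the classic
     * ADD JAR / CREATE TEMPORARY FUNCTION registration flow.
     */
    public static List<String> statements(String jarPath, String functionName, String className) {
        return Arrays.asList(
            // Make the jar visible to the current Hive session.
            "ADD JAR " + jarPath,
            // Bind a SQL function name to the UDF class for this session only.
            "CREATE TEMPORARY FUNCTION " + functionName + " AS '" + className + "'",
            // Quick smoke test of the new function.
            "SELECT " + functionName + "('abc')"
        );
    }

    public static void main(String[] args) {
        // Assumed jar location and function name; adjust both to your environment.
        List<String> stmts = statements("/tmp/sm3UDF-1.0.jar", "sm3", "org.picc.encrypt.Sm3Fun");
        for (String s : stmts) {
            System.out.println(s + ";");
        }
    }
}
```

A temporary function lasts only for the current session, so it has to be registered again after reconnecting. If Sentry is enabled on the cluster, the Cloudera page linked in the background section is the place to check which of its approaches applies.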

Source: https://blog.csdn.net/qq_34224565/article/details/135743658
