diffusers-Inpainting

Published: December 20, 2023

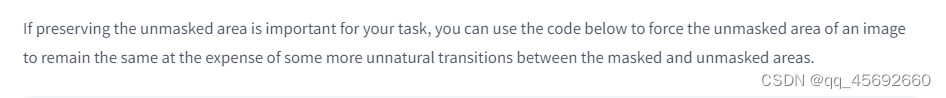

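Pixels in the white mask region come only from the generated image, while pixels outside the white region are taken from the original image. The result, however, is not entirely smooth.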

import PIL.Image

import numpy as np

import torch

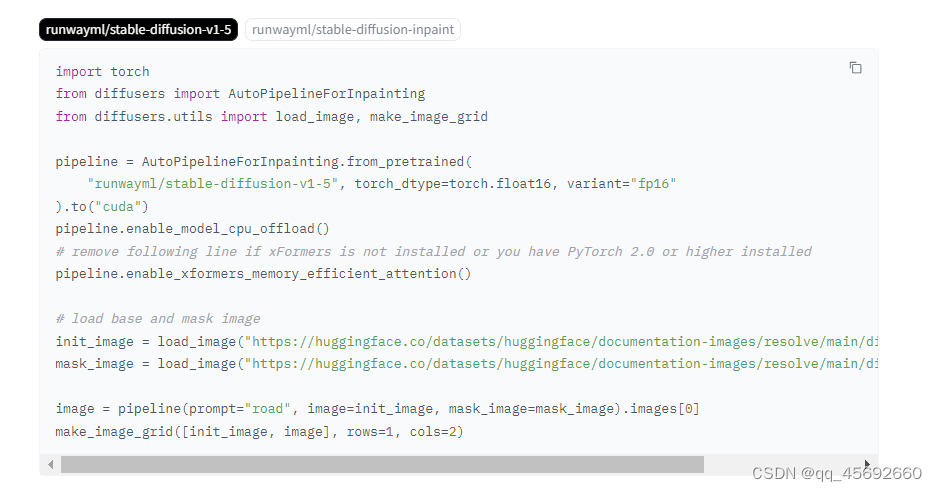

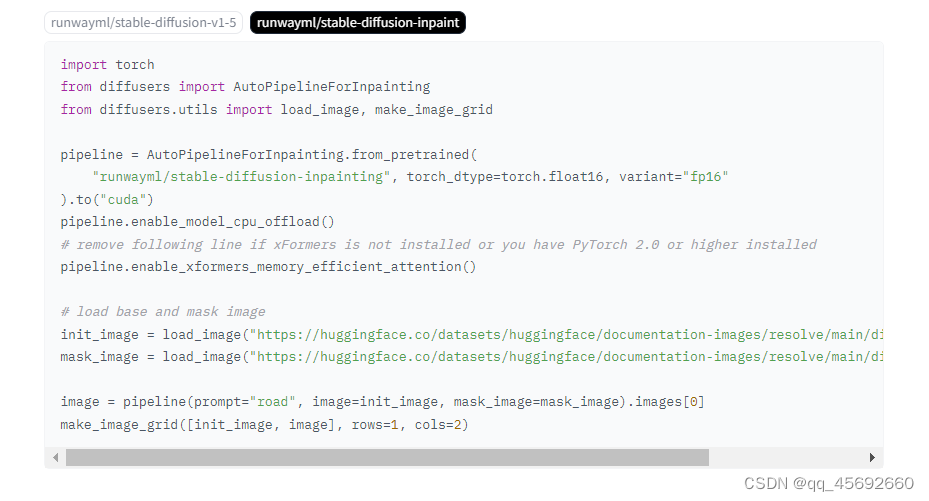

from diffusers import AutoPipelineForInpainting

from diffusers.utils import load_image, make_image_grid

device = "cuda"

pipeline = AutoPipelineForInpainting.from_pretrained(
    "runwayml/stable-diffusion-inpainting",
    torch_dtype=torch.float16,
)

pipeline = pipeline.to(device)

img_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo.png"

mask_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo_mask.png"

init_image = load_image(img_url).resize((512, 512))

mask_image = load_image(mask_url).resize((512, 512))

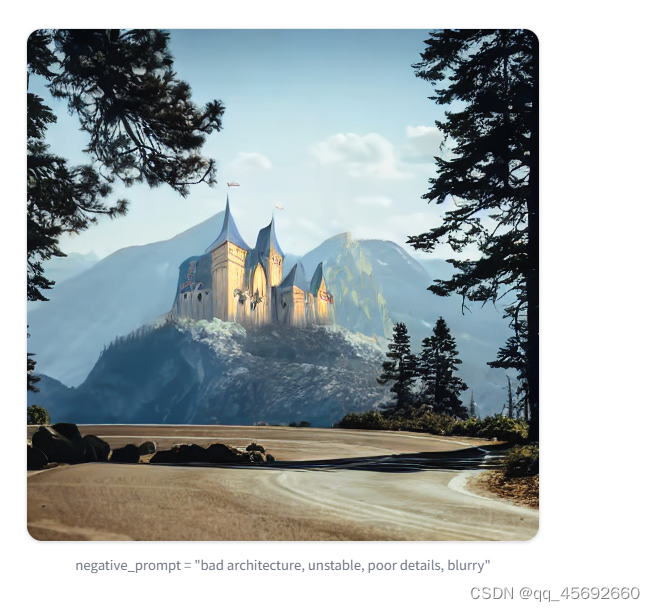

prompt = "Face of a yellow cat, high resolution, sitting on a park bench"

repainted_image = pipeline(prompt=prompt, image=init_image, mask_image=mask_image).images[0]

repainted_image.save("repainted_image.png")

# Convert mask to grayscale NumPy array

mask_image_arr = np.array(mask_image.convert("L"))

# Add a channel dimension to the end of the grayscale mask

mask_image_arr = mask_image_arr[:, :, None]

# Binarize the mask: 1s correspond to the pixels which are repainted

mask_image_arr = mask_image_arr.astype(np.float32) / 255.0

mask_image_arr[mask_image_arr < 0.5] = 0

mask_image_arr[mask_image_arr >= 0.5] = 1

# Take the masked pixels from the repainted image and the unmasked pixels from the initial image

unmasked_unchanged_image_arr = (1 - mask_image_arr) * init_image + mask_image_arr * repainted_image

unmasked_unchanged_image = PIL.Image.fromarray(unmasked_unchanged_image_arr.round().astype("uint8"))

unmasked_unchanged_image.save("force_unmasked_unchanged.png")

make_image_grid([init_image, mask_image, repainted_image, unmasked_unchanged_image], rows=2, cols=2)
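The hard 0/1 mask above switches abruptly between original and repainted pixels, which is one likely reason the result does not look smooth. A minimal sketch of one possible remedy is to feather the mask before blending. The helper name `blend_with_feathered_mask` and the `radius` parameter are assumptions made for illustration, not part of the original post or of diffusers; the sketch uses only Pillow and expects all three images to have the same size.

```python
from PIL import Image, ImageFilter


def blend_with_feathered_mask(init_image, repainted_image, mask_image, radius=8):
    """Blend repainted pixels into the original using a softened mask (illustrative helper)."""
    # Binarize the mask first (white = repaint), mirroring the hard-mask step above.
    hard_mask = mask_image.convert("L").point(lambda v: 255 if v >= 128 else 0)
    # Blur the binary mask so its edge becomes a gradual ramp instead of a step.
    soft_mask = hard_mask.filter(ImageFilter.GaussianBlur(radius))
    # Image.composite takes repainted_image where the mask is white,
    # init_image where it is black, and a weighted mix in between.
    return Image.composite(
        repainted_image.convert("RGB"), init_image.convert("RGB"), soft_mask
    )
```

It could be called as `blend_with_feathered_mask(init_image, repainted_image, mask_image)` with the variables defined earlier. The trade-off is that pixels just outside the mask border are no longer strictly unchanged, since the blurred mask lets a little of the repainted image bleed across the edge.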

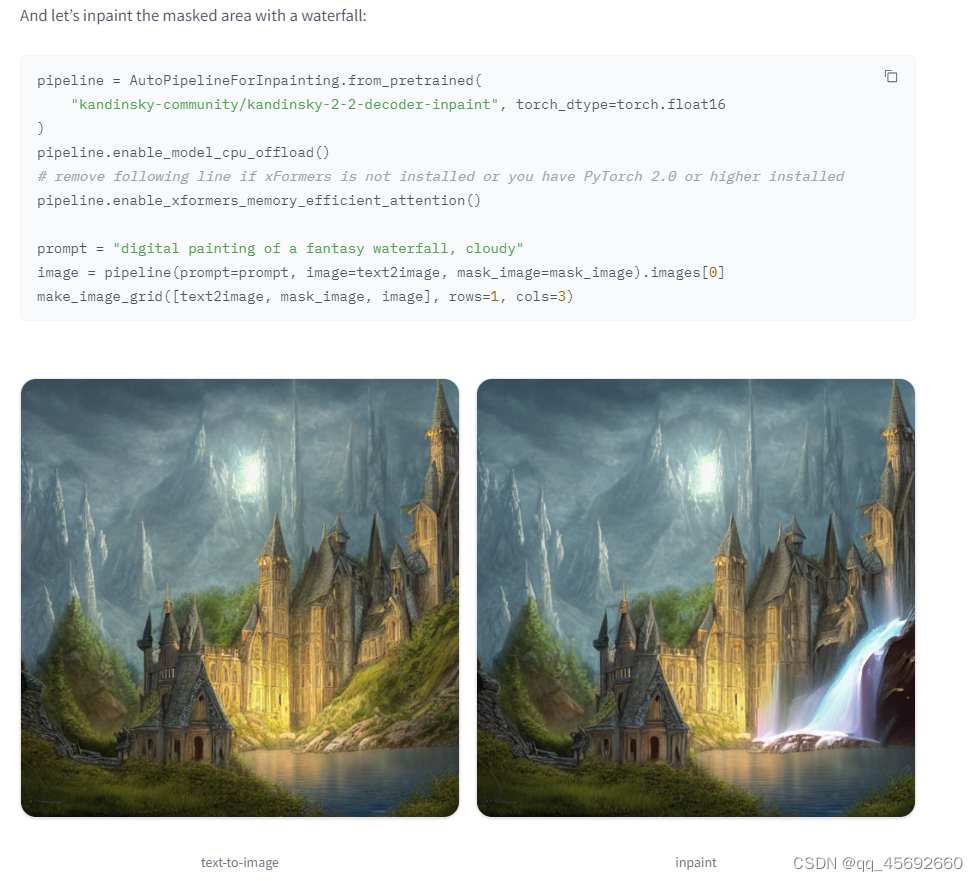

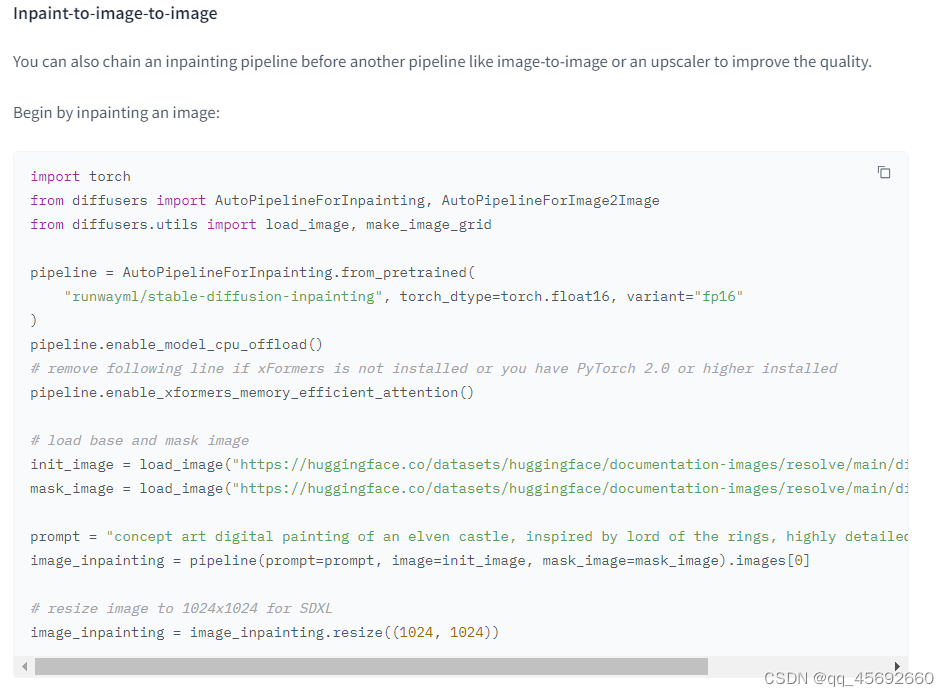

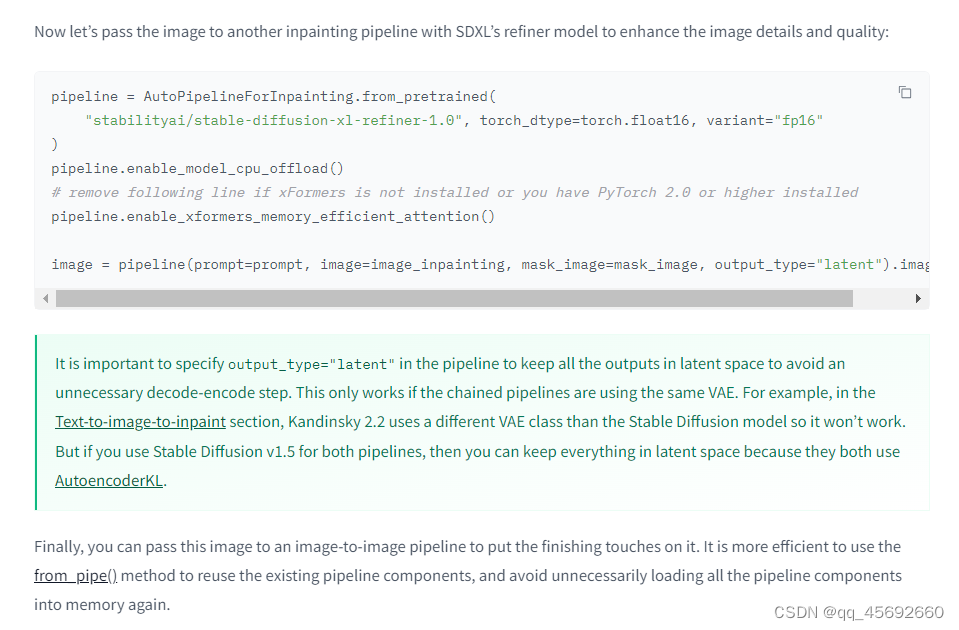

The two pipelines do not use the same VAE. If they did, the first pipeline's output could be left as a latent.
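The note above can be sketched as a small helper that decides what the first pipeline should hand to the second. The function name `run_chained` and the `shared_vae` flag are hypothetical; the only diffusers behavior assumed is that pipelines accept an `output_type` argument (`"latent"` or `"pil"`) and return an object with an `.images` attribute. Whether two specific checkpoints really share a VAE is something you have to verify yourself.

```python
def run_chained(first_pipeline, second_pipeline, prompt, init_image, mask_image, shared_vae):
    """Run two inpainting-style pipelines back to back (illustrative helper)."""
    # Latents are only meaningful to the second pipeline if both pipelines
    # encode and decode with the same VAE; otherwise decode to a PIL image.
    output_type = "latent" if shared_vae else "pil"
    intermediate = first_pipeline(
        prompt=prompt,
        image=init_image,
        mask_image=mask_image,
        output_type=output_type,
    ).images[0]
    # The second pipeline receives either the latent or the decoded image.
    return second_pipeline(
        prompt=prompt,
        image=intermediate,
        mask_image=mask_image,
    ).images[0]
```

Keeping the intermediate result in latent space avoids a decode and re-encode round trip, but only when the VAEs match; with different VAEs, pass `shared_vae=False` so the second pipeline starts from a decoded image.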

Source: https://blog.csdn.net/qq_45692660/article/details/135096709

