Kubernetes Service Explained

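Preface: if anything here disagrees with what you observe, trust your own results.

I graduated in 2024, so please go easy on me. Some material and images come from the internet.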


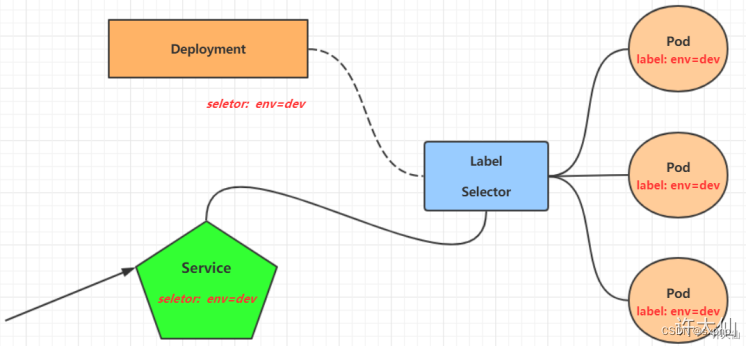

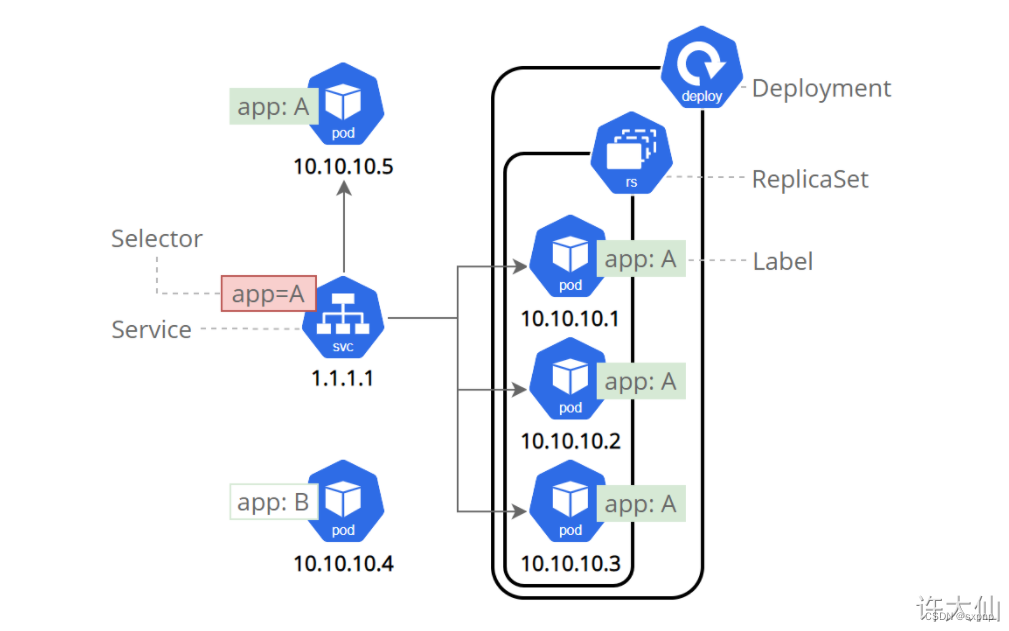

Service introduction and architecture

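- In Kubernetes, a Pod is the carrier of an application, and we can reach the application through the Pod's IP. However, Pod IPs are not fixed, so accessing a service directly by Pod IP is impractical.

- To solve this, Kubernetes provides the Service resource. A Service aggregates the Pods that provide the same service and gives them a single, stable entry address; accessing that address reaches the Pods behind it.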

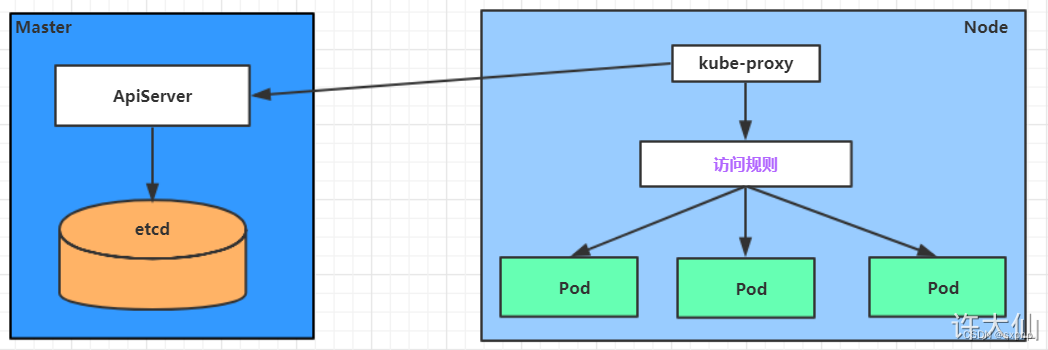

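- The architecture is shown in the figure above.

Note: a Service is bound to its backend Pods through a label selector.

- In many cases a Service is only a concept; the component that actually does the work is kube-proxy, a process that runs on every Node. When a Service is created, its information is written to etcd through the API Server. kube-proxy watches for such Service changes and converts the latest Service information into the corresponding access rules on its node.

- The access rules (that is, how traffic is load-balanced) are implemented here with IPVS, which is also the core of LVS.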
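To make the watch-and-translate loop concrete, here is a minimal Python sketch under a simplified in-memory model. `VirtualServer` and `sync_rules` are hypothetical names, not kube-proxy code: the function turns each Service's VIP and Endpoints into an IPVS-style virtual server that picks real servers round-robin (the `rr` scheduler in the output below).

```python
from dataclasses import dataclass, field
from itertools import cycle
from typing import Iterator


@dataclass
class VirtualServer:
    """Hypothetical model of one IPVS virtual server: VIP:port -> real servers."""
    vip: str
    port: int
    backends: list[str]
    # Round-robin cursor over the backends, mirroring the "rr" scheduler.
    _cursor: Iterator[str] = field(init=False, repr=False, compare=False)

    def __post_init__(self) -> None:
        self._cursor = cycle(self.backends)

    def pick(self) -> str:
        """Return the next backend in round-robin order."""
        return next(self._cursor)


def sync_rules(services: dict[str, tuple[str, int]],
               endpoints: dict[str, list[str]]) -> dict[str, VirtualServer]:
    """Rebuild the rule table from the latest Service and Endpoints state.

    kube-proxy learns this state by watching the API server; in this sketch it
    is simply passed in. Services without endpoints are skipped to keep the
    example short.
    """
    table: dict[str, VirtualServer] = {}
    for name, (vip, port) in services.items():
        backends = endpoints.get(name, [])
        if backends:
            table[f"{vip}:{port}"] = VirtualServer(vip, port, list(backends))
    return table


rules = sync_rules(
    {"service-clusterip": ("10.97.97.97", 80)},
    {"service-clusterip": ["10.244.1.39:80", "10.244.1.40:80", "10.244.2.33:80"]},
)
vs = rules["10.97.97.97:80"]
print([vs.pick() for _ in range(4)])
# ['10.244.1.39:80', '10.244.1.40:80', '10.244.2.33:80', '10.244.1.39:80']
```

Rebuilding the whole table from the current state, rather than patching it incrementally, keeps the sketch simple; the real kube-proxy is more careful about applying only the differences.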

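# 10.97.97.97:80 is the entry point (VIP) provided by the Service

# Accessing this entry point shows three Pods waiting behind it

# kube-proxy distributes each request to one of these Pods using the rr (round-robin) policy

# These rules are generated on every node in the cluster, so the Service can be reached from any node.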

[root@k8s-node1 ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn

TCP  10.97.97.97:80 rr

  -> 10.244.1.39:80               Masq    1      0          0

  -> 10.244.1.40:80               Masq    1      0          0

  -> 10.244.2.33:80               Masq    1      0          0

kube-proxy (how a Service works)

kube-proxy evolved step by step: from userspace mode, to iptables mode, to ipvs mode.

ipvs

Enabling and inspecting ipvs

# Change the mode: in vim, type /mode to jump to the setting

[root@master ~]# kubectl edit cm kube-proxy -n kube-system

    kind: KubeProxyConfiguration

    metricsBindAddress: ""

    mode: "ipvs"

    nodePortAddresses: null

# View the kube-proxy Pods (one per node, three nodes here) and delete them so they are recreated with the new mode

[root@master ~]# kubectl get pod -l k8s-app=kube-proxy -n kube-system

NAME               READY   STATUS    RESTARTS      AGE

kube-proxy-b8mzd   1/1     Running   0             13d

kube-proxy-g6q8z   1/1     Running   4 (23h ago)   13d

kube-proxy-trzb9   1/1     Running   3 (23h ago)   12d

[root@master ~]# kubectl delete pod -l k8s-app=kube-proxy -n kube-system

pod "kube-proxy-b8mzd" deleted

pod "kube-proxy-g6q8z" deleted

pod "kube-proxy-trzb9" deleted

[root@master ~]# kubectl get pod -l k8s-app=kube-proxy -n kube-system

NAME               READY   STATUS    RESTARTS   AGE

kube-proxy-cppq6   1/1     Running   0          8s

kube-proxy-cw8xn   1/1     Running   0          9s

kube-proxy-nlnpl   1/1     Running   0          9s

[root@master ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn

TCP  10.96.0.1:443 rr

  -> 192.168.100.53:6443          Masq    1      1          0

TCP  10.96.0.10:53 rr

  -> 10.244.219.65:53             Masq    1      0          0

  -> 10.244.219.69:53             Masq    1      0          0

TCP  10.96.0.10:9153 rr

  -> 10.244.219.65:9153           Masq    1      0          0

  -> 10.244.219.69:9153           Masq    1      0          0

TCP  10.98.145.184:9094 rr

  -> 10.244.219.67:9094           Masq    1      0          0

TCP  10.98.165.172:443 rr

  -> 192.168.100.51:4443          Masq    1      0          0

  -> 192.168.100.52:4443          Masq    1      0          0

TCP  10.109.241.243:5473 rr

  -> 192.168.100.51:5473          Masq    1      0          0

  -> 192.168.100.52:5473          Masq    1      0          0

TCP  10.111.111.114:443 rr

  -> 10.244.219.66:5443           Masq    1      0          0

  -> 10.244.219.68:5443           Masq    1      0          0

UDP  10.96.0.10:53 rr

  -> 10.244.219.65:53             Masq    1      0          0

  -> 10.244.219.69:53             Masq    1      0          0

Load distribution policy

[root@master k8s]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn

...

TCP  10.97.97.97:80 rr # VIP:port, rr (round-robin policy)

  -> 10.244.104.10:80             Masq    1      0          1

  -> 10.244.166.129:80            Masq    1      0          1

  -> 10.244.166.191:80            Masq    1      0          2

[root@master k8s]# curl 10.97.97.97

IP: 10.244.166.191 pod-1 node1

[root@master k8s]# curl 10.97.97.97

IP: 10.244.166.129 pod-2 node1

[root@master k8s]# curl 10.97.97.97

IP: 10.244.104.10 pod-3 node2

Endpoint

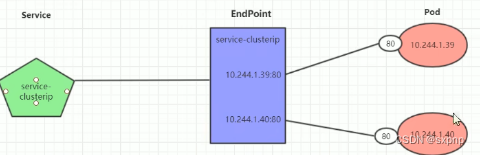

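- An Endpoint is a Kubernetes resource object stored in etcd. It records the access addresses of all Pods behind a Service and is generated from the selector in the Service's configuration.

- A Service fronts a group of Pods, and those Pods are exposed through Endpoints, the set of endpoints that actually serve requests. In other words, the link between a Service and its Pods is the Endpoints object, populated by matching Pod labels against the Service's selector.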
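The selector-to-Endpoints relationship can be sketched in a few lines of Python. This is a simplified model that assumes equality-based selectors only; `Pod`, `matches` and `build_endpoints` are hypothetical names, and the Pod labelled `not-selected` is invented for illustration. The real Endpoints controller also tracks ports, readiness and more.

```python
from dataclasses import dataclass


@dataclass
class Pod:
    name: str
    ip: str
    labels: dict[str, str]
    ready: bool = True


def matches(selector: dict[str, str], labels: dict[str, str]) -> bool:
    """Equality-based match: the Pod must carry every key=value in the selector."""
    return all(labels.get(key) == value for key, value in selector.items())


def build_endpoints(selector: dict[str, str], target_port: int,
                    pods: list[Pod]) -> list[str]:
    """Return sorted "ip:port" addresses of the ready Pods the selector picks."""
    if not selector:
        # A Service without a selector gets no automatic Endpoints.
        return []
    return sorted(f"{pod.ip}:{target_port}" for pod in pods
                  if pod.ready and matches(selector, pod.labels))


pods = [
    Pod("pod-1", "10.244.166.191", {"app": "nginx-pod", "pod-template-hash": "6bb9d9f778"}),
    Pod("pod-2", "10.244.166.129", {"app": "nginx-pod", "pod-template-hash": "6bb9d9f778"}),
    Pod("not-selected", "10.244.104.99", {"app": "redis"}),  # hypothetical Pod
]
print(build_endpoints({"app": "nginx-pod"}, 80, pods))
# ['10.244.166.129:80', '10.244.166.191:80']
```

Extra labels such as `pod-template-hash` do not prevent a match; only the keys named in the selector matter.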

[root@master k8s]# kubectl describe service -n study service-clusterip

Name:              service-clusterip

Namespace:         study

Labels:            <none>

Annotations:       <none>

Selector:          app=nginx-pod

Type:              ClusterIP

IP Family Policy:  SingleStack

IP Families:       IPv4

IP:                10.97.97.97

IPs:               10.97.97.97

Port:              <unset>  80/TCP

TargetPort:        80/TCP

Endpoints:         10.244.1.39:80,10.244.1.40:80 # the connected Pods

Session Affinity:  None

Events:            <none>

[root@master k8s]# kubectl get endpoints -n study -o wide

NAME                ENDPOINTS                       AGE

service-clusterip   10.244.1.39:80,10.244.1.40:80   5m47s

DNS names

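- When we create a Service, Kubernetes creates a corresponding DNS entry.

- The entry has the form <service-name>.<namespace-name>.svc.cluster.local. This means that if a container uses only <service-name>, it resolves to the Service in its own namespace. This is useful for reusing the same configuration across multiple namespaces (such as development, testing, and production). To access a Service in another namespace, use the fully qualified domain name (FQDN).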
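Short names work because of the Pod's DNS search list. Below is a minimal Python sketch of the expansion order, assuming the search list and `ndots:5` option that appear in this article's `/etc/resolv.conf` output further down; `candidate_names` is a hypothetical helper that models common resolver behaviour, not a Kubernetes API.

```python
def candidate_names(name: str, search: list[str], ndots: int = 5) -> list[str]:
    """Return the fully qualified names a resolver would try, in order.

    Simplified resolv.conf model: a trailing dot means "already absolute";
    names with at least `ndots` dots are tried as-is first; shorter names
    go through the search list first.
    """
    if name.endswith("."):
        return [name]
    expanded = [f"{name}.{domain}." for domain in search]
    absolute = f"{name}."
    if name.count(".") >= ndots:
        return [absolute] + expanded
    return expanded + [absolute]


# Search list of a Pod in the "study" namespace
search = ["study.svc.cluster.local", "svc.cluster.local", "cluster.local"]
print(candidate_names("service-clusterip", search)[0])
# service-clusterip.study.svc.cluster.local.
print(candidate_names("kubernetes.default", search)[1])
# kubernetes.default.svc.cluster.local.
```

The second call shows why <service-name>.<namespace-name> is enough to reach a Service in another namespace: the svc.cluster.local search entry completes it.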

Built-in Service

[root@master cks]# kubectl get svc

NAME         TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE

kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP   278d

The kubernetes Service is what Pods use to reach the kube-apiserver.

Service types (hands-on)

Command-line practice (expose)

Access from inside the cluster

# Create a Deployment

[root@master k8s]# kubectl create deployment nginx -n default --image=nginx:1.8 --replicas=2

deployment.apps/nginx created

# Expose a port; this actually creates a Service

[root@master k8s]# kubectl expose deploy nginx --name=nginx --type=ClusterIP --port=80 --target-port=80 -n default

service/nginx exposed

[root@master k8s]# kubectl get svc -n default

NAME         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)   AGE

kubernetes   ClusterIP   10.96.0.1       <none>        443/TCP   2d

nginx        ClusterIP   10.105.198.94   <none>        80/TCP    22s

[root@master k8s]# curl 10.105.198.94 # the in-cluster address

[root@master k8s]# kubectl delete svc nginx -n default

service "nginx" deleted

Access from outside the cluster

[root@master k8s]# kubectl expose deploy nginx --name=nginx --type=NodePort --port=80 --target-port=80 -n default

service/nginx exposed

[root@master k8s]# kubectl get svc -n default

NAME         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)        AGE

kubernetes   ClusterIP   10.96.0.1       <none>        443/TCP        2d

nginx        NodePort    10.105.143.21   <none>        80:31627/TCP   7s

# In 80:31627/TCP, 31627 is the node port, opened on every node (including master)

# Requests to nodeIP:31627 (for example the master IP) are forwarded to the Service at 10.105.143.21:80

[root@master k8s]# curl 192.168.100.53:31627

[root@master k8s]# kubectl delete svc nginx -n default

service "nginx" deleted

Full YAML breakdown

spec.type:

? ClusterIP:默认值,它是kubernetes系统自动分配的虚拟IP,只能在集群内部访问。

? NodePort:将Service通过指定的Node(集群节点上)上的端口暴露给外部,通过此方法,就可以在集群外部访问服务。

? LoadBalancer:使用外接负载均衡器完成到服务的负载分发,注意此模式需要外部云环境的支持。

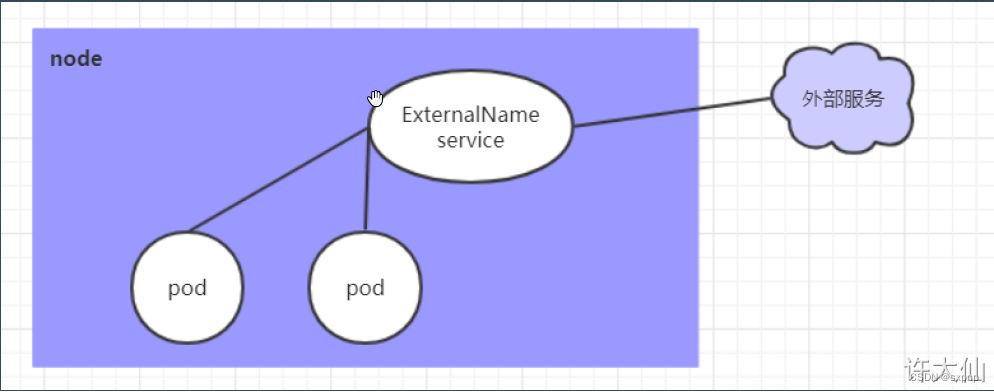

? ExternalName:把集群外部的服务引入集群内部,直接使用,可以实现pod访问外部域名地址

sessionAffinity:ClientIP 同一个ip都全部请求去同一个Pod上(会话保持模式)

NodePort???? 的缺点是会占用很多集群机器的端口,那么当集群服务变多的时候,这个缺点就愈发明显。

LoadBalancer 的缺点是每个Service都需要一个LB,浪费,麻烦,并且需要kubernetes之外的设备的支持
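The field rules above can be summarized as a small Python check. `check_service_spec` is a hypothetical helper that works on a plain dict, assuming the default NodePort range 30000-32767 mentioned later in this article (the kube-apiserver `--service-node-port-range` flag can change it). The API server performs the real validation and covers many more cases.

```python
VALID_TYPES = {"ClusterIP", "NodePort", "LoadBalancer", "ExternalName"}
VALID_AFFINITY = {"None", "ClientIP"}
NODE_PORT_RANGE = range(30000, 32768)  # default range, upper bound inclusive


def check_service_spec(spec: dict) -> list[str]:
    """Return human-readable problems found in a Service spec (simplified)."""
    errors = []
    svc_type = spec.get("type", "ClusterIP")  # ClusterIP is the default type
    if svc_type not in VALID_TYPES:
        errors.append(f"unknown type {svc_type!r}")
    if spec.get("sessionAffinity", "None") not in VALID_AFFINITY:
        errors.append("sessionAffinity must be None or ClientIP")
    if svc_type == "ExternalName" and not spec.get("externalName"):
        errors.append("ExternalName Services need spec.externalName")
    for port in spec.get("ports", []):
        node_port = port.get("nodePort")
        if node_port is None:
            continue  # nothing to check; NodePort/LoadBalancer get one allocated
        if svc_type not in ("NodePort", "LoadBalancer"):
            errors.append("nodePort is only used by NodePort/LoadBalancer")
        elif node_port not in NODE_PORT_RANGE:
            errors.append(f"nodePort {node_port} outside 30000-32767")
    return errors


print(check_service_spec({"type": "NodePort", "ports": [{"port": 80, "nodePort": 8080}]}))
# ['nodePort 8080 outside 30000-32767']
```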

apiVersion: v1 # API version

kind: Service # resource kind

metadata: # metadata

  name: # resource name

  namespace: # namespace

spec:

  selector: # label selector that decides which Pods this Service proxies

    app: nginx

  type: NodePort # Service type; determines how the Service is accessed

  clusterIP: # IP address of the virtual service

  sessionAffinity: # session affinity: ClientIP or None; defaults to None (disabled)

  ports: # port information

    - port: 8080 # port exposed by the Service

      protocol: TCP # protocol

      targetPort: # Pod port that traffic is forwarded to

      nodePort: # port opened on every host (node)

Environment setup

Create a Deployment controller; note that its labels are app=nginx-pod

---

apiVersion: v1 # Namespace is a core (v1) resource, not apps/v1

kind: Namespace

metadata:

  name: study

---

apiVersion: apps/v1

kind: Deployment

metadata:

  name: service-environment-deployment

  namespace: study

spec:

  replicas: 3

  selector:

    matchLabels:

      app: nginx-pod

  template:

    metadata:

      labels:

        app: nginx-pod

    spec:

      containers:

        - name: nginx

          image: nginx:1.17.1

          ports:

            - containerPort: 80 # port opened by the container

[root@master k8s]# kubectl apply -f controller.yaml

namespace/study created

deployment.apps/service-environment-deployment created

[root@master k8s]# kubectl get deploy -n study

NAME                             READY   UP-TO-DATE   AVAILABLE   AGE

service-environment-deployment   3/3     3            3           21s

[root@master k8s]# kubectl get pod -n study -o wide --show-labels

NAME                                              READY   STATUS    RESTARTS   AGE   IP               NODE    NOMINATED NODE   READINESS GATES   LABELS

service-environment-deployment-6bb9d9f778-5k2x6   1/1     Running   0          57s   10.244.166.191   node1   <none>           <none>            app=nginx-pod,pod-template-hash=6bb9d9f778

service-environment-deployment-6bb9d9f778-8bt7j   1/1     Running   0          57s   10.244.166.129   node1   <none>           <none>            app=nginx-pod,pod-template-hash=6bb9d9f778

service-environment-deployment-6bb9d9f778-8hcm2   1/1     Running   0          57s   10.244.104.10    node2   <none>           <none>            app=nginx-pod,pod-template-hash=6bb9d9f778

[root@master k8s]# kubectl exec -it -n study service-environment-deployment-6bb9d9f778-5k2x6 -c nginx -- /bin/sh

echo " IP: 10.244.166.191 pod-1 node1" > /usr/share/nginx/html/index.html

[root@master k8s]# kubectl exec -it -n study service-environment-deployment-6bb9d9f778-8bt7j -c nginx -- /bin/sh

echo " IP: 10.244.166.129 pod-2 node1" > /usr/share/nginx/html/index.html

[root@master k8s]# kubectl exec -it -n study service-environment-deployment-6bb9d9f778-8hcm2 -c nginx -- /bin/sh

echo " IP: 10.244.104.10 pod-3 node2" > /usr/share/nginx/html/index.html

[root@master k8s]# curl 10.244.166.191

IP: 10.244.166.191 pod-1 node1

[root@master k8s]# curl 10.244.166.129

IP: 10.244.166.129 pod-2 node1

[root@master k8s]# curl 10.244.104.10

IP: 10.244.104.10 pod-3 node2

ClusterIP type

apiVersion: v1

kind: Service

metadata:

  name: service-clusterip

  namespace: study

spec:

  selector:

    app: nginx-pod # Pod label

  clusterIP: 10.97.97.97 # IP address of the Service; if omitted, one is allocated automatically

  type: ClusterIP

  ports:

    - port: 80 # Service port

      protocol: TCP # protocol

      targetPort: 80 # Pod port

[root@master k8s]# kubectl apply -f service.yaml

service/service-clusterip created

[root@master k8s]# kubectl get service -n study

NAME                TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)   AGE

service-clusterip   ClusterIP   10.97.97.97   <none>        80/TCP    10s

[root@master k8s]# kubectl describe service -n study service-clusterip

Name:              service-clusterip

Namespace:         study

Labels:            <none>

Annotations:       <none>

Selector:          app=nginx-pod

Type:              ClusterIP

IP Family Policy:  SingleStack

IP Families:       IPv4

IP:                10.97.97.97

IPs:               10.97.97.97

Port:              <unset>  80/TCP

TargetPort:        80/TCP

Endpoints:         10.244.104.10:80,10.244.166.129:80,10.244.166.191:80 # the connected Pods

Session Affinity:  None

Events:            <none>

[root@master k8s]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn

TCP  10.97.97.97:80 rr # VIP:port, rr (round-robin policy)

  -> 10.244.104.10:80             Masq    1      0          1

  -> 10.244.166.129:80            Masq    1      0          1

  -> 10.244.166.191:80            Masq    1      0          2

# The requests below show the round-robin policy at work

[root@master k8s]# curl 10.97.97.97

IP: 10.244.166.191 pod-1 node1

[root@master k8s]# curl 10.97.97.97

IP: 10.244.166.129 pod-2 node1

[root@master k8s]# curl 10.97.97.97

IP: 10.244.104.10 pod-3 node2

[root@master k8s]# kubectl delete -f service.yaml

service "service-clusterip" deleted

Headless type

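In some scenarios, developers may not want the load balancing that a Service provides and prefer to control the load-balancing policy themselves. For this case Kubernetes provides the Headless Service. Such a Service is not allocated a Cluster IP; it can only be accessed through its domain name, which resolves directly to the Pod IPs.

It is commonly used with StatefulSets (often for deploying RabbitMQ, ZooKeeper, MySQL, or Eureka clusters).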
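The DNS difference between the two kinds of Service can be modelled with a tiny Python function. It is a simplified sketch: `a_records` is a hypothetical helper, not CoreDNS logic. A normal ClusterIP Service name resolves to its single virtual IP, while a headless Service (clusterIP: None) resolves to one A record per ready Pod, which is what the `dig` output later in this section shows.

```python
from typing import Optional


def a_records(service: str, namespace: str, cluster_ip: Optional[str],
              pod_ips: list[str]) -> dict[str, list[str]]:
    """Return the A records cluster DNS would serve for a Service (simplified)."""
    fqdn = f"{service}.{namespace}.svc.cluster.local"
    if cluster_ip in (None, "None"):
        # Headless: no virtual IP, so DNS hands out the Pod IPs and the
        # client decides which one to use.
        return {fqdn: sorted(pod_ips)}
    # Normal ClusterIP: a single record for the virtual IP; kube-proxy
    # load-balances behind it.
    return {fqdn: [cluster_ip]}


pod_ips = ["10.244.104.10", "10.244.166.191", "10.244.166.129"]
print(a_records("service-clusterip", "study", "10.97.97.97", pod_ips))
print(a_records("service-headliness", "study", "None", pod_ips))
```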

apiVersion: v1

kind: Service

metadata:

  name: service-headliness

  namespace: study

spec:

  selector:

    app: nginx-pod

  clusterIP: None # setting clusterIP to None creates a headless Service

  type: ClusterIP

  ports:

    - port: 80 # Service port

      targetPort: 80 # Pod port

[root@master k8s]# kubectl apply -f service.yaml

service/service-headliness created

[root@master k8s]# kubectl get svc -n study

NAME                 TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE

service-headliness   ClusterIP   None         <none>        80/TCP    8s

[root@master k8s]# kubectl describe svc service-headliness -n study

Name:              service-headliness

Namespace:         study

Labels:            <none>

Annotations:       <none>

Selector:          app=nginx-pod

Type:              ClusterIP

IP Family Policy:  SingleStack

IP Families:       IPv4

IP:                None

IPs:               None

Port:              <unset>  80/TCP

TargetPort:        80/TCP

Endpoints:         10.244.104.10:80,10.244.166.129:80,10.244.166.191:80

Session Affinity:  None

Events:            <none>

[root@master k8s]# kubectl get pod -n study

NAME                                              READY   STATUS    RESTARTS   AGE

service-environment-deployment-6bb9d9f778-5k2x6   1/1     Running   0          45m

service-environment-deployment-6bb9d9f778-8bt7j   1/1     Running   0          45m

service-environment-deployment-6bb9d9f778-8hcm2   1/1     Running   0          45m

[root@master k8s]# kubectl exec -it -n study service-environment-deployment-6bb9d9f778-5k2x6 -c nginx /bin/sh

kubectl exec [POD] [COMMAND] is DEPRECATED and will be removed in a future version. Use kubectl exec [POD] -- [COMMAND] instead.

# cat /etc/resolv.conf

nameserver 10.96.0.10

search study.svc.cluster.local svc.cluster.local cluster.local

options ndots:5

[root@master k8s]# dig @10.96.0.10 service-headliness.study.svc.cluster.local

; <<>> DiG 9.11.4-P2-RedHat-9.11.4-26.P2.el8 <<>> @10.96.0.10 service-clusterip.study.svc.cluster.local

; (1 server found)

;; global options: +cmd

;; Got answer:

;; WARNING: .local is reserved for Multicast DNS

;; You are currently testing what happens when an mDNS query is leaked to DNS

;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 6641

;; flags: qr aa rd; QUERY: 1, ANSWER: 3, AUTHORITY: 0, ADDITIONAL: 1

;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:

; EDNS: version: 0, flags:; udp: 4096

; COOKIE: 2ea4525b90a0ac1b (echoed)

;; QUESTION SECTION:

;service-headliness.study.svc.cluster.local. IN A

;; ANSWER SECTION:

service-headliness.study.svc.cluster.local. 30 IN A 10.244.104.10

service-headliness.study.svc.cluster.local. 30 IN A 10.244.166.191

service-headliness.study.svc.cluster.local. 30 IN A 10.244.166.129

;; Query time: 18 msec

;; SERVER: 10.96.0.10#53(10.96.0.10)

;; WHEN: Wed Feb 15 09:43:58 EST 2023

;; MSG SIZE  rcvd: 253

NodePort type

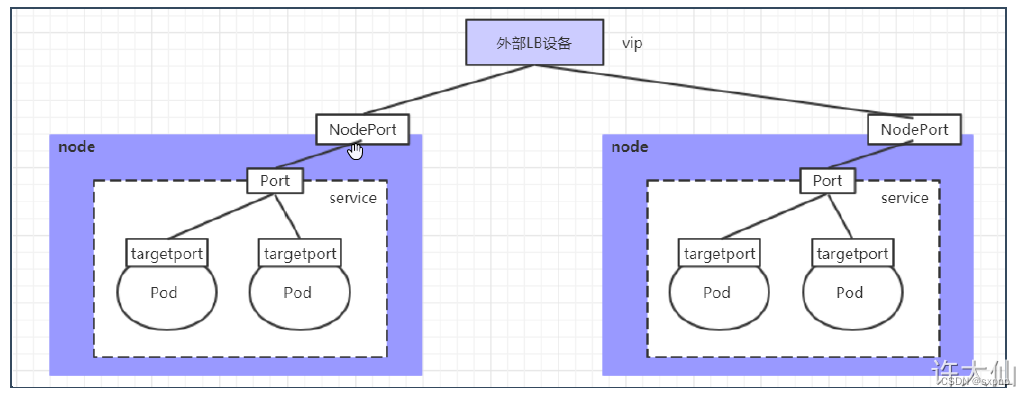

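NodePort works by mapping the Service's port to a port on every Node; the Service can then be accessed from outside the cluster via NodeIP:NodePort.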
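A minimal Python sketch of that mapping, assuming the cluster's nodes are 192.168.100.51, .52 and .53 (the addresses seen in this article's ipvsadm output) and the default NodePort range; `nodeport_rules` is a hypothetical helper. The point it illustrates is that kube-proxy on every node installs the same NodeIP:nodePort to Pod rule, which is why the output below shows 192.168.100.53:30002 on master and 192.168.100.51:30002 on node1.

```python
def nodeport_rules(node_ips: list[str], node_port: int,
                   endpoints: list[str]) -> dict[str, list[str]]:
    """Map every NodeIP:nodePort listener to the same set of Pod endpoints."""
    if not 30000 <= node_port <= 32767:  # assumes the default range
        raise ValueError("nodePort outside the default 30000-32767 range")
    # Every node gets the full backend list, so the Pod serving a request
    # does not have to run on the node that received it.
    return {f"{ip}:{node_port}": list(endpoints) for ip in node_ips}


rules = nodeport_rules(
    ["192.168.100.51", "192.168.100.52", "192.168.100.53"],
    30002,
    ["10.244.104.10:80", "10.244.166.129:80", "10.244.166.191:80"],
)
for listener, backends in rules.items():
    print(listener, "->", backends)
```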

apiVersion: v1

kind: Service

metadata:

  name: service-nodeport

  namespace: study

spec:

  selector:

    app: nginx-pod

  type: NodePort # Service type NodePort, for access from outside the cluster

  ports:

    - port: 80 # Service port

      targetPort: 80 # Pod port

      nodePort: 30002

      # node port to bind (default range 30000-32767); if omitted, one is allocated automatically

[root@master k8s]# kubectl apply -f service.yaml

service/service-nodeport created

[root@master k8s]# kubectl get svc -n study

NAME               TYPE       CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE

service-nodeport   NodePort   10.100.46.87   <none>        80:30002/TCP   48s

[root@master k8s]# ifconfig | grep inet

        inet6 fe80::ecee:eeff:feee:eeee  prefixlen 64  scopeid 0x20<link>

        inet6 fe80::ecee:eeff:feee:eeee  prefixlen 64  scopeid 0x20<link>

        inet6 fe80::ecee:eeff:feee:eeee  prefixlen 64  scopeid 0x20<link>

        inet6 fe80::ecee:eeff:feee:eeee  prefixlen 64  scopeid 0x20<link>

        inet6 fe80::ecee:eeff:feee:eeee  prefixlen 64  scopeid 0x20<link>

        inet 192.168.100.53  netmask 255.255.255.0  broadcast 192.168.100.255

        inet6 fe80::5523:b3a4:8bc9:b40f  prefixlen 64  scopeid 0x20<link>

        inet6 fe80::522c:e0a0:2c74:37c6  prefixlen 64  scopeid 0x20<link>

        inet6 fe80::dec0:9c00:3416:2561  prefixlen 64  scopeid 0x20<link>

        inet 127.0.0.1  netmask 255.0.0.0

        inet6 ::1  prefixlen 128  scopeid 0x10<host>

[root@master k8s]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn

TCP  192.168.100.53:30002 rr

  -> 10.244.104.10:80             Masq    1      0          0

  -> 10.244.166.129:80            Masq    1      0          0

  -> 10.244.166.191:80            Masq    1      0          0

# From another Linux or Windows machine on the same network, access the master IP 192.168.100.53

[root@ip-15 ~]# curl 192.168.100.53:30002

IP: 10.244.166.191 pod-1 node1

[root@ip-15 ~]# curl 192.168.100.53:30002

IP: 10.244.166.129 pod-2 node1

[root@ip-15 ~]# curl 192.168.100.53:30002

IP: 10.244.104.10 pod-3 node2

Going further: accessing any other node in the cluster, such as node1 or node2, works as well.

[root@node1 ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn

TCP  192.168.100.51:30002 rr

  -> 10.244.104.10:80             Masq    1      0          0

  -> 10.244.166.129:80            Masq    1      0          0

  -> 10.244.166.191:80            Masq    1      0          0

[root@ip-15 ~]# curl 192.168.100.51:30002

IP: 10.244.166.191 pod-1 node1

[root@ip-15 ~]# curl 192.168.100.51:30002

IP: 10.244.166.129 pod-2 node1

[root@ip-15 ~]# curl 192.168.100.51:30002

IP: 10.244.104.10 pod-3 node2

LoadBalancer type

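LoadBalancer is very similar to NodePort: both expose a port to the outside. The difference is that LoadBalancer adds a load-balancing device outside the cluster, which requires support from the external environment (typically a cloud provider). Requests that external clients send to this device are balanced by it and then forwarded into the cluster.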
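The two levels of balancing can be illustrated with a small Python simulation. It is a sketch under stated assumptions, not how any particular cloud works: `simulate` is a hypothetical helper, and both the external device and each node's kube-proxy are assumed to use plain round-robin, whereas real cloud load balancers use their own algorithms and health checks. The node addresses reuse the NodePort example above.

```python
from collections import Counter
from itertools import cycle


def simulate(node_addrs: list[str], pods: list[str], requests: int) -> Counter:
    """Count how many requests each Pod receives behind an external balancer."""
    balancer = cycle(node_addrs)  # the device outside the cluster
    # Each node's kube-proxy keeps its own, independent round-robin cursor.
    per_node = {addr: cycle(pods) for addr in node_addrs}
    hits: Counter = Counter()
    for _ in range(requests):
        node = next(balancer)             # level 1: pick a NodeIP:nodePort
        hits[next(per_node[node])] += 1   # level 2: kube-proxy picks a Pod
    return hits


nodes = ["192.168.100.51:30002", "192.168.100.52:30002", "192.168.100.53:30002"]
pods = ["10.244.104.10:80", "10.244.166.129:80", "10.244.166.191:80"]
print(simulate(nodes, pods, 9))
```

Under these assumptions nine requests land three times on each Pod.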

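ExternalName type

An ExternalName Service brings a service from outside the cluster into it. The externalName field specifies the address of the external service; accessing this Service from inside the cluster then reaches the external service, that is, the external domain name.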
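An ExternalName Service is just a DNS alias (CNAME). The Python sketch below follows such a chain using a hypothetical in-memory zone copied from the `dig` output later in this section; `RECORDS` and `resolve` are illustrative names, not a DNS library.

```python
# Hypothetical in-memory zone built from the dig output shown later.
RECORDS: dict[str, tuple[str, object]] = {
    "service-externalname.study.svc.cluster.local.": ("CNAME", "www.baidu.com."),
    "www.baidu.com.": ("CNAME", "www.a.shifen.com."),
    "www.a.shifen.com.": ("A", ("14.215.177.38", "14.215.177.39")),
}


def resolve(name: str, records: dict[str, tuple[str, object]],
            max_hops: int = 8) -> list[str]:
    """Follow CNAME records until an A record set is reached."""
    for _ in range(max_hops):
        rtype, value = records[name]
        if rtype == "CNAME":
            name = str(value)  # the ExternalName Service is only the first alias
            continue
        return list(value)
    raise RuntimeError("CNAME chain too long")


print(resolve("service-externalname.study.svc.cluster.local.", RECORDS))
# ['14.215.177.38', '14.215.177.39']
```

The cluster DNS only answers the first hop; the remaining hops are resolved by upstream DNS servers.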

apiVersion: v1

kind: Service

metadata:

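  name: service-externalname

  namespace: study

spec:

  type: ExternalName # Service type is ExternalName

  externalName: www.baidu.com # must be a DNS name; an IPv4-looking value is treated as a DNS name and not resolved (for a fixed IP, consider a headless Service)

[root@master k8s]# kubectl get svc -n study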

NAME                   TYPE           CLUSTER-IP   EXTERNAL-IP     PORT(S)   AGE

service-externalname   ExternalName   <none>       www.baidu.com   <none>    55s

[root@master k8s]# dig @10.96.0.10 service-externalname.study.svc.cluster.local

; <<>> DiG 9.11.4-P2-RedHat-9.11.4-26.P2.el8 <<>> @10.96.0.10 service-externalname.study.svc.cluster.local

; (1 server found)

;; global options: +cmd

;; Got answer:

;; WARNING: .local is reserved for Multicast DNS

;; You are currently testing what happens when an mDNS query is leaked to DNS

;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 2116

;; flags: qr aa rd; QUERY: 1, ANSWER: 4, AUTHORITY: 0, ADDITIONAL: 1

;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:

; EDNS: version: 0, flags:; udp: 4096

; COOKIE: 144210b36fc272dd (echoed)

;; QUESTION SECTION:

;service-externalname.study.svc.cluster.local. IN A

;; ANSWER SECTION:

service-externalname.study.svc.cluster.local. 30 IN CNAME www.baidu.com.

www.baidu.com. 30 IN CNAME www.a.shifen.com.

www.a.shifen.com. 30 IN A 14.215.177.38

www.a.shifen.com. 30 IN A 14.215.177.39

;; Query time: 14 msec

;; SERVER: 10.96.0.10#53(10.96.0.10)

;; WHEN: Wed Feb 15 09:57:33 EST 2023

;; MSG SIZE  rcvd: 263

Session persistence (persistent connections)

It comes down to this one parameter: sessionAffinity: ClientIP

apiVersion: v1

kind: Service

metadata:

  name: service-clusterip

  namespace: study

spec:

  sessionAffinity: ClientIP # enable session persistence; set to None to disable

  selector:

    app: nginx-pod # Pod label

  clusterIP: 10.97.97.97 # IP address of the Service; if omitted, one is allocated automatically

  type: ClusterIP

  ports:

    - port: 80 # Service port

      protocol: TCP # protocol

      targetPort: 80 # Pod port

[root@master k8s]# kubectl get svc -n study

NAME                TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)   AGE

service-clusterip   ClusterIP   10.97.97.97   <none>        80/TCP    14s

[root@master k8s]# kubectl describe svc -n study

Name:              service-clusterip

Namespace:         study

Labels:            <none>

Annotations:       <none>

Selector:          app=nginx-pod

Type:              ClusterIP

IP Family Policy:  SingleStack

IP Families:       IPv4

IP:                10.97.97.97

IPs:               10.97.97.97

Port:              <unset>  80/TCP

TargetPort:        80/TCP

Endpoints:         10.244.104.10:80,10.244.166.129:80,10.244.166.191:80

Session Affinity:  ClientIP # this setting enables session persistence

Events:            <none>

[root@master k8s]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

  -> RemoteAddress:Port           Forward Weight ActiveConn InActConn

...

TCP  10.97.97.97:80 rr persistent 10800 # "persistent" marks session persistence; 10800 is the persistence timeout in seconds

  -> 10.244.104.10:80             Masq    1      0          0

  -> 10.244.166.129:80            Masq    1      0          0

  -> 10.244.166.191:80            Masq    1      0          0
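The effect of sessionAffinity: ClientIP can be sketched in Python as a round-robin balancer with a per-client memory. `AffinityBalancer` is a hypothetical class, and measuring the timeout from the client's last request is a simplifying assumption; the 10800-second default matches the persistent timeout in the ipvsadm output above and the Kubernetes default for sessionAffinityConfig.clientIP.timeoutSeconds. The client IP in the usage line is invented.

```python
import time
from itertools import cycle
from typing import Callable


class AffinityBalancer:
    """Round-robin balancer with ClientIP stickiness (hypothetical sketch).

    A client that returns within `timeout` seconds of its last request is sent
    to the same backend; otherwise it gets the next round-robin backend.
    """

    def __init__(self, backends: list[str], timeout: float = 10800,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._cursor = cycle(backends)
        self._timeout = timeout
        self._clock = clock
        # client IP -> (chosen backend, time of last request)
        self._sticky: dict[str, tuple[str, float]] = {}

    def pick(self, client_ip: str) -> str:
        now = self._clock()
        entry = self._sticky.get(client_ip)
        if entry is not None and now - entry[1] < self._timeout:
            backend = entry[0]            # still inside the affinity window
        else:
            backend = next(self._cursor)  # new or expired client
        self._sticky[client_ip] = (backend, now)
        return backend


lb = AffinityBalancer(["10.244.104.10:80", "10.244.166.129:80", "10.244.166.191:80"])
print({lb.pick("10.0.0.5") for _ in range(5)})  # hypothetical client: one backend only
```

Injecting the clock makes the timeout behaviour easy to test without waiting three hours.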
