Word segmentation in practice with hanlp, pkuseg, jieba, and cutword

Published: January 18, 2024

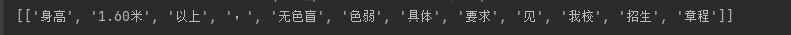

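Summary: only jieba, cutword, and Baidu LAC segmented 色盲色弱 ("color blindness, color weakness") correctly. Their dictionaries are probably the most complete of the libraries tested.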
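To make the "segmented correctly" criterion concrete, a small check like the one below could be run on each library's output. This is only a sketch: `missing_terms` is a hypothetical helper, and the two token lists are written by hand for illustration, not captured from any of the libraries.

```python
from typing import Iterable, List


def missing_terms(tokens: Iterable[str], expected: Iterable[str]) -> List[str]:
    """Return the expected terms that do not appear as standalone tokens."""
    token_set = set(tokens)
    return [term for term in expected if term not in token_set]


# Hand-written token lists for illustration only; they are NOT output
# captured from any of the libraries discussed in this post.
good = ["无", "色盲", "色弱", "具体", "要求"]
bad = ["无色", "盲", "色弱", "具体", "要求"]

print(missing_terms(good, ["色盲", "色弱"]))  # → []
print(missing_terms(bad, ["色盲", "色弱"]))   # → ['色盲']
```

An empty result means both terms came out as separate tokens, which is the behaviour the summary counts as correct.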

hanlp [actively maintained]

https://github.com/hankcs/HanLP/blob/doc-zh/plugins/hanlp_demo/hanlp_demo/zh/tok_stl.ipynb

import hanlp

# hanlp.pretrained.tok.ALL  # the language is given by the last field of each name, or by the corresponding corpus

tok = hanlp.load(hanlp.pretrained.tok.COARSE_ELECTRA_SMALL_ZH)

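# The coarse and fine models were trained on a large general-purpose corpus of 99.7 million characters covering news, social media, finance, law, and other domains; it is the largest Chinese word segmentation corpus known to date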

# tok.dict_combine = './data/dict.txt'

print(tok(['身高1.60米以上,无色盲色弱具体要求见我校招生章程']))

pkuseg [no longer maintained]

https://github.com/lancopku/pkuseg-python

Download the latest model

import pkuseg

c = pkuseg.pkuseg(model_name=r'C:\Users\ymzy\.pkuseg\default_v2')  # load the model from an explicit path; passing only the model name raises [Errno 2] No such file or directory: 'default_v2\\unigram_word.txt'

# c = pkuseg.pkuseg(user_dict=dict_path, model_name=r'C:\Users\ymzy\.pkuseg\default_v2')  # optionally add postag=True

print(c.cut('身高1.60米以上,无色盲色弱具体要求见我校招生章程'))

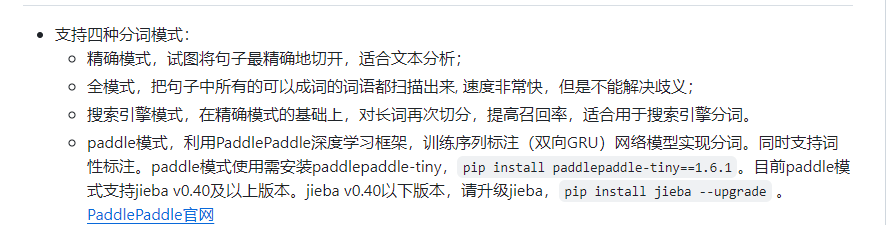
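The hard-coded `C:\Users\ymzy\...` path only works on the author's machine. A minimal sketch of building the same path portably follows. It assumes the model was downloaded into a `.pkuseg` folder under the user's home directory, as the path above suggests. `resolve_pkuseg_model` is a hypothetical helper, not part of pkuseg.

```python
from pathlib import Path
from typing import Optional


def resolve_pkuseg_model(name: str = "default_v2", base: Optional[Path] = None) -> str:
    """Return an absolute model directory suitable for pkuseg's model_name argument."""
    # Assumption: models live under ~/.pkuseg unless another base is given.
    base = base if base is not None else Path.home() / ".pkuseg"
    model_dir = (base / name).resolve()
    # unigram_word.txt is the file named in the error message quoted earlier.
    if not (model_dir / "unigram_word.txt").is_file():
        raise FileNotFoundError(f"no pkuseg model found in {model_dir}")
    return str(model_dir)


# Intended usage (requires pkuseg and a downloaded model, so left commented out):
# import pkuseg
# c = pkuseg.pkuseg(model_name=resolve_pkuseg_model())
```

Failing early with a clear message is a design choice here: it surfaces a missing download before pkuseg reports the less obvious `unigram_word.txt` error.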

jieba [no longer maintained]

https://github.com/fxsjy/jieba

How HMM-based Chinese word segmentation works

import jieba

# jieba.load_userdict(file_name)

sentence = '身高1.60米以上,无色盲色弱具体要求见我校招生章程'

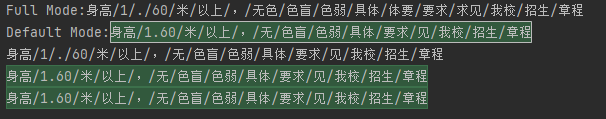

# jieba has three segmentation modes: accurate mode, full mode, and search engine mode (the last one, jieba.cut_for_search, is not shown here)

seg_list = jieba.cut(sentence, cut_all=True)  # full mode

print("Full Mode:" + "/".join(seg_list))

seg_list = jieba.cut(sentence, cut_all=False)  # accurate mode (the default)

print("Default Mode:" + "/".join(seg_list))

seg_list = jieba.cut(sentence, HMM=False)  # without the HMM model

print("/".join(seg_list))

seg_list = jieba.cut(sentence, HMM=True)  # with the HMM model

print("/".join(seg_list))

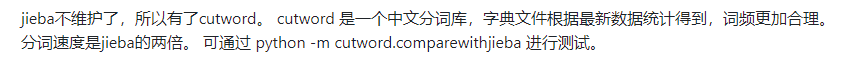

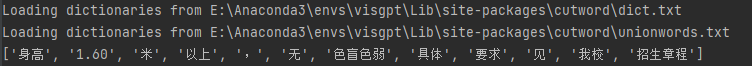
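For readers curious what the `HMM` switch refers to: HMM-based segmentation tags each character as B (begin), M (middle), E (end), or S (single-character word), then uses the Viterbi algorithm to pick the most likely tag sequence. The sketch below shows that idea in a few lines. All probabilities are toy values invented for this example, not jieba's trained parameters, and `viterbi_segment` is a hypothetical function, not jieba's implementation.

```python
import math

STATES = "BMES"  # Begin / Middle / End of a multi-character word, Single-character word
NEG_INF = float("-inf")

# Toy parameters, made up for illustration only.
START_P = {"B": 0.6, "S": 0.4}  # a sentence cannot start with M or E
TRANS_P = {
    "B": {"M": 0.2, "E": 0.8},
    "M": {"M": 0.3, "E": 0.7},
    "E": {"B": 0.6, "S": 0.4},
    "S": {"B": 0.6, "S": 0.4},
}
EMIT_P = {
    "B": {"色": 0.9},
    "M": {},
    "E": {"盲": 0.5, "弱": 0.5},
    "S": {"无": 0.9},
}


def _log(p):
    return math.log(p) if p > 0 else NEG_INF


def viterbi_segment(text, start_p, trans_p, emit_p, floor=1e-8):
    """Segment text by decoding the most likely BMES tag sequence."""
    if not text:
        return []

    def emit(state, ch):
        # Unseen characters get a small floor probability instead of zero.
        return _log(emit_p[state].get(ch, floor))

    # score[i][s]: best log-probability of any tag path ending in state s at position i
    score = [{s: _log(start_p.get(s, 0.0)) + emit(s, text[0]) for s in STATES}]
    back = [{}]
    for i in range(1, len(text)):
        score.append({})
        back.append({})
        for s in STATES:
            prev = max(STATES, key=lambda p: score[i - 1][p] + _log(trans_p[p].get(s, 0.0)))
            score[i][s] = score[i - 1][prev] + _log(trans_p[prev].get(s, 0.0)) + emit(s, text[i])
            back[i][s] = prev

    # A complete segmentation must end on E or S; trace the best path backwards.
    tags = [max("ES", key=lambda s: score[-1][s])]
    for i in range(len(text) - 1, 0, -1):
        tags.append(back[i][tags[-1]])
    tags.reverse()

    # Cut after every character tagged E or S.
    words, current = [], ""
    for ch, tag in zip(text, tags):
        current += ch
        if tag in "ES":
            words.append(current)
            current = ""
    return words


print(viterbi_segment("无色盲色弱", START_P, TRANS_P, EMIT_P))  # → ['无', '色盲', '色弱']
```

With these toy numbers the only tag sequence that avoids the floor probability is S B E B E, so the decoder returns 无 / 色盲 / 色弱. In jieba the HMM is applied to words that are not in the dictionary, which is why toggling `HMM` can change the result for out-of-vocabulary spans.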

cutword [latest release as of 2024-01]

https://github.com/liwenju0/cutword

from cutword import Cutter

cutter = Cutter(want_long_word=True)

res = cutter.cutword("身高1.60米以上,无色盲色弱具体要求见我校招生章程")

print(res)

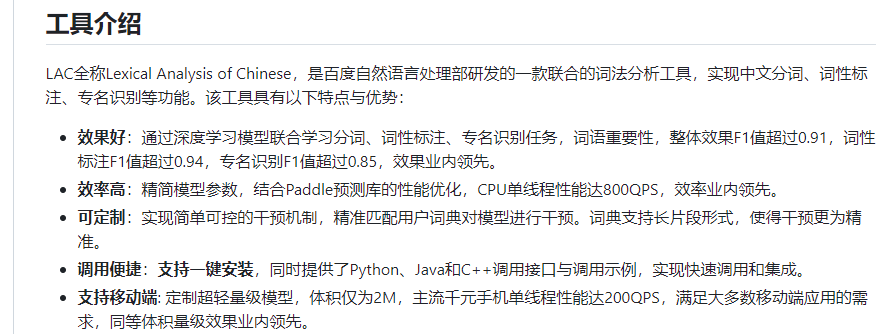

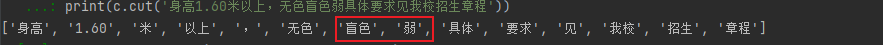

lac [no longer maintained]

from LAC import LAC

# Load the segmentation model

seg_lac = LAC(mode='seg')

seg_lac.load_customization('./dictionary/dict.txt', sep=None)

texts = ["身高1.60米以上,无色盲色弱具体要求见我校招生章程"]

seg_result = seg_lac.run(texts)

print(seg_result)
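The snippets in this post return results in different shapes: a generator from `jieba.cut`, a flat list from pkuseg and cutword, and a nested list when hanlp or LAC is given a list of sentences. To print them in one common format for side-by-side comparison, a small flattening helper like the following sketch may be enough. `flatten_tokens` is a hypothetical name, and the sample input is hand-written rather than real library output.

```python
from typing import Iterable, List


def flatten_tokens(result: Iterable) -> List[str]:
    """Flatten a generator, list, or nested list of tokens into one flat list of strings."""
    tokens: List[str] = []
    for item in result:
        if isinstance(item, str):
            tokens.append(item)
        else:
            # Nested result, e.g. one inner list per input sentence.
            tokens.extend(flatten_tokens(item))
    return tokens


# Hand-written sample shaped like a batch result (one inner list per sentence).
sample = [["身高", "1.60", "米", "以上"]]
print("/".join(flatten_tokens(sample)))  # → 身高/1.60/米/以上
```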

Source: https://blog.csdn.net/weixin_38235865/article/details/135627798

