Installing Kubernetes 1.23

Published: December 17, 2023

I. Host initialization

1. Set the hostname (run the matching command on each machine)

hostnamectl set-hostname master

hostnamectl set-hostname node1

hostnamectl set-hostname node2

hostnamectl set-hostname node3

2. Hostname resolution

echo 192.168.1.200 master >> /etc/hosts

echo 192.168.1.201 node1 >> /etc/hosts

echo 192.168.1.202 node2 >> /etc/hosts

echo 192.168.1.203 node3 >> /etc/hosts
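Running the echo lines above a second time appends duplicate entries. A minimal sketch of an idempotent variant follows. The helper name add_host_entry is hypothetical (it does not come from the original article), and its optional third argument, the file to edit, is an assumption added so the function can be tried on a copy first.

```shell
# Hypothetical helper: append "IP NAME" to a hosts file only when NAME
# is not already mapped there. The file defaults to /etc/hosts.
add_host_entry() {
    local ip="$1" name="$2" file="${3:-/etc/hosts}"
    # Look for NAME as a whole field (2nd column onward) on non-comment lines.
    if awk -v n="$name" '
        !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file"; then
        return 0    # already present, nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}
```

For example, add_host_entry 192.168.1.200 master, repeated for node1 through node3 with their addresses.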

3. Disable the firewall and SELinux

systemctl stop firewalld && systemctl disable firewalld && systemctl status firewalld

sed -i 's/enforcing/disabled/' /etc/selinux/config
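The sed expression above replaces every occurrence of the word enforcing in the file, including the explanatory comments. As a sketch based on that observation, the hypothetical function below anchors the pattern to the SELINUX= line only. The function name and the optional file argument are assumptions added so it can be tried on a copy.

```shell
# Hypothetical helper: switch SELINUX=enforcing to SELINUX=disabled,
# touching only that exact line. The file defaults to /etc/selinux/config.
disable_selinux_config() {
    local file="${1:-/etc/selinux/config}"
    sed -i 's/^SELINUX=enforcing$/SELINUX=disabled/' "$file"
}
```

The config file is only read at boot, so setenforce 0 can be run as well to put the running system into permissive mode until the next reboot.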

4. Kernel parameters

1. The net.bridge.* settings below only exist once the br_netfilter module is loaded. Load it on all nodes:

modprobe br_netfilter

2. Create /etc/sysctl.d/k8s.conf with the following content:

cat > /etc/sysctl.d/k8s.conf <<EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
vm.swappiness=0
EOF

sysctl -p /etc/sysctl.d/k8s.conf

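bridge-nf lets netfilter filter IPv4, ARP and IPv6 packets that cross a Linux bridge.

For example, with net.bridge.bridge-nf-call-iptables=1, packets forwarded by a layer-2 bridge are also filtered by the iptables FORWARD rules. Commonly used options include:

- net.bridge.bridge-nf-call-arptables: whether bridged ARP packets are filtered in the arptables FORWARD chain

- net.bridge.bridge-nf-call-ip6tables: whether bridged IPv6 packets are filtered in the ip6tables chains

- net.bridge.bridge-nf-call-iptables: whether bridged IPv4 packets are filtered in the iptables chains

- net.bridge.bridge-nf-filter-vlan-tagged: whether VLAN-tagged packets are filtered in iptables/arptables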
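To confirm that the settings described above are actually in effect, the live values can be read back from /proc/sys. The following is a minimal sketch: check_sysctl is a hypothetical helper name, and its optional third argument (an alternative root directory) is an assumption added so the function can be exercised without touching the real kernel tree.

```shell
# Hypothetical helper: compare one sysctl key with an expected value.
# The sysctl tree root defaults to /proc/sys.
check_sysctl() {
    local key="$1" expected="$2" root="${3:-/proc/sys}"
    local path actual
    # A key such as net.ipv4.ip_forward maps to net/ipv4/ip_forward.
    path="$root/$(printf '%s' "$key" | tr '.' '/')"
    if [ ! -r "$path" ]; then
        echo "$key: missing (is br_netfilter loaded?)"
        return 1
    fi
    actual=$(cat "$path")
    if [ "$actual" = "$expected" ]; then
        echo "$key = $actual (ok)"
    else
        echo "$key = $actual (expected $expected)"
        return 1
    fi
}
```

For example, check_sysctl net.bridge.bridge-nf-call-iptables 1 and check_sysctl net.ipv4.ip_forward 1 on each node.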

5. Install ipvs

yum -y install ipset ipvsadm

cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF

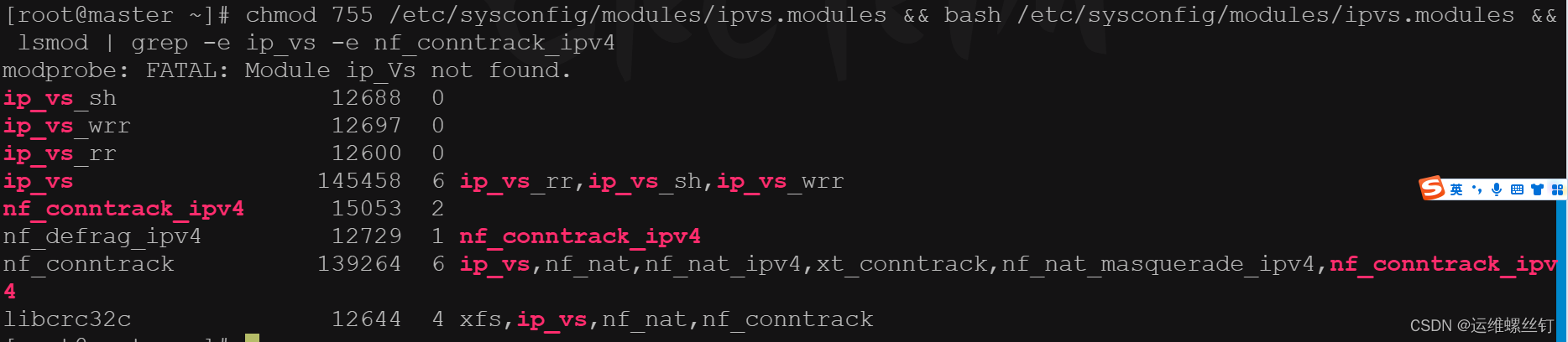

Load the modules and check the result:

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

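The script above creates /etc/sysconfig/modules/ipvs.modules so that the required modules are loaded automatically after a node reboots.

Use lsmod | grep -e ip_vs -e nf_conntrack_ipv4 to check that the required kernel modules have been loaded correctly.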
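The grep above shows whatever matches, but it does not say which module is absent. As a rough sketch, the hypothetical helper below prints every required module that is not listed. It reads a file in the /proc/modules format, where the first column is the module name (the same data lsmod displays). The function name and the file argument are assumptions.

```shell
# Hypothetical helper: print each required module that is NOT present
# in the given modules file (normally /proc/modules).
missing_modules() {
    local file="$1"; shift
    local mod
    for mod in "$@"; do
        # Exact match on the first column, so ip_vs does not match ip_vs_rr.
        awk -v m="$mod" '$1 == m { found = 1 } END { exit !found }' "$file" \
            || echo "$mod"
    done
}
```

For example, missing_modules /proc/modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4 prints nothing when everything is loaded. On kernels 4.19 and later, nf_conntrack_ipv4 was merged into nf_conntrack, so that name would be checked instead.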

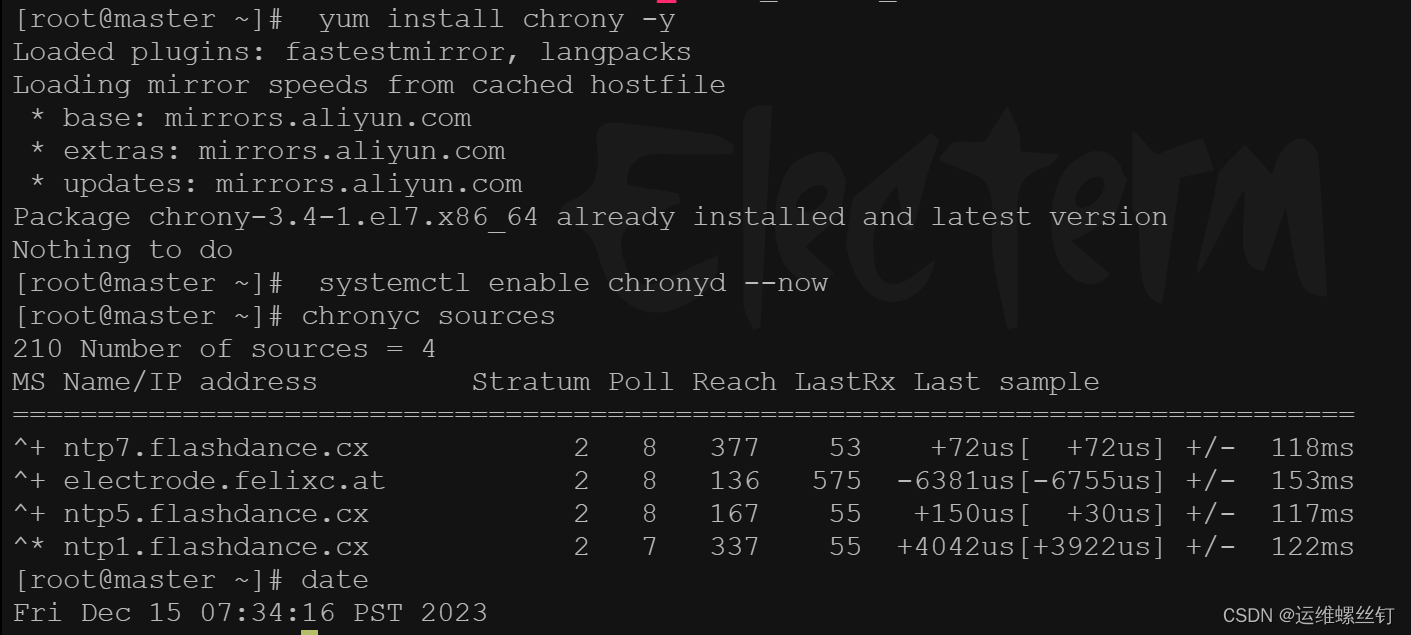

6. Configure time synchronization:

yum install chrony -y

systemctl enable chronyd --now

chronyc sources

date

II. Install the common cluster components

Docker, kubelet, kubectl and kubeadm must be installed on all nodes.

1. Configure the docker-ce yum repository (all nodes)

yum remove docker*

yum install -y yum-utils

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

2. Install Docker and configure a registry mirror (all nodes)

yum -y install docker-ce

mkdir -p /etc/docker && tee /etc/docker/daemon.json <<EOF
{
  "registry-mirrors": ["https://g2gr04ke.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF

systemctl start docker

systemctl daemon-reload

systemctl enable docker

systemctl restart docker

systemctl status docker
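The exec-opts entry in daemon.json switches Docker to the systemd cgroup driver, which has to match the driver the kubelet uses. A small sketch for confirming that the setting took effect after the restart follows. The function name is hypothetical; docker info --format with the CgroupDriver field is the only Docker call it relies on.

```shell
# Hypothetical helper: succeed only if Docker reports the systemd cgroup driver.
docker_cgroup_driver_ok() {
    [ "$(docker info --format '{{.CgroupDriver}}')" = "systemd" ]
}
```

For example: docker_cgroup_driver_ok || echo "Docker is not using the systemd cgroup driver".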

3. Install the Kubernetes cluster tools (all nodes)

1. Configure the Alibaba Cloud Kubernetes yum repository

cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

2. Install the Kubernetes tools

yum install -y kubelet-1.23.0 kubeadm-1.23.0 kubectl-1.23.0

Check the version:

kubeadm version

3. Start kubelet and enable it at boot

systemctl start kubelet && systemctl enable kubelet

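- The following packages are installed on every node:

- kubeadm: the command used to initialize the cluster

- kubelet: runs on every node in the cluster and starts Pods and containers

- kubectl: the command-line tool for communicating with the cluster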

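III. Initialize the cluster

1. Initialize with kubeadm

The cluster can be initialized either with kubeadm command-line flags or with a configuration file. With flags, on the master node: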

kubeadm init \
  --apiserver-advertise-address=192.168.1.200 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.23.0 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16

You can also pull the images before running kubeadm init, which saves some time during initialization.
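One way to pull the images in advance is kubeadm's own config images pull subcommand, given the same repository and version as the init command. The wrapper below is only a sketch, and the function name prepull_images is hypothetical.

```shell
# Hypothetical wrapper: pull the control-plane images ahead of kubeadm init,
# using the same mirror and version as the init command in this article.
prepull_images() {
    kubeadm config images pull \
        --image-repository registry.aliyuncs.com/google_containers \
        --kubernetes-version v1.23.0
}
```

Run prepull_images on the master node before kubeadm init.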

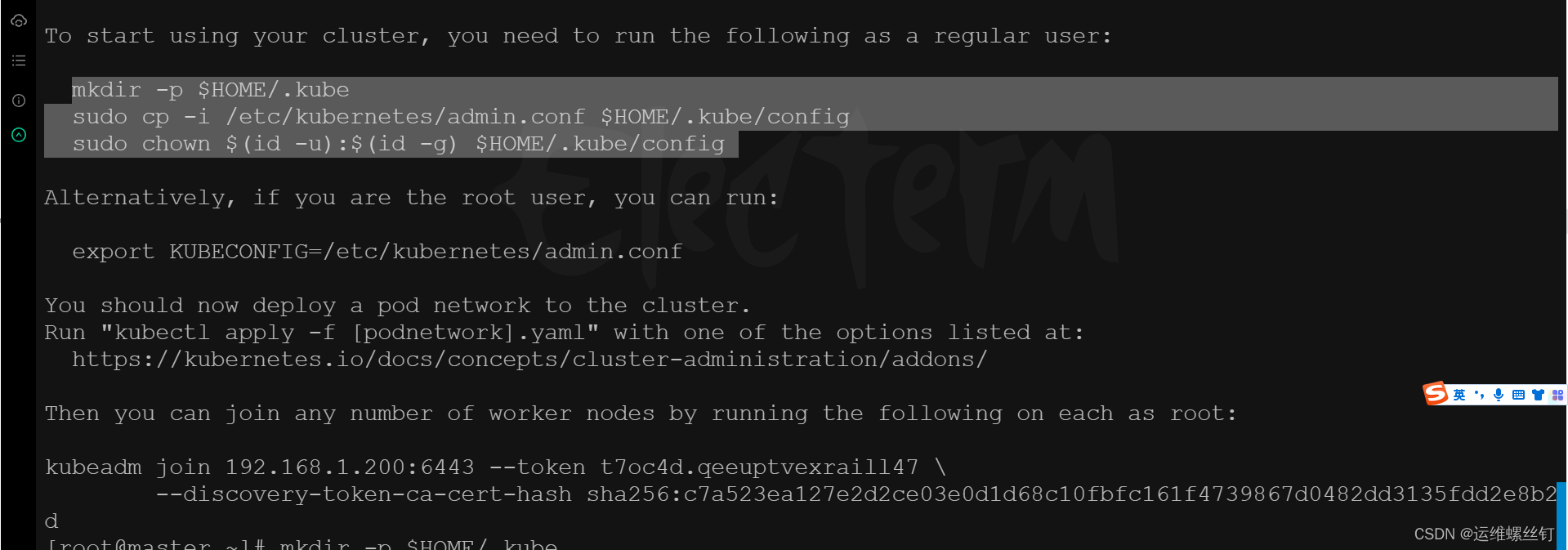

2. Run on the master node

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

export KUBECONFIG=/etc/kubernetes/admin.conf

3. Run on the worker nodes

kubeadm join 192.168.1.200:6443 --token t7oc4d.qeeuptvexraill47 \
  --discovery-token-ca-cert-hash sha256:c7a523ea127e2d2ce03e0d1d68c10fbfc161f4739867d0482dd3135fdd2e8b2d

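4. The token is valid for 24 hours. If it has expired, run the following command on the master node to print a new join command: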

kubeadm token create --print-join-command

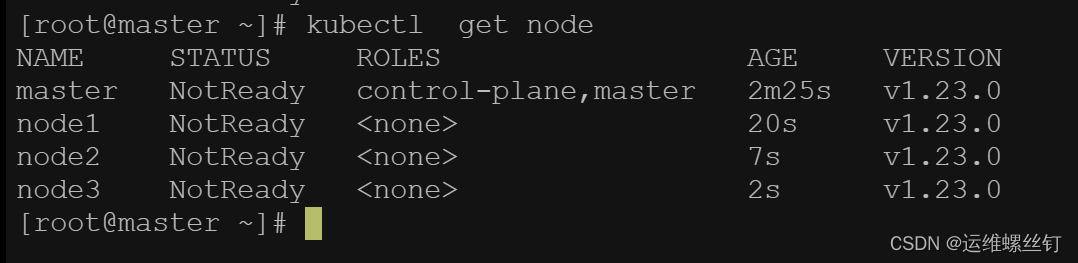

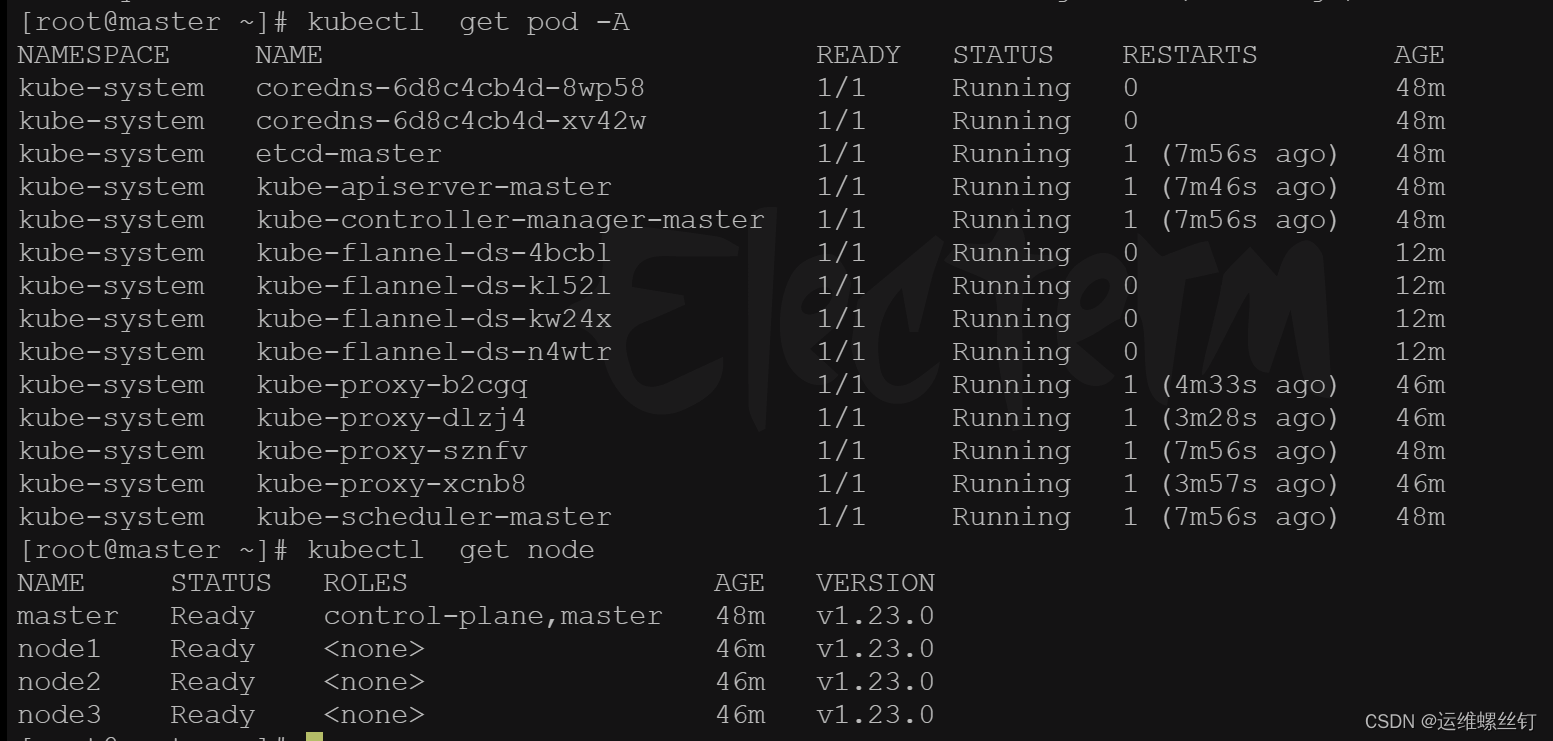

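5. Check the cluster status

After all nodes have joined, check the cluster status (for example with kubectl get nodes):

- The nodes are currently in the NotReady state; a network component still has to be installed.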
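To list only the nodes that are not yet Ready, the STATUS column of kubectl get nodes can be filtered. This is a minimal sketch, and the function name not_ready_nodes is hypothetical.

```shell
# Hypothetical helper: print the names of nodes whose STATUS is not exactly "Ready".
# Note: a cordoned node ("Ready,SchedulingDisabled") is also listed.
not_ready_nodes() {
    kubectl get nodes --no-headers | awk '$2 != "Ready" { print $1 }'
}
```

Before the network component is installed this is expected to print every node. After Flannel is running it should print nothing.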

IV. Install the Flannel network component

1. Download the network component YAML file

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

Alternatively, download the manifest attached to a tagged release:

wget https://github.com/flannel-io/flannel/releases/download/v0.22.3/kube-flannel.yml

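2. Edit the configuration file

1. Set the network interface in the flanneld container arguments:

command:
- /opt/bin/flanneld
args:
- --ip-masq
- --kube-subnet-mgr
- --iface=ens33   # name the host NIC explicitly; without --iface, flannel uses the interface of the default route, which may not be the cluster NIC
resources:
  requests:
    cpu: "100m"
    memory: "50Mi"
  limits: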

2. Set the pod network (it must match the --pod-network-cidr passed to kubeadm init):

      }
    ]
  }
net-conf.json: |
  {
    "Network": "10.244.0.0/16",
    "Backend": {
      "Type": "vxlan"
    }
  }
---
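Because the Network value in net-conf.json has to agree with the pod CIDR given to kubeadm init, it can help to read it back from the manifest before applying it. The helper below is a sketch: flannel_network is a hypothetical name, and it assumes the "Network" key appears on a single line, as in the excerpt above.

```shell
# Hypothetical helper: print the first "Network" value found in the manifest.
# The file defaults to kube-flannel.yml in the current directory.
flannel_network() {
    local file="${1:-kube-flannel.yml}"
    sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$file" | head -n 1
}
```

For example: [ "$(flannel_network)" = "10.244.0.0/16" ] || echo "pod CIDR mismatch".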

3. Set the image addresses:

image: rancher/mirrored-flannelcni-flannel-cni-plugin:v1.0.1

image: rancher/mirrored-flannelcni-flannel:v0.17.0

image: rancher/mirrored-flannelcni-flannel:v0.17.0

4. Pull the images

docker pull rancher/mirrored-flannelcni-flannel-cni-plugin:v1.0.1

docker pull rancher/mirrored-flannelcni-flannel:v0.17.0

The download usually takes a long time. If the manifest is applied directly without pulling the images first, the deployment tends to fail.

5. Apply the YAML configuration

kubectl apply -f kube-flannel.yml

6. Check the cluster status:

Verify that the k8s cluster installation is complete.

V. Cluster availability check

1. Start a test container:

kubectl run bs --image=busybox:1.28.4 -- sleep 24h

2. Enter the container

kubectl exec -it bs -- sh

3. Test the network

/ # ping www.baidu.com

PING www.baidu.com (183.2.172.185): 56 data bytes

64 bytes from 183.2.172.185: seq=0 ttl=127 time=5.915 ms

64 bytes from 183.2.172.185: seq=1 ttl=127 time=6.577 ms

64 bytes from 183.2.172.185: seq=2 ttl=127 time=6.602 ms

64 bytes from 183.2.172.185: seq=3 ttl=127 time=6.438 ms

^C

--- www.baidu.com ping statistics ---

4 packets transmitted, 4 packets received, 0% packet loss

round-trip min/avg/max = 5.915/6.383/6.602 ms

/ # nslookup kubernetes.default.svc.cluster.local

Server: 10.96.0.10

Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name: kubernetes.default.svc.cluster.local

Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local

/ # exit

[root@master ~]# kubectl get service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 110m

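Result: outbound access to the public network works and name resolution is normal, which shows that the installed CoreDNS is working. The Service name resolves, and it resolves to the correct ClusterIP (10.96.0.1). The cluster is verified.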
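The same DNS check can be repeated without opening an interactive shell, which is handy after later changes to the cluster. This is a minimal sketch: the function name is hypothetical, and the pod name defaults to bs, the test pod created above.

```shell
# Hypothetical helper: resolve the kubernetes Service from inside the test pod.
check_cluster_dns() {
    local pod="${1:-bs}"
    kubectl exec "$pod" -- nslookup kubernetes.default.svc.cluster.local
}
```

A non-zero exit status from check_cluster_dns suggests that the pod is not running or that CoreDNS cannot be reached.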

VI. Install kubectl command completion

# Install bash-completion

## bash-completion-extras requires the EPEL repository

yum install -y bash-completion bash-completion-extras

# Enable bash completion

source /usr/share/bash-completion/bash_completion

# Enable kubectl completion for the current shell only

source <(kubectl completion bash)

## Enable kubectl completion for the current user only

echo 'source <(kubectl completion bash)' >>~/.bashrc

## Enable it for all users

echo 'source <(kubectl completion bash)' >/etc/profile.d/k8s.sh && source /etc/profile

# Generate the kubectl completion script

kubectl completion bash >/etc/bash_completion.d/kubectl

Source: https://blog.csdn.net/weixin_42816196/article/details/135026838

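This article was submitted by an internet user. The views expressed are solely the author's and do not represent the position of this site. This site only provides information storage space, does not own the content, and assumes no related legal liability. If the content is infringing, illegal, or factually inaccurate, please send a complaint to the 我的编程经验分享网 mailbox at chenni525@qq.com; once verified, the content will be removed immediately.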
