Android Photo-Scanning App NDK Development: Document Edge Detection in Photos Using Deep-Learning Segmentation

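Preface

This is an Android NDK project whose goal is photo scanning. This article covers only edge detection; later articles will cover document filters, ID card recognition and 1:1 ID card printing, and document reconstruction after OCR and layout analysis. My development environment is Android Studio Arctic Fox, the test device is a Huawei Mate 30 Pro running HarmonyOS 4.0.0, and the NDK version is 21.1.6352462. Inference can run on the CPU, GPU, or NPU, and speed and accuracy can be tuned to match the device. In my tests the results are on par with the top-ranked commercial apps in the app stores. The results are shown below for two modes, an open book and a single page; the video below gives an idea of the running speed:

Edge detection results for phone photo scanning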

I. Edge Detection

1. Traditional methods

Difficulties and limitations of traditional edge detection:

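- Dependence on threshold parameters: The result of the Canny algorithm depends on a set of threshold parameters, including the high and low thresholds. These are usually empirical values found through tuning and experiments, and every extra processing step tends to introduce new thresholds, which makes tuning harder.

- Limit on the number of threshold parameters: The number of thresholds cannot grow too large, because more parameters make tuning more complex and harder to get right. Too many parameters can also reduce robustness, so the algorithm performs poorly across different scenes.

- Robustness: Although there are several threshold parameters, in the end only one or a few fixed combinations are used. This can hurt robustness in some scenes and lead to poor edge detection results.

- Complex mathematical model on the edge map: Working from a Canny edge map requires building a fairly complex mathematical model on top of the edges (for example, to recover the document outline), which makes the implementation harder. In some cases the result is still unsatisfactory.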

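Overall, Canny requires careful parameter tuning in practice, and its performance can be limited in particular scenes. In complex cases, other edge detection methods or further improvements may be needed to make the algorithm more robust.

Traditional detection and segmentation methods are cheap to compute but give relatively poor results and often fall short of our requirements. End-to-end deep-learning methods cost more to compute and usually need a GPU, but their results are much better. Most practical applications today use end-to-end deep-learning segmentation, which has driven significant progress in deploying image segmentation.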
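To make the threshold dependence concrete, here is a minimal sketch of the hysteresis step that Canny uses, reduced to one dimension. The function name `hysteresis_1d` and the sample gradient profile are made up for illustration; this is not the OpenCV implementation.

```python
def hysteresis_1d(gradient, low, high):
    """Keep strong samples (>= high) plus weak samples (>= low) that touch a kept one."""
    n = len(gradient)
    keep = [g >= high for g in gradient]
    changed = True
    # Grow the kept regions into neighbouring weak samples until nothing changes.
    while changed:
        changed = False
        for i in range(n):
            if keep[i] or gradient[i] < low:
                continue
            if (i > 0 and keep[i - 1]) or (i + 1 < n and keep[i + 1]):
                keep[i] = True
                changed = True
    return keep


# Hypothetical gradient magnitudes along one image row.
profile = [5, 12, 35, 50, 30, 8, 25, 28, 6]
edge_a = hysteresis_1d(profile, low=10, high=40)
edge_b = hysteresis_1d(profile, low=10, high=60)
print(edge_a)
print(edge_b)
```

With `high=40` the edge around the peak is kept; raising `high` to 60 removes it completely, which is the kind of sensitivity that makes a single fixed threshold pair hard to use across scenes.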

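2. Image segmentation

Image segmentation divides a digital image into multiple parts (sets of pixels, or superpixels). The goal is to simplify or transform the image into a representation that is more meaningful and easier to analyze. Segmentation is typically used to locate objects and boundaries (lines, curves) in an image. More precisely, it assigns a label to every pixel so that pixels with the same label share similar characteristics. The field includes region-based, boundary-detection-based, and clustering-based techniques.

Some classic algorithms in image segmentation: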

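- Thresholding: The main task is to determine the best threshold for the image. Pixels whose intensity exceeds the threshold are set to 1 and the rest are set to 0, which yields a binary image. Ways to choose the threshold include Otsu's method, k-means clustering, and the maximum entropy method.

- Motion and interactive segmentation: This technique segments based on motion in the image. Assuming the target is moving, it finds the differences between two frames and uses them to locate the target.

- Boundary detection: This covers a range of mathematical methods that mark points where brightness changes sharply or is discontinuous. Boundary detection is often a preliminary step for other segmentation techniques, because region boundaries and edges are strongly related.

- Region growing: This method assumes that neighboring pixels have similar values. Each pixel is compared with its neighbors, and if the similarity criterion is met the pixel joins the cluster formed by one or more of those neighbors. The choice of similarity criterion strongly affects the result, and the method is sensitive to noise.

There are also other image segmentation methods not covered above, such as dual clustering, fast marching, and the watershed transform. Each has its own strengths and suitable application scenarios.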
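As an illustration of the thresholding technique listed above, the following is a minimal pure-Python sketch of Otsu's method on a flat list of 8-bit pixel values. The function name `otsu_threshold` and the toy pixel list are assumptions for this example; in practice a library routine would normally be used.

```python
def otsu_threshold(pixels, levels=256):
    """Return the threshold that maximizes the between-class variance."""
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    total = len(pixels)
    sum_all = sum(i * h for i, h in enumerate(hist))

    best_t, best_var = 0, -1.0
    w_bg, sum_bg = 0, 0
    for t in range(levels):
        w_bg += hist[t]            # pixels with value <= t (background)
        if w_bg == 0:
            continue
        w_fg = total - w_bg        # pixels with value > t (foreground)
        if w_fg == 0:
            break
        sum_bg += t * hist[t]
        mean_bg = sum_bg / w_bg
        mean_fg = (sum_all - sum_bg) / w_fg
        var_between = w_bg * w_fg * (mean_bg - mean_fg) ** 2
        if var_between > best_var:
            best_var, best_t = var_between, t
    return best_t


# Toy image: a dark half and a bright half.
pixels = [10] * 50 + [200] * 50
t = otsu_threshold(pixels)
binary = [1 if p > t else 0 for p in pixels]
print(t, sum(binary))
```

Pixels above the returned threshold are labeled 1 and the rest 0, matching the description of the binary image in the list.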

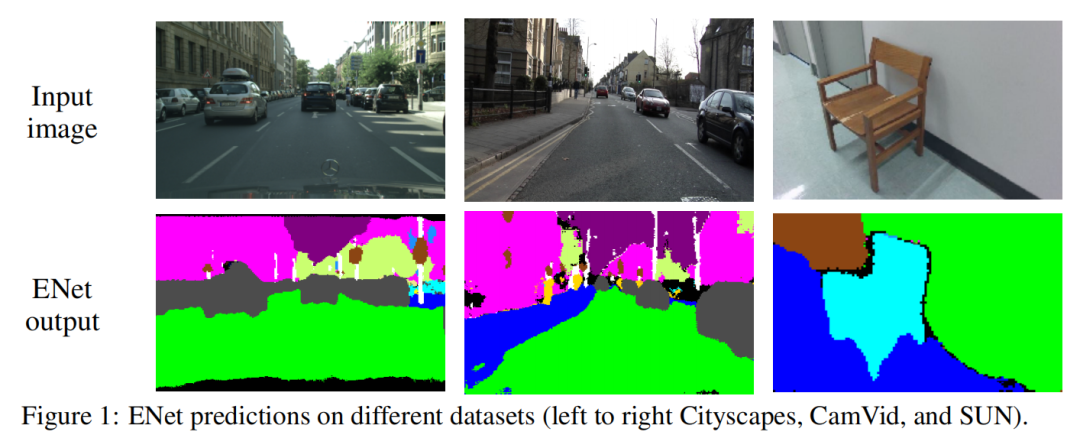

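II. ENet Semantic Segmentation

1. Algorithm overview

ENet is designed for fast semantic segmentation, balancing accuracy and real-time performance. It uses an encoder-decoder structure: downsampling in the encoder supports pixel-level classification, and upsampling in the decoder localizes the objects in the image. To stay real-time, ENet uses several strategies to reduce computation and speed up sampling.

In the downsampling layers, filtering enlarges the receptive field so that the network can gather more context about the target. For semantic segmentation, however, downsampling has two drawbacks: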

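- Loss of spatial information: Reducing the feature map resolution loses spatial information.

- Larger model and more computation: Segmenting every pixel of an image requires the output to have the same resolution as the input, so each downsampling step needs a matching upsampling step, which increases model size and computation.

For the first problem, FCN fuses the feature maps produced by different encoder layers, but this increases the number of parameters and hurts real-time performance. For the second problem, SegNet saves the indices of the elements selected by the max pooling layers and uses them in the decoder to produce sparse upsampled feature maps. Even so, downsampling still damages the precision of the target's spatial information.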
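The pooling-index idea can be sketched in a few lines of plain Python. The helper names `max_pool_2x2` and `max_unpool_2x2` are hypothetical, and the sketch assumes a single-channel feature map with even height and width.

```python
def max_pool_2x2(fmap):
    """2x2 max pooling with stride 2; also returns the flat index of each maximum."""
    h, w = len(fmap), len(fmap[0])
    pooled, indices = [], []
    for i in range(0, h, 2):
        prow, irow = [], []
        for j in range(0, w, 2):
            window = [(fmap[y][x], y * w + x) for y in (i, i + 1) for x in (j, j + 1)]
            value, index = max(window, key=lambda item: item[0])
            prow.append(value)
            irow.append(index)
        pooled.append(prow)
        indices.append(irow)
    return pooled, indices


def max_unpool_2x2(pooled, indices, height, width):
    """Place each pooled value back at its saved position; all other positions stay 0."""
    out = [[0] * width for _ in range(height)]
    for prow, irow in zip(pooled, indices):
        for value, index in zip(prow, irow):
            out[index // width][index % width] = value
    return out


fmap = [[1, 2, 0, 1],
        [3, 4, 5, 0],
        [0, 0, 7, 8],
        [9, 0, 1, 2]]
pooled, indices = max_pool_2x2(fmap)
restored = max_unpool_2x2(pooled, indices, 4, 4)
print(pooled)
print(restored)
```

The restored map is sparse: only the positions that won the pooling keep a value, which is why the decoder follows unpooling with convolutions to densify the result.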

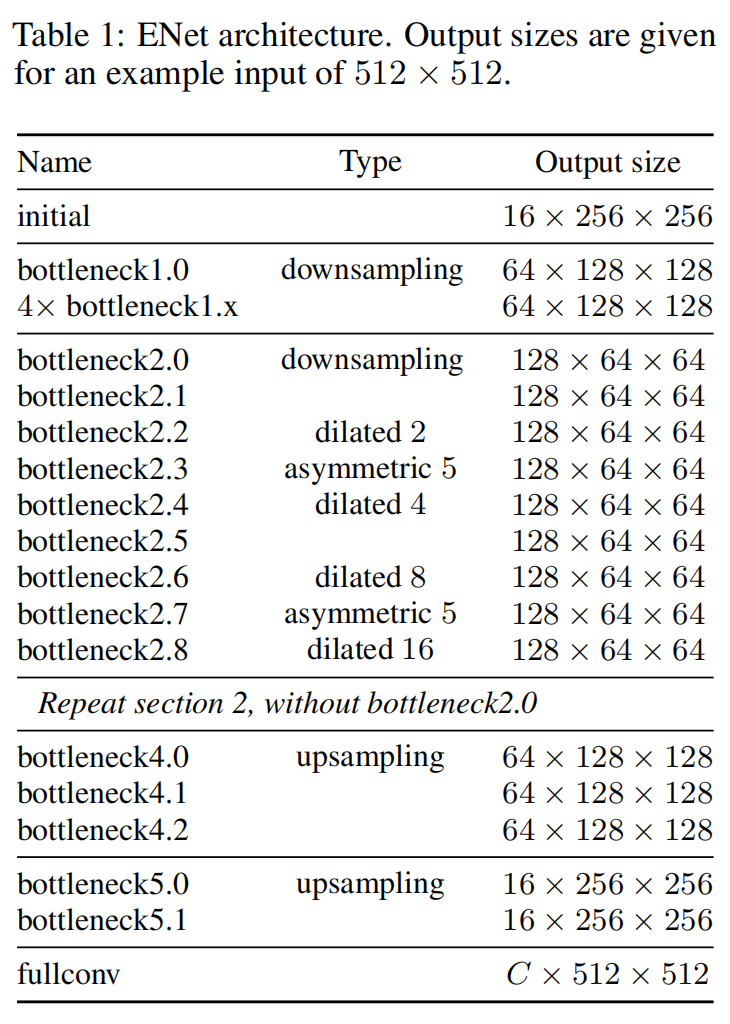

2. ENet network architecture

ENet relies on several key design strategies to achieve efficient real-time semantic segmentation:

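- Initial block: The initial block runs a pooling operation and a stride-2 convolution in parallel and concatenates the resulting feature maps. The aim is to work with small feature maps, and few of them, early in the network, which greatly reduces the number of parameters and speeds up the network.

- Dilated convolutions in the encoder: ENet uses dilated convolutions to balance resolution against receptive field. A dilated convolution enlarges the receptive field without reducing the feature map resolution, so the network can learn from a wider context.

- Asymmetric encoder-decoder: Unlike symmetric designs, ENet has a large encoder and a small decoder. This lowers the parameter count while keeping an effective representation of the input image, and gives a better balance between model complexity and real-time performance.

3. Network implementation code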
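The shape arithmetic of the initial block can be checked with a short sketch. It assumes the settings used in the network code in the next subsection (a 3x3 stride-2 convolution with padding 1 in parallel with a 3x3 stride-2 max pooling with padding 1, 16 output channels); the helper names are made up for illustration.

```python
def conv_out_size(size, kernel, stride, padding):
    """Output length of a convolution or pooling window along one axis."""
    return (size + 2 * padding - kernel) // stride + 1


def initial_block_shape(channels, height, width, out_channels=16):
    """Shape after concatenating the convolution branch and the pooling branch."""
    conv_channels = out_channels - channels   # convolution supplies the remaining channels
    pool_channels = channels                  # pooling keeps the input channels
    out_h = conv_out_size(height, kernel=3, stride=2, padding=1)
    out_w = conv_out_size(width, kernel=3, stride=2, padding=1)
    return (conv_channels + pool_channels, out_h, out_w)


shape = initial_block_shape(3, 512, 512)
# The two downsampling bottlenecks each halve the resolution again (2x2, stride 2).
after_stage1 = conv_out_size(shape[1], kernel=2, stride=2, padding=0)
after_stage2 = conv_out_size(after_stage1, kernel=2, stride=2, padding=0)
print(shape, after_stage1, after_stage2)
```

For a 512x512 RGB input, the heavy part of the encoder therefore runs at 1/8 of the input resolution, which is where most of the speed comes from.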

import torch
import torch.nn as nn


class InitialBlock(nn.Module):
    def __init__(self, in_channels, out_channels, bias=False, relu=True):
        super().__init__()

        activation = nn.ReLU if relu else nn.PReLU

        # Main branch - the number of output channels for this branch is the
        # total minus 3, since the remaining channels come from the extension
        # branch
        self.main_branch = nn.Conv2d(
            in_channels,
            out_channels - 3,
            kernel_size=3,
            stride=2,
            padding=1,
            bias=bias)

        # Extension branch
        self.ext_branch = nn.MaxPool2d(3, stride=2, padding=1)

        # Batch normalization to be used after concatenation
        self.batch_norm = nn.BatchNorm2d(out_channels)

        # Activation (ReLU or PReLU) to apply after concatenating the branches
        self.out_activation = activation()

    def forward(self, x):
        main = self.main_branch(x)
        ext = self.ext_branch(x)

        # Concatenate branches along the channel dimension
        out = torch.cat((main, ext), 1)

        # Apply batch normalization
        out = self.batch_norm(out)

        return self.out_activation(out)

class RegularBottleneck(nn.Module):
    def __init__(self,
                 channels,
                 internal_ratio=4,
                 kernel_size=3,
                 padding=0,
                 dilation=1,
                 asymmetric=False,
                 dropout_prob=0,
                 bias=False,
                 relu=True):
        super().__init__()

        # Check that the internal_ratio parameter is within the expected
        # range (1, channels]
        if internal_ratio <= 1 or internal_ratio > channels:
            raise RuntimeError("Value out of range. Expected value in the "
                               "interval (1, {0}], got internal_ratio={1}."
                               .format(channels, internal_ratio))

        internal_channels = channels // internal_ratio
        activation = nn.ReLU if relu else nn.PReLU

        # Main branch - shortcut connection

        # Extension branch - 1x1 convolution, followed by a regular, dilated or
        # asymmetric convolution, followed by another 1x1 convolution, and,
        # finally, a regularizer (spatial dropout). Number of channels is constant.

        # 1x1 projection convolution
        self.ext_conv1 = nn.Sequential(
            nn.Conv2d(channels, internal_channels, kernel_size=1, stride=1, bias=bias),
            nn.BatchNorm2d(internal_channels),
            activation())

        # If the convolution is asymmetric we split the main convolution in
        # two. E.g. for a 5x5 asymmetric convolution we have two convolutions:
        # the first is 5x1 and the second is 1x5.
        if asymmetric:
            self.ext_conv2 = nn.Sequential(
                nn.Conv2d(
                    internal_channels,
                    internal_channels,
                    kernel_size=(kernel_size, 1),
                    stride=1,
                    padding=(padding, 0),
                    dilation=dilation,
                    bias=bias),
                nn.BatchNorm2d(internal_channels),
                activation(),
                nn.Conv2d(
                    internal_channels,
                    internal_channels,
                    kernel_size=(1, kernel_size),
                    stride=1,
                    padding=(0, padding),
                    dilation=dilation,
                    bias=bias),
                nn.BatchNorm2d(internal_channels),
                activation())
        else:
            self.ext_conv2 = nn.Sequential(
                nn.Conv2d(
                    internal_channels,
                    internal_channels,
                    kernel_size=kernel_size,
                    stride=1,
                    padding=padding,
                    dilation=dilation,
                    bias=bias),
                nn.BatchNorm2d(internal_channels),
                activation())

        # 1x1 expansion convolution
        self.ext_conv3 = nn.Sequential(
            nn.Conv2d(internal_channels, channels, kernel_size=1, stride=1, bias=bias),
            nn.BatchNorm2d(channels),
            activation())

        self.ext_regul = nn.Dropout2d(p=dropout_prob)

        # Activation (ReLU or PReLU) to apply after adding the branches
        self.out_activation = activation()

    def forward(self, x):
        # Main branch shortcut
        main = x

        # Extension branch
        ext = self.ext_conv1(x)
        ext = self.ext_conv2(ext)
        ext = self.ext_conv3(ext)
        ext = self.ext_regul(ext)

        # Add main and extension branches
        out = main + ext

        return self.out_activation(out)

class DownsamplingBottleneck(nn.Module):
    def __init__(self,
                 in_channels,
                 out_channels,
                 internal_ratio=4,
                 return_indices=False,
                 dropout_prob=0,
                 bias=False,
                 relu=True):
        super().__init__()

        # Store parameters that are needed later
        self.return_indices = return_indices

        # Check that the internal_ratio parameter is within the expected
        # range (1, in_channels]
        if internal_ratio <= 1 or internal_ratio > in_channels:
            raise RuntimeError("Value out of range. Expected value in the "
                               "interval (1, {0}], got internal_ratio={1}."
                               .format(in_channels, internal_ratio))

        internal_channels = in_channels // internal_ratio
        activation = nn.ReLU if relu else nn.PReLU

        # Main branch - max pooling followed by feature map (channels) padding
        self.main_max1 = nn.MaxPool2d(2, stride=2, return_indices=return_indices)

        # Extension branch - 2x2 convolution, followed by a regular 3x3
        # convolution, followed by a 1x1 convolution that expands to
        # out_channels.

        # 2x2 projection convolution with stride 2
        self.ext_conv1 = nn.Sequential(
            nn.Conv2d(in_channels, internal_channels, kernel_size=2, stride=2, bias=bias),
            nn.BatchNorm2d(internal_channels),
            activation())

        # Convolution
        self.ext_conv2 = nn.Sequential(
            nn.Conv2d(
                internal_channels,
                internal_channels,
                kernel_size=3,
                stride=1,
                padding=1,
                bias=bias),
            nn.BatchNorm2d(internal_channels),
            activation())

        # 1x1 expansion convolution
        self.ext_conv3 = nn.Sequential(
            nn.Conv2d(internal_channels, out_channels, kernel_size=1, stride=1, bias=bias),
            nn.BatchNorm2d(out_channels),
            activation())

        self.ext_regul = nn.Dropout2d(p=dropout_prob)

        # Activation (ReLU or PReLU) to apply after adding the branches
        self.out_activation = activation()

    def forward(self, x):
        # Main branch shortcut. max_indices stays None when the pooling layer
        # was built with return_indices=False, so the return below is always valid.
        max_indices = None
        if self.return_indices:
            main, max_indices = self.main_max1(x)
        else:
            main = self.main_max1(x)

        # Extension branch
        ext = self.ext_conv1(x)
        ext = self.ext_conv2(ext)
        ext = self.ext_conv3(ext)
        ext = self.ext_regul(ext)

        # Main branch channel padding, created on the same device and with the
        # same dtype as the main branch
        n, ch_ext, h, w = ext.size()
        ch_main = main.size()[1]
        padding = torch.zeros(
            n, ch_ext - ch_main, h, w, device=main.device, dtype=main.dtype)

        # Concatenate
        main = torch.cat((main, padding), 1)

        # Add main and extension branches
        out = main + ext

        return self.out_activation(out), max_indices

class UpsamplingBottleneck(nn.Module):
    def __init__(self,
                 in_channels,
                 out_channels,
                 internal_ratio=4,
                 dropout_prob=0,
                 bias=False,
                 relu=True):
        super().__init__()

        # Check that the internal_ratio parameter is within the expected
        # range (1, in_channels]
        if internal_ratio <= 1 or internal_ratio > in_channels:
            raise RuntimeError("Value out of range. Expected value in the "
                               "interval (1, {0}], got internal_ratio={1}."
                               .format(in_channels, internal_ratio))

        internal_channels = in_channels // internal_ratio
        activation = nn.ReLU if relu else nn.PReLU

        # Main branch - 1x1 convolution followed by max unpooling
        self.main_conv1 = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=1, bias=bias),
            nn.BatchNorm2d(out_channels))

        # Remember that the stride is the same as the kernel_size, just like
        # the max pooling layers
        self.main_unpool1 = nn.MaxUnpool2d(kernel_size=2)

        # Extension branch - 1x1 convolution, followed by a 2x2 transposed
        # convolution with stride 2, followed by another 1x1 convolution that
        # maps to out_channels.

        # 1x1 projection convolution with stride 1
        self.ext_conv1 = nn.Sequential(
            nn.Conv2d(in_channels, internal_channels, kernel_size=1, bias=bias),
            nn.BatchNorm2d(internal_channels),
            activation())

        # Transposed convolution
        self.ext_tconv1 = nn.ConvTranspose2d(
            internal_channels,
            internal_channels,
            kernel_size=2,
            stride=2,
            bias=bias)
        self.ext_tconv1_bnorm = nn.BatchNorm2d(internal_channels)
        self.ext_tconv1_activation = activation()

        # 1x1 expansion convolution
        self.ext_conv2 = nn.Sequential(
            nn.Conv2d(internal_channels, out_channels, kernel_size=1, bias=bias),
            nn.BatchNorm2d(out_channels))

        self.ext_regul = nn.Dropout2d(p=dropout_prob)

        # Activation (ReLU or PReLU) to apply after adding the branches
        self.out_activation = activation()

    def forward(self, x, max_indices, output_size):
        # Main branch shortcut
        main = self.main_conv1(x)
        main = self.main_unpool1(main, max_indices, output_size=output_size)

        # Extension branch
        ext = self.ext_conv1(x)
        ext = self.ext_tconv1(ext, output_size=output_size)
        ext = self.ext_tconv1_bnorm(ext)
        ext = self.ext_tconv1_activation(ext)
        ext = self.ext_conv2(ext)
        ext = self.ext_regul(ext)

        # Add main and extension branches
        out = main + ext

        return self.out_activation(out)

class ENet(nn.Module):
    def __init__(self, num_classes, encoder_relu=False, decoder_relu=True):
        super().__init__()

        self.initial_block = InitialBlock(3, 16, relu=encoder_relu)

        # Stage 1 - Encoder
        self.downsample1_0 = DownsamplingBottleneck(
            16, 64, return_indices=True, dropout_prob=0.01, relu=encoder_relu)
        self.regular1_1 = RegularBottleneck(
            64, padding=1, dropout_prob=0.01, relu=encoder_relu)
        self.regular1_2 = RegularBottleneck(
            64, padding=1, dropout_prob=0.01, relu=encoder_relu)
        self.regular1_3 = RegularBottleneck(
            64, padding=1, dropout_prob=0.01, relu=encoder_relu)
        self.regular1_4 = RegularBottleneck(
            64, padding=1, dropout_prob=0.01, relu=encoder_relu)

        # Stage 2 - Encoder
        self.downsample2_0 = DownsamplingBottleneck(
            64, 128, return_indices=True, dropout_prob=0.1, relu=encoder_relu)
        self.regular2_1 = RegularBottleneck(
            128, padding=1, dropout_prob=0.1, relu=encoder_relu)
        self.dilated2_2 = RegularBottleneck(
            128, dilation=2, padding=2, dropout_prob=0.1, relu=encoder_relu)
        self.asymmetric2_3 = RegularBottleneck(
            128, kernel_size=5, padding=2, asymmetric=True,
            dropout_prob=0.1, relu=encoder_relu)
        self.dilated2_4 = RegularBottleneck(
            128, dilation=4, padding=4, dropout_prob=0.1, relu=encoder_relu)
        self.regular2_5 = RegularBottleneck(
            128, padding=1, dropout_prob=0.1, relu=encoder_relu)
        self.dilated2_6 = RegularBottleneck(
            128, dilation=8, padding=8, dropout_prob=0.1, relu=encoder_relu)
        self.asymmetric2_7 = RegularBottleneck(
            128, kernel_size=5, asymmetric=True, padding=2,
            dropout_prob=0.1, relu=encoder_relu)
        self.dilated2_8 = RegularBottleneck(
            128, dilation=16, padding=16, dropout_prob=0.1, relu=encoder_relu)

        # Stage 3 - Encoder
        self.regular3_0 = RegularBottleneck(
            128, padding=1, dropout_prob=0.1, relu=encoder_relu)
        self.dilated3_1 = RegularBottleneck(
            128, dilation=2, padding=2, dropout_prob=0.1, relu=encoder_relu)
        self.asymmetric3_2 = RegularBottleneck(
            128, kernel_size=5, padding=2, asymmetric=True,
            dropout_prob=0.1, relu=encoder_relu)
        self.dilated3_3 = RegularBottleneck(
            128, dilation=4, padding=4, dropout_prob=0.1, relu=encoder_relu)
        self.regular3_4 = RegularBottleneck(
            128, padding=1, dropout_prob=0.1, relu=encoder_relu)
        self.dilated3_5 = RegularBottleneck(
            128, dilation=8, padding=8, dropout_prob=0.1, relu=encoder_relu)
        self.asymmetric3_6 = RegularBottleneck(
            128, kernel_size=5, asymmetric=True, padding=2,
            dropout_prob=0.1, relu=encoder_relu)
        self.dilated3_7 = RegularBottleneck(
            128, dilation=16, padding=16, dropout_prob=0.1, relu=encoder_relu)

        # Stage 4 - Decoder
        self.upsample4_0 = UpsamplingBottleneck(
            128, 64, dropout_prob=0.1, relu=decoder_relu)
        self.regular4_1 = RegularBottleneck(
            64, padding=1, dropout_prob=0.1, relu=decoder_relu)
        self.regular4_2 = RegularBottleneck(
            64, padding=1, dropout_prob=0.1, relu=decoder_relu)

        # Stage 5 - Decoder
        self.upsample5_0 = UpsamplingBottleneck(
            64, 16, dropout_prob=0.1, relu=decoder_relu)
        self.regular5_1 = RegularBottleneck(
            16, padding=1, dropout_prob=0.1, relu=decoder_relu)
        self.transposed_conv = nn.ConvTranspose2d(
            16,
            num_classes,
            kernel_size=3,
            stride=2,
            padding=1,
            bias=False)

    def forward(self, x):
        # Initial block
        input_size = x.size()
        x = self.initial_block(x)

        # Stage 1 - Encoder
        stage1_input_size = x.size()
        x, max_indices1_0 = self.downsample1_0(x)
        x = self.regular1_1(x)
        x = self.regular1_2(x)
        x = self.regular1_3(x)
        x = self.regular1_4(x)

        # Stage 2 - Encoder
        stage2_input_size = x.size()
        x, max_indices2_0 = self.downsample2_0(x)
        x = self.regular2_1(x)
        x = self.dilated2_2(x)
        x = self.asymmetric2_3(x)
        x = self.dilated2_4(x)
        x = self.regular2_5(x)
        x = self.dilated2_6(x)
        x = self.asymmetric2_7(x)
        x = self.dilated2_8(x)

        # Stage 3 - Encoder
        x = self.regular3_0(x)
        x = self.dilated3_1(x)
        x = self.asymmetric3_2(x)
        x = self.dilated3_3(x)
        x = self.regular3_4(x)
        x = self.dilated3_5(x)
        x = self.asymmetric3_6(x)
        x = self.dilated3_7(x)

        # Stage 4 - Decoder
        x = self.upsample4_0(x, max_indices2_0, output_size=stage2_input_size)
        x = self.regular4_1(x)
        x = self.regular4_2(x)

        # Stage 5 - Decoder
        x = self.upsample5_0(x, max_indices1_0, output_size=stage1_input_size)
        x = self.regular5_1(x)
        x = self.transposed_conv(x, output_size=input_size)

        return x

if __name__ == '__main__':
    x = torch.randn(1, 3, 256, 256)
    out = ENet(13)(x)
    print(out.shape)  # torch.Size([1, 13, 256, 256])

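4. Asymmetric convolution

Standard convolution weights contain a fair amount of redundancy. Decomposing a convolution with an n x n filter into two consecutive convolutions, one with an n x 1 filter and one with a 1 x n filter, reduces this redundancy. This factorized form is also called asymmetric convolution. ENet uses asymmetric convolutions with n = 5; the two steps together cost about as much as a single 3 x 3 convolution. This increases the variety of functions the model can learn and enlarges the receptive field.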
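The cost claim can be verified by counting weights. The sketch below assumes 32 channels in and out, which is what the bottlenecks in the network code above use internally for 128-channel stages (128 // 4); the helper name `conv_weights` is made up for this example, and biases are ignored.

```python
def conv_weights(kernel_h, kernel_w, in_channels, out_channels):
    """Number of weights in a convolution layer without bias."""
    return kernel_h * kernel_w * in_channels * out_channels


c = 32  # internal channels of a 128-channel bottleneck with internal_ratio=4
full_5x5 = conv_weights(5, 5, c, c)
asym_5 = conv_weights(5, 1, c, c) + conv_weights(1, 5, c, c)
plain_3x3 = conv_weights(3, 3, c, c)
print(full_5x5, asym_5, plain_3x3)
```

The 5x1 plus 1x5 pair needs 10 weights per channel pair against 9 for a 3x3 kernel and 25 for a full 5x5 kernel, so it covers a 5x5 window at close to the cost of a 3x3 convolution.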

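5. Dilated convolution

To avoid downsampling the feature maps too far, ENet replaces the main convolution in several encoder bottlenecks at the lowest-resolution stages with dilated convolutions. This noticeably improves accuracy.

6. Regularization

Most pixel-level segmentation datasets are fairly small, so complex neural networks overfit easily and generalize poorly. Regularization acts like a prior distribution on the parameters: it limits how much information the model is allowed to store and constrains it, which lowers model complexity and helps reduce overfitting.

7. Model inference

The ENet model trained on the CamVid dataset is 4.27M in size, which is very lightweight. This makes ENet inference efficient on embedded systems and mobile devices.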
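A small sketch shows how the dilation rates 2, 4, 8, and 16 used in the network code above enlarge the receptive field while the padding keeps the resolution unchanged. The helper names are hypothetical, and the calculation covers only a stack of stride-1 3x3 convolutions, not the full ENet stage.

```python
def effective_kernel(kernel, dilation):
    """Span covered by a dilated kernel along one axis."""
    return kernel + (kernel - 1) * (dilation - 1)


def same_padding(kernel, dilation):
    """Padding that keeps the output size equal to the input size (stride 1, odd kernel)."""
    return dilation * (kernel - 1) // 2


def stacked_receptive_field(kernel, dilations):
    """Receptive field of consecutive stride-1 convolutions."""
    rf = 1
    for d in dilations:
        rf += effective_kernel(kernel, d) - 1
    return rf


rates = [2, 4, 8, 16]
spans = [effective_kernel(3, d) for d in rates]
pads = [same_padding(3, d) for d in rates]
rf_dilated = stacked_receptive_field(3, rates)
rf_plain = stacked_receptive_field(3, [1, 1, 1, 1])
print(spans, pads, rf_dilated, rf_plain)
```

The computed padding equals the dilation rate, which matches the `dilation=2, padding=2` through `dilation=16, padding=16` pairs in the code, and four dilated layers see a 61-pixel window compared with 9 pixels for four plain 3x3 layers.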

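III. NDK Development

1. NCNN

NCNN is a lightweight deep-learning inference framework designed for mobile and embedded devices. It focuses on speed and a small footprint, is implemented in C++, and supports several hardware platforms, including CPUs, GPUs, and some dedicated neural network accelerators.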

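- Lightweight design: NCNN aims to be lightweight so that it suits mobile and embedded devices. It is implemented in C++ and has a small code size and memory footprint.

- Hardware acceleration: NCNN supports several hardware platforms, including general-purpose CPUs and GPUs as well as some dedicated neural network accelerators such as Huawei's NPU (neural processing unit).

- High performance: NCNN focuses on inference performance and uses a range of optimizations, including memory reuse and multithreading, to speed up inference.

- Cross-platform: NCNN runs on several operating systems, including Linux, Windows, and Android, which gives developers a lot of flexibility.

- Broad model support: NCNN can load and run many kinds of deep-learning models, including common convolutional neural networks (CNN) and recurrent neural networks (RNN).

- Model conversion tools: NCNN provides conversion tools that import models from mainstream formats and frameworks (such as TensorFlow, Caffe, and ONNX) so that they can be run with NCNN.

2. Model conversion

An ONNX model can be converted to an NCNN model with the tools that ship with NCNN. The tool for this is called onnx2ncnn.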

onnx2ncnn input.onnx output.param output.bin

input.onnx is the input ONNX model file. output.param and output.bin are the output NCNN model files: output.param describes the network structure, and output.bin holds the model weights.

3. NDK inference code
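After conversion it helps to check which blob names the .param file exposes, because the inference code has to pass them to the extractor (the NDK code in the next subsection uses "input.1" and "887"). The sketch below parses the text .param layout (a magic number line, a line with the layer and blob counts, then one line per layer) and is only an illustration: the function name `summarize_param` and the three-layer sample text are made up, not taken from the author's model.

```python
NCNN_MAGIC = "7767517"


def summarize_param(text):
    """List the input and output blob names of an ncnn text .param file."""
    lines = [ln.split() for ln in text.splitlines() if ln.strip()]
    if not lines or lines[0] != [NCNN_MAGIC]:
        raise ValueError("not an ncnn text .param file")
    layer_count, blob_count = int(lines[1][0]), int(lines[1][1])

    inputs, produced, consumed = [], [], set()
    for fields in lines[2:]:
        # Layout: type name input_count output_count input_blobs output_blobs params
        layer_type = fields[0]
        n_in, n_out = int(fields[2]), int(fields[3])
        in_blobs = fields[4:4 + n_in]
        out_blobs = fields[4 + n_in:4 + n_in + n_out]
        consumed.update(in_blobs)
        produced.extend(out_blobs)
        if layer_type == "Input":
            inputs.extend(out_blobs)

    # Blobs that no layer consumes are the network outputs.
    outputs = [b for b in produced if b not in consumed]
    return {"layers": layer_count, "blobs": blob_count,
            "inputs": inputs, "outputs": outputs}


# Hypothetical, heavily shortened .param content for illustration only.
sample = """7767517
3 3
Input input.1 0 1 input.1
Convolution Conv_0 1 1 input.1 886 0=2 1=3
Softmax Softmax_1 1 1 886 887 0=0
"""
summary = summarize_param(sample)
print(summary)
```

For a real model, the text would come from reading the generated output.param file; the names reported as inputs and outputs are the ones to use with `ex.input(...)` and `ex.extract(...)`.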

#pragma once

#include <string>
#include <vector>
#include <android/asset_manager.h>
#include "net.h"
#include <opencv2/core/core.hpp>
#include <opencv2/highgui.hpp>
#include <opencv2/imgproc.hpp>

namespace SCAN

{

class DocumentEdge

{

public:

DocumentEdge();

~DocumentEdge();

int read_model(std::string edge_model_parma = "ED210113FP16.param",

std::string edge_model_bin = "ED210113FP16.bin",

std::string mid_model_parma = "M20210325F.param",

std::string mid_model_bin = "M20210325F.bin",

bool use_gpu = true, int _book_mid = 1);

int read_model(AAssetManager* mgr,std::string edge_model_parma = "ED210113FP16.param",

std::string edge_model_bin = "ED210113FP16.bin",

std::string mid_model_parma = "M20210325F.param",

std::string mid_model_bin = "M20210325F.bin",

bool use_gpu = true, int _book_mid = 1);

int detect(cv::Mat cv_src, std::vector<cv::Point>& points_out, int _od_label);

int revise_image(cv::Mat& cv_src, cv::Mat& cv_dst, std::vector<cv::Point>& in_points);

void draw_out_points(cv::Mat cv_src, cv::Mat& cv_dst, std::vector<cv::Point>& points_out);

public:

// static DocumentEdge *doc_edge;

bool book_mid = false;

private:

int inference(ncnn::Net& net, cv::Mat& cv_src, cv::Mat& cv_dst, int target_size);

int read_model(std::string param_path, std::string bin_path, ncnn::Net& net, bool use_gpu);

int read_model(AAssetManager* mgr,std::string param_path, std::string bin_path, ncnn::Net& net, bool use_gpu);

int targetArea(cv::Mat& cv_src, cv::Mat& cv_seg, int e, int d);

std::vector<cv::Point> getMidLine(cv::Mat cv_src, cv::Mat& cv_seg, int area);

ncnn::Net edge_net, mid_net;

int target_size = 512;

int threads = 4;

};

}

#include "DocumentEdge.h"

namespace SCAN

{

struct Line

{

cv::Point _p1;

cv::Point _p2;

cv::Point _center;

Line(cv::Point p1, cv::Point p2)

{

_p1 = p1;

_p2 = p2;

_center = cv::Point((p1.x + p2.x) / 2, (p1.y + p2.y) / 2);

}

};

DocumentEdge::DocumentEdge()

{

}

DocumentEdge::~DocumentEdge()

{

}

int DocumentEdge::read_model(std::string param_path, std::string bin_path, ncnn::Net& net, bool use_gpu)

{

bool has_GPU = false;

#if NCNN_VULKAN

ncnn::create_gpu_instance();

has_GPU = ncnn::get_gpu_count() > 0;

#endif

bool to_use_GPU = has_GPU && use_gpu;

net.opt.use_vulkan_compute = to_use_GPU;

int rb = -1;

int rm = -1;

rb = net.load_param(param_path.c_str());

rm = net.load_model(bin_path.c_str());

if (rb < 0 || rm < 0)

{

return -1;

}

if (to_use_GPU)

{

return 1;

}

return 0;

}

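int DocumentEdge::read_model(std::string edge_model_parma, std::string edge_model_bin,
    std::string mid_model_parma, std::string mid_model_bin, bool use_gpu, int book_mid)
{
    // Load mid_net only when book_mid == 1, then load edge_net.
    // A failed load is reported to the caller instead of being ignored.
    if (book_mid == 1)
    {
        if (read_model(mid_model_parma, mid_model_bin, mid_net, use_gpu) < 0)
        {
            return -1;
        }
    }
    if (read_model(edge_model_parma, edge_model_bin, edge_net, use_gpu) < 0)
    {
        return -1;
    }
    return 0;
}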

int DocumentEdge::inference(ncnn::Net& net, cv::Mat& cv_src, cv::Mat& cv_dst, int target_size)
{
    if (cv_src.empty())
    {
        return -20;
    }
    // Resize the BGR image to the network input size and scale pixels to [0, 1].
    ncnn::Mat in = ncnn::Mat::from_pixels_resize(cv_src.data, ncnn::Mat::PIXEL_BGR,
        cv_src.cols, cv_src.rows, target_size, target_size);
    const float norm_vals[3] = { 1 / 255.f, 1 / 255.f, 1 / 255.f };
    in.substract_mean_normalize(0, norm_vals);

    ncnn::Extractor ex = net.create_extractor();
    ex.set_num_threads(threads);
    ncnn::Mat out;
    ex.input("input.1", in);
    ex.extract("887", out);

    // Channel 0 holds the background score and channel 1 the foreground score.
    // A pixel becomes foreground (255) when its foreground score is higher.
    const float* bg = out.channel(0);
    const float* fg = out.channel(1);
    cv::Mat cv_seg = cv::Mat::zeros(cv::Size(out.w, out.h), CV_8UC1);
    for (int i = 0; i < out.h; ++i)
    {
        uchar* row = cv_seg.ptr<uchar>(i);
        for (int j = 0; j < out.w; ++j)
        {
            if (bg[i * out.w + j] < fg[i * out.w + j])
            {
                row[j] = 255;
            }
        }
    }
    // dsize is given explicitly, so fx and fy are 0; the interpolation flag is the sixth argument.
    cv::resize(cv_seg, cv_dst, cv::Size(cv_src.cols, cv_src.rows), 0, 0, cv::INTER_LINEAR);
    return 0;
}

int DocumentEdge::targetArea(cv::Mat &cv_src, cv::Mat& cv_seg, int e = 7, int d = 5)

{

cv::Mat cv_temp = cv_src.clone();

// Compute the segmented area (number of non-zero pixels)

float seg_total = countNonZero(cv_temp);

float pix_total = cv_src.cols * cv_src.rows;

int index = 0;

if (seg_total >= pix_total * 0.9)

{

e = 7;

d = 7;

index = 9;

}

else if ((seg_total < pix_total * 0.9) && (seg_total >= pix_total * 0.7))

{

e = 7;

d = 5;

index = 8;

}

else if ((seg_total < pix_total * 0.7) && (seg_total >= pix_total * 0.5))

{

e = 6;

d = 5;

index = 7;

}

else if ((seg_total < pix_total * 0.5) && (seg_total >= pix_total * 0.3))

{

e = 4;

d = 3;

index = 6;

}

else

{

e = 1;

d = 1;

index = 0;

}

cv::Mat cv_dilate, cv_erode;

cv::Mat element_e = getStructuringElement(cv::MORPH_RECT, cv::Size(e, e), cv::Point(-1, -1));

cv::Mat element_d = getStructuringElement(cv::MORPH_RECT, cv::Size(d, d), cv::Point(-1, -1));

cv::erode(cv_src, cv_dilate, element_e);

cv::dilate(cv_dilate, cv_seg, element_d);

cv::threshold(cv_seg, cv_seg, 100, 255, cv::THRESH_BINARY);

return index;

}

// Sort lines by the Y coordinate of their centers

static bool cmp_y(const Line& p1, const Line& p2)

{

return p1._center.y < p2._center.y;

}

// Sort lines by the X coordinate of their centers

static bool cmp_x(const Line& p1, const Line& p2)

{

return p1._center.x < p2._center.x;

}

// Sort points by Y

static bool point_y(const cv::Point& p1, const cv::Point& p2)

{

return p1.y < p2.y;

}

// Sort points by X

static bool point_x(const cv::Point& p1, const cv::Point& p2)

{

return p1.x < p2.x;

}

// Intersection of two lines; returns (-1, -1) when the lines are parallel

static cv::Point2f computeIntersect(Line& l1, Line& l2)

{

int x1 = l1._p1.x;

int x2 = l1._p2.x;

int y1 = l1._p1.y;

int y2 = l1._p2.y;

int x3 = l2._p1.x, x4 = l2._p2.x, y3 = l2._p1.y, y4 = l2._p2.y;

if (float d = (x1 - x2) * (y3 - y4) - (y1 - y2) * (x3 - x4))

{

cv::Point2f pt;

pt.x = ((x1 * y2 - y1 * x2) * (x3 - x4) - (x1 - x2) * (x3 * y4 - y3 * x4)) / d;

pt.y = ((x1 * y2 - y1 * x2) * (y3 - y4) - (y1 - y2) * (x3 * y4 - y3 * x4)) / d;

return pt;

}

return cv::Point2f(-1, -1);

}

static bool cmpPointX(const cv::Point& p1, const cv::Point& p2)

{

return p1.x < p2.x;

}

// Order four points as top-left, top-right, bottom-left, bottom-right

static void sortPoint(std::vector<cv::Point>& old_point, std::vector<cv::Point>& new_point)

{

sort(old_point.begin(), old_point.end(), cmpPointX);

new_point = std::vector<cv::Point>(4);

if (old_point.at(0).y < old_point.at(1).y)

{

new_point.at(0) = old_point.at(0);

new_point.at(2) = old_point.at(1);

}

else

{

new_point.at(2) = old_point.at(0);

new_point.at(0) = old_point.at(1);

}

if (old_point.at(2).y < old_point.at(3).y)

{

new_point.at(1) = old_point.at(2);

new_point.at(3) = old_point.at(3);

}

else

{

new_point.at(1) = old_point.at(3);

new_point.at(3) = old_point.at(2);

}

}

// Arctangent of the slope of each line

static std::vector <float> getLinesArctan(std::vector<cv::Vec4f> lines)

{

float k = 0; // slope of the line
std::vector <float> lines_arctan; // arctangent of each slope

for (unsigned int i = 0; i < lines.size(); i++)

{

k = (double)(lines[i][3] - lines[i][1]) / (double)(lines[i][2] - lines[i][0]); // slope of the line

lines_arctan.push_back(atan(k));

}

return lines_arctan;

}

// Angle of each line in degrees

static void getLinesAngle(std::vector<cv::Vec4f> lines, std::vector<double>& angle)

{

// Convert the arctangent of each slope to degrees
std::vector <float> lines_arctan; // arctangent of each slope

lines_arctan = getLinesArctan(lines);

for (unsigned int i = 0; i < lines.size(); i++)

{

angle.push_back(lines_arctan[i] * 180.0 / 3.1415926);

}

}

static double angle(cv::Point pt1, cv::Point pt2, cv::Point pt0)

{

double dx1 = pt1.x - pt0.x;

double dy1 = pt1.y - pt0.y;

double dx2 = pt2.x - pt0.x;

double dy2 = pt2.y - pt0.y;

return (dx1 * dx2 + dy1 * dy2) / sqrt((dx1 * dx1 + dy1 * dy1) * (dx2 * dx2 + dy2 * dy2) + 1e-10);

}

/// <summary>
/// Rectangle detection
/// </summary>
/// <param name="image">Input image</param>
/// <param name="out_points">Output: the four corner points of the rectangle</param>
/// <returns></returns>

static int findSquares(const cv::Mat& image, std::vector<cv::Point>& out_points)

{

std::vector<std::vector<cv::Point> > squares;

cv::Mat src, dst, gray_one, gray;

if (image.channels() == 3)

{

// Median filter to suppress noise before edge detection

medianBlur(image, dst, 7);

cv::cvtColor(dst, gray_one, cv::COLOR_BGR2GRAY);

Canny(gray_one, gray, 10, 40, 3);

}

else if (image.channels() == 1)

{

cv::Mat erodeStruct = getStructuringElement(cv::MORPH_RECT, cv::Size(5, 5));

dilate(image, gray, erodeStruct);

}

else

{

return -401;

}

std::vector<std::vector<cv::Point> > contours;

std::vector<cv::Vec4i> hierarchy;

findContours(gray, contours, hierarchy, cv::RETR_CCOMP, cv::CHAIN_APPROX_SIMPLE);

std::vector<cv::Point> approx;

if (contours.size() > 4)

{

// Examine each contour that was found

for (int i = 0; i < contours.size(); i++)

{

// Approximate the contour with a polygon

approxPolyDP(cv::Mat(contours[i]), approx, arcLength(cv::Mat(contours[i]), true) * 0.02, true);

//drawContours(image, contours, i, cv::Scalar(255,255,255), cv::FILLED, 8, hierarchy);

// Keep convex quadrilaterals with a large enough area; their 4 vertices are the rectangle corners

if (approx.size() == 4 && fabs(contourArea(cv::Mat(approx))) > 1000 && isContourConvex(cv::Mat(approx)))

{

double maxCosine = 0;

for (int j = 2; j < 5; j++)

{

// Largest cosine of the angles between adjacent edges

double cosine = fabs(angle(approx[j % 4], approx[j - 2], approx[j - 1]));

maxCosine = MAX(maxCosine, cosine);

}

if (maxCosine < 0.8)

{

squares.push_back(approx);

}

}

}

}

else

{

return -103;

}

if (squares.size() > 0)

{

for (int j = 0; j < squares.at(0).size(); j++)

{

out_points.push_back(squares.at(0)[j]);

}

return 100;

}

else

{

return -104;

}

return 101;

}

int getIntersectionPoint(std::vector<Line>& h_lines, std::vector<Line>& v_lines, std::vector<cv::Point>& points)

{

sort(h_lines.begin(), h_lines.end(), cmp_y);

sort(v_lines.begin(), v_lines.end(), cmp_x);

if (h_lines.size() < 2 || v_lines.size() < 2)

{

return -421;

}

points.push_back(computeIntersect(h_lines[0], v_lines[0]));

points.push_back(computeIntersect(h_lines[0], v_lines[v_lines.size() - 1]));

points.push_back(computeIntersect(h_lines[h_lines.size() - 1], v_lines[0]));

points.push_back(computeIntersect(h_lines[h_lines.size() - 1], v_lines[v_lines.size() - 1]));

return 0;

}

// Draw the quadrilateral defined by four corner points

void drawQuadrangleToLines(std::vector<cv::Point>& in_points, cv::Mat& cv_src)

{

cv::line(cv_src, in_points.at(0), in_points.at(1), cv::Scalar(255), 2, cv::LINE_8);

cv::line(cv_src, in_points.at(0), in_points.at(2), cv::Scalar(255), 2, cv::LINE_8);

cv::line(cv_src, in_points.at(1), in_points.at(3), cv::Scalar(255), 2, cv::LINE_8);

cv::line(cv_src, in_points.at(2), in_points.at(3), cv::Scalar(255), 2, cv::LINE_8);

}

// Euclidean distance between two points

static float getDistance(cv::Point& point_1, cv::Point& point_2)

{

float distance;

distance = powf((point_1.x - point_2.x), 2) + powf((point_1.y - point_2.y), 2);

distance = sqrtf(distance);

return distance;

}

// Scale a rectangle (divides by the scale factors)

cv::Rect rectScale(cv::Rect& rect, float x_scale, float y_scale)

{

cv::Rect cv_rect;

cv_rect.x = rect.x / x_scale;

cv_rect.y = rect.y / y_scale;

cv_rect.width = rect.width / x_scale;

cv_rect.height = rect.height / y_scale;

return cv_rect;

}

cv::Rect rectScale(cv::Rect& rect, float x_scale, float y_scale, int i)

{

cv::Rect cv_rect;

if (i >= 0)

{

cv_rect.x = rect.x * x_scale;

cv_rect.y = rect.y * y_scale;

cv_rect.width = rect.width * x_scale;

cv_rect.height = rect.height * y_scale;

}

else

{

cv_rect.x = rect.x / x_scale;

cv_rect.y = rect.y / y_scale;

cv_rect.width = rect.width / x_scale;

cv_rect.height = rect.height / y_scale;

}

return cv_rect;

}

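/// <summary>
/// Traditional image segmentation (GrabCut)
/// </summary>
/// <param name="src">Input image</param>
/// <param name="dst">Output: the segmented image</param>
/// <param name="cv_rect">Input: rectangle marking the region to segment</param>
/// <param name="it">Number of iterations</param>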

void grabCutRoi(cv::Mat& src, cv::Mat& dst, cv::Rect& cv_rect, int it)

{

cv::Mat result = cv::Mat::zeros(src.size(), CV_8UC1);

cv::Mat bgModel, fgModel;

grabCut(src, result, cv_rect, bgModel, fgModel, it, cv::GC_INIT_WITH_RECT);

compare(result, cv::GC_PR_FGD, result, cv::CMP_EQ);

dst = cv::Mat(src.size(), CV_8UC3, cv::Scalar(0, 0, 0));

src.copyTo(dst, result);

}

// Canny edge detection

void getCanny(cv::Mat& src, cv::Mat& dst)

{

cv::Mat cv_blur;

if (src.channels() != 1)

{

cvtColor(src, src, cv::COLOR_BGR2GRAY);

}

medianBlur(src, cv_blur, 11);

cv::Mat thres;

cv::Canny(cv_blur, dst, 10, 40);

}

/// <summary>
/// Draw the outer contour of a binary image
/// </summary>
/// <param name="cv_src">Input image</param>
/// <param name="cv_dst">Output image</param>
/// <param name="line">Line thickness</param>
/// <returns></returns>

int drawpoly(cv::Mat& cv_src, cv::Mat& cv_dst, int line)

{

if (cv_src.empty() || cv_src.channels() > 1)

{

return -446;

}

cv::Mat cv_dilate;

cv::Mat element_d = getStructuringElement(cv::MORPH_RECT, cv::Size(3, 3), cv::Point(-1, -1));

cv::dilate(cv_src, cv_dilate, element_d);

std::vector<std::vector<cv::Point> > contours;

std::vector<std::vector<cv::Point> > f_contours;

std::vector<cv::Point> approx2;

findContours(cv_dilate, f_contours, cv::RETR_EXTERNAL, cv::CHAIN_APPROX_NONE);

int max_area = 0;

int index = 0;

if (f_contours.size() > 0)

{

for (int i = 0; i < f_contours.size(); i++)

{

double tmparea = fabs(contourArea(f_contours[i]));

if (tmparea > max_area)

{

index = i;

max_area = tmparea;

}

}

}

else

{

return 2104;

}

contours.push_back(f_contours[index]);

std::vector<cv::Point> tmp = contours[0];

cv_dst = cv::Mat(cv_src.size(), CV_8UC1, cv::Scalar(0));

drawContours(cv_dst, contours, 0, cv::Scalar(255), line, cv::LINE_AA);

return 0;

}

int drawpoly(cv::Mat& cv_src, cv::Mat& cv_dst)

{

if (cv_src.empty() || cv_src.channels() > 1)

{

return -446;

}

cv::Mat cv_dilate;

cv::Mat element_d = getStructuringElement(cv::MORPH_RECT, cv::Size(3, 3), cv::Point(-1, -1));

cv::dilate(cv_src, cv_dilate, element_d);

std::vector<std::vector<cv::Point> > contours;

std::vector<std::vector<cv::Point> > f_contours;

std::vector<cv::Point> approx2;

// RETR_EXTERNAL retrieves only the outermost contours
findContours(cv_dilate, f_contours, cv::RETR_EXTERNAL, cv::CHAIN_APPROX_NONE);
// Find the contour with the largest area

int max_area = 0;

int index = 0;

for (int i = 0; i < f_contours.size(); i++)

{

double tmparea = fabs(contourArea(f_contours[i]));

//std::cout << tmparea << " " ;

if (tmparea > max_area)

{

//std::cout <<"max_area = " << tmparea << std::endl;

index = i;

max_area = tmparea;

}

}

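// No contour found: return the same code as the overload above instead of indexing an empty vector
if (f_contours.empty())
{
return 2104;
}
contours.push_back(f_contours[index]);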

std::vector<cv::Point> tmp = contours[0];

cv_dst = cv::Mat(cv_src.size(), CV_8UC1, cv::Scalar(0));

drawContours(cv_dst, contours, 0, cv::Scalar(255), 2, cv::LINE_AA); // mind the line thickness: do not make it too thin

return 0;

}

// Convex hull of a binary image

void binConvexHull(cv::Mat& src, cv::Mat& dst)

{

cv::Mat src_gray, bin_output;

if (src.channels() > 1)

{

cvtColor(src, src_gray, cv::COLOR_BGR2GRAY);

threshold(src_gray, bin_output, 100, 255, cv::THRESH_BINARY);

}

else

{

threshold(src, bin_output, 100, 255, cv::THRESH_BINARY);

}

std::vector<std::vector<cv::Point>> contours;

std::vector<cv::Vec4i> hierachy;

findContours(bin_output, contours, hierachy, cv::RETR_EXTERNAL, cv::CHAIN_APPROX_NONE);

// Convex hull points computed for each contour

std::vector<std::vector<cv::Point>> convexs(contours.size());

for (size_t i = 0; i < contours.size(); i++)

{

convexHull(contours[i], convexs[i], false, true);

}

dst = cv::Mat(src.size(), CV_8UC1, cv::Scalar(0));

// Draw the hulls

std::vector<cv::Vec4i> empty(0);

for (int k = 0; k < contours.size(); k++)

{

drawContours(dst, convexs, k, cv::Scalar(255), 2, cv::LINE_AA);

}

}

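/// <summary>
/// Edge smoothing for a binary image
/// </summary>
/// <param name="src">Input image</param>
/// <param name="dst">Output image</param>
/// <param name="size">Blur kernel size; its width and height act as the size thresholds for protrusions</param>
/// <param name="threshold">Intensity cutoff applied after blurring: pixels below it become 0, the rest 255</param>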

void binImageBlur(cv::Mat& src, cv::Mat& dst, cv::Size size, int threshold)

{

int height = src.rows;

int width = src.cols;

blur(src, dst, size);

for (int i = 0; i < height; i++)

{

uchar* p = dst.ptr<uchar>(i);

for (int j = 0; j < width; j++)

{

if (p[j] < threshold)

p[j] = 0;

else p[j] = 255;

}

}

}

static bool sortArea(const std::vector<cv::Point>& v1, const std::vector<cv::Point>& v2)

{

double v1Area = fabs(contourArea(cv::Mat(v1)));

double v2Area = fabs(contourArea(cv::Mat(v2)));

return v1Area > v2Area;

}

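/// <summary>

/// Find the largest blob in a binary image

/// </summary>

/// <param name="cv_src">Input image</param>

/// <param name="area">Output: area of the bounding rectangle of the largest contour</param>

/// <returns>0 on success, -2 for invalid input, -420 if no contour is found</returns>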

static int findContoursArea(cv::Mat& cv_src, int& area)

{

//auto t0 = cv::getTickCount();

if (cv_src.empty() || cv_src.channels() != 1)

{

return -2;

}

area = 0;

std::vector<std::vector<cv::Point>> contours;

std::vector<cv::Vec4i> hierarcy;

cv::Mat cv_canny_e, cv_canny_d;

cv::Mat element_d = getStructuringElement(cv::MORPH_RECT, cv::Size(7, 7));

cv::dilate(cv_src, cv_canny_d, element_d);

cv::Mat element_e = getStructuringElement(cv::MORPH_RECT, cv::Size(5, 5));

cv::erode(cv_canny_d, cv_canny_e, element_e);

cv::findContours(cv_canny_e, contours, hierarcy, cv::RETR_EXTERNAL, cv::CHAIN_APPROX_NONE);

if (contours.size() == 0)

{

return -420;

}

// Sort by area

/// Updated 2021.6.10: sort by area. No noticeable speed-up, but the code is easier to maintain

std::sort(contours.begin(), contours.end(), sortArea);

cv::Rect rect = boundingRect(cv::Mat(contours[0]));

area = rect.area();

/// Old code: linear scan for the maximum

/*std::vector<cv::RotatedRect> box(contours.size());

for (int i = 0; i < contours.size(); i++)

{

approxPolyDP(cv::Mat(contours[i]), contours[i], 3, true);

cv::Rect rect = boundingRect(cv::Mat(contours[i]));

int area_t = rect.area();

if (area_t >= area)

{

area = area_t;

}

}*/

//auto t1 = cv::getTickCount();

//std::cout << "elapsed time: " << (t1 - t0) * 1000.0 / cv::getTickFrequency() << "ms" << std::endl;

return 0;

}

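/// <summary>

/// Line classification

/// </summary>

/// <param name="lines">Input line segments</param>

/// <param name="horizontals">Output lines classified as H (horizontal)</param>

/// <param name="verticals">Output lines classified as V (vertical)</param>

/// <param name="cv_debug">Input image</param>

/// <returns>0 on success, -431 if either group contains fewer than two lines</returns>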

int linesDichotomy(std::vector<cv::Vec4f>& lines, std::vector<Line>& horizontals, std::vector<Line>& verticals, cv::Mat& cv_debug)

{

std::vector<double> angle;

getLinesAngle(lines, angle);

int mask = 0;

for (int i = 0; i < angle.size(); i++)

{

if (angle.at(i) >= 75 || angle.at(i) <= -75)

{

mask++;

}

}

if (mask > 2)

{

for (size_t i = 0; i < lines.size(); i++)

{

cv::Vec4i v = lines[i];

double delta_x = fabs(v[0] - v[2]);

double delta_y = fabs(v[1] - v[3]);

Line l(cv::Point(v[0], v[1]), cv::Point(v[2], v[3]));

if (delta_x > delta_y)

{

horizontals.push_back(l);

}

else

{

verticals.push_back(l);

}

}

}

else

{

std::vector<Line> lines_1, lines_2;

for (size_t i = 0; i < lines.size(); i++)

{

cv::Vec4i v = lines[i];

Line l(cv::Point(v[0], v[1]), cv::Point(v[2], v[3]));

if (angle.at(i) > 0)

{

lines_1.push_back(l);

}

else

{

lines_2.push_back(l);

}

}

if (lines_1.size() < 2 || lines_2.size() < 2)

{

return -431;

}

std::vector<cv::Point> points_1, points_2;

getIntersectionPoint(lines_1, lines_2, points_1);

getIntersectionPoint(lines_2, lines_1, points_2);

cv::Mat cv_line_1(cv_debug.size(), CV_8UC1, cv::Scalar(0));

cv::Mat cv_line_2(cv_debug.size(), CV_8UC1, cv::Scalar(0));

drawQuadrangleToLines(points_1, cv_line_1);

drawQuadrangleToLines(points_2, cv_line_2);

int area_1, area_2;

findContoursArea(cv_line_1, area_1);

findContoursArea(cv_line_2, area_2);

if (area_1 < area_2)

{

horizontals = lines_2;

verticals = lines_1;

}

else

{

horizontals = lines_1;

verticals = lines_2;

}

}

return 0;

}

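/// <summary>

/// Line filtering (nearest-neighbor grouping of lines)

/// </summary>

/// <param name="h_lines">Input H-class lines</param>

/// <param name="v_lines">Input V-class lines</param>

/// <param name="lines_c">Output: the four lines kept after filtering</param>

/// <param name="near_val">Neighborhood distance threshold</param>

/// <returns>Always 0</returns>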

int screenLines(std::vector<Line>& h_lines, std::vector<Line>& v_lines, std::vector<Line>& lines_c, int near_val)

{

sort(h_lines.begin(), h_lines.end(), cmp_y);

sort(v_lines.begin(), v_lines.end(), cmp_x);

std::vector<Line> h_l_t, v_l_l, h_l_d, v_l_r;

for (int i = 0; i < h_lines.size(); i++)

{

if (abs(h_lines.at(i)._center.y - (h_lines.at(0)._center.y)) < near_val)

{

h_l_t.push_back(h_lines.at(i));

}

if (abs(h_lines.at(i)._center.y - (h_lines.back()._center.y)) < near_val)

{

h_l_d.push_back(h_lines.at(i));

}

}

for (int i = 0; i < v_lines.size(); i++)

{

if (abs(v_lines.at(i)._center.x - (v_lines.at(0)._center.x)) < near_val)

{

v_l_l.push_back(v_lines.at(i));

}

if (abs(v_lines.at(i)._center.x - (v_lines.back()._center.x)) < near_val)

{

v_l_r.push_back(v_lines.at(i));

}

}

std::vector<Line> H_LT_C, V_LL_C, H_LD_C, V_LR_C;

if (h_l_t.size() >= 2)

{

for (int i = 0; i < h_l_t.size(); i++)

{

if ((h_l_t.at(i)._p1.x > ((v_l_l.front()._p2.x) - near_val)) && (h_l_t.at(i)._p1.x < ((v_l_r.front()._p2.x) + near_val)))

{

H_LT_C.push_back(h_l_t.at(i));

}

}

if (H_LT_C.empty())

{

H_LT_C.push_back(h_l_t.front());

}

}

else

{

H_LT_C.push_back(h_l_t.front());

}

if (h_l_d.size() >= 2)

{

for (int i = 0; i < h_l_d.size(); i++)

{

if ((h_l_d.at(i)._p1.x > ((v_l_l.front()._p1.x) - near_val)) && (h_l_d.at(i)._p1.x < ((v_l_r.front()._p1.x) + near_val)))

{

H_LD_C.push_back(h_l_d.at(i));

}

}

if (H_LD_C.empty())

{

H_LD_C.push_back(h_l_d.front());

}

}

else

{

H_LD_C.push_back(h_l_d.front());

}

if (v_l_l.size() >= 2)

{

for (int i = 0; i < v_l_l.size(); i++)

{

if (v_l_l.at(i)._p1.y > (h_l_t.at(0)._p1.y) && (v_l_l.at(i)._p1.y < (h_l_d.front()._p1.y + near_val)))

{

V_LL_C.push_back(v_l_l.at(i));

}

}

if (V_LL_C.empty())

{

V_LL_C.push_back(v_l_l.front());

}

}

else

{

V_LL_C.push_back(v_l_l.front());

}

if (v_l_r.size() >= 2)

{

for (int i = 0; i < v_l_r.size(); i++)

{

if ((v_l_r.at(i)._p1.y < (h_l_d.at(0)._p1.y)) && (v_l_r.at(i)._p1.y < (h_l_d.front()._p1.y + near_val)))

{

V_LR_C.push_back(v_l_r.at(i));

}

}

if (V_LR_C.empty())

{

V_LR_C.push_back(v_l_r.front());

}

}

else

{

V_LR_C.push_back(v_l_r.front());

}

if (H_LT_C.size() >= 2)

{

sort(H_LT_C.begin(), H_LT_C.end(), cmp_x);

std::vector<cv::Point> p1;

p1.push_back(H_LT_C.front()._p1);

p1.push_back(H_LT_C.front()._p2);

p1.push_back(H_LT_C.back()._p1);

p1.push_back(H_LT_C.back()._p2);

sort(p1.begin(), p1.end(), point_x);

lines_c.push_back(Line(cv::Point(p1.front()), cv::Point(p1.back())));

}

else

{

lines_c.push_back(H_LT_C.front());

}

if (H_LD_C.size() >= 2)

{

sort(H_LD_C.begin(), H_LD_C.end(), cmp_x);

std::vector<cv::Point> p1;

p1.push_back(H_LD_C.front()._p1);

p1.push_back(H_LD_C.front()._p2);

p1.push_back(H_LD_C.back()._p1);

p1.push_back(H_LD_C.back()._p2);

sort(p1.begin(), p1.end(), point_x);

lines_c.push_back(Line(cv::Point(p1.front()), cv::Point(p1.back())));

}

else

{

lines_c.push_back(H_LD_C.front());

}

if (V_LL_C.size() >= 2)

{

sort(V_LL_C.begin(), V_LL_C.end(), cmp_y);

std::vector<cv::Point> p1;

p1.push_back(V_LL_C.front()._p1);

p1.push_back(V_LL_C.front()._p2);

p1.push_back(V_LL_C.back()._p1);

p1.push_back(V_LL_C.back()._p2);

sort(p1.begin(), p1.end(), point_y);

lines_c.push_back(Line(cv::Point(p1.front()), cv::Point(p1.back())));

}

else

{

lines_c.push_back(V_LL_C.front());

}

if (V_LR_C.size() >= 2)

{

sort(V_LR_C.begin(), V_LR_C.end(), cmp_y);

std::vector<cv::Point> p1;

p1.push_back(V_LR_C.front()._p1);

p1.push_back(V_LR_C.front()._p2);

p1.push_back(V_LR_C.back()._p1);

p1.push_back(V_LR_C.back()._p2);

sort(p1.begin(), p1.end(), point_y);

lines_c.push_back(Line(cv::Point(p1.front()), cv::Point(p1.back())));

}

else

{

lines_c.push_back(V_LR_C.front());

}

return 0;

}

// Select the whole image (fallback quadrilateral inset by 2 px from each border)

void selectAll(cv::Mat& cv_src, std::vector<cv::Point>& out_points)

{

out_points.push_back(cv::Point2f(2, 2));

out_points.push_back(cv::Point2f(2, cv_src.rows - 2));

out_points.push_back(cv::Point2f(cv_src.cols - 2, 2));

out_points.push_back(cv::Point2f(cv_src.cols - 2, cv_src.rows - 2));

}

double linesIntersectionAngle(cv::Vec4i l1, const cv::Vec4i l2)

{

cv::Point point;

double x1 = l1[0], y1 = l1[1], x2 = l1[2], y2 = l1[3];

double a1 = -(y2 - y1), b1 = x2 - x1, c1 = (y2 - y1) * x1 - (x2 - x1) * y1;

double x3 = l2[0], y3 = l2[1], x4 = l2[2], y4 = l2[3];

double a2 = -(y4 - y3), b2 = x4 - x3, c2 = (y4 - y3) * x3 - (x4 - x3) * y3;

bool r = false;

double x0 = 0, y0 = 0;

double angle = 0;

if (b1 == 0 && b2 != 0)

r = true;

else if (b1 != 0 && b2 == 0)

r = true;

else if (b1 != 0 && b2 != 0 && a1 / b1 != a2 / b2)

r = true;

if (r)

{

x0 = (b1 * c2 - b2 * c1) / (a1 * b2 - a2 * b1);

y0 = (a1 * c2 - a2 * c1) / (a2 * b1 - a1 * b2);

double a = sqrt(pow(x4 - x2, 2) + pow(y4 - y2, 2));

double b = sqrt(pow(x4 - x0, 2) + pow(y4 - y0, 2));

double c = sqrt(pow(x2 - x0, 2) + pow(y2 - y0, 2));

angle = acos((b * b + c * c - a * a) / (2 * b * c)) * 180 / CV_PI;

}

return angle;

}

bool decodeArea(cv::Mat cv_enet, std::vector<cv::Point> points, float mu)

{

cv::Mat cv_lines_a(cv_enet.size(), CV_8UC1, cv::Scalar(0));

drawQuadrangleToLines(points, cv_lines_a);

int lines_a = 0, enet_a = 0;

findContoursArea(cv_lines_a, lines_a);

findContoursArea(cv_enet, enet_a);

if (enet_a > lines_a * mu)

{

return true;

}

return false;

}

bool decideAngle(std::vector<Line> lines_in)

{

std::vector<cv::Vec4i> lines;

for (int i = 0; i < lines_in.size(); i++)

{

lines.push_back(cv::Vec4i(lines_in.at(i)._p1.x, lines_in.at(i)._p1.y, lines_in.at(i)._p2.x, lines_in.at(i)._p2.y));

}

double a0 = linesIntersectionAngle(lines.at(0), lines.at(2));

double a1 = linesIntersectionAngle(lines.at(0), lines.at(3));

double a2 = linesIntersectionAngle(lines.at(2), lines.at(1));

double a3 = linesIntersectionAngle(lines.at(1), lines.at(3));

if ((a0 > 120 || a0 < 40) || (a1 > 120 || a1 < 40) || (a2 > 120 || a2 < 40) || (a3 > 120 || a3 < 40))

{

return true;

}

return false;

}

void quadrangleDetection(cv::Mat& cv_src, std::vector<cv::Point>& out_point, cv::Size& size_d, cv::Size& size_e)

{

std::vector<std::vector<cv::Point>> contours;

std::vector<cv::Vec4i> hierarcy;

cv::Mat cv_canny_e, cv_canny_d;

cv::Mat element_d = getStructuringElement(cv::MORPH_RECT, size_d);

cv::Mat element_e = getStructuringElement(cv::MORPH_RECT, size_e);

cv::dilate(cv_src, cv_canny_d, element_d);

cv::erode(cv_canny_d, cv_canny_e, element_e);

findContours(cv_canny_e, contours, hierarcy, cv::RETR_EXTERNAL, cv::CHAIN_APPROX_NONE);

std::vector<cv::Rect> boundRect(contours.size());

std::vector<cv::RotatedRect> box(contours.size());

cv::Point2f rect[4];

int area = 0;

for (int i = 0; i < contours.size(); i++)

{

box[i] = cv::minAreaRect(cv::Mat(contours[i]));

int area_t = cv::contourArea(contours[i]);

if (area_t > area)

{

area = area_t;

}

}

if (area < 5000)

{

selectAll(cv_src, out_point);

}

else

{

for (int i = 0; i < contours.size(); i++)

{

box[i] = cv::minAreaRect(cv::Mat(contours[i]));

int area_t = cv::contourArea(contours[i]);

if (area_t >= area)

{

box[i].points(rect);

for (int j = 0; j < 4; j++)

{

out_point.push_back(rect[j]);

//line(cv_src, rect[j], rect[(j + 1) % 4], cv::Scalar(255), 1, 1);

}

}

}

}

}

int enetToCorrectionPoint(cv::Mat cv_enet, std::vector<cv::Point>& points_out)

{

cv::Mat d = cv::getStructuringElement(cv::MORPH_RECT, cv::Size(7, 7), cv::Point(-1, -1));

cv::Mat cv_dilate;

cv::dilate(cv_enet, cv_dilate, d);

cv::Mat cv_erode;

cv::Mat e = cv::getStructuringElement(cv::MORPH_RECT, cv::Size(17, 17), cv::Point(-1, -1));

cv::erode(cv_dilate, cv_erode, e);

cv::Mat cv_blur;

binImageBlur(cv_erode, cv_blur, cv::Size(5, 5), 130);

//cv::imshow("cv_blur", cv_blur);

cv::Size size_d(5, 5);

cv::Size size_e(3, 3);

std::vector<cv::Point> point_t;

quadrangleDetection(cv_blur, point_t, size_d, size_e);

sortPoint(point_t, points_out);

return 0;

}

int enetLinesToPoint(cv::Mat& cv_enet, std::vector<cv::Point>& points_out)

{

cv::Mat d = cv::getStructuringElement(cv::MORPH_RECT, cv::Size(7, 7), cv::Point(-1, -1));

cv::Mat cv_dilate;

cv::dilate(cv_enet, cv_dilate, d);

cv::Mat cv_erode;

cv::Mat e = cv::getStructuringElement(cv::MORPH_RECT, cv::Size(9, 9), cv::Point(-1, -1));

cv::erode(cv_dilate, cv_erode, e);

cv::Mat cv_blur;

binImageBlur(cv_erode, cv_blur, cv::Size(3, 3), 130);

cv::Mat cv_canny;

cv::Canny(cv_blur, cv_canny, 20, 60);

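// Note: these names do not match the HoughLinesP parameters they are passed as.

double theta = 60; // passed as the accumulator threshold

int threshold = 40; // passed as minLineLength

double minLineLength = 8; // passed as maxLineGap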

std::vector<cv::Vec4f> lines;

HoughLinesP(cv_canny, lines, 1, CV_PI * 1 / 180, theta, threshold, minLineLength);

if (lines.size() <= 3)

{

enetToCorrectionPoint(cv_enet, points_out);

return 2;

}

std::vector<Line> horizontals, verticals;

linesDichotomy(lines, horizontals, verticals, cv_enet);

if (horizontals.size() < 2 || verticals.size() < 2)

{

enetToCorrectionPoint(cv_enet, points_out);

return 3;

}

std::vector<Line> lines_out;

screenLines(horizontals, verticals, lines_out, 20);

if (lines_out.size() < 4)

{

enetToCorrectionPoint(cv_enet, points_out);

return 4;

}

if (decideAngle(lines_out))

{

enetToCorrectionPoint(cv_enet, points_out);

return 5;

}

std::vector<cv::Point> points;

points.push_back(computeIntersect(lines_out.at(0), lines_out.at(2)));

points.push_back(computeIntersect(lines_out.at(0), lines_out.at(3)));

points.push_back(computeIntersect(lines_out.at(2), lines_out.at(1)));

points.push_back(computeIntersect(lines_out.at(1), lines_out.at(3)));

if (decodeArea(cv_enet, points, 2.2))

{

enetToCorrectionPoint(cv_enet, points_out);

return 6;

}

if (((points.at(1).x - points.at(0).x) < 200) || ((points.at(3).x - points.at(2).x) < 200) || ((points.at(2).y - points.at(0).y) < 200) || ((points.at(3).y - points.at(1).y) < 200))

{

enetToCorrectionPoint(cv_enet, points_out);

return 7;

}

points_out = points;

return 0;

}

static int getCorrectionPoint(cv::Mat cv_edge, cv::Mat& cv_enet, std::vector<cv::Point>& points_out,

double theta = 50, int threshold = 30, double minLineLength = 10)

{

std::vector<cv::Vec4f> lines;

HoughLinesP(cv_edge, lines, 1, CV_PI * 1 / 180, theta, threshold, minLineLength);

if (lines.size() <= 3)

{

int mask = enetLinesToPoint(cv_enet, points_out);

return std::stoi(std::to_string(42) + std::to_string(mask));

}

std::vector<Line> horizontals, verticals;

linesDichotomy(lines, horizontals, verticals, cv_edge);

if (horizontals.size() < 2 || verticals.size() < 2)

{

int mask = enetLinesToPoint(cv_enet, points_out);

return std::stoi(std::to_string(43) + std::to_string(mask));

}

std::vector<Line> lines_out;

screenLines(horizontals, verticals, lines_out, 40);

if (lines_out.size() < 4)

{

int mask = enetLinesToPoint(cv_enet, points_out);

return std::stoi(std::to_string(44) + std::to_string(mask));

}

if (decideAngle(lines_out))

{

int mask = enetLinesToPoint(cv_enet, points_out);

return std::stoi(std::to_string(45) + std::to_string(mask));

}

std::vector<cv::Point> points;

points.push_back(computeIntersect(lines_out.at(0), lines_out.at(2)));

points.push_back(computeIntersect(lines_out.at(0), lines_out.at(3)));

points.push_back(computeIntersect(lines_out.at(2), lines_out.at(1)));

points.push_back(computeIntersect(lines_out.at(1), lines_out.at(3)));

if (decodeArea(cv_enet, points, 4))

{

int mask = enetLinesToPoint(cv_enet, points_out);

return std::stoi(std::to_string(46) + std::to_string(mask));

}

if (((points.at(1).x - points.at(0).x) < 60) || ((points.at(3).x - points.at(2).x) < 60) ||

((points.at(2).y - points.at(0).y) < 60) || ((points.at(3).y - points.at(1).y) < 60))

{

int mask = enetLinesToPoint(cv_enet, points_out);

return std::stoi(std::to_string(47) + std::to_string(mask));

}

points_out = points;

return 400;

}

static int getCorrectionPoint(cv::Mat& cv_seg, std::vector<cv::Point>& points_out)

{

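// Note: these names do not match the HoughLinesP parameters they are passed as.

double theta = 50; // passed as the accumulator threshold

int threshold = 30; // passed as minLineLength

double minLineLength = 10; // passed as maxLineGap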

cv::Mat cv_canny;

cv::Canny(cv_seg, cv_canny, 30, 110, 5, true);

std::vector<cv::Vec4f> lines;

HoughLinesP(cv_canny, lines, 1, CV_PI * 1 / 180, theta, threshold, minLineLength);

if (lines.size() <= 3)

{

int mask = enetLinesToPoint(cv_seg, points_out);

return std::stoi(std::to_string(42) + std::to_string(mask));

}

std::vector<Line> horizontals, verticals;

linesDichotomy(lines, horizontals, verticals, cv_seg);

if (horizontals.size() < 2 || verticals.size() < 2)

{

int mask = enetLinesToPoint(cv_seg, points_out);

return std::stoi(std::to_string(43) + std::to_string(mask));

}

std::vector<Line> lines_out;

screenLines(horizontals, verticals, lines_out, 40);

if (lines_out.size() < 4)

{

int mask = enetLinesToPoint(cv_seg, points_out);

return std::stoi(std::to_string(44) + std::to_string(mask));

}

if (decideAngle(lines_out))

{

int mask = enetLinesToPoint(cv_seg, points_out);

return std::stoi(std::to_string(45) + std::to_string(mask));

}

std::vector<cv::Point> points;

points.push_back(computeIntersect(lines_out.at(0), lines_out.at(2)));

points.push_back(computeIntersect(lines_out.at(0), lines_out.at(3)));

points.push_back(computeIntersect(lines_out.at(2), lines_out.at(1)));

points.push_back(computeIntersect(lines_out.at(1), lines_out.at(3)));

if (decodeArea(cv_seg, points, 4))

{

int mask = enetLinesToPoint(cv_seg, points_out);

return std::stoi(std::to_string(46) + std::to_string(mask));

}

if (((points.at(1).x - points.at(0).x) < 60) || ((points.at(3).x - points.at(2).x) < 60) || ((points.at(2).y - points.at(0).y) < 60) || ((points.at(3).y - points.at(1).y) < 60))

{

int mask = enetLinesToPoint(cv_seg, points_out);

return std::stoi(std::to_string(47) + std::to_string(mask));

}

points_out = points;

return 400;

}

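/// <summary>

/// Extend a line to the image borders

/// </summary>

/// <param name="cv_src">Input image</param>

/// <param name="p1">Start point of the line (updated in place)</param>

/// <param name="p2">End point of the line (updated in place)</param>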

void drawFullImageLine(cv::Mat cv_src, cv::Point& p1, cv::Point& p2)

{

cv::Point p, q;

if (p2.x == p1.x)

{

p = cv::Point(p1.x, 0);

q = cv::Point(p1.x, cv_src.rows);

}

else

{

double a = (double)(p2.y - p1.y) / (double)(p2.x - p1.x);

double b = p1.y - a * p1.x;

p = cv::Point(0, b);

// x spans the image width (cols); cv::Size takes (width, height)

q = cv::Point(cv_src.cols, a * cv_src.cols + b);

cv::clipLine(cv::Size(cv_src.cols, cv_src.rows), p, q);

}

p1 = p;

p2 = q;

}

int getMidcourtLine(cv::Mat cv_mid, cv::Point& p1_c, cv::Point& p2_c)

{

std::vector<std::vector<cv::Point>> contours;

std::vector<cv::Vec4i> hierarchy;

findContours(cv_mid, contours, hierarchy, cv::RETR_EXTERNAL, cv::CHAIN_APPROX_NONE, cv::Point());

// Find the contour with the largest area

int max_area = 0;

for (int i = 0; i < contours.size(); i++)

{

double tmparea = fabs(contourArea(contours[i]));

if (tmparea > max_area)

{

max_area = tmparea;

}

}

for (int i = 0; i < contours.size(); i++)

{

double tmparea = fabs(contourArea(contours[i]));

if (tmparea >= max_area || tmparea * 1.1 >= max_area)

{

// Compute the minimum-area bounding rectangle of the contour

cv::RotatedRect rect = minAreaRect(contours[i]);

cv::Rect r = rect.boundingRect();

int max = r.width > r.height ? r.width : r.height;

if (max <= 200)

{

return 2;

}

cv::Point2f point[4];

rect.points(point);

cv::Size size = rect.size;

if (size.width > size.height)

{

p1_c = cv::Point((point[0].x + point[1].x) / 2, (point[0].y + point[1].y) / 2);

p2_c = cv::Point((point[2].x + point[3].x) / 2, (point[2].y + point[3].y) / 2);

}

else

{

p1_c = cv::Point((point[1].x + point[2].x) / 2, (point[1].y + point[2].y) / 2);

p2_c = cv::Point((point[0].x + point[3].x) / 2, (point[0].y + point[3].y) / 2);

}

}

}

cv::Rect rect(p1_c, p2_c);

Line mid(p1_c, p2_c);

if (rect.height > rect.width)

{

if (mid._center.x >= 220 && mid._center.x <= 440)

{

return 2;

}

else

{

drawFullImageLine(cv_mid, p1_c, p2_c);

}

}

else

{

if (mid._center.y >= 220 && mid._center.y <= 440)

{

return 2;

}

else

{

drawFullImageLine(cv_mid, p1_c, p2_c);

}

}

return 0;

}

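/// <summary>

/// Split the book into its two pages along the midline and return the edge image

/// </summary>

/// <param name="cv_src">Semantic segmentation image</param>

/// <param name="cv_edge">Returned binary edge image</param>

/// <param name="p1">Midline endpoint</param>

/// <param name="p2">Midline endpoint</param>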

void midLineCutBook(cv::Mat cv_src, cv::Mat& cv_edge, cv::Point& p1, cv::Point& p2)

{

cv::Mat cv_canny;

cv::Rect rect(p1, p2);

cv::Canny(cv_src, cv_canny, 30, 110, 5, true);

cv::Mat cv_p1, cv_p2;

cv::Point p_bl, p_tr;

cv::Point p_top(0, 0);

cv::Point p_base(cv_src.cols, cv_src.rows);

cv::Rect rect_1, rect_2;

p_tr = cv::Point(rect.br().x, rect.tl().y);

p_bl = cv::Point(rect.tl().x, rect.br().y);

// Vertical midline

if (rect.width < rect.height)

{

p_tr.x = p_tr.x < 0 ? 0 : p_tr.x;

p_tr.x = p_tr.x > cv_src.cols ? cv_src.cols : p_tr.x;

p_tr.y = 0;

//p_tr.y = p_tr.y < 0 ? 0 : p_tr.y;

//p_tr.y = p_tr.y > cv_src.rows ? cv_src.rows : p_tr.y;

p_bl.x = p_bl.x < 0 ? 0 : p_bl.x;

p_bl.x = p_bl.x > cv_src.cols ? cv_src.cols : p_bl.x;

p_bl.y = cv_src.rows;

//p_bl.y = p_bl.y < 0 ? 0 : p_bl.y;

//p_bl.y = p_bl.y > cv_src.rows ? cv_src.rows : p_bl.y;

rect_1 = cv::Rect(p_top, p_bl);

rect_2 = cv::Rect(p_tr, p_base);

}

else // Horizontal midline

{

//std::cout << "matt" << std::endl;

p_tr.x = cv_src.cols;

//p_tr.x = p_tr.x > cv_src.cols ? cv_src.cols : p_tr.x;

p_tr.y = p_tr.y < 0 ? 0 : p_tr.y;

p_tr.y = p_tr.y > cv_src.rows ? cv_src.rows : p_tr.y;

p_bl.x = 0;

//p_bl.x = p_bl.x > cv_src.cols ? cv_src.cols : p_bl.x;

//p_bl.y = cv_src.rows;

p_bl.y = p_bl.y < 0 ? 0 : p_bl.y;

p_bl.y = p_bl.y > cv_src.rows ? cv_src.rows : p_bl.y;

rect_1 = cv::Rect(p_top, p_tr);

rect_2 = cv::Rect(p_bl, p_base);

}

p_tr = cv::Point(rect.br().x, rect.tl().y);

p_bl = cv::Point(rect.tl().x, rect.br().y);

p_tr.x = p_tr.x < 0 ? 0 : p_tr.x;

cv_edge = cv::Mat(cv_src.size(), CV_8UC1, cv::Scalar(0));

cv::Rect rect_roi = (rect_1.area() >= rect_2.area()) ? rect_1 : rect_2;

//cv::rectangle(cv_edge, rect, cv::Scalar(255));

cv::Mat cv_paper = cv_canny(rect_roi);

// cv::imshow("rect_roi", cv_edge);

cv::Mat roi = cv_edge(rect_roi);

cv_paper.copyTo(roi);

cv::line(cv_edge, p1, p2, cv::Scalar(255), 2);

}

std::vector<cv::Point> DocumentEdge::getMidLine(cv::Mat cv_src, cv::Mat& cv_seg,int area)

{

std::vector<cv::Point> mid_line;

cv::Mat cv_roi_c, cv_mid;

// 1. Extract the segmented target

cv_src.copyTo(cv_roi_c, cv_seg);

// 2. Run midline segmentation on the target

//enetSegmentationMid(cv_roi_c, cv_mid, mid_net, threads, 512);

inference(mid_net, cv_roi_c, cv_mid,target_size);

// 3. Check whether a midline was segmented

int mid_total = countNonZero(cv_mid);

cv::Point p1_c, p2_c;

if (mid_total > 1500)

{

getMidcourtLine(cv_mid, p1_c, p2_c);

double segment_length = cv::sqrt(((float)p1_c.y - p2_c.y) * ((float)p1_c.y - p2_c.y) + ((float)p1_c.x - p2_c.x) * ((float)p1_c.x - p2_c.x));

// 3.1 Check the midline length against the segmented-area level to filter out some candidates early

switch (area)

{

case 9:

if (segment_length > 500)

{

mid_line.push_back(p1_c);

mid_line.push_back(p2_c);

}

break;

case 8:

if (segment_length > 400)

{

mid_line.push_back(p1_c);

mid_line.push_back(p2_c);

}

break;

case 7:

if (segment_length > 300)

{

mid_line.push_back(p1_c);

mid_line.push_back(p2_c);

}

break;

case 6:

if (segment_length > 200)

{

mid_line.push_back(p1_c);

mid_line.push_back(p2_c);

}

break;

default:

break;

}

}

return mid_line;

}

int positioningBookEdgeLines(cv::Mat& cv_src, cv::Mat& cv_seg, std::vector<cv::Point>& mid_line, std::vector<cv::Point>& points_out, int area_index)

{

double theta = 50;

int threshold = 30;

double minLineLength = 10;

std::vector<Line> lines_out;

cv::Rect rect(mid_line[0], mid_line[1]);

Line mid(mid_line[0], mid_line[1]);

cv::Mat cv_canny;

switch (area_index)

{

case 9:

// Vertical midline

if (rect.height > rect.width)

{

// 1.1 Check the midline position; if it lies in the middle of the image, no page split is done

if (mid._center.x >= 220 && mid._center.x <= 440)

{

return getCorrectionPoint(cv_seg, points_out);

}

else

{

midLineCutBook(cv_seg, cv_canny, mid_line[0], mid_line[1]);

return getCorrectionPoint(cv_canny, cv_seg, points_out);

}

}

else // Horizontal midline

{

if (mid._center.y >= 220 && mid._center.y <= 440)

{

return getCorrectionPoint(cv_seg, points_out);

}

else

{

midLineCutBook(cv_seg, cv_canny, mid_line[0], mid_line[1]);

return getCorrectionPoint(cv_canny, cv_seg, points_out);

}

}

break;

case 8:

if (rect.height > rect.width)

{

// 1.1 Check the midline position; if it lies in the middle of the image, no page split is done

if (mid._center.x >= 220 && mid._center.x <= 440)

{

return getCorrectionPoint(cv_seg, points_out);

}

else

{

midLineCutBook(cv_seg, cv_canny, mid_line[0], mid_line[1]);

return getCorrectionPoint(cv_canny, cv_seg, points_out);

}

}

else // Horizontal midline

{

if (mid._center.y >= 220 && mid._center.y <= 440)

{

return getCorrectionPoint(cv_seg, points_out);

}

else

{

midLineCutBook(cv_seg, cv_canny, mid_line[0], mid_line[1]);

return getCorrectionPoint(cv_canny, cv_seg, points_out);

}

}

break;

case 7:

if (rect.height > rect.width)

{

// 1.1 Check the midline position; if it lies in the middle of the image, no page split is done

if (mid._center.x >= 220 && mid._center.x <= 440)

{

return getCorrectionPoint(cv_seg, points_out);

}

else

{

midLineCutBook(cv_seg, cv_canny, mid_line[0], mid_line[1]);

return getCorrectionPoint(cv_canny, cv_seg, points_out);

}

}

else // Horizontal midline

{

if (mid._center.y >= 220 && mid._center.y <= 440)

{

return getCorrectionPoint(cv_seg, points_out);

}

else

{

midLineCutBook(cv_seg, cv_canny, mid_line[0], mid_line[1]);

return getCorrectionPoint(cv_canny, cv_seg, points_out);

}

}

break;

case 6:

if (rect.height > rect.width)

{

// 1.1 Check the midline position; if it lies in the middle of the image, no page split is done

if (mid._center.x >= 220 && mid._center.x <= 440)

{

return getCorrectionPoint(cv_seg, points_out);

}

else

{

midLineCutBook(cv_seg, cv_canny, mid_line[0], mid_line[1]);

return getCorrectionPoint(cv_canny, cv_seg, points_out);

}

}

else // Horizontal midline

{

if (mid._center.y >= 220 && mid._center.y <= 440)

{

return getCorrectionPoint(cv_seg, points_out);

}

else

{

midLineCutBook(cv_seg, cv_canny, mid_line[0], mid_line[1]);

return getCorrectionPoint(cv_canny, cv_seg, points_out);

}

}

break;

default:

selectAll(cv_seg, points_out);

return 49999;

break;

}

return 0;

}

int DocumentEdge::detect(cv::Mat cv_src, std::vector<cv::Point>& points_out, int _od_label)

{

if (cv_src.empty())

{

return -20;

}

// 1. Downscale the source image to speed up processing

float w_s = cv_src.cols / 660.00;

float h_s = cv_src.rows / 660.00;

cv::Mat cv_resize;

cv::resize(cv_src, cv_resize, cv::Size(660, 660), 0, 0, cv::INTER_AREA); // interpolation is the 6th argument; the 4th and 5th are fx and fy

// 2. Semantic segmentation

cv::Mat cv_enet;

inference(edge_net,cv_resize,cv_enet,target_size);

// 3. Classify the segmented ROI by area

cv::Mat cv_seg;

int area_index = targetArea(cv_enet, cv_seg);

int mark = 0;

// 4. The target is a book

if (_od_label == 10 && book_mid)

{

cv::Mat cv_roi_c, cv_mid, cv_edge;

// 4.1 Detect the midline and return it

std::vector<cv::Point> mid_line;

mid_line = getMidLine(cv_resize, cv_seg,area_index);

// 4.2 A midline was found

if (mid_line.size() >= 2)

{

mark = positioningBookEdgeLines(cv_resize, cv_seg, mid_line, points_out, area_index);

}

// 4.3 No midline was found

else

{

mark = getCorrectionPoint(cv_seg, points_out);

}

}

// 5. The target is not a book

else

{

mark = getCorrectionPoint(cv_seg, points_out);

}

for (int i = 0; i < points_out.size(); i++)

{

points_out.at(i).x = points_out.at(i).x * w_s;

points_out.at(i).y = points_out.at(i).y * h_s;

points_out.at(i).x = points_out.at(i).x < 0 ? 0 : points_out.at(i).x;

points_out.at(i).y = points_out.at(i).y < 0 ? 0 : points_out.at(i).y;

points_out.at(i).x = points_out.at(i).x > cv_src.cols ? cv_src.cols : points_out.at(i).x;

points_out.at(i).y = points_out.at(i).y > cv_src.rows ? cv_src.rows : points_out.at(i).y;

}

return mark;

}

int DocumentEdge::revise_image(cv::Mat& cv_src, cv::Mat& cv_dst, std::vector<cv::Point>& in_points)

{

if (cv_src.empty())

{

return -20;

}

cv::Mat cv_warp = cv_src.clone();

if (in_points.size() != 4)

{

return -444;

}

cv::Point point_f, point_b;

point_f.x = (in_points.at(0).x < in_points.at(2).x) ? in_points.at(0).x : in_points.at(2).x;

point_f.y = (in_points.at(0).y < in_points.at(1).y) ? in_points.at(0).y : in_points.at(1).y;

point_b.x = (in_points.at(3).x > in_points.at(1).x) ? in_points.at(3).x : in_points.at(1).x;

point_b.y = (in_points.at(3).y > in_points.at(2).y) ? in_points.at(3).y : in_points.at(2).y;

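// Updated 2020.8.24: fixed the wrong aspect ratio by measuring point-to-point distances and taking the longer of the two horizontal edges and the longer of the two vertical edges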

float l_1 = getDistance(in_points.at(0), in_points.at(1));

float l_2 = getDistance(in_points.at(2), in_points.at(3));

float l_3 = getDistance(in_points.at(1), in_points.at(3));

float l_4 = getDistance(in_points.at(0), in_points.at(2));

int width = l_1 >= l_2 ? l_1 : l_2;

int height = l_3 >= l_4 ? l_3 : l_4;

// The old code used the target's bounding rectangle, but the aspect ratio was distorted when the target was tilted by 45 degrees

//cv::Rect rect(point_f, point_b);

cv_dst = cv::Mat::zeros(height, width, CV_8UC3);

std::vector<cv::Point2f> dst_pts;

dst_pts.push_back(cv::Point2f(0, 0));

dst_pts.push_back(cv::Point2f(width - 1, 0));

dst_pts.push_back(cv::Point2f(0, height - 1));

dst_pts.push_back(cv::Point2f(width - 1, height - 1));

std::vector<cv::Point2f> tr_points;

tr_points.push_back(in_points.at(0));

tr_points.push_back(in_points.at(1));

tr_points.push_back(in_points.at(2));

tr_points.push_back(in_points.at(3));

cv::Mat transmtx = getPerspectiveTransform(tr_points, dst_pts);

cv::warpPerspective(cv_warp, cv_dst, transmtx, cv_dst.size());

return 0;

}

void DocumentEdge::draw_out_points(cv::Mat cv_src, cv::Mat& cv_dst, std::vector<cv::Point>& points_out)

{

cv_dst = cv_src.clone();

cv::line(cv_dst, points_out.at(0), points_out.at(1), cv::Scalar(0, 255, 0), 2, cv::LINE_8);

cv::line(cv_dst, points_out.at(0), points_out.at(2), cv::Scalar(0, 0, 255), 2, cv::LINE_8);

cv::line(cv_dst, points_out.at(1), points_out.at(3), cv::Scalar(255, 0, 0), 2, cv::LINE_8);

cv::line(cv_dst, points_out.at(2), points_out.at(3), cv::Scalar(0, 255, 255), 2, cv::LINE_8);

}

}
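
The last two steps of the pipeline are pure arithmetic and can be sketched without OpenCV: `detect` maps the corner points found on the 660×660 working image back to source coordinates, and `revise_image` sizes the rectified output from the longer of each pair of opposite edges. The sketch below is only an illustration under assumptions: `Pt`, `Size2`, `mapToSource` and `warpSize` are hypothetical names standing in for `cv::Point`, `cv::Size` and the inline logic of the listing. Unlike the listing, it rounds instead of truncating and clamps to `width - 1` / `height - 1`, so that every result is a valid pixel index.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical stand-ins for cv::Point and cv::Size so the sketch builds without OpenCV.
struct Pt { int x; int y; };
struct Size2 { int width; int height; };

// Map points detected on the resized working image back to the source image
// and clamp them into the valid pixel range [0, src_w - 1] x [0, src_h - 1].
std::vector<Pt> mapToSource(const std::vector<Pt>& pts, int src_w, int src_h,
                            int work_w = 660, int work_h = 660)
{
    const double sx = static_cast<double>(src_w) / work_w; // horizontal scale factor
    const double sy = static_cast<double>(src_h) / work_h; // vertical scale factor
    std::vector<Pt> out;
    out.reserve(pts.size());
    for (const Pt& p : pts)
    {
        int x = static_cast<int>(std::lround(p.x * sx));
        int y = static_cast<int>(std::lround(p.y * sy));
        x = std::max(0, std::min(x, src_w - 1));
        y = std::max(0, std::min(y, src_h - 1));
        out.push_back({x, y});
    }
    return out;
}

// Euclidean distance between two points.
static double distance(const Pt& a, const Pt& b)
{
    return std::hypot(static_cast<double>(a.x - b.x), static_cast<double>(a.y - b.y));
}

// Size of the rectified output for corners ordered top-left, top-right,
// bottom-left, bottom-right (the order used by revise_image):
// width is the longer of the top/bottom edges, height the longer of the left/right edges.
Size2 warpSize(const std::vector<Pt>& c)
{
    assert(c.size() == 4);
    const double w = std::max(distance(c[0], c[1]), distance(c[2], c[3]));
    const double h = std::max(distance(c[1], c[3]), distance(c[0], c[2]));
    return {static_cast<int>(w), static_cast<int>(h)}; // truncated, as in the listing
}
```

For a 1320×1980 source, a point at (330, 330) on the working image maps to (660, 990), and a point outside the working image is pulled back to the nearest border pixel. Taking the longer edge of each pair keeps the aspect ratio reasonable when the document is photographed at an angle, which is the problem the 2020.8.24 note in `revise_image` describes.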

4. Results
