Implementing an inverse-square-root scheduler in torch

Published: 2024-01-02

import torch
from torchvision.models import AlexNet
import matplotlib.pyplot as plt
from torch.optim.lr_scheduler import LambdaLR

def get_inverse_sqrt_scheduler(optimizer, num_warmup_steps, num_cooldown_steps, num_training_steps):
    # Linear warmup over the first num_warmup_steps steps (assumes num_warmup_steps > 0)
    lr_step = 1 / num_warmup_steps
    # Then decay proportionally to the inverse square root of the step number
    decay_factor = num_warmup_steps**0.5
    # Multiplier reached at the step where the cooldown begins
    decayed_lr = decay_factor * (num_training_steps - num_cooldown_steps) ** -0.5

    def lr_lambda(current_step: int):
        if current_step < num_warmup_steps:
            return float(current_step * lr_step)
        elif current_step > (num_training_steps - num_cooldown_steps):
            # Linear cooldown from decayed_lr down to 0 over the last num_cooldown_steps steps
            return max(0.0, float(decayed_lr * (num_training_steps - current_step) / num_cooldown_steps))
        else:
            return float(decay_factor * current_step**-0.5)

    return LambdaLR(optimizer, lr_lambda, last_epoch=-1)
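The multiplier that `lr_lambda` returns can be written out as a piecewise function, which may make the three phases easier to see. The symbols are shorthand introduced here, not names from the original post: $t$ is `current_step`, $W$ is `num_warmup_steps`, $C$ is `num_cooldown_steps`, and $T$ is `num_training_steps`.

```latex
\lambda(t) =
\begin{cases}
\dfrac{t}{W}, & t < W \\[6pt]
\sqrt{\dfrac{W}{t}}, & W \le t \le T - C \\[6pt]
\max\!\left(0,\ \sqrt{\dfrac{W}{T - C}} \cdot \dfrac{T - t}{C}\right), & t > T - C
\end{cases}
```

`LambdaLR` multiplies the optimizer's initial learning rate by $\lambda(t)$, so the peak learning rate is reached at $t = W$, where $\lambda = 1$. The two boundaries are continuous: both the warmup and decay expressions give 1 at $t = W$, and both the decay and cooldown expressions give $\sqrt{W / (T - C)}$ at $t = T - C$.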

#定义2分类网络

steps = []

lrs = []

model = AlexNet(num_classes=2)

lr = 0.1

optimizer = torch.optim.SGD(model.parameters(), lr=lr, momentum=0.9)

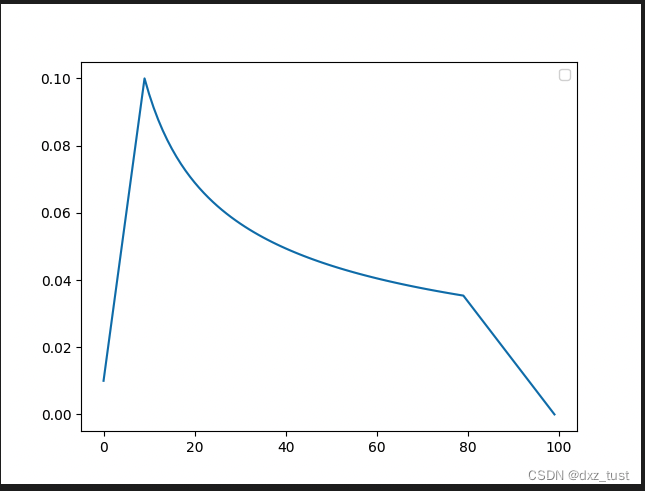

#前10steps warmup ,中间70steps正常衰减,最后20个steps期间衰减到0

scheduler = get_inverse_sqrt_scheduler(optimizer,num_warmup_steps=10, num_cooldown_steps=20, num_training_steps=100)

for epoch in range(10):

for batch in range(10):

scheduler.step()

lrs.append(scheduler.get_lr()[0])

steps.append(epoch*10+batch)

plt.figure()

plt.legend()

plt.plot(steps, lrs, label='inverse_sqrt')

plt.savefig("dd.png")
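The schedule configured above (10 warmup steps, 20 cooldown steps, 100 training steps, base learning rate 0.1) can also be cross-checked without PyTorch. The following is a minimal sketch, not part of the original post: `expected_lr` and the upper-case constants are hypothetical names, and the function simply repeats the arithmetic of `lr_lambda` and multiplies by the base learning rate, which is what `LambdaLR` does.

```python
# Hypothetical helper (not part of the original post): recompute the schedule
# for the example configuration in plain Python.
BASE_LR = 0.1
WARMUP, COOLDOWN, TOTAL = 10, 20, 100


def expected_lr(step):
    """Base learning rate times the multiplier that lr_lambda returns at `step`."""
    decay_factor = WARMUP ** 0.5
    cooldown_start = TOTAL - COOLDOWN
    if step < WARMUP:
        factor = step / WARMUP  # linear warmup
    elif step > cooldown_start:
        start_value = decay_factor * cooldown_start ** -0.5
        factor = max(0.0, start_value * (TOTAL - step) / COOLDOWN)  # linear cooldown
    else:
        factor = decay_factor * step ** -0.5  # inverse-square-root decay
    return BASE_LR * factor


expected = [expected_lr(step) for step in range(TOTAL + 1)]
peak_step = max(range(len(expected)), key=expected.__getitem__)
print("peak step:", peak_step)
print("lr at steps 0, 10, 80, 100:", [round(expected[s], 5) for s in (0, 10, 80, 100)])
```

The peak should fall on the last warmup boundary (step 10), and the curve should be non-increasing from there until it reaches 0 at step 100. Comparing these values with the recorded `lrs` is a quick way to spot an off-by-one between the step index and the learning rate being logged.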

Source: https://blog.csdn.net/daixiangzi/article/details/135343261

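This article was submitted by an internet user. The views expressed are the author's own and do not represent the position of this site. This site only provides information storage services, holds no ownership, and assumes no related legal liability. If the content involves infringement, violations of law or regulations, or factual inaccuracies, please send a complaint to the 我的编程经验分享网 mailbox at chenni525@qq.com; once verified, the content will be removed immediately.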
